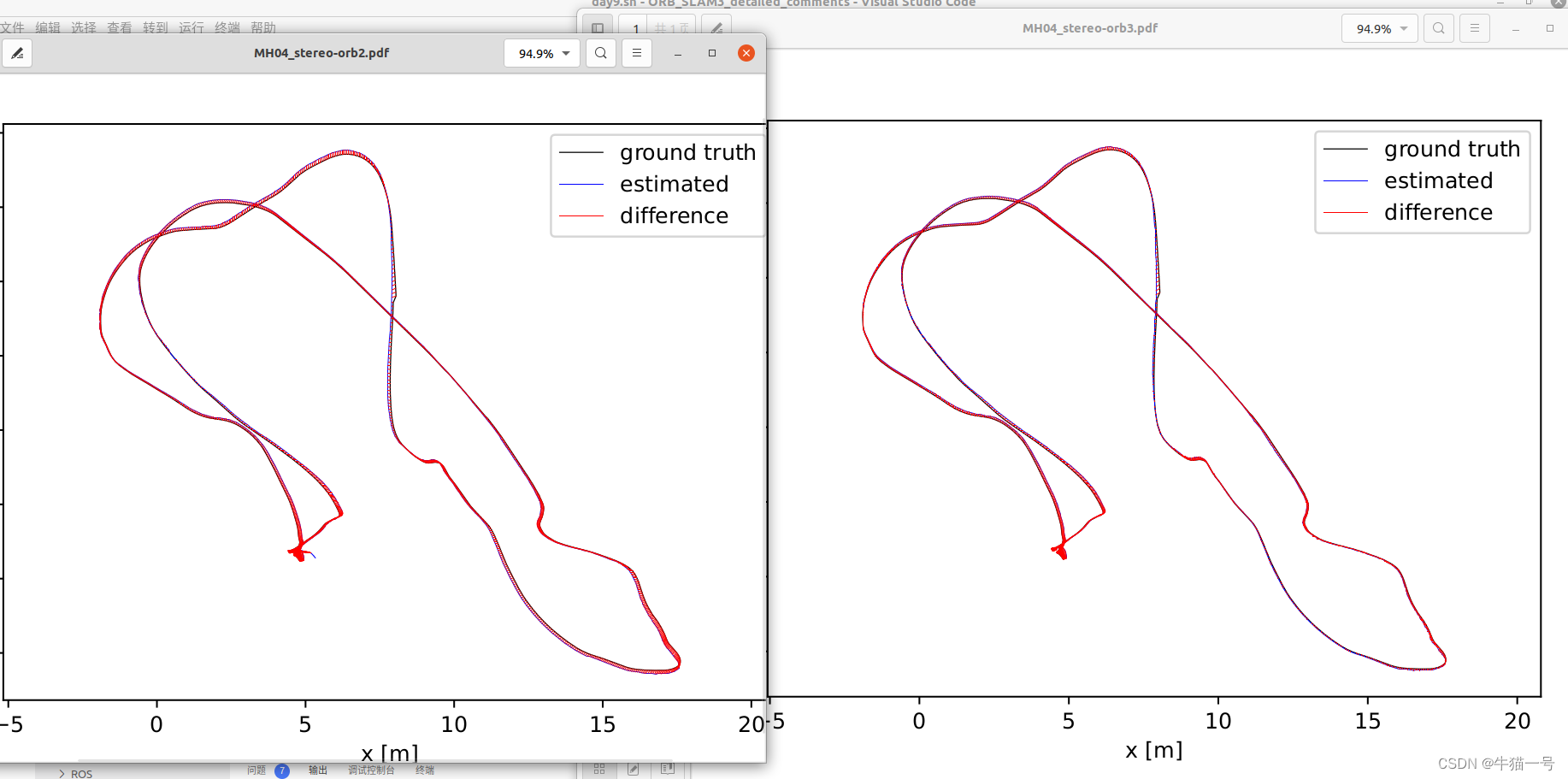

笔者刚跑了一下orb2和orb3的评估函数,如图所示

整体上结果差不多,可以看到orb2有个小角,推测是由于orb2没有多地图的设计导致它必须一直跟着跑图,而orb3有多地图的设计,可以“自断手臂”然后新图继续跟上。

评估代码来自orb2和orb3原生代码的./evaluation/evaluate_ate_scale.py,写了一个小脚本实现一起运行

#!/bin/bash

pathDatasetEuroc='/home/niumao/orbslam_ws/Datasets/EuRoC/MH_04_difficult'

pathtosave='/home/niumao/orbslam_ws/Datasets'

# 这里根据自己的文件路径去调整

cd ../../

# 这是orb3生成路径文件的脚本

# echo "Launching MH04 with Stereo sensor"

# ./Examples/Stereo/stereo_euroc ./Vocabulary/ORBvoc.txt ./Examples/Stereo/EuRoC.yaml "$pathDatasetEuroc" ./Examples/Stereo/EuRoC_TimeStamps/MH04.txt "$pathtosave"/temp/dataset-MH04_stereo

# 这是orb2生成路径文件的脚本

pathDatasetEurocLeft='/home/niumao/orbslam_ws/Datasets/EuRoC/MH_04_difficult/mav0/cam0/data'

pathDatasetEurocRight='/home/niumao/orbslam_ws/Datasets/EuRoC/MH_04_difficult/mav0/cam1/data'

cd ../

# echo "Launching MH04 with Stereo sensor"

# ./Examples/Stereo/stereo_euroc ./Vocabulary/ORBvoc.txt ./Examples/Stereo/EuRoC.yaml "$pathDatasetEurocLeft" "$pathDatasetEurocRight" ./Examples/Stereo/EuRoC_TimeStamps/MH04.txt

# echo "------------------------------------"

# 这里开始代码评估并生成结果pdf

echo "Evaluation of MH04 trajectory with Stereo sensor --ORB3--"

python ./evaluation/evaluate_ate_scale.py ./evaluation/Ground_truth/EuRoC_left_cam/MH04_GT_1E9.txt /home/niumao/orbslam_ws/Datasets/temp/dataset-MH04_stereo_f.txt --plot /home/niumao/orbslam_ws/Datasets/temp/MH04_stereo-orb3.pdf

echo "Evaluation of MH04 trajectory with Stereo sensor --ORB2--"

python ./evaluation/evaluate_ate_scale.py ./evaluation/Ground_truth/EuRoC_left_cam/MH04_GT_1E9.txt /home/niumao/orbslam_ws/Datasets/temp/CameraTrajectory.txt --plot /home/niumao/orbslam_ws/Datasets/temp/MH04_stereo-orb2.pdf

需要注意的点有:

# associate.py

# 由于python版本的不同,这里可能报错,把注释的部分改成非注释的部分即可

# first_keys = first_list.keys()

# second_keys = second_list.keys()

first_keys = list(first_list)

second_keys = list(second_list)

# evaluate_ate_scale.py

# first_stamps = first_list.keys() # 旧版本python

first_stamps = list(first_list)

# 若干代码...~...

# second_stamps = second_list.keys()

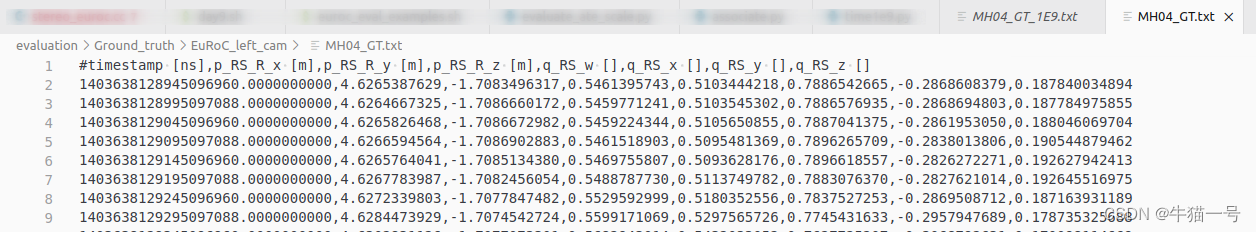

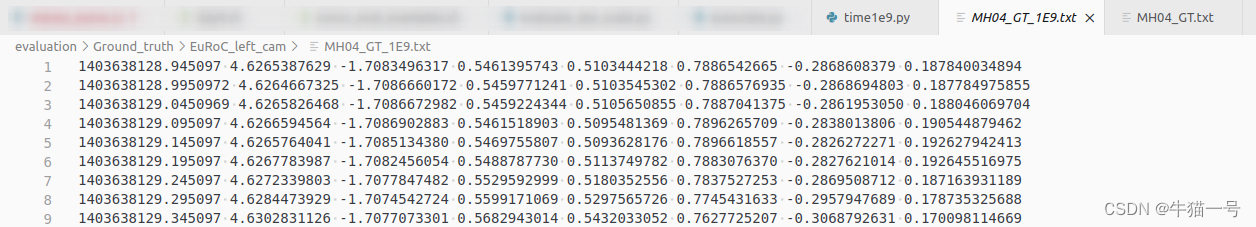

second_stamps = list(second_list)以及数据集的groundtruth文件第一列时间戳的单位是秒的9次方,要处理一下把第一列的数除以1e9,如下脚本为处理的一种参考

# time1e9.py

# 用法:获取MH04_GT.txt,丢掉第一行,将第一列除以1e9,生成MH04_GT_1E9.txt,存在的问题有这样子除出来的浮点型丢了一两个单位的精度,不过实际测试似乎问题不大

import sys

pathin = "./Ground_truth/EuRoC_left_cam/MH04_GT.txt" #数据来源

pathout = "./Ground_truth/EuRoC_left_cam/MH04_GT_1E9.txt"

import os

f = open(pathin,'r',encoding = 'UTF-8')

open(pathout,"w+").close()

list1 = [5000]

line = f.readline() # MH04_GT.txt第一行为参数注释,丢掉就行

# line = f.readline()

# -----单次调试----

'''

a = line.split(",")

b = a[0] #第一列数

c = a[1:] # c is a list

list1.append(b)

list1.append(c)

if b is not None:

b = float(b) / 1e9

with open(pathout,"a") as f_out:

# for i in list1:

first = str(b)

f_out.write(first + ' ')

for j in range(len(c)-1):

f_out.write(str(c[j]) + ' ')

f_out.write(str(c[(len(c)-1)]))

f_out.close()

'''

# ----

while line is not None and line !='':

line = f.readline()

a = line.split(",")

b = a[0] #第一列数

c = a[1:] # c is a list

list1.append(b)

list1.append(c)

# with open(pathout,"w") as f_out:

# f_out.write(str(list1))

# f_out.close()

if b != '':

b = float(b) / 1e9

with open(pathout,"a") as f_out:

first = str(b)

f_out.write(first + ' ')

for j in range(len(c)-1):

f_out.write(str(c[j]) + ' ')

f_out.write(str(c[(len(c)-1)]))

f_out.close()

# print(b)

f.close()

print("write done!")

处理前后结果如图

最后附上生成轨迹的代码

// ORBSLAM3 - stereo_euroc.cc

/**

* This file is part of ORB-SLAM3

*

* Copyright (C) 2017-2021 Carlos Campos, Richard Elvira, Juan J. Gómez Rodríguez, José M.M. Montiel and Juan D. Tardós, University of Zaragoza.

* Copyright (C) 2014-2016 Raúl Mur-Artal, José M.M. Montiel and Juan D. Tardós, University of Zaragoza.

*

* ORB-SLAM3 is free software: you can redistribute it and/or modify it under the terms of the GNU General Public

* License as published by the Free Software Foundation, either version 3 of the License, or

* (at your option) any later version.

*

* ORB-SLAM3 is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even

* the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

* GNU General Public License for more details.

*

* You should have received a copy of the GNU General Public License along with ORB-SLAM3.

* If not, see <http://www.gnu.org/licenses/>.

*/

#include<iostream>

#include<algorithm>

#include<fstream>

#include<iomanip>

#include<chrono>

#include<opencv2/core/core.hpp>

#include<System.h>

using namespace std;

void LoadImages(const string &strPathLeft, const string &strPathRight, const string &strPathTimes,

vector<string> &vstrImageLeft, vector<string> &vstrImageRight, vector<double> &vTimeStamps);

int main(int argc, char **argv)

{

if(argc < 5)

{

cerr << endl << "Usage: ./stereo_euroc path_to_vocabulary path_to_settings path_to_sequence_folder_1 path_to_times_file_1 (path_to_image_folder_2 path_to_times_file_2 ... path_to_image_folder_N path_to_times_file_N) (trajectory_file_name)" << endl;

return 1;

}

const int num_seq = (argc-3)/2;

cout << "num_seq = " << num_seq << endl;

bool bFileName= (((argc-3) % 2) == 1);

string file_name;

if (bFileName)

{

file_name = string(argv[argc-1]);

cout << "file name: " << file_name << endl;

}

// Load all sequences:

int seq;

vector< vector<string> > vstrImageLeft;

vector< vector<string> > vstrImageRight;

vector< vector<double> > vTimestampsCam;

vector<int> nImages;

vstrImageLeft.resize(num_seq);

vstrImageRight.resize(num_seq);

vTimestampsCam.resize(num_seq);

nImages.resize(num_seq);

int tot_images = 0;

for (seq = 0; seq<num_seq; seq++)

{

cout << "Loading images for sequence " << seq << "...";

string pathSeq(argv[(2*seq) + 3]);

string pathTimeStamps(argv[(2*seq) + 4]);

string pathCam0 = pathSeq + "/mav0/cam0/data";

string pathCam1 = pathSeq + "/mav0/cam1/data";

LoadImages(pathCam0, pathCam1, pathTimeStamps, vstrImageLeft[seq], vstrImageRight[seq], vTimestampsCam[seq]);

cout << "LOADED!" << endl;

nImages[seq] = vstrImageLeft[seq].size();

tot_images += nImages[seq];

}

// Vector for tracking time statistics

vector<float> vTimesTrack;

vTimesTrack.resize(tot_images);

cout << endl << "-------" << endl;

cout.precision(17);

// Create SLAM system. It initializes all system threads and gets ready to process frames.

ORB_SLAM3::System SLAM(argv[1],argv[2],ORB_SLAM3::System::STEREO, true); // 最后一个标记位是控制是否显示跑的过错可视化界面

cv::Mat imLeft, imRight;

for (seq = 0; seq<num_seq; seq++)

{

// Seq loop

double t_resize = 0;

double t_rect = 0;

double t_track = 0;

int num_rect = 0;

int proccIm = 0;

for(int ni=0; ni<nImages[seq]; ni++, proccIm++)

{

// Read left and right images from file

imLeft = cv::imread(vstrImageLeft[seq][ni],cv::IMREAD_UNCHANGED); //,cv::IMREAD_UNCHANGED);

imRight = cv::imread(vstrImageRight[seq][ni],cv::IMREAD_UNCHANGED); //,cv::IMREAD_UNCHANGED);

if(imLeft.empty())

{

cerr << endl << "Failed to load image at: "

<< string(vstrImageLeft[seq][ni]) << endl;

return 1;

}

if(imRight.empty())

{

cerr << endl << "Failed to load image at: "

<< string(vstrImageRight[seq][ni]) << endl;

return 1;

}

double tframe = vTimestampsCam[seq][ni];

#ifdef COMPILEDWITHC11

std::chrono::steady_clock::time_point t1 = std::chrono::steady_clock::now();

#else

std::chrono::monotonic_clock::time_point t1 = std::chrono::monotonic_clock::now();

#endif

// Pass the images to the SLAM system

SLAM.TrackStereo(imLeft,imRight,tframe, vector<ORB_SLAM3::IMU::Point>(), vstrImageLeft[seq][ni]);

#ifdef COMPILEDWITHC11

std::chrono::steady_clock::time_point t2 = std::chrono::steady_clock::now();

#else

std::chrono::monotonic_clock::time_point t2 = std::chrono::monotonic_clock::now();

#endif

#ifdef REGISTER_TIMES

t_track = t_resize + t_rect + std::chrono::duration_cast<std::chrono::duration<double,std::milli> >(t2 - t1).count();

SLAM.InsertTrackTime(t_track);

#endif

double ttrack= std::chrono::duration_cast<std::chrono::duration<double> >(t2 - t1).count();

vTimesTrack[ni]=ttrack;

// Wait to load the next frame

double T=0;

if(ni<nImages[seq]-1)

T = vTimestampsCam[seq][ni+1]-tframe;

else if(ni>0)

T = tframe-vTimestampsCam[seq][ni-1];

if(ttrack<T)

usleep((T-ttrack)*1e6); // 1e6

}

if(seq < num_seq - 1)

{

cout << "Changing the dataset" << endl;

SLAM.ChangeDataset();

}

}

// Stop all threads

SLAM.Shutdown();

// Save camera trajectory

if (bFileName)

{

// const string kf_file = "kf_" + string(argv[argc-1]) + ".txt";

// const string f_file = "f_" + string(argv[argc-1]) + ".txt";

const string kf_file = string(argv[argc-1]) + "_kf" + ".txt";

const string f_file = string(argv[argc-1]) + "_f" + ".txt";

SLAM.SaveTrajectoryEuRoC(f_file);

SLAM.SaveKeyFrameTrajectoryEuRoC(kf_file);

}

else

{

SLAM.SaveTrajectoryEuRoC("CameraTrajectory.txt");

SLAM.SaveKeyFrameTrajectoryEuRoC("KeyFrameTrajectory.txt");

}

return 0;

}

void LoadImages(const string &strPathLeft, const string &strPathRight, const string &strPathTimes,

vector<string> &vstrImageLeft, vector<string> &vstrImageRight, vector<double> &vTimeStamps)

{

ifstream fTimes;

fTimes.open(strPathTimes.c_str());

vTimeStamps.reserve(5000);

vstrImageLeft.reserve(5000);

vstrImageRight.reserve(5000);

while(!fTimes.eof())

{

string s;

getline(fTimes,s);

if(!s.empty())

{

stringstream ss;

ss << s;

vstrImageLeft.push_back(strPathLeft + "/" + ss.str() + ".png");

vstrImageRight.push_back(strPathRight + "/" + ss.str() + ".png");

double t;

ss >> t;

vTimeStamps.push_back(t/1e18); //1e9改成了1e18,跑出来第一列时间戳单位为秒

}

}

}

//ORBSLAM2 - stereo_euroc.cc

/**

* This file is part of ORB-SLAM2.

*

* Copyright (C) 2014-2016 Raúl Mur-Artal <raulmur at unizar dot es> (University of Zaragoza)

* For more information see <https://github.com/raulmur/ORB_SLAM2>

*

* ORB-SLAM2 is free software: you can redistribute it and/or modify

* it under the terms of the GNU General Public License as published by

* the Free Software Foundation, either version 3 of the License, or

* (at your option) any later version.

*

* ORB-SLAM2 is distributed in the hope that it will be useful,

* but WITHOUT ANY WARRANTY; without even the implied warranty of

* MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

* GNU General Public License for more details.

*

* You should have received a copy of the GNU General Public License

* along with ORB-SLAM2. If not, see <http://www.gnu.org/licenses/>.

*/

#include<iostream>

#include<algorithm>

#include<fstream>

#include<iomanip>

#include<chrono>

#include<opencv2/core/core.hpp>

#include<System.h>

#include<unistd.h>

using namespace std;

/**

* @brief 加载图像

* @param[in] strPathLeft 保存左目图像文件名称的文件的路径

* @param[in] strPathRight 保存右目图像文件名称的文件的路径

* @param[in] strPathTimes 保存图像时间戳的文件的路径

* @param[out] vstrImageLeft 左目图像序列中每张图像的文件名

* @param[out] vstrImageRight 右目图像序列中每张图像的文件名

* @param[out] vTimeStamps 图像序列中每张图像的时间戳(认为左目图像和右目图像的时间戳已经对齐)

*/

void LoadImages(const string &strPathLeft, const string &strPathRight, const string &strPathTimes,

vector<string> &vstrImageLeft, vector<string> &vstrImageRight, vector<double> &vTimeStamps);

int main(int argc, char **argv)

{

// step 0 参数检查

if(argc != 6)

{

cerr << endl << "Usage: ./stereo_euroc path_to_vocabulary path_to_settings path_to_left_folder path_to_right_folder path_to_times_file" << endl;

return 1;

}

// step 1 获取图像的访问路径

// Retrieve paths to images

// 保存左右目图像每张图像路径和时间戳的向量

vector<string> vstrImageLeft;

vector<string> vstrImageRight;

vector<double> vTimeStamp;

//获得图像位置

LoadImages(string(argv[3]), string(argv[4]), string(argv[5]), vstrImageLeft, vstrImageRight, vTimeStamp);

//检查图像序列是否为空

if(vstrImageLeft.empty() || vstrImageRight.empty())

{

cerr << "ERROR: No images in provided path." << endl;

return 1;

}

// 当然如果左右目图像的数目不一致也不可以

if(vstrImageLeft.size()!=vstrImageRight.size())

{

cerr << "ERROR: Different number of left and right images." << endl;

return 1;

}

// step 2 从给出的配置文件中读取去畸变参数

// Read rectification parameters

cv::FileStorage fsSettings(

argv[2], // path_to_settings

cv::FileStorage::READ); // 以只读方式打开

if(!fsSettings.isOpened())

{

cerr << "ERROR: Wrong path to settings" << endl;

return -1;

}

cv::Mat K_l, K_r, P_l, P_r, R_l, R_r, D_l, D_r;

//相机内参

fsSettings["LEFT.K"] >> K_l;

fsSettings["RIGHT.K"] >> K_r;

//TODO 目测是经过双目立体矫正后的投影矩阵

fsSettings["LEFT.P"] >> P_l;

fsSettings["RIGHT.P"] >> P_r;

// 修正变换矩阵,见下面的函数调用

fsSettings["LEFT.R"] >> R_l;

fsSettings["RIGHT.R"] >> R_r;

//去畸变参数

fsSettings["LEFT.D"] >> D_l;

fsSettings["RIGHT.D"] >> D_r;

//图像尺寸

int rows_l = fsSettings["LEFT.height"];

int cols_l = fsSettings["LEFT.width"];

int rows_r = fsSettings["RIGHT.height"];

int cols_r = fsSettings["RIGHT.width"];

//参数合法性检查(不为空就行)

if(K_l.empty() || K_r.empty() || P_l.empty() || P_r.empty() || R_l.empty() || R_r.empty() || D_l.empty() || D_r.empty() ||

rows_l==0 || rows_r==0 || cols_l==0 || cols_r==0)

{

cerr << "ERROR: Calibration parameters to rectify stereo are missing!" << endl;

return -1;

}

//存储四个映射矩阵

cv::Mat M1l,M2l,M1r,M2r;

//根据相机内参,去畸变参数以及立体矫正后,得到的新的相机内参来计算从原始图像到处理后理想双目图像的映射矩阵

//这个函数的定义参考:[https://blog.csdn.net/u013341645/article/details/78710740]

// 左目

cv::initUndistortRectifyMap( //计算无畸变和修正转换映射

K_l, //输入的相机内参矩阵 (单目标定阶段得到的相机内参矩阵)

D_l, //单目标定阶段得到的相机的去畸变参数

R_l, //可选的修正变换矩阵,3*3, 从 cv::stereoRectify 得来.如果这个矩阵为空矩阵,那么就将会被设置成为单位矩阵

P_l.rowRange(0,3).colRange(0,3), //新的相机内参矩阵

cv::Size(cols_l,rows_l), //在去畸变之前的图像尺寸

CV_32F, //第一个输出映射的类型

M1l, //第一个输出映射表

M2l); //第二个输出映射

// 右目

cv::initUndistortRectifyMap(K_r,D_r,R_r,P_r.rowRange(0,3).colRange(0,3),cv::Size(cols_r,rows_r),CV_32F,M1r,M2r);

// step 3 构造SLAM系统

// 获取图像数目

const int nImages = vstrImageLeft.size();

// Create SLAM system. It initializes all system threads and gets ready to process frames.

// 创建系统

ORB_SLAM2::System SLAM(argv[1],argv[2],ORB_SLAM2::System::STEREO,true); //true:开启显示界面

// Vector for tracking time statistics

vector<float> vTimesTrack;

vTimesTrack.resize(nImages);

cout << endl << "-------" << endl;

cout << "Start processing sequence ..." << endl;

cout << "Images in the sequence: " << nImages << endl << endl;

// Main loop

// step 4 对每一张输入的图像执行追踪

cv::Mat imLeft, imRight, imLeftRect, imRightRect;

for(int ni=0; ni<nImages; ni++)

{

// Read left and right images from file

// step 4.1 读取原始图像

imLeft = cv::imread(vstrImageLeft[ni],CV_LOAD_IMAGE_UNCHANGED);

imRight = cv::imread(vstrImageRight[ni],CV_LOAD_IMAGE_UNCHANGED);

//合法性检查

if(imLeft.empty())

{

cerr << endl << "Failed to load image at: "

<< string(vstrImageLeft[ni]) << endl;

return 1;

}

if(imRight.empty())

{

cerr << endl << "Failed to load image at: "

<< string(vstrImageRight[ni]) << endl;

return 1;

}

// step 4.2 对左右目图像进行双目矫正和去畸变处理

//参考博客 [https://blog.csdn.net/sss_369/article/details/52983123]

// 左目

cv::remap( //重映射,就是把一幅图像中某位置的像素放置到另一个图片指定位置的过程。

imLeft, //输入图像

imLeftRect, //输出图像

M1l, //第一个映射矩阵表

M2l, //第二个映射矩阵

cv::INTER_LINEAR);

// 右目

cv::remap(imRight,imRightRect,M1r,M2r,cv::INTER_LINEAR);

double tframe = vTimeStamp[ni];

// step 4.3 开始计时

#ifdef COMPILEDWITHC11

std::chrono::steady_clock::time_point t1 = std::chrono::steady_clock::now();

#else

std::chrono::monotonic_clock::time_point t1 = std::chrono::monotonic_clock::now();

#endif

// Pass the images to the SLAM system

// step 4.5 开始追踪

SLAM.TrackStereo(imLeftRect,imRightRect,tframe);

// step 4.6 追踪完成,停止计时,计算追踪时间

#ifdef COMPILEDWITHC11

std::chrono::steady_clock::time_point t2 = std::chrono::steady_clock::now();

#else

std::chrono::monotonic_clock::time_point t2 = std::chrono::monotonic_clock::now();

#endif

double ttrack= std::chrono::duration_cast<std::chrono::duration<double> >(t2 - t1).count();

vTimesTrack[ni]=ttrack;

// Wait to load the next frame

// step 4.7 等待一段时间以符合下一帧图像的时间戳

double T=0;

if(ni<nImages-1)

T = vTimeStamp[ni+1]-tframe;

else if(ni>0)

T = tframe-vTimeStamp[ni-1];

if(ttrack<T)

usleep((T-ttrack)*1e6);

}

// step 5 追踪过程执行完毕,退出系统

// Stop all threads

SLAM.Shutdown();

// Tracking time statistics

// step 6 统计追踪时间

sort(vTimesTrack.begin(),vTimesTrack.end());

float totaltime = 0;

for(int ni=0; ni<nImages; ni++)

{

totaltime+=vTimesTrack[ni];

}

cout << "-------" << endl << endl;

cout << "median tracking time: " << vTimesTrack[nImages/2] << endl;

cout << "mean tracking time: " << totaltime/nImages << endl;

// Save camera trajectory

// step 7 以TUM格式保存轨迹文件(普通帧+ 关键帧)

// const string kf_file = string(argv[argc]) + "_kf" + ".txt";

// SLAM.SaveTrajectoryTUM(kf_file);

SLAM.SaveTrajectoryTUM("CameraTrajectory.txt");

return 0;

}

//加载图像,和单目中的做法一样

void LoadImages(const string &strPathLeft, const string &strPathRight, const string &strPathTimes,

vector<string> &vstrImageLeft, vector<string> &vstrImageRight, vector<double> &vTimeStamps)

{

ifstream fTimes;

fTimes.open(strPathTimes.c_str());

vTimeStamps.reserve(5000);

vstrImageLeft.reserve(5000);

vstrImageRight.reserve(5000);

while(!fTimes.eof())

{

string s;

getline(fTimes,s);

if(!s.empty())

{

stringstream ss;

ss << s;

vstrImageLeft.push_back(strPathLeft + "/" + ss.str() + ".png");

vstrImageRight.push_back(strPathRight + "/" + ss.str() + ".png");

double t;

ss >> t;

vTimeStamps.push_back(t/1e9); //除时间戳让单位到秒

}

}

}

参考文献:

https://blog.csdn.net/william_hehe/article/details/80160508

985

985

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?