File Formats

We provide the RGB-D datasets from the Kinect in the following format:

Note two things: how depth is represented and how the data is stored.

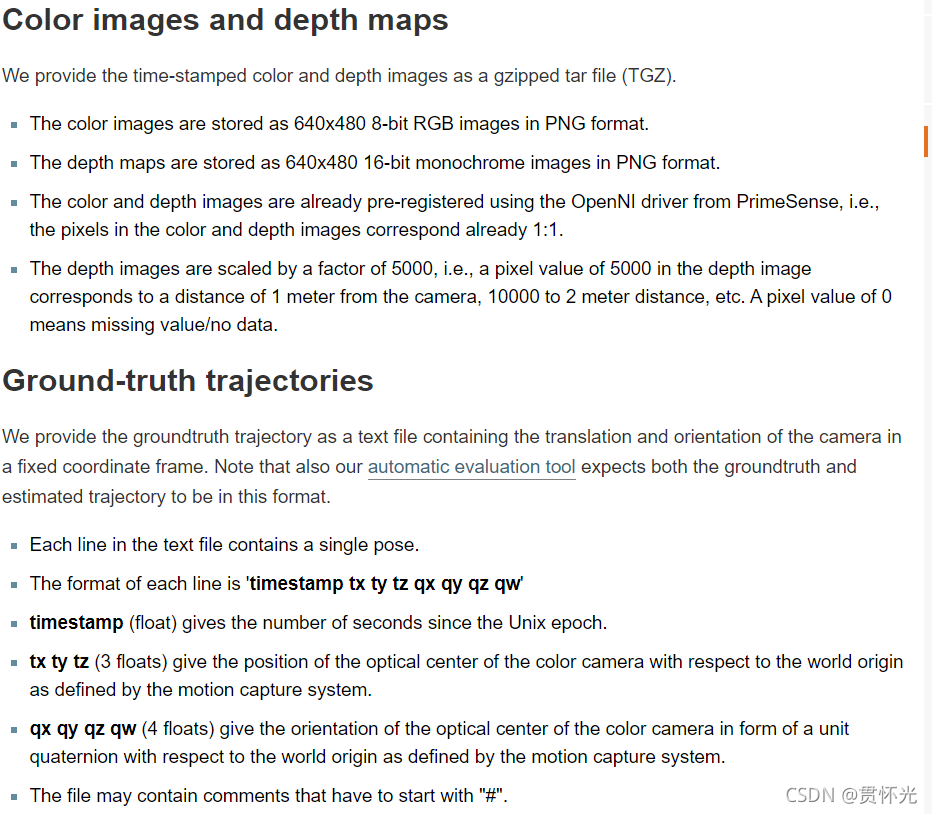

Color images and depth maps

We provide the time-stamped color and depth images as a gzipped tar file (TGZ).

The color images are stored as 640x480 8-bit RGB images in PNG format.

The depth maps are stored as 640x480 16-bit monochrome images in PNG format.

The color and depth images are already pre-registered using the OpenNI driver from PrimeSense, i.e., the pixels in the color and depth images correspond already 1:1.

The depth images are scaled by a factor of 5000, i.e., a pixel value of 5000 in the depth image corresponds to a distance of 1 meter from the camera, 10000 to a distance of 2 meters, etc. A pixel value of 0 means missing value/no data.
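The scaling rule above can be sketched as a small helper. This is only an illustration: the function name `raw_depth_to_meters` and the choice to return `None` for missing data are assumptions, not part of the dataset tools.

```python
# Raw depth units per meter for the 16-bit PNG depth maps (from the text above).
DEPTH_SCALE = 5000.0


def raw_depth_to_meters(raw, scale=DEPTH_SCALE):
    """Convert one raw 16-bit depth value to meters.

    A raw value of 0 marks missing data, so None is returned in that case
    (returning None is a choice made for this sketch).
    """
    if raw == 0:
        return None
    return raw / scale


print(raw_depth_to_meters(5000))   # 1.0
print(raw_depth_to_meters(10000))  # 2.0
print(raw_depth_to_meters(0))      # None
```

When working with whole images, the same division would typically be applied to the full array at once, with the zero pixels masked out.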

Ground-truth trajectories

We provide the ground-truth trajectory as a text file containing the translation and orientation of the camera in a fixed coordinate frame. Note that our automatic evaluation tool also expects both the ground-truth and the estimated trajectory to be in this format.

Each line in the text file contains a single pose.

The format of each line is ‘timestamp tx ty tz qx qy qz qw’

timestamp (float) gives the number of seconds since the Unix epoch.

tx ty tz (3 floats) give the position of the optical center of the color camera with respect to the world origin as defined by the motion capture system.

qx qy qz qw (4 floats) give the orientation of the optical center of the color camera in form of a unit quaternion with respect to the world origin as defined by the motion capture system.

The file may contain comments that have to start with “#”.
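The line format described above could be read as follows. This is a minimal sketch, not the dataset's own evaluation tool: the function names `parse_trajectory` and `quaternion_to_matrix` and the sample lines are hypothetical. The quaternion conversion uses the standard unit-quaternion to rotation-matrix formula.

```python
import math


def parse_trajectory(text):
    """Parse 'timestamp tx ty tz qx qy qz qw' lines into a list of poses.

    Each pose is (timestamp, (tx, ty, tz), (qx, qy, qz, qw)).
    Blank lines and lines starting with '#' are skipped.
    """
    poses = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        values = [float(v) for v in line.split()]
        if len(values) != 8:
            raise ValueError(f"expected 8 values, got {len(values)}: {line!r}")
        poses.append((values[0], tuple(values[1:4]), tuple(values[4:8])))
    return poses


def quaternion_to_matrix(qx, qy, qz, qw):
    """Return the 3x3 rotation matrix (nested lists, row-major) of a quaternion.

    The quaternion is normalized first to guard against rounding in the file.
    """
    n = math.sqrt(qx * qx + qy * qy + qz * qz + qw * qw)
    qx, qy, qz, qw = qx / n, qy / n, qz / n, qw / n
    return [
        [1 - 2 * (qy * qy + qz * qz), 2 * (qx * qy - qz * qw), 2 * (qx * qz + qy * qw)],
        [2 * (qx * qy + qz * qw), 1 - 2 * (qx * qx + qz * qz), 2 * (qy * qz - qx * qw)],
        [2 * (qx * qz - qy * qw), 2 * (qy * qz + qx * qw), 1 - 2 * (qx * qx + qy * qy)],
    ]


# Hypothetical sample content, made up for illustration only.
sample = """# timestamp tx ty tz qx qy qz qw
1.0 0.1 0.2 0.3 0.0 0.0 0.0 1.0
2.0 0.4 0.5 0.6 0.0 0.0 0.0 1.0
"""

for timestamp, position, quaternion in parse_trajectory(sample):
    rotation = quaternion_to_matrix(*quaternion)
    print(timestamp, position, rotation[0])
```

Note the component order: the file stores the scalar part `qw` last, whereas some libraries expect it first.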


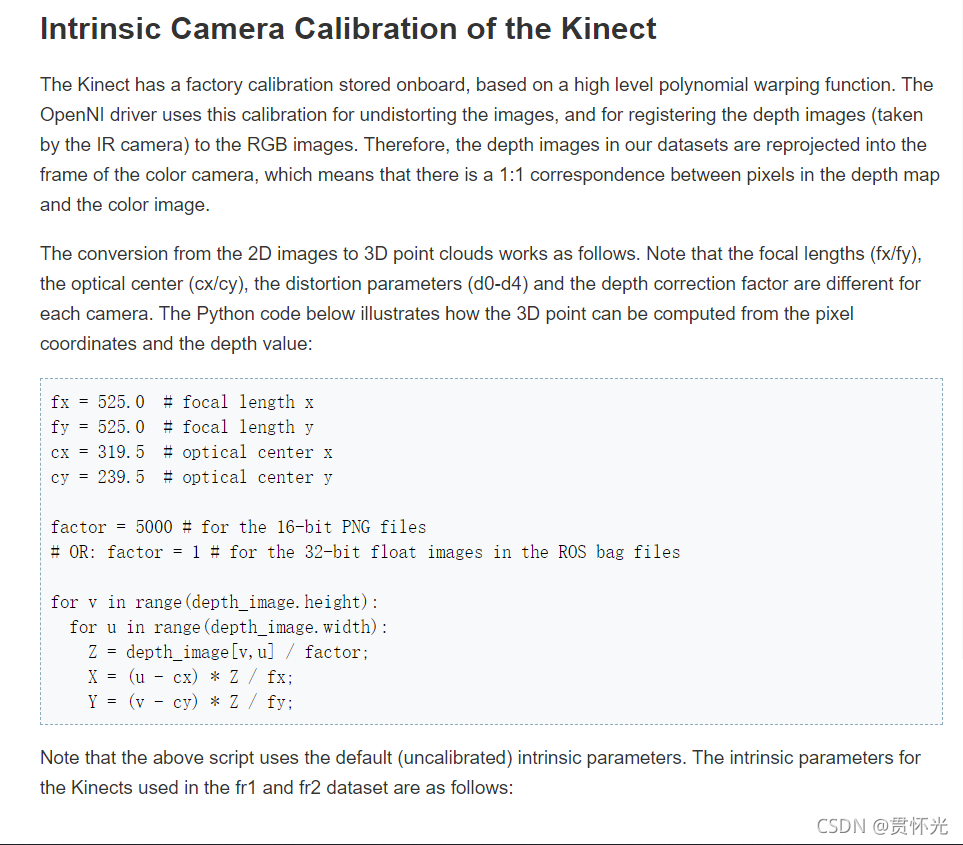

Intrinsic Camera Calibration of the Kinect

The Kinect has a factory calibration stored onboard, based on a high level polynomial warping function. The OpenNI driver uses this calibration for undistorting the images, and for registering the depth images (taken by the IR camera) to the RGB images. Therefore, the depth images in our datasets are reprojected into the frame of the color camera, which means that there is a 1:1 correspondence between pixels in the depth map and the color image.

The conversion from the 2D images to 3D point clouds works as follows. Note that the focal lengths (fx/fy), the optical center (cx/cy), the distortion parameters (d0-d4) and the depth correction factor are different for each camera. The Python code below illustrates how the 3D point can be computed from the pixel coordinates and the depth value:
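A minimal sketch of this back-projection, assuming a plain pinhole model without distortion correction. The function name `backproject` is hypothetical, and the default intrinsics (fx = fy = 525.0, cx = 319.5, cy = 239.5) are placeholder values assumed for illustration; as noted above, each camera has its own calibrated parameters, which should be used instead. The depth factor of 5000 is the one given for the 16-bit PNG files.

```python
def backproject(u, v, raw_depth,
                fx=525.0, fy=525.0,   # focal lengths (assumed placeholder values)
                cx=319.5, cy=239.5,   # optical center (assumed placeholder values)
                factor=5000.0):       # raw depth units per meter for the 16-bit PNGs
    """Compute the 3D point (X, Y, Z) in meters for pixel (u, v).

    u is the column, v is the row. Returns None when the depth is missing
    (raw value 0). Lens distortion (d0-d4) is ignored in this sketch.
    """
    if raw_depth == 0:
        return None
    z = raw_depth / factor
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    return (x, y, z)


# A tiny made-up 2x3 depth patch (rows of raw 16-bit values), for illustration.
depth_patch = [
    [5000, 0, 10000],
    [2500, 5000, 0],
]

points = []
for v, row in enumerate(depth_patch):
    for u, raw in enumerate(row):
        point = backproject(u, v, raw)
        if point is not None:
            points.append(point)

print(len(points))  # 4 valid points, the two zero-depth pixels are skipped
```

For full 640x480 images the double loop is slow in pure Python; a vectorized array version of the same three formulas is the usual choice.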


Reference: https://vision.in.tum.de/data/datasets/rgbd-dataset/file_formats
