When building OpenCV from source with CUDA support, you need to set CUDA_ARCH_BIN to match your GPU. This article describes several ways to find that value.

Method 1

Once CUDA is installed, the value can be read from the deviceQuery program in the CUDA samples:

cd /usr/local/cuda/samples/1_Utilities/deviceQuery

sudo make -j8

./deviceQuery

Running the commands above prints the following:

nvidia@nvidia-X10SRA:/usr/local/cuda/samples/1_Utilities/deviceQuery$ ./deviceQuery

./deviceQuery Starting...

CUDA Device Query (Runtime API) version (CUDART static linking)

Detected 3 CUDA Capable device(s)

Device 0: "Tesla T4"

CUDA Driver Version / Runtime Version 10.2 / 10.2

CUDA Capability Major/Minor version number: 7.5

Total amount of global memory: 15110 MBytes (15843721216 bytes)

(40) Multiprocessors, ( 64) CUDA Cores/MP: 2560 CUDA Cores

GPU Max Clock rate: 1590 MHz (1.59 GHz)

Memory Clock rate: 5001 Mhz

Memory Bus Width: 256-bit

L2 Cache Size: 4194304 bytes

Maximum Texture Dimension Size (x,y,z) 1D=(131072), 2D=(131072, 65536), 3D=(16384, 16384, 16384)

Maximum Layered 1D Texture Size, (num) layers 1D=(32768), 2048 layers

Maximum Layered 2D Texture Size, (num) layers 2D=(32768, 32768), 2048 layers

Total amount of constant memory: 65536 bytes

Total amount of shared memory per block: 49152 bytes

Total number of registers available per block: 65536

Warp size: 32

Maximum number of threads per multiprocessor: 1024

Maximum number of threads per block: 1024

Max dimension size of a thread block (x,y,z): (1024, 1024, 64)

Max dimension size of a grid size (x,y,z): (2147483647, 65535, 65535)

Maximum memory pitch: 2147483647 bytes

Texture alignment: 512 bytes

Concurrent copy and kernel execution: Yes with 3 copy engine(s)

Run time limit on kernels: No

Integrated GPU sharing Host Memory: No

Support host page-locked memory mapping: Yes

Alignment requirement for Surfaces: Yes

Device has ECC support: Enabled

Device supports Unified Addressing (UVA): Yes

Device supports Compute Preemption: Yes

Supports Cooperative Kernel Launch: Yes

Supports MultiDevice Co-op Kernel Launch: Yes

Device PCI Domain ID / Bus ID / location ID: 0 / 2 / 0

Compute Mode:

< Default (multiple host threads can use ::cudaSetDevice() with device simultaneously) >

……

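The output lists the relevant device details, including a CUDA capability of 7.5 and 2560 CUDA cores. The "CUDA Capability Major/Minor version number" (7.5 here) is the value to use for CUDA_ARCH_BIN.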
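As a small sketch of how this value could be picked up without reading the output by eye, the snippet below filters deviceQuery output for the capability line and prints a matching CMake command. The helper name `extract_cuda_arch` is hypothetical, and the demo runs on a captured line rather than a real GPU; `WITH_CUDA` and `CUDA_ARCH_BIN` are the OpenCV CMake options, and any other flags your build needs are left out.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read deviceQuery output on stdin and print the unique
# "Major.Minor" capability values, space-separated (one per distinct GPU type).
extract_cuda_arch() {
    grep 'CUDA Capability Major/Minor version number' \
        | awk '{print $NF}' \
        | sort -u \
        | paste -sd' ' -
}

# Demo on a captured line so the snippet runs without a GPU.
# In practice, pipe the real tool instead: ./deviceQuery | extract_cuda_arch
sample='  CUDA Capability Major/Minor version number:    7.5'
arch=$(printf '%s\n' "$sample" | extract_cuda_arch)

# Print (do not run) the CMake command that would use the value.
echo "cmake -D WITH_CUDA=ON -D CUDA_ARCH_BIN=\"$arch\" .."
```

On a machine with several different GPUs, the helper prints each distinct capability once, so the resulting list covers all installed cards.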

Method 2

If the samples were not installed together with CUDA, use the following approach instead:

git clone https://github.com/NVIDIA-AI-IOT/deepstream_tlt_apps.git

cd deepstream_tlt_apps/TRT-OSS/x86

nvcc deviceQuery.cpp -o deviceQuery

./deviceQuery

This prints information similar to the output above.

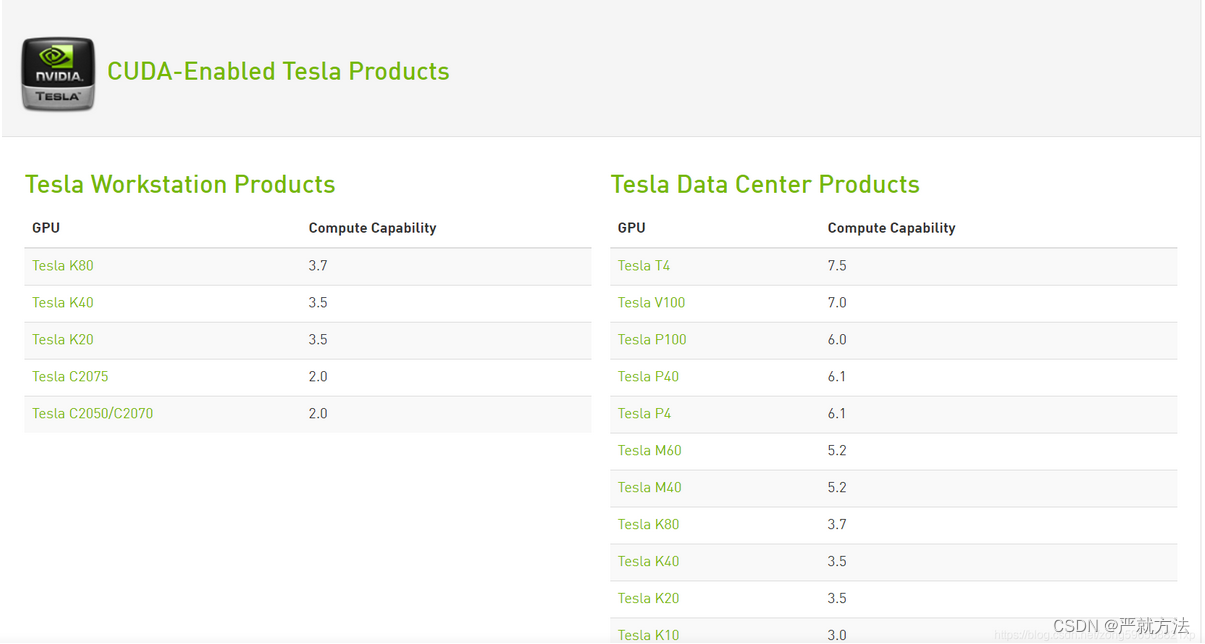
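To decide between Method 1 and Method 2 from a script, one option is to check whether the samples directory exists. The following is only a sketch: the helper name `find_device_query_dir` is hypothetical, and it assumes the samples live under `samples/1_Utilities/deviceQuery` inside the CUDA root, as in Method 1.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the bundled deviceQuery sample directory if it
# exists under the given CUDA root (default /usr/local/cuda); otherwise print
# nothing and return non-zero so the caller can fall back to Method 2.
find_device_query_dir() {
    local cuda_root="${1:-/usr/local/cuda}"
    local dir="$cuda_root/samples/1_Utilities/deviceQuery"
    if [ -d "$dir" ]; then
        printf '%s\n' "$dir"
    else
        return 1
    fi
}

if dir=$(find_device_query_dir); then
    echo "Method 1: build deviceQuery in $dir"
else
    echo "Method 2: samples not found, clone deepstream_tlt_apps instead"
fi
```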

Method 3

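Look the value up on NVIDIA's official website: find your GPU model in the list of CUDA GPUs and read off its compute capability.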
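If the same few GPU models come up repeatedly, the looked-up values can be kept in a small local table. The sketch below is illustrative only: the helper name `lookup_compute_capability` is hypothetical, the table holds just a handful of well-known models, and NVIDIA's list remains the authority for anything else.

```shell
#!/usr/bin/env bash
# Hypothetical helper: map a GPU model name to its compute capability.
# Only a few models are listed; extend the table from NVIDIA's list as needed.
lookup_compute_capability() {
    case "$1" in
        "Tesla T4")            echo "7.5" ;;
        "Tesla V100")          echo "7.0" ;;
        "GeForce GTX 1080 Ti") echo "6.1" ;;
        "GeForce RTX 3090")    echo "8.6" ;;
        *)
            echo "unknown model: $1 (check NVIDIA's list)" >&2
            return 1
            ;;
    esac
}

lookup_compute_capability "Tesla T4"
```

The model name has to match a table entry exactly, so names reported by other tools may need trimming before the lookup.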
