1. Introduction to ChatGLM3-6B

ChatGLM3-6B is a pre-trained dialogue model jointly released by Zhipu AI and the KEG Lab at Tsinghua University.

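1.1 Model size

Model size is usually measured by the number of parameters. More parameters generally mean greater capacity in theory, but also higher resource consumption. Parameter counts for a few typical models are compared below: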

ChatGLM3-6B: 6 billion parameters

GPT-3: 175 billion parameters

BERT Large: 340 million parameters

GPT-2: 1.5 billion parameters

By parameter count, ChatGLM3-6B is larger than BERT Large and GPT-2 but far smaller than GPT-3.
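To make the link between parameter count and resource use concrete, here is a minimal sketch that turns the counts above into an approximate weight footprint. The bytes-per-parameter table and the function name are assumptions of this sketch; it covers weights only, not activations, KV cache, or optimizer state.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Activations, KV cache and optimizer state are NOT included.
PARAMS = {
    "ChatGLM3-6B": 6e9,
    "GPT-3": 175e9,
    "BERT Large": 340e6,
    "GPT-2": 1.5e9,
}

# Storage cost of one parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Return the approximate weight size in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    for name, n in PARAMS.items():
        print(f"{name:12s} fp16 ~ {weight_memory_gb(n):8.1f} GB")
```

By this estimate the fp16 weights of a 6-billion-parameter model take about 12 GB, which is why quantized formats matter on consumer GPUs.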

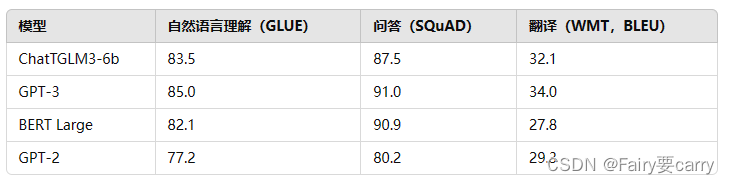

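1.2 Performance comparison

Performance can be measured by results on various benchmarks, such as natural language understanding, question answering, and translation tasks. The figures below are hypothetical (actual values may differ) and are only meant to illustrate the differences: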

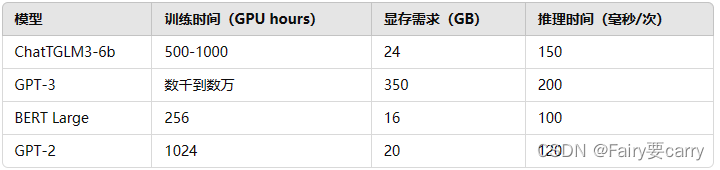

1.3 Resource consumption comparison

These figures suggest that ChatGLM3-6B consumes fewer resources than GPT-3 but somewhat more than BERT and GPT-2.

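2. Deploying ChatGLM3-6B

The command-line mode is suited to local interaction and testing, and lets individual users and developers iterate and debug quickly.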

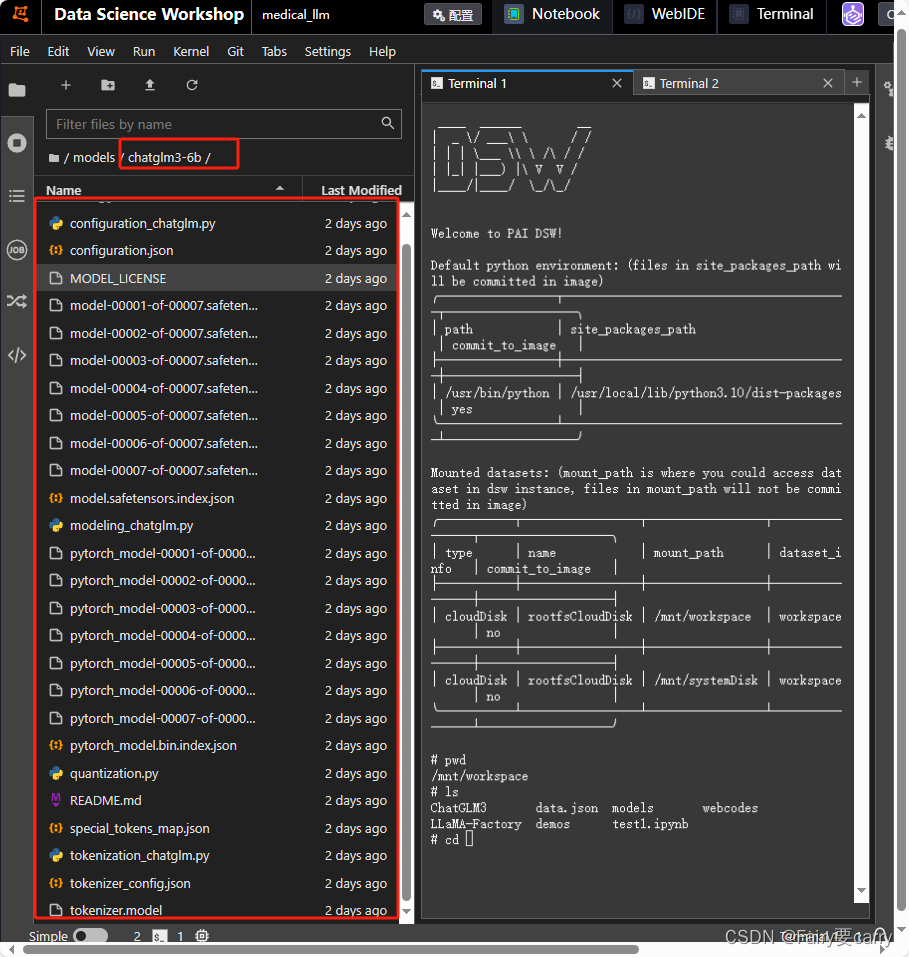

1. Download the model

mkdir models

cd models

apt update

# git-lfs is required to pull the large model weight files

apt install git-lfs

git lfs install

# Clone the ChatGLM3-6B model

git clone https://www.modelscope.cn/ZhipuAI/chatglm3-6b.git
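If git-lfs was not active during the clone, the weight files end up as small text stubs instead of real binaries, and loading the model fails later. The following is a minimal sketch for checking this, assuming the clone created a `chatglm3-6b` directory under `models`; the function name is hypothetical.

```python
from __future__ import annotations

from pathlib import Path

# Every Git LFS pointer stub starts with this line.
LFS_HEADER = b"version https://git-lfs.github.com/spec/v1"


def find_lfs_pointers(model_dir: str) -> list[str]:
    """Return names of top-level files that are still Git LFS pointer stubs."""
    stubs = []
    for path in sorted(Path(model_dir).iterdir()):
        if not path.is_file():
            continue
        with path.open("rb") as fh:
            head = fh.read(len(LFS_HEADER))
        if head == LFS_HEADER:
            stubs.append(path.name)
    return stubs


if __name__ == "__main__":
    # Assumed location of the cloned model; adjust to your layout.
    model_dir = "models/chatglm3-6b"
    if Path(model_dir).is_dir():
        print("Unfetched LFS files:", find_lfs_pointers(model_dir) or "none")
```

If any file names are reported, running `git lfs pull` inside the model directory should fetch the real contents.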

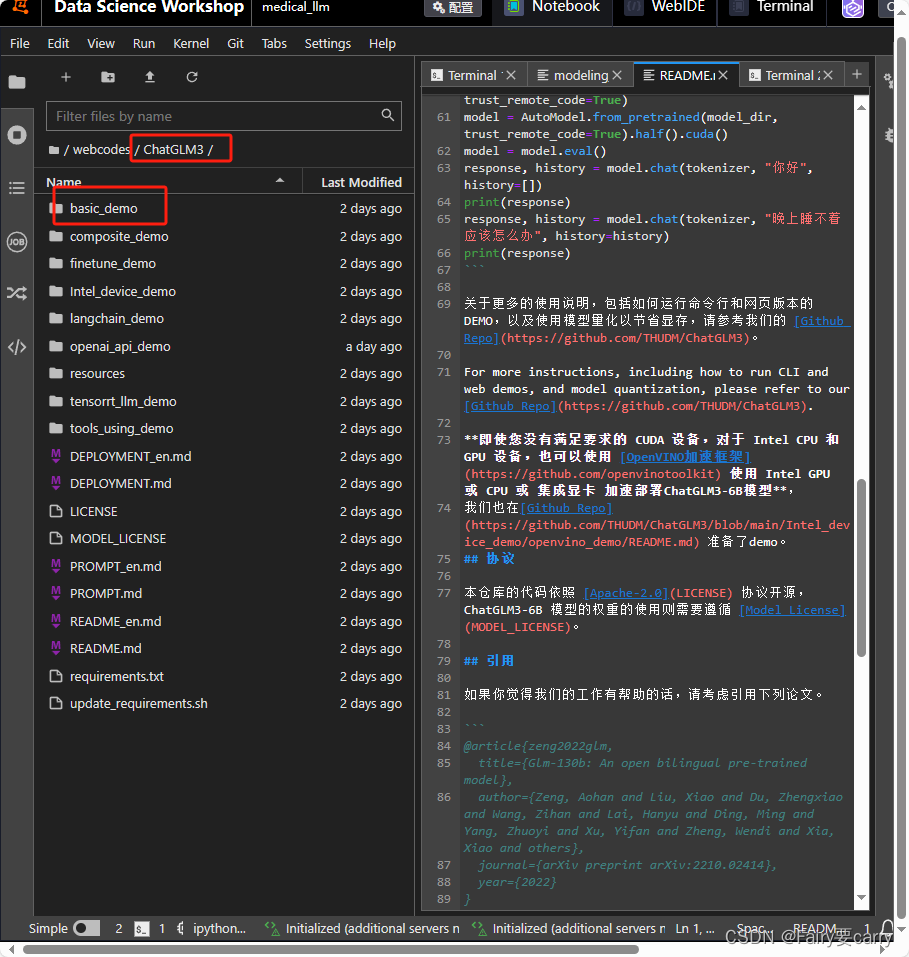

2. Download the project

The command-line and web demos below are used to call the locally deployed model.

mkdir webcodes

cd webcodes

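# Download the ChatGLM3 repository, which contains the web_demo code

git clone https://github.com/THUDM/ChatGLM3.git

# Install dependencies (run from inside the cloned repository)

cd ChatGLM3

pip install -r requirements.txt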

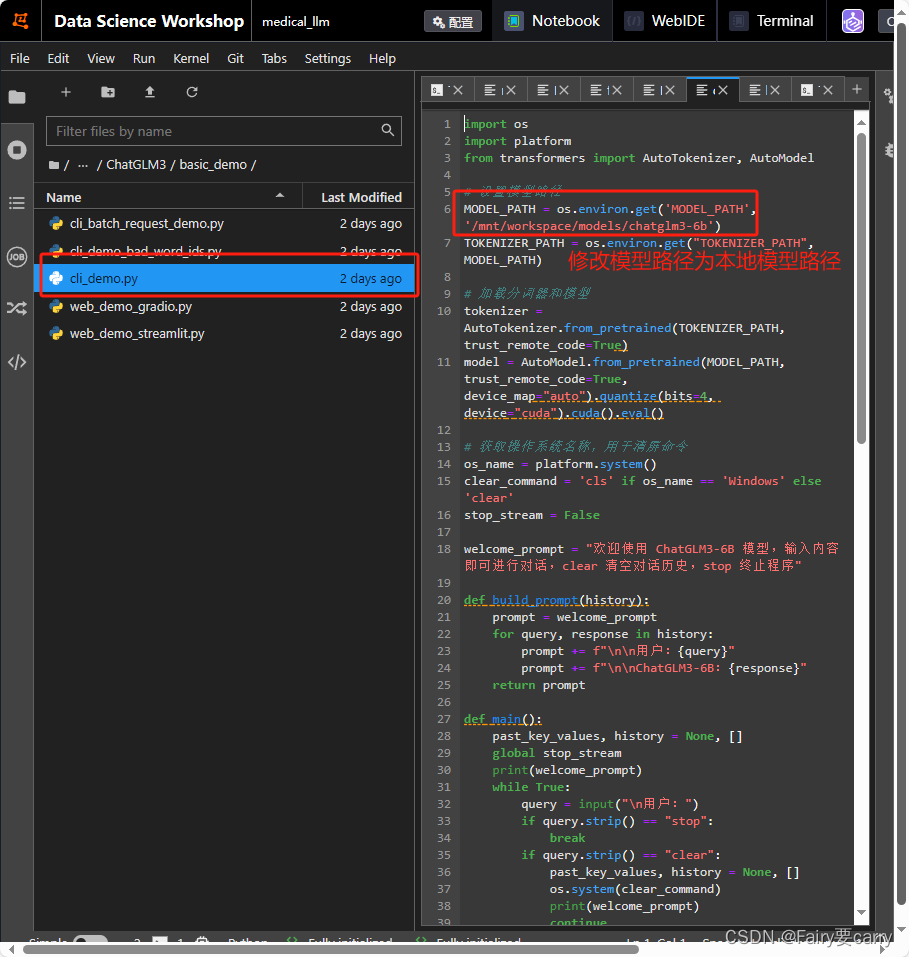

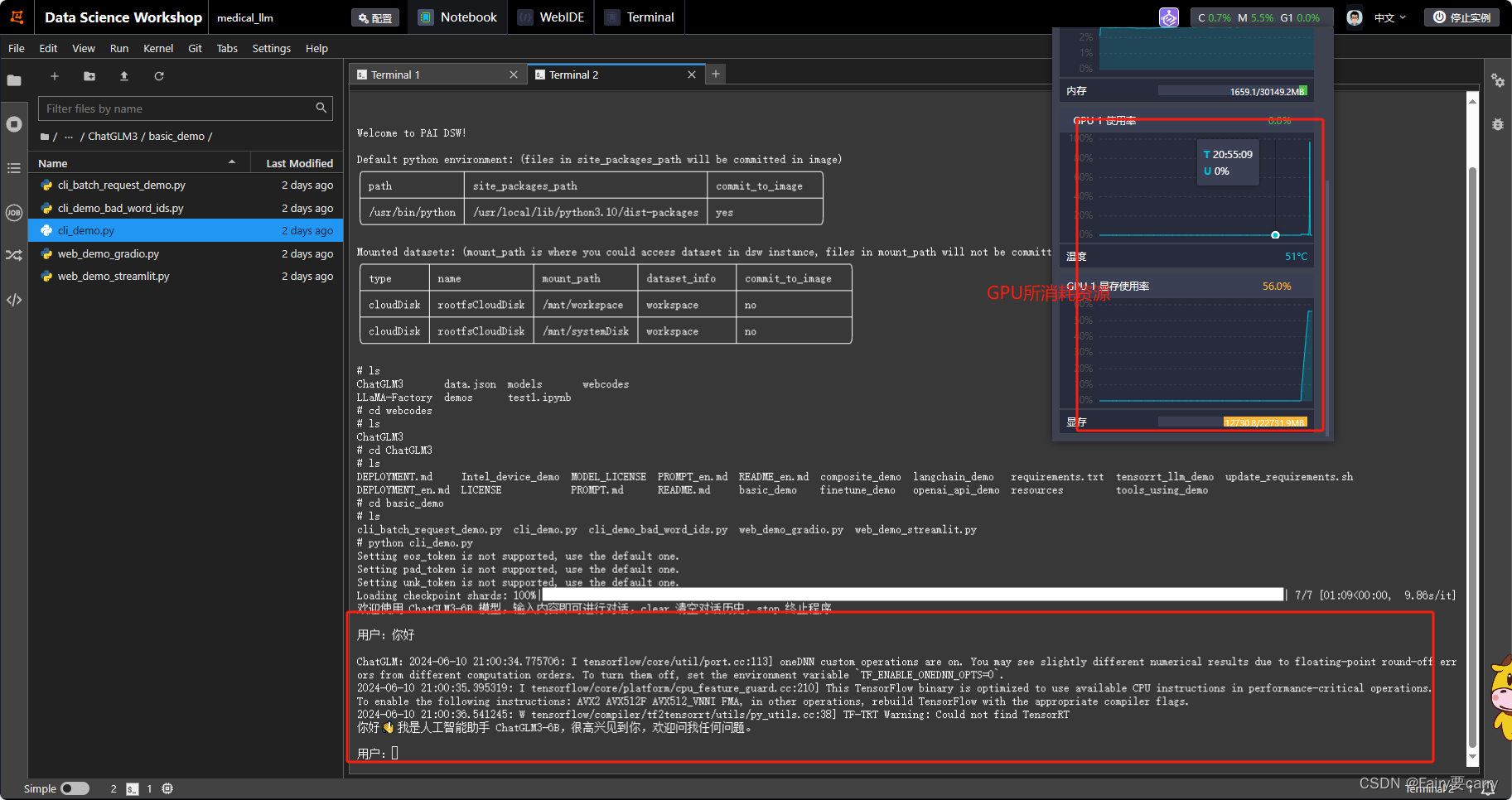

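Before launching, change the model path in the demo script to the local model directory. If it is left unchanged, the model is downloaded from Hugging Face by default, which may require a proxy. The command-line launch is shown here as an example:

# Command-line launch (run from the directory that contains the demo scripts)

python cli_demo.py

# Web launch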

streamlit run web_demo_streamlit.py
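To illustrate what "changing the model path" amounts to, here is a minimal sketch that loads the model from a local directory through `transformers` and asks one question. The `from_pretrained(..., trust_remote_code=True)` and `model.chat(...)` calls follow the model's published usage; the `MODEL_PATH` environment variable and the helper names are assumptions of this sketch, and the demo scripts may handle the path differently.

```python
import os


def resolve_model_path(default: str = "THUDM/chatglm3-6b") -> str:
    """Prefer a local directory given in MODEL_PATH; fall back to the hub id."""
    return os.environ.get("MODEL_PATH", default)


def chat_once(query: str, model_path: str) -> str:
    """Load ChatGLM3-6B and answer a single query (needs a CUDA GPU)."""
    # Imported lazily so resolve_model_path works without transformers installed.
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)
    model = AutoModel.from_pretrained(model_path, trust_remote_code=True)
    model = model.half().cuda().eval()
    response, _history = model.chat(tokenizer, query, history=[])
    return response


# Only run the heavy part when MODEL_PATH points at an existing local directory.
if __name__ == "__main__" and os.path.isdir(resolve_model_path()):
    print(chat_once("Hello, who are you?", resolve_model_path()))
```

Pointing `MODEL_PATH` at the cloned `chatglm3-6b` directory avoids any download from Hugging Face.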

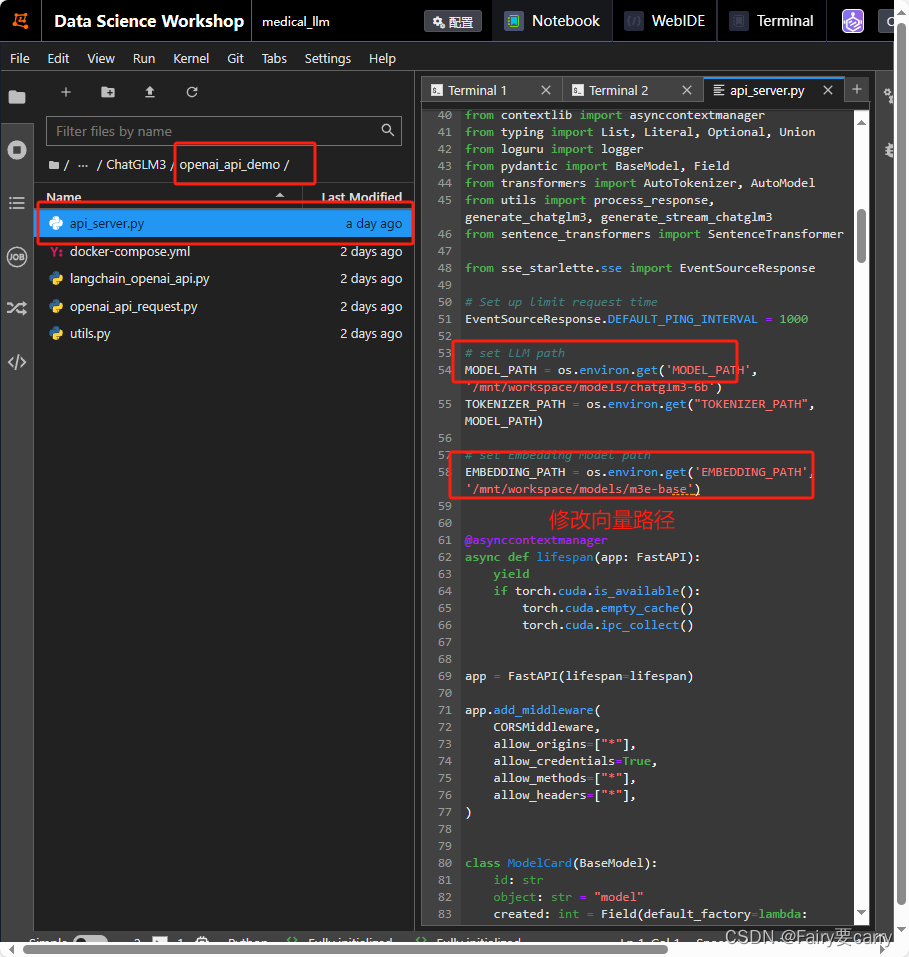

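3. Deploying the OpenAI-style API

This mode suits scenarios where ChatGLM3-6B must be offered as a service. It provides a rich set of API endpoints and flexible deployment options, and also handles text embeddings.

1. Download an embedding model (m3e is used here as an example)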

cd models

# Clone the m3e embedding model

git clone https://www.modelscope.cn/xrunda/m3e-base.git

2. Update the model path

3. Start the server

cd openai_api_demo

python api_server.py

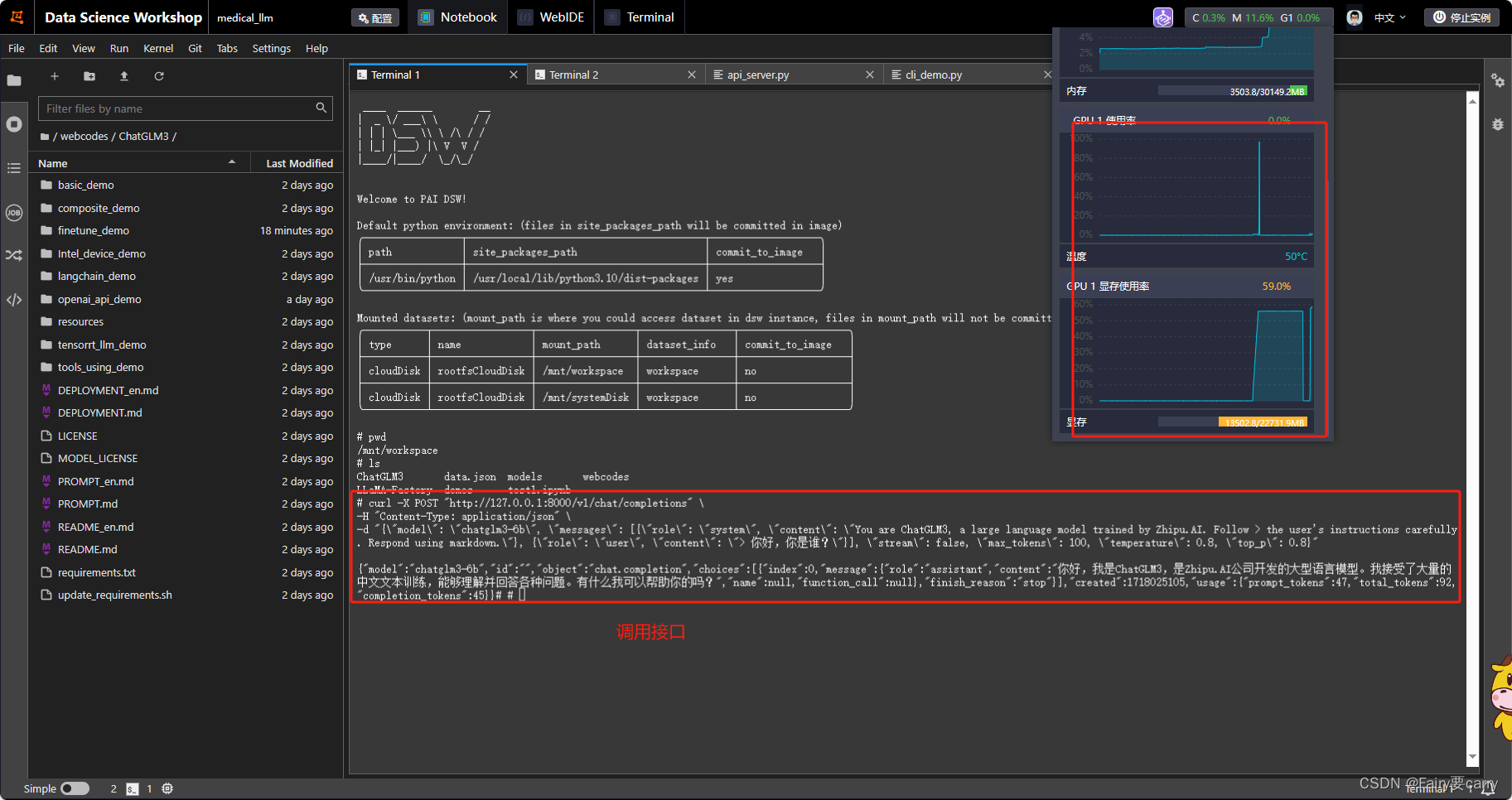

4. Test

curl -X POST "http://127.0.0.1:8000/v1/chat/completions" \
-H "Content-Type: application/json" \
-d "{\"model\": \"chatglm3-6b\", \"messages\": [{\"role\": \"system\", \"content\": \"You are ChatGLM3, a large language model trained by Zhipu.AI. Follow the user's instructions carefully. Respond using markdown.\"}, {\"role\": \"user\", \"content\": \"你好,你是谁?\"}], \"stream\": false, \"max_tokens\": 100, \"temperature\": 0.8, \"top_p\": 0.8}"
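The same request can be sent from Python, which avoids the shell quoting in the curl command. This is a minimal sketch using only the standard library; it assumes the server is listening on 127.0.0.1:8000 as above and that the response follows the OpenAI-style shape with `choices[0].message.content`. The function names are hypothetical.

```python
import json
import urllib.request

API_URL = "http://127.0.0.1:8000/v1/chat/completions"

SYSTEM_PROMPT = (
    "You are ChatGLM3, a large language model trained by Zhipu.AI. "
    "Follow the user's instructions carefully. Respond using markdown."
)


def build_payload(user_text: str, max_tokens: int = 100) -> dict:
    """Build the same JSON body that the curl command sends."""
    return {
        "model": "chatglm3-6b",
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_text},
        ],
        "stream": False,
        "max_tokens": max_tokens,
        "temperature": 0.8,
        "top_p": 0.8,
    }


def chat(user_text: str, url: str = API_URL, timeout: float = 60.0) -> str:
    """POST one chat request and return the assistant's reply text."""
    data = json.dumps(build_payload(user_text)).encode("utf-8")
    req = urllib.request.Request(
        url,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    # Assumed OpenAI-style response layout.
    return body["choices"][0]["message"]["content"]
```

With the server running, `print(chat("你好,你是谁?"))` should print the model's reply.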

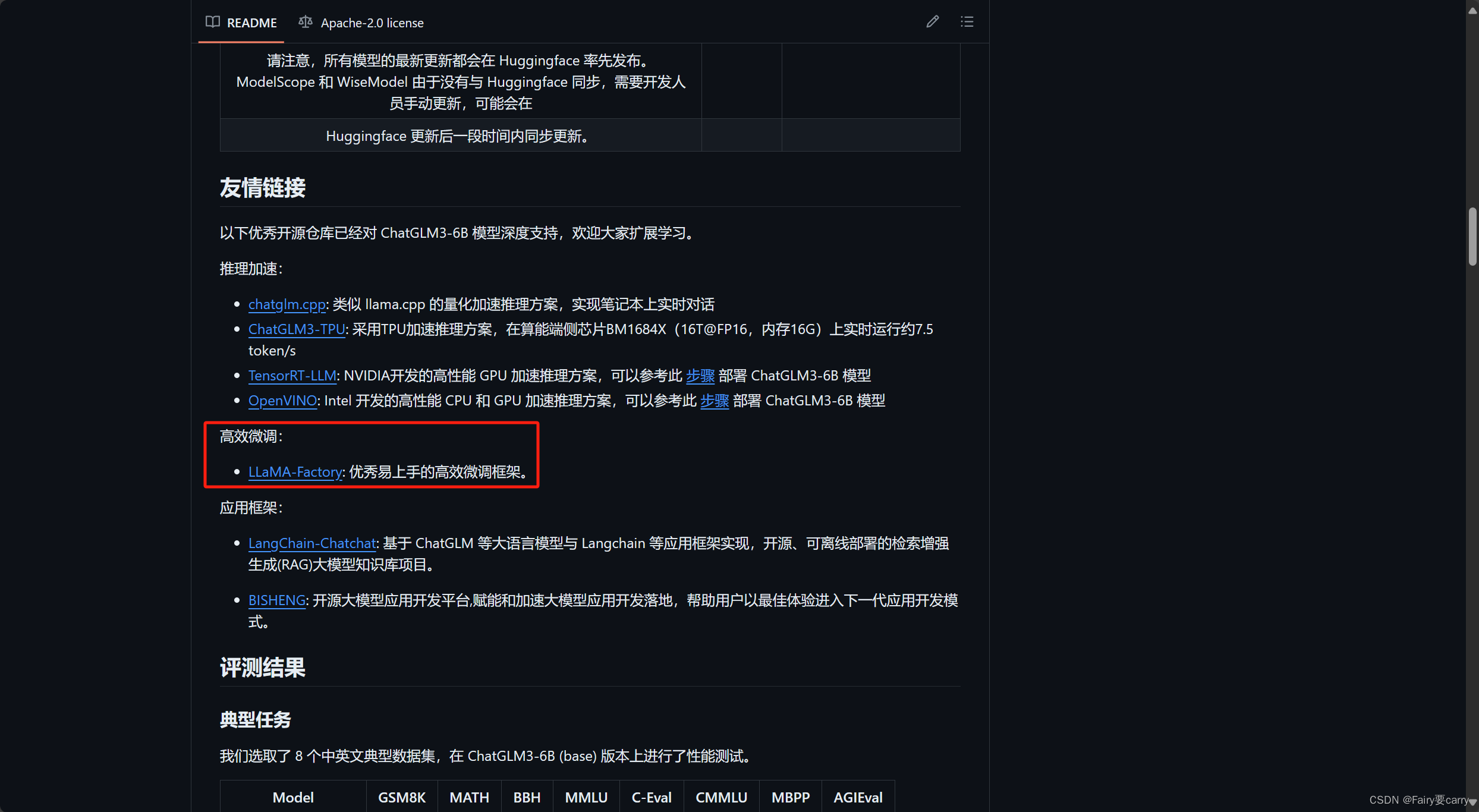

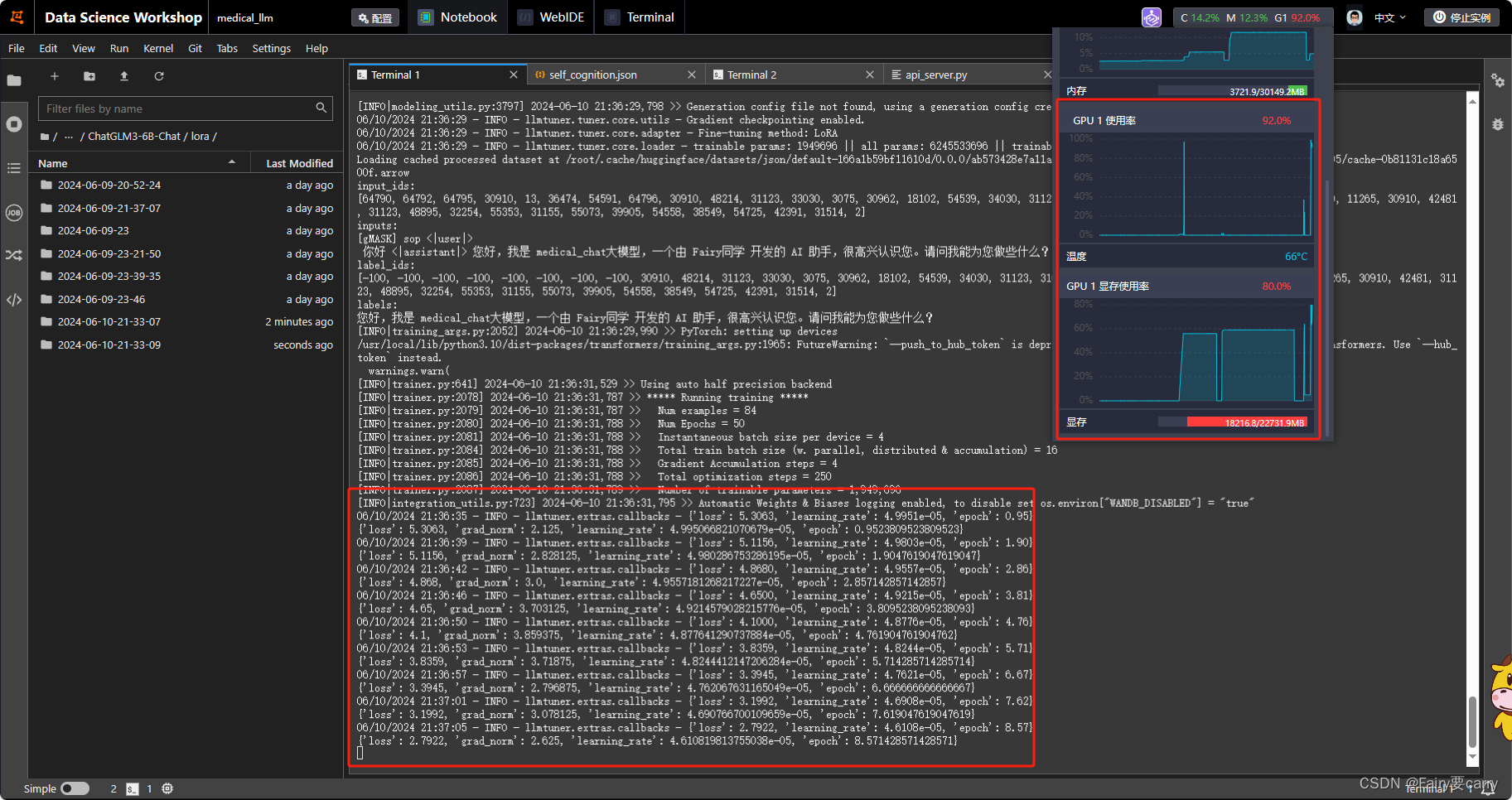

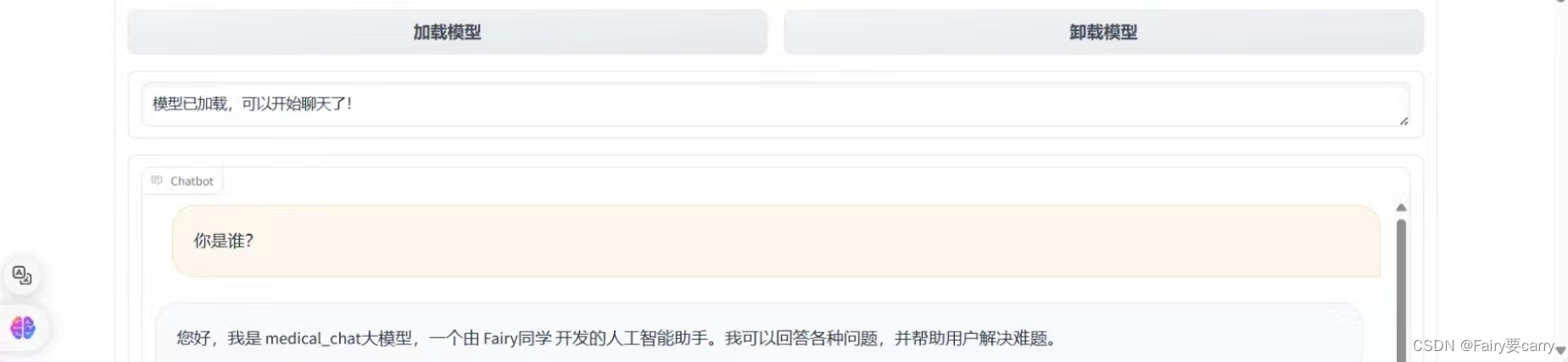

4. LoRA fine-tuning of ChatGLM3-6B

LLaMA-Factory enables quick fine-tuning (officially recommended).

1. Install LLaMA-Factory

Clone LLaMA-Factory and install the required dependencies.

# Clone the project

git clone https://github.com/hiyouga/LLaMA-Factory.git

# Install project dependencies

cd LLaMA-Factory

pip install -r requirements.txt

pip install transformers_stream_generator bitsandbytes tiktoken auto-gptq optimum autoawq

pip install --upgrade tensorflow

pip uninstall flash-attn -y

# Run

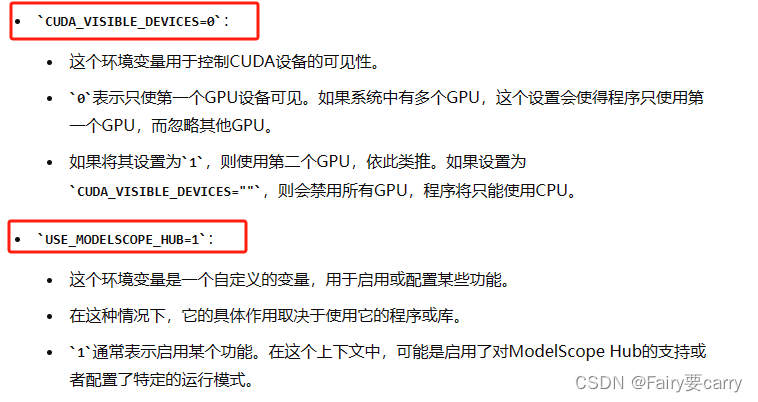

CUDA_VISIBLE_DEVICES=0 USE_MODELSCOPE_HUB=1 python src/train_web.py

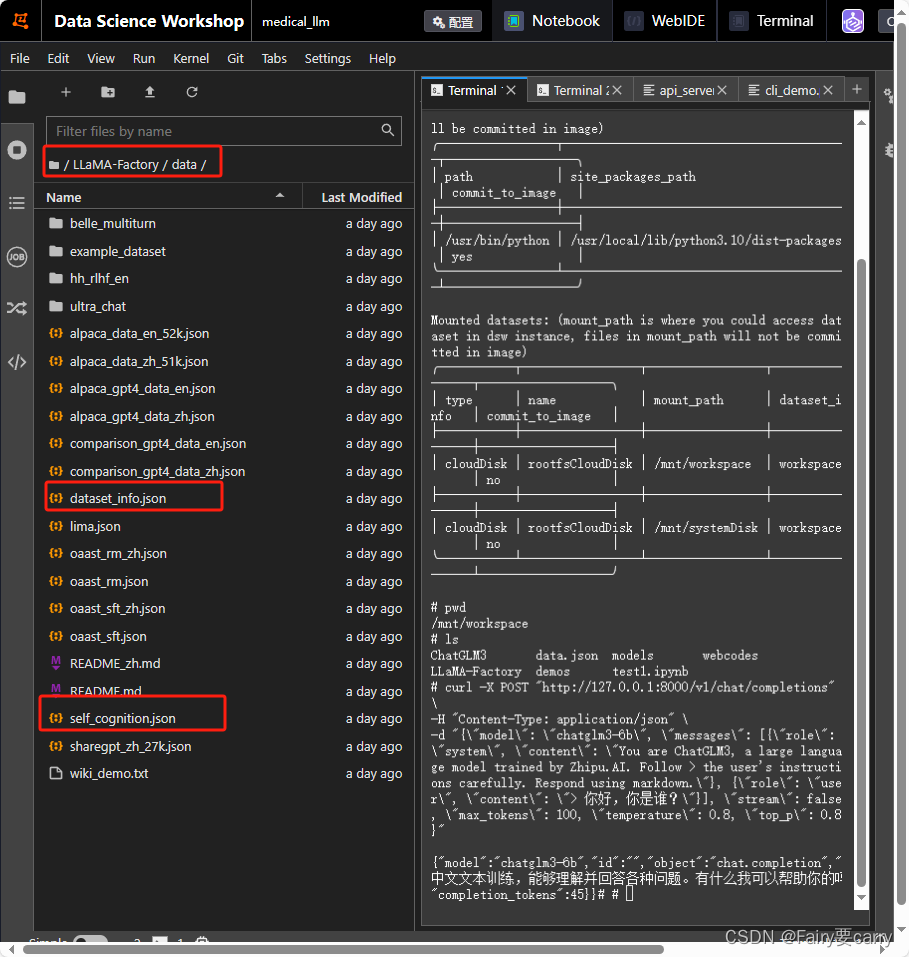

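2. Create and register a custom training set

The self_cognition file

# Custom dataset format

[
  {
    "instruction": "user instruction (required)",
    "input": "user input (optional)",
    "output": "model response (required)",
    "system": "system prompt (optional)",
    "history": [
      ["first-round instruction (optional)", "first-round response (optional)"],
      ["second-round instruction (optional)", "second-round response (optional)"]
    ]
  }
]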
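Before training it can help to check that every record follows the format above. The following is a minimal sketch, assuming records are plain dicts with exactly the keys shown in the template; the function name and the wording of the messages are hypothetical.

```python
import json

REQUIRED = ("instruction", "output")
OPTIONAL = ("input", "system", "history")


def validate_records(records: list) -> list:
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    for i, rec in enumerate(records):
        # Required fields must be present and non-empty strings.
        for key in REQUIRED:
            if not isinstance(rec.get(key), str) or not rec[key].strip():
                problems.append(f"record {i}: missing or empty '{key}'")
        # Each history turn is an [instruction, answer] pair.
        for turn in rec.get("history", []):
            if not (isinstance(turn, list) and len(turn) == 2):
                problems.append(f"record {i}: history turn must be [instruction, answer]")
        # Flag keys that are not part of the template.
        unknown = set(rec) - set(REQUIRED) - set(OPTIONAL)
        if unknown:
            problems.append(f"record {i}: unknown keys {sorted(unknown)}")
    return problems
```

A typical use would be to load the file with `json.load` and print whatever `validate_records` returns before registering the dataset.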

Open dataset_info.json in the data folder of the LLaMA-Factory project and register the dataset there:

{

"alpaca_en": {

"file_name": "alpaca_data_en_52k.json",

"file_sha1": "607f94a7f581341e59685aef32f531095232cf23"

},

"alpaca_zh": {

"file_name": "alpaca_data_zh_51k.json",

"file_sha1": "0016a4df88f523aad8dc004ada7575896824a0dc"

},

"alpaca_gpt4_en": {

"file_name": "alpaca_gpt4_data_en.json",

"file_sha1": "647f4ad447bd993e4b6b6223d1be15208bab694a"

},

"alpaca_gpt4_zh": {

"file_name": "alpaca_gpt4_data_zh.json",

"file_sha1": "3eaa3bda364ccdd59925d7448a698256c31ef845"

},

"identity": {

"file_name": "identity.json",

"file_sha1": "ffe3ecb58ab642da33fbb514d5e6188f1469ad40"

},

"oaast_sft": {

"file_name": "oaast_sft.json",

"file_sha1": "7baf5d43e67a91f9bbdf4e400dbe033b87e9757e",

"columns": {

"prompt": "instruction",

"query": "input",

"response": "output",

"history": "history"

}

},

"oaast_sft_zh": {

"file_name": "oaast_sft_zh.json",

"file_sha1": "a6a91f18f80f37b10ded9cf633fb50c033bf7b9f",

"columns": {

"prompt": "instruction",

"query": "input",

"response": "output",

"history": "history"

}

},

"lima": {

"file_name": "lima.json",

"file_sha1": "9db59f6b7007dc4b17529fc63379b9cd61640f37",

"columns": {

"prompt": "instruction",

"query": "input",

"response": "output",

"history": "history"

}

},

"glaive_toolcall": {

"file_name": "glaive_toolcall_10k.json",

"file_sha1": "a6917b85d209df98d31fdecb253c79ebc440f6f3",

"formatting": "sharegpt",

"columns": {

"messages": "conversations",

"tools": "tools"

}

},

"example": {

"script_url": "example_dataset",

"columns": {

"prompt": "instruction",

"query": "input",

"response": "output",

"history": "history"

}

},

"guanaco": {

"hf_hub_url": "JosephusCheung/GuanacoDataset",

"ms_hub_url": "AI-ModelScope/GuanacoDataset"

},

"belle_2m": {

"hf_hub_url": "BelleGroup/train_2M_CN",

"ms_hub_url": "AI-ModelScope/train_2M_CN"

},

"belle_1m": {

"hf_hub_url": "BelleGroup/train_1M_CN",

"ms_hub_url": "AI-ModelScope/train_1M_CN"

},

"belle_0.5m": {

"hf_hub_url": "BelleGroup/train_0.5M_CN",

"ms_hub_url": "AI-ModelScope/train_0.5M_CN"

},

"belle_dialog": {

"hf_hub_url": "BelleGroup/generated_chat_0.4M",

"ms_hub_url": "AI-ModelScope/generated_chat_0.4M"

},

"belle_math": {

"hf_hub_url": "BelleGroup/school_math_0.25M",

"ms_hub_url": "AI-ModelScope/school_math_0.25M"

},

"belle_multiturn": {

"script_url": "belle_multiturn",

"formatting": "sharegpt"

},

"ultra_chat": {

"script_url": "ultra_chat",

"formatting": "sharegpt"

},

"open_platypus": {

"hf_hub_url": "garage-bAInd/Open-Platypus",

"ms_hub_url": "AI-ModelScope/Open-Platypus"

},

"codealpaca": {

"hf_hub_url": "sahil2801/CodeAlpaca-20k",

"ms_hub_url": "AI-ModelScope/CodeAlpaca-20k"

},

"alpaca_cot": {

"hf_hub_url": "QingyiSi/Alpaca-CoT",

"ms_hub_url": "AI-ModelScope/Alpaca-CoT"

},

"openorca": {

"hf_hub_url": "Open-Orca/OpenOrca",

"ms_hub_url": "AI-ModelScope/OpenOrca",

"columns": {

"prompt": "question",

"response": "response",

"system": "system_prompt"

}

},

"slimorca": {

"hf_hub_url": "Open-Orca/SlimOrca",

"formatting": "sharegpt"

},

"mathinstruct": {

"hf_hub_url": "TIGER-Lab/MathInstruct",

"ms_hub_url": "AI-ModelScope/MathInstruct",

"columns": {

"prompt": "instruction",

"response": "output"

}

},

"firefly": {

"hf_hub_url": "YeungNLP/firefly-train-1.1M",

"columns": {

"prompt": "input",

"response": "target"

}

},

"wikiqa": {

"hf_hub_url": "wiki_qa",

"columns": {

"prompt": "question",

"response": "answer"

}

},

"webqa": {

"hf_hub_url": "suolyer/webqa",

"ms_hub_url": "AI-ModelScope/webqa",

"columns": {

"prompt": "input",

"response": "output"

}

},

"webnovel": {

"hf_hub_url": "zxbsmk/webnovel_cn",

"ms_hub_url": "AI-ModelScope/webnovel_cn"

},

"nectar_sft": {

"hf_hub_url": "mlinmg/SFT-Nectar",

"ms_hub_url": "AI-ModelScope/SFT-Nectar"

},

"deepctrl": {

"ms_hub_url": "deepctrl/deepctrl-sft-data"

},

"adgen": {

"hf_hub_url": "HasturOfficial/adgen",

"ms_hub_url": "AI-ModelScope/adgen",

"columns": {

"prompt": "content",

"response": "summary"

}

},

"sharegpt_hyper": {

"hf_hub_url": "totally-not-an-llm/sharegpt-hyperfiltered-3k",

"formatting": "sharegpt"

},

"sharegpt4": {

"hf_hub_url": "shibing624/sharegpt_gpt4",

"ms_hub_url": "AI-ModelScope/sharegpt_gpt4",

"formatting": "sharegpt"

},

"ultrachat_200k": {

"hf_hub_url": "HuggingFaceH4/ultrachat_200k",

"ms_hub_url": "AI-ModelScope/ultrachat_200k",

"columns": {

"messages": "messages"

},

"tags": {

"role_tag": "role",

"content_tag": "content",

"user_tag": "user",

"assistant_tag": "assistant"

},

"formatting": "sharegpt"

},

"agent_instruct": {

"hf_hub_url": "THUDM/AgentInstruct",

"ms_hub_url": "ZhipuAI/AgentInstruct",

"formatting": "sharegpt"

},

"lmsys_chat": {

"hf_hub_url": "lmsys/lmsys-chat-1m",

"ms_hub_url": "AI-ModelScope/lmsys-chat-1m",

"columns": {

"messages": "conversation"

},

"tags": {

"role_tag": "role",

"content_tag": "content",

"user_tag": "human",

"assistant_tag": "assistant"

},

"formatting": "sharegpt"

},

"evol_instruct": {

"hf_hub_url": "WizardLM/WizardLM_evol_instruct_V2_196k",

"ms_hub_url": "AI-ModelScope/WizardLM_evol_instruct_V2_196k",

"formatting": "sharegpt"

},

"glaive_toolcall_100k": {

"hf_hub_url": "hiyouga/glaive-function-calling-v2-sharegpt",

"formatting": "sharegpt",

"columns": {

"messages": "conversations",

"tools": "tools"

}

},

"cosmopedia": {

"hf_hub_url": "HuggingFaceTB/cosmopedia",

"columns": {

"prompt": "prompt",

"response": "text"

}

},

"oasst_de": {

"hf_hub_url": "mayflowergmbh/oasst_de"

},

"dolly_15k_de": {

"hf_hub_url": "mayflowergmbh/dolly-15k_de"

},

"alpaca-gpt4_de": {

"hf_hub_url": "mayflowergmbh/alpaca-gpt4_de"

},

"openschnabeltier_de": {

"hf_hub_url": "mayflowergmbh/openschnabeltier_de"

},

"evol_instruct_de": {

"hf_hub_url": "mayflowergmbh/evol-instruct_de"

},

"dolphin_de": {

"hf_hub_url": "mayflowergmbh/dolphin_de"

},

"booksum_de": {

"hf_hub_url": "mayflowergmbh/booksum_de"

},

"airoboros_de": {

"hf_hub_url": "mayflowergmbh/airoboros-3.0_de"

},

"ultrachat_de": {

"hf_hub_url": "mayflowergmbh/ultra-chat_de"

},

"hh_rlhf_en": {

"script_url": "hh_rlhf_en",

"columns": {

"prompt": "instruction",

"response": "output",

"history": "history"

},

"ranking": true

},

"oaast_rm": {

"file_name": "oaast_rm.json",

"file_sha1": "622d420e9b70003b210618253bd3d9d2891d86cb",

"columns": {

"prompt": "instruction",

"query": "input",

"response": "output",

"history": "history"

},

"ranking": true

},

"oaast_rm_zh": {

"file_name": "oaast_rm_zh.json",

"file_sha1": "1065af1f3784dd61be5e79713a35f427b713a232",

"columns": {

"prompt": "instruction",

"query": "input",

"response": "output",

"history": "history"

},

"ranking": true

},

"comparison_gpt4_en": {

"file_name": "comparison_gpt4_data_en.json",

"file_sha1": "96fa18313544e22444fe20eead7754b17da452ae",

"ranking": true

},

"comparison_gpt4_zh": {

"file_name": "comparison_gpt4_data_zh.json",

"file_sha1": "515b18ed497199131ddcc1af950345c11dc5c7fd",

"ranking": true

},

"orca_rlhf": {

"file_name": "orca_rlhf.json",

"file_sha1": "acc8f74d16fd1fc4f68e7d86eaa781c2c3f5ba8e",

"ranking": true,

"columns": {

"prompt": "question",

"response": "answer",

"system": "system"

}

},

"nectar_rm": {

"hf_hub_url": "mlinmg/RLAIF-Nectar",

"ms_hub_url": "AI-ModelScope/RLAIF-Nectar",

"ranking": true

},

"orca_dpo_de" : {

"hf_hub_url": "mayflowergmbh/intel_orca_dpo_pairs_de",

"ranking": true

},

"wiki_demo": {

"file_name": "wiki_demo.txt",

"file_sha1": "e70375e28eda542a90c68213640cc371898ce181",

"columns": {

"prompt": "text"

}

},

"c4_demo": {

"file_name": "c4_demo.json",

"file_sha1": "a5a0c86759732f9a5238e447fecd74f28a66cca8",

"columns": {

"prompt": "text"

}

},

"refinedweb": {

"hf_hub_url": "tiiuae/falcon-refinedweb",

"columns": {

"prompt": "content"

}

},

"redpajama_v2": {

"hf_hub_url": "togethercomputer/RedPajama-Data-V2",

"columns": {

"prompt": "raw_content"

},

"subset": "default"

},

"wikipedia_en": {

"hf_hub_url": "olm/olm-wikipedia-20221220",

"ms_hub_url": "AI-ModelScope/olm-wikipedia-20221220",

"columns": {

"prompt": "text"

}

},

"wikipedia_zh": {

"hf_hub_url": "pleisto/wikipedia-cn-20230720-filtered",

"ms_hub_url": "AI-ModelScope/wikipedia-cn-20230720-filtered",

"columns": {

"prompt": "completion"

}

},

"pile": {

"hf_hub_url": "EleutherAI/pile",

"ms_hub_url": "AI-ModelScope/pile",

"columns": {

"prompt": "text"

},

"subset": "all"

},

"skypile": {

"hf_hub_url": "Skywork/SkyPile-150B",

"ms_hub_url": "AI-ModelScope/SkyPile-150B",

"columns": {

"prompt": "text"

}

},

"the_stack": {

"hf_hub_url": "bigcode/the-stack",

"ms_hub_url": "AI-ModelScope/the-stack",

"columns": {

"prompt": "content"

}

},

"starcoder_python": {

"hf_hub_url": "bigcode/starcoderdata",

"ms_hub_url": "AI-ModelScope/starcoderdata",

"columns": {

"prompt": "content"

},

"folder": "python"

},

"self_cognition": {

"file_name": "self_cognition.json",

"file_sha1": "eca3d89fa38b35460d6627cefdc101feef507eb5"

}

}
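The `self_cognition` entry at the end of the listing can also be added programmatically. This is a minimal sketch, assuming `file_sha1` is the SHA-1 hex digest of the dataset file's contents and that the dataset file sits next to `dataset_info.json`; the function names are hypothetical.

```python
import hashlib
import json
from pathlib import Path


def file_sha1(path: Path) -> str:
    """Compute the SHA-1 hex digest of a file, reading it in chunks."""
    h = hashlib.sha1()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def register_dataset(data_dir: str, name: str, file_name: str) -> dict:
    """Add or update one entry in dataset_info.json and return it."""
    data_path = Path(data_dir)
    info_path = data_path / "dataset_info.json"
    info = json.loads(info_path.read_text(encoding="utf-8"))
    info[name] = {
        "file_name": file_name,
        "file_sha1": file_sha1(data_path / file_name),
    }
    info_path.write_text(
        json.dumps(info, ensure_ascii=False, indent=2), encoding="utf-8"
    )
    return info[name]
```

For example, `register_dataset("data", "self_cognition", "self_cognition.json")` run from the LLaMA-Factory root would refresh the hash after the file is edited.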

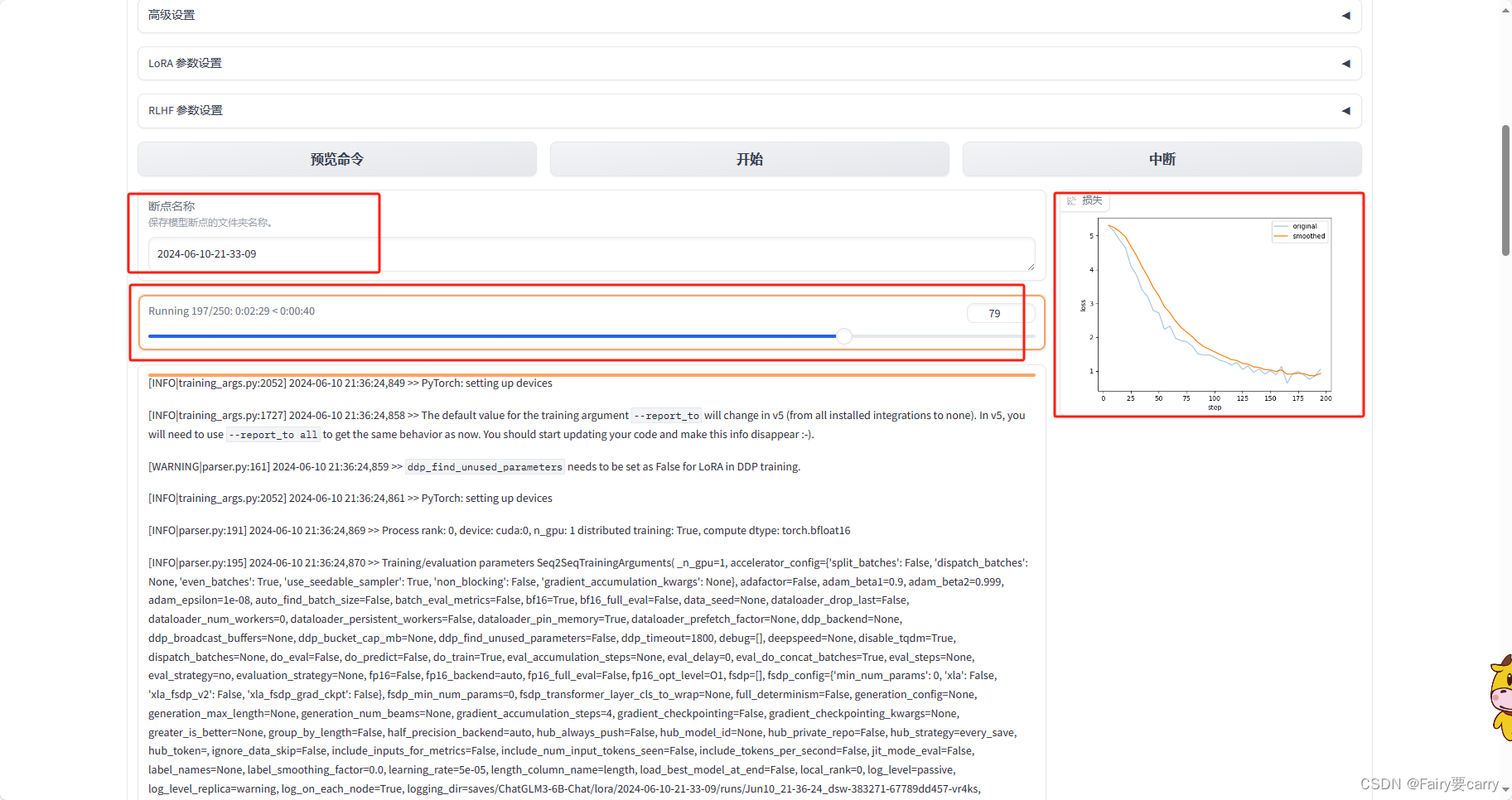

3. Run fine-tuning

# Run

CUDA_VISIBLE_DEVICES=0 USE_MODELSCOPE_HUB=1 python src/train_web.py

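One thing to watch: set the checkpoint (output) directory correctly.

If an error reports that the output already exists, the current checkpoint directory has most likely been used by a previous run, so a new one must be specified.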
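One way to avoid the "output already exists" error is to generate a fresh directory name for each run. Here is a minimal sketch; the timestamp-based naming scheme and the function name are assumptions of this sketch, not something LLaMA-Factory requires.

```python
from datetime import datetime
from pathlib import Path


def fresh_output_dir(base: str, prefix: str = "train") -> Path:
    """Return a not-yet-existing directory path under `base`."""
    stamp = datetime.now().strftime("%Y-%m-%d-%H-%M-%S")
    candidate = Path(base) / f"{prefix}_{stamp}"
    suffix = 1
    # If two runs start within the same second, append a counter.
    while candidate.exists():
        candidate = Path(base) / f"{prefix}_{stamp}_{suffix}"
        suffix += 1
    return candidate
```

The returned path can be pasted into the checkpoint or output directory field before starting the next run.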

The result is as follows:
