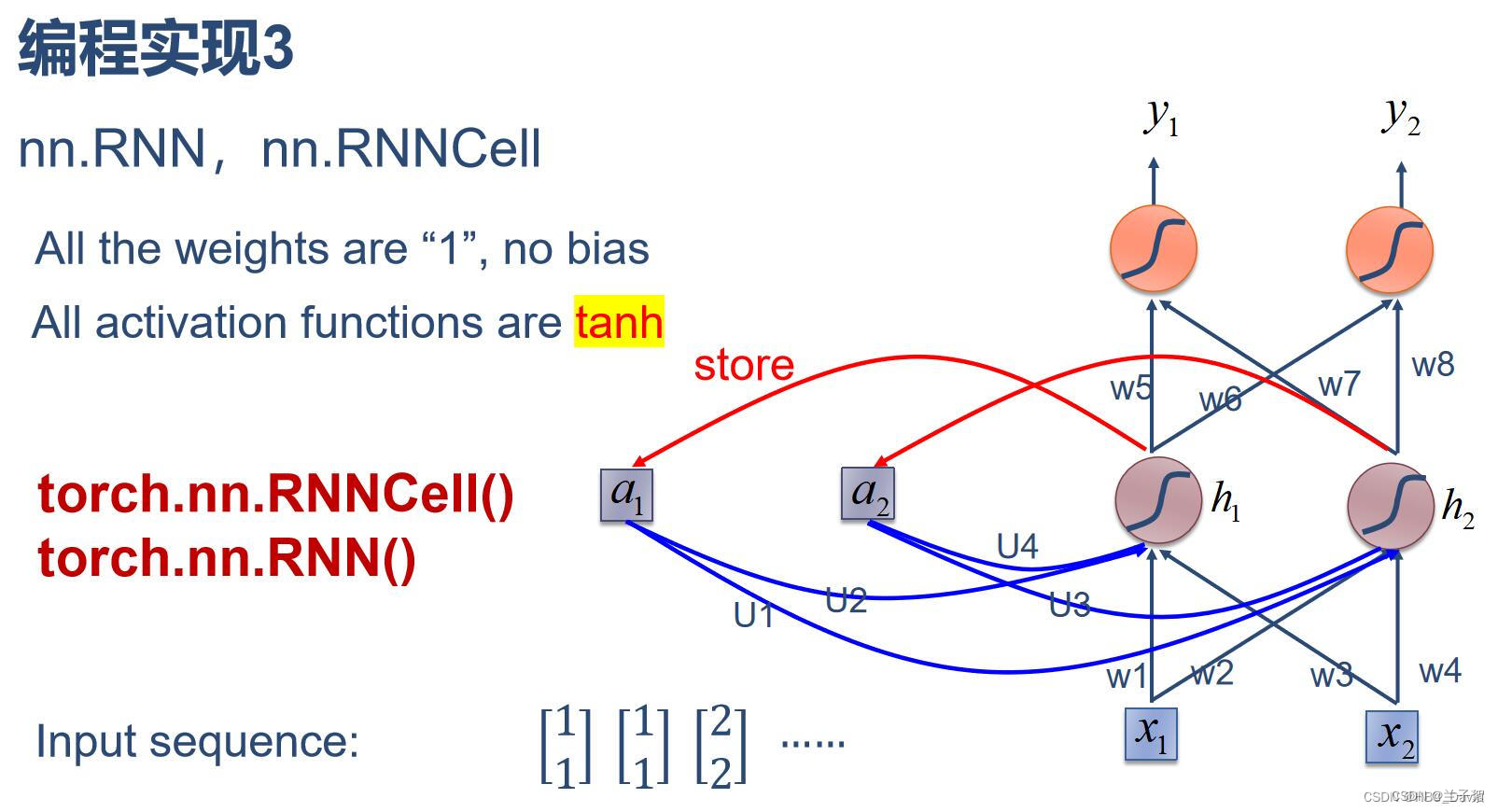

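A simple recurrent network (SRN) is a recurrent neural network with only one hidden layer, whose state is fed back as an extra input at the next time step.

1. Implementing an SRN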

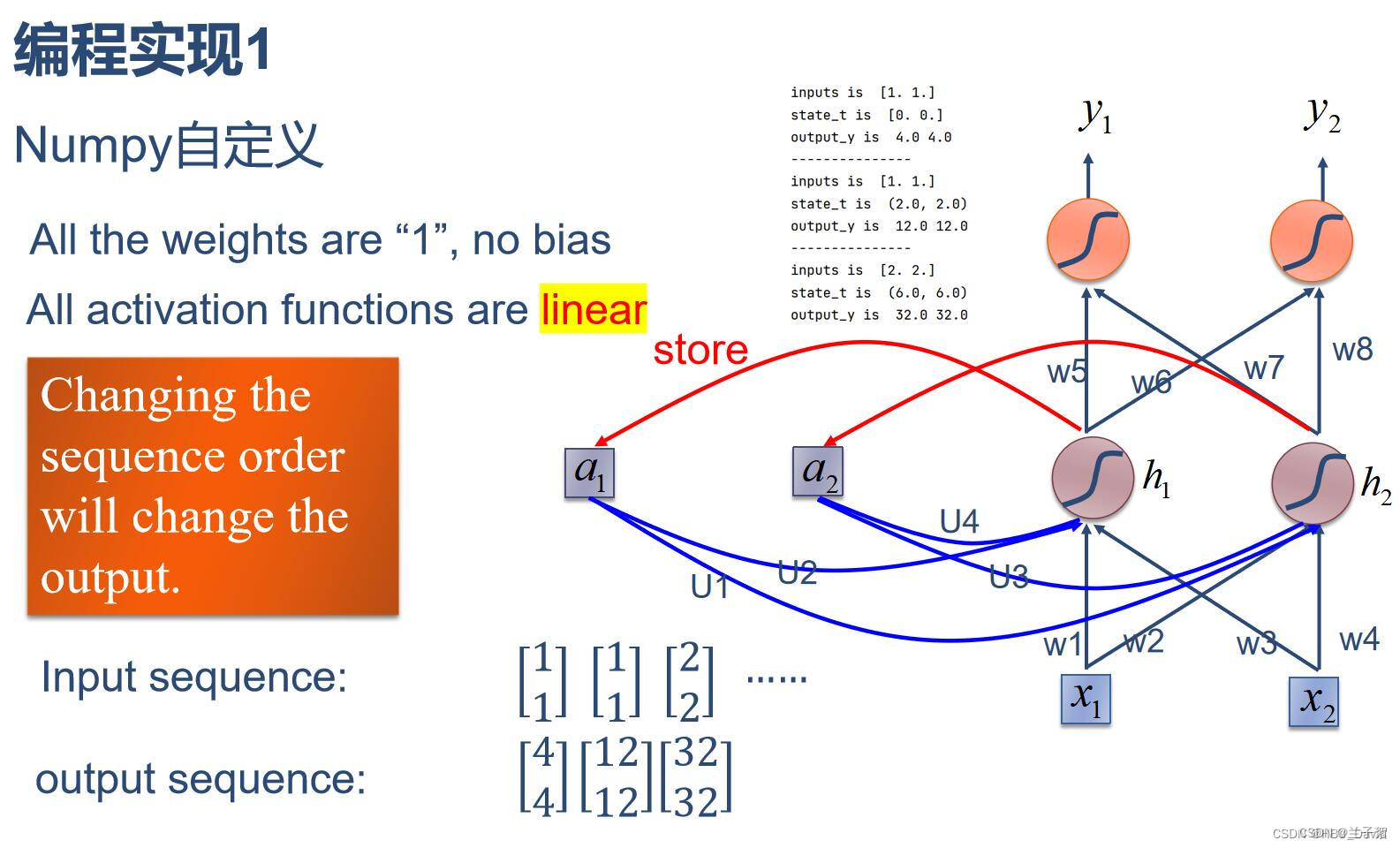

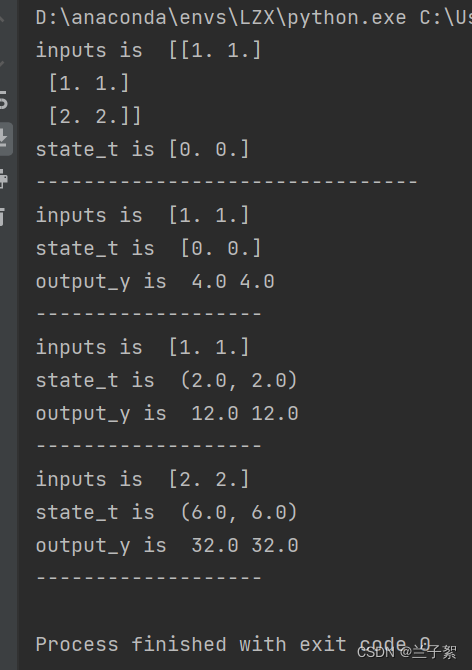

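(1) Using NumPy

```python
import numpy as np

inputs = np.array([[1., 1.],
                   [1., 1.],
                   [2., 2.]])  # input sequence
print('inputs is ', inputs)

state_t = np.zeros(2, )  # initialize the memory (hidden state)
print('state_t is', state_t)

# all weights are set to 1 so the results can be checked by hand
w1, w2, w3, w4, w5, w6, w7, w8 = 1., 1., 1., 1., 1., 1., 1., 1.
U1, U2, U3, U4 = 1., 1., 1., 1.
print('--------------------------------')

for input_t in inputs:
    print('inputs is ', input_t)
    print('state_t is ', state_t)
    # hidden units: weighted input plus weighted previous state (no activation)
    in_h1 = np.dot([w1, w3], input_t) + np.dot([U2, U4], state_t)
    in_h2 = np.dot([w2, w4], input_t) + np.dot([U1, U3], state_t)
    state_t = in_h1, in_h2  # store the new hidden state for the next step
    # output layer
    output_y1 = np.dot([w5, w7], [in_h1, in_h2])
    output_y2 = np.dot([w6, w8], [in_h1, in_h2])
    print('output_y is ', output_y1, output_y2)
    print('-------------------')
```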

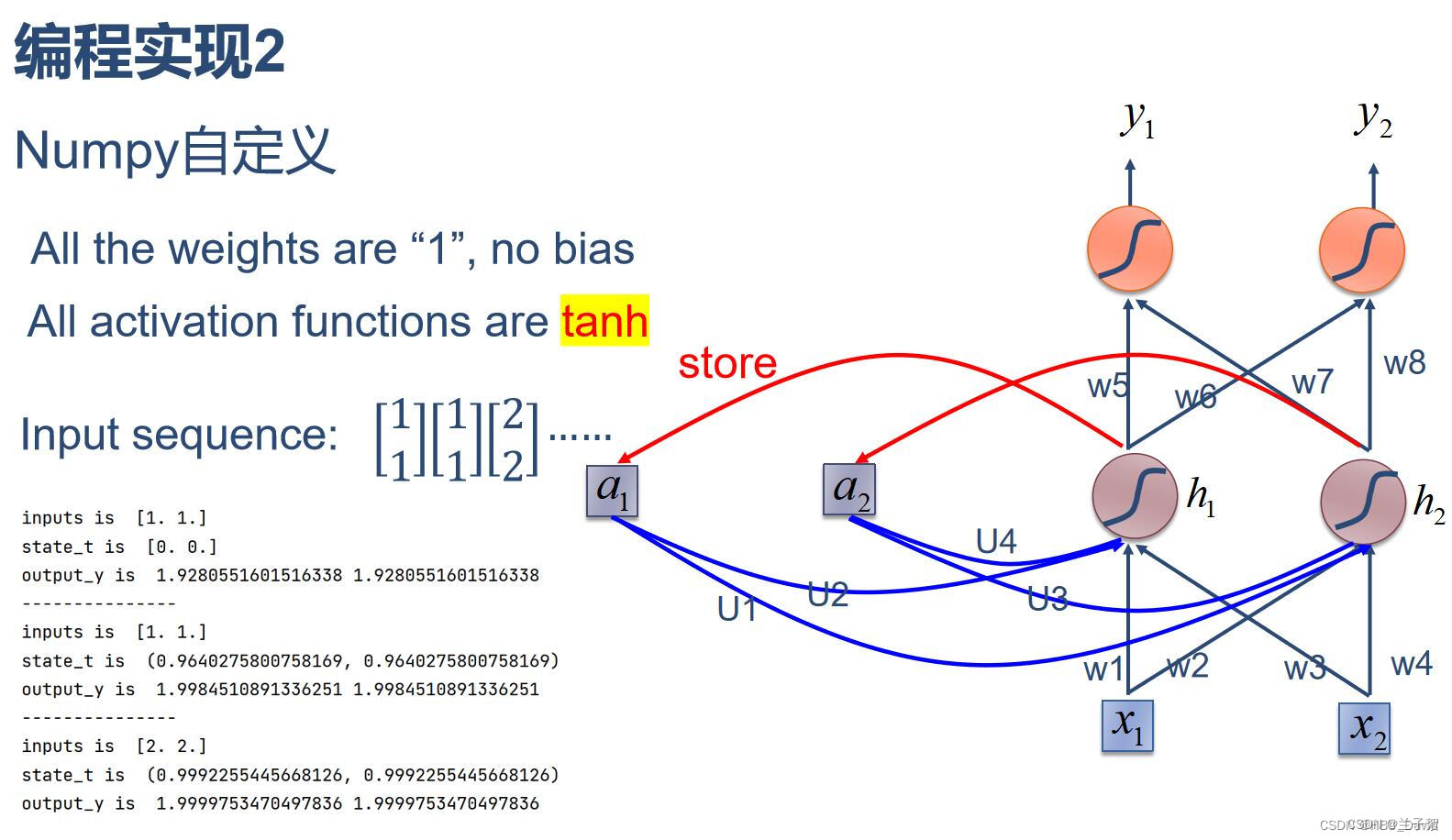

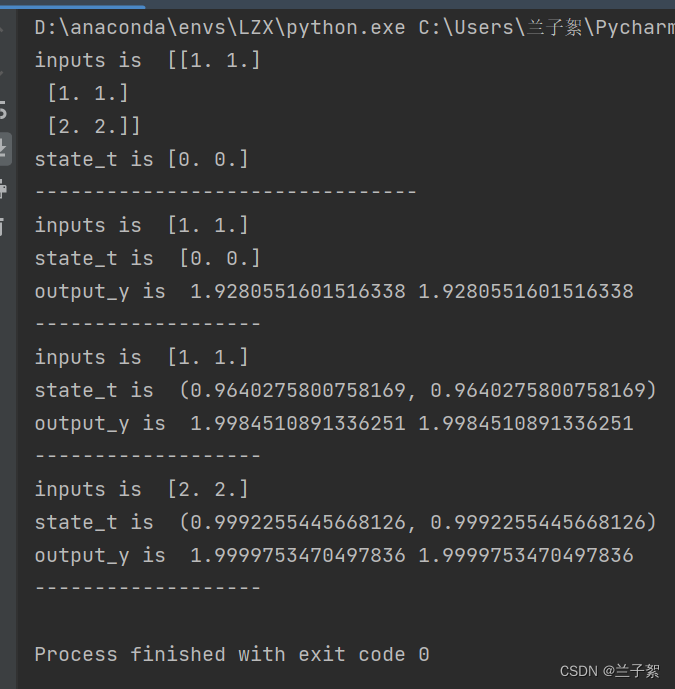
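The per-unit dot products above can also be written as three matrix products. The following is a minimal sketch, assuming the weights are gathered into matrices named `W` (input to hidden), `U` (hidden to hidden) and `V` (hidden to output). These names are illustrative and are not taken from the figures; the all-ones values simply mirror the example above.

```python
import numpy as np

inputs = np.array([[1., 1.], [1., 1.], [2., 2.]])  # same input sequence as above
W = np.ones((2, 2))  # input-to-hidden weights (assumed all ones, as above)
U = np.ones((2, 2))  # hidden-to-hidden weights
V = np.ones((2, 2))  # hidden-to-output weights

state = np.zeros(2)  # initial hidden state
outputs = []
for x_t in inputs:
    state = W @ x_t + U @ state  # linear recurrence, no activation
    outputs.append(V @ state)
outputs = np.array(outputs)
print(outputs)  # rows: [4. 4.], [12. 12.], [32. 32.]
```

With all weights equal to 1 the two hidden units are identical, which is why both output columns match.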

(2) Building on (1), add the tanh activation function

```python
import numpy as np

inputs = np.array([[1., 1.],
                   [1., 1.],
                   [2., 2.]])  # input sequence
print('inputs is ', inputs)

state_t = np.zeros(2, )  # initialize the memory (hidden state)
print('state_t is', state_t)

w1, w2, w3, w4, w5, w6, w7, w8 = 1., 1., 1., 1., 1., 1., 1., 1.
U1, U2, U3, U4 = 1., 1., 1., 1.
print('--------------------------------')

for input_t in inputs:
    print('inputs is ', input_t)
    print('state_t is ', state_t)
    # same recurrence as in (1), now passed through tanh
    in_h1 = np.tanh(np.dot([w1, w3], input_t) + np.dot([U2, U4], state_t))
    in_h2 = np.tanh(np.dot([w2, w4], input_t) + np.dot([U1, U3], state_t))
    state_t = in_h1, in_h2  # store the new hidden state for the next step
    output_y1 = np.dot([w5, w7], [in_h1, in_h2])
    output_y2 = np.dot([w6, w8], [in_h1, in_h2])
    print('output_y is ', output_y1, output_y2)
    print('-------------------')
```

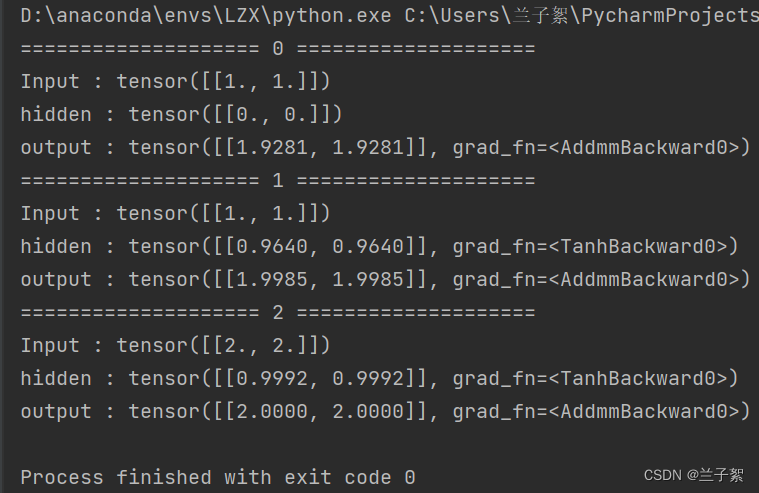
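One effect of the activation can be checked numerically: tanh keeps every hidden value inside (-1, 1), whereas the linear version in (1) grows at each step. The sketch below assumes the same all-ones weights; the helper name `run_srn` is made up for this illustration.

```python
import numpy as np

def run_srn(inputs, activation):
    """Run the 2-unit SRN with all-ones weights and return every hidden state."""
    W = np.ones((2, 2))  # input-to-hidden weights
    U = np.ones((2, 2))  # hidden-to-hidden weights
    state = np.zeros(2)
    states = []
    for x_t in inputs:
        state = activation(W @ x_t + U @ state)
        states.append(state)
    return np.array(states)

inputs = np.array([[1., 1.], [1., 1.], [2., 2.]])
linear_states = run_srn(inputs, lambda z: z)  # no activation, as in (1)
tanh_states = run_srn(inputs, np.tanh)        # tanh activation, as in (2)

print('linear:', linear_states[:, 0])  # 2, 6, 16
print('tanh  :', tanh_states[:, 0])    # every value stays below 1
```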

(3) Using nn.RNNCell

```python
import torch

batch_size = 1
seq_len = 3      # sequence length
input_size = 2   # dimension of each input vector
hidden_size = 2  # dimension of the hidden layer
output_size = 2  # dimension of the output layer

cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)

# initialize parameters: weights to 1, biases to 0
for name, param in cell.named_parameters():
    if name.startswith("weight"):
        torch.nn.init.ones_(param)
    else:
        torch.nn.init.zeros_(param)

# linear output layer
liner = torch.nn.Linear(hidden_size, output_size)
liner.weight.data = torch.Tensor([[1, 1], [1, 1]])
liner.bias.data = torch.Tensor([0, 0])

# shape: [seq_len, batch_size, input_size]
seq = torch.Tensor([[[1, 1]],
                    [[1, 1]],
                    [[2, 2]]])
hidden = torch.zeros(batch_size, hidden_size)
output = torch.zeros(batch_size, output_size)

for idx, input in enumerate(seq):
    print('=' * 20, idx, '=' * 20)
    print('Input :', input)
    print('hidden :', hidden)
    hidden = cell(input, hidden)  # one time step; returns the new hidden state
    output = liner(hidden)
    print('output :', output)
```

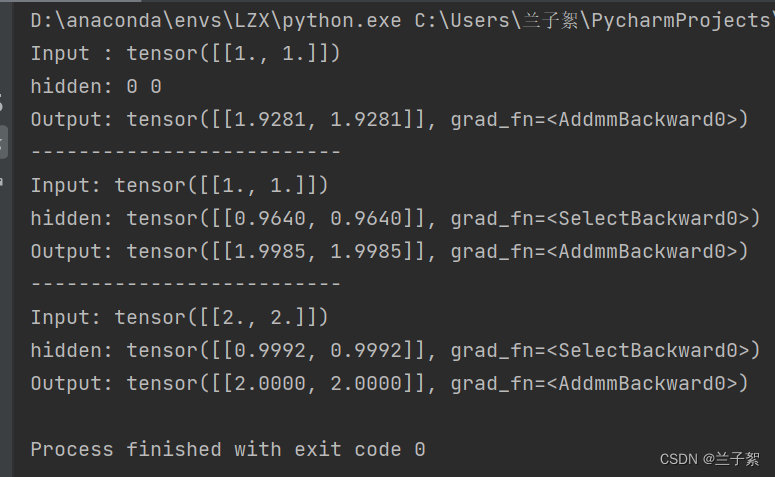

(4) Using nn.RNN

```python
import torch

batch_size = 1
seq_len = 3      # sequence length
input_size = 2   # dimension of each input vector
hidden_size = 2  # dimension of the hidden layer
output_size = 2  # dimension of the output layer
num_layers = 1

cell = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=num_layers)

# initialize parameters: weights to 1, biases to 0
for name, param in cell.named_parameters():
    if name.startswith("weight"):
        torch.nn.init.ones_(param)
    else:
        torch.nn.init.zeros_(param)

# linear output layer
liner = torch.nn.Linear(hidden_size, output_size)
liner.weight.data = torch.Tensor([[1, 1], [1, 1]])
liner.bias.data = torch.Tensor([0, 0])

# shape: [seq_len, batch_size, input_size]
inputs = torch.Tensor([[[1, 1]],
                       [[1, 1]],
                       [[2, 2]]])
hidden = torch.zeros(num_layers, batch_size, hidden_size)

# out holds the hidden state at every time step; hidden is the final state
out, hidden = cell(inputs, hidden)

print('Input :', inputs[0])
print('hidden:', 0, 0)
print('Output:', liner(out[0]))
print('--------------------------')
print('Input:', inputs[1])
print('hidden:', out[0])
print('Output:', liner(out[1]))
print('--------------------------')
print('Input:', inputs[2])
print('hidden:', out[1])
print('Output:', liner(out[2]))
```

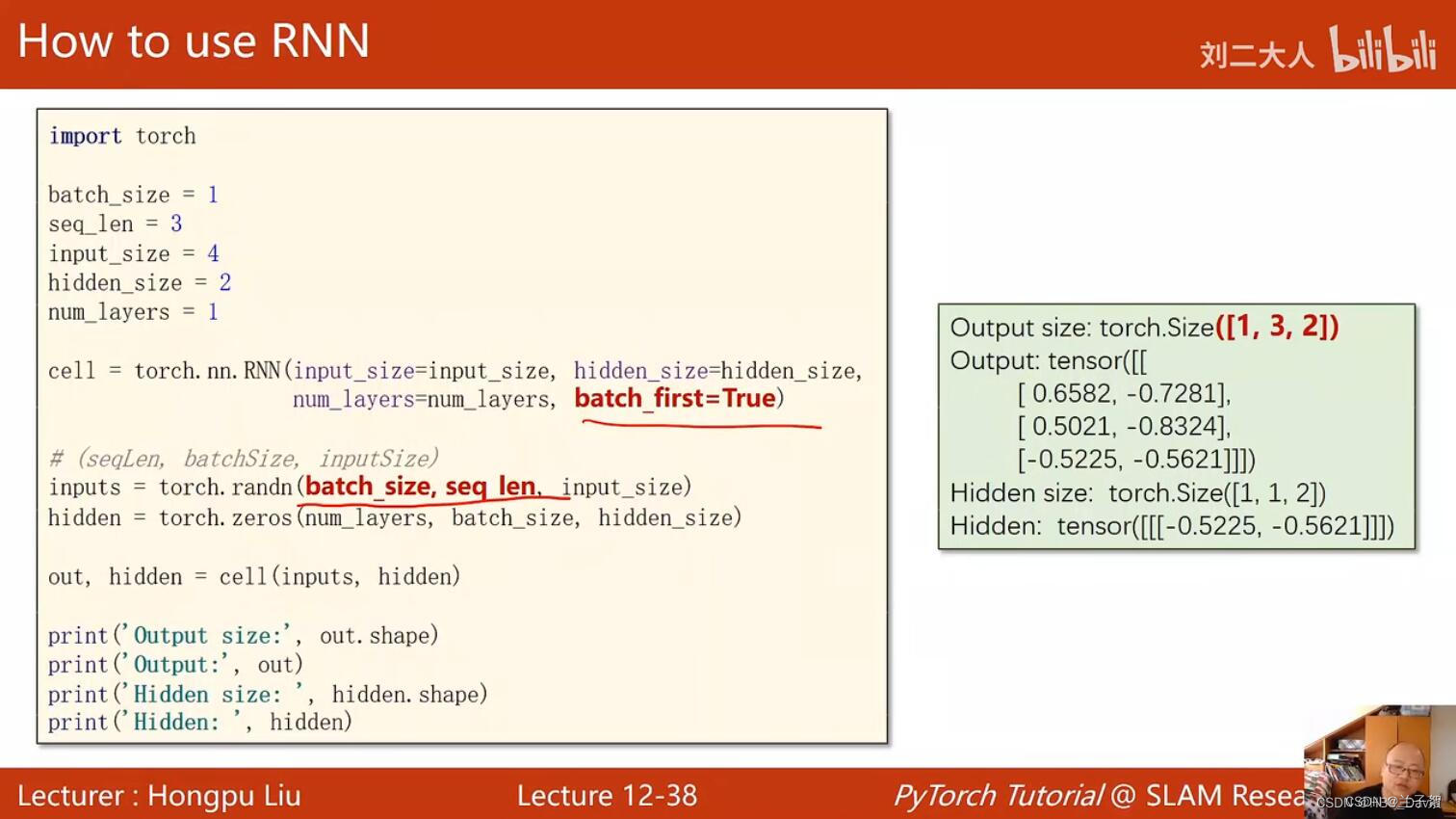

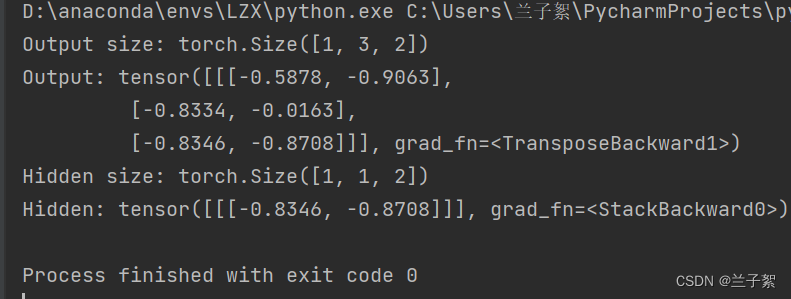

2. Implementing "sequence-to-sequence"

```python
import torch

batch_size = 1
seq_len = 3
input_size = 4
hidden_size = 2
num_layers = 1

# batch_first=True: inputs and outputs are [batch_size, seq_len, feature]
cell = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=num_layers, batch_first=True)

inputs = torch.randn(batch_size, seq_len, input_size)
hidden = torch.zeros(num_layers, batch_size, hidden_size)

out, hidden = cell(inputs, hidden)

print('Output size:', out.shape)
print('Output:', out)
print('Hidden size:', hidden.shape)
print('Hidden:', hidden)
```

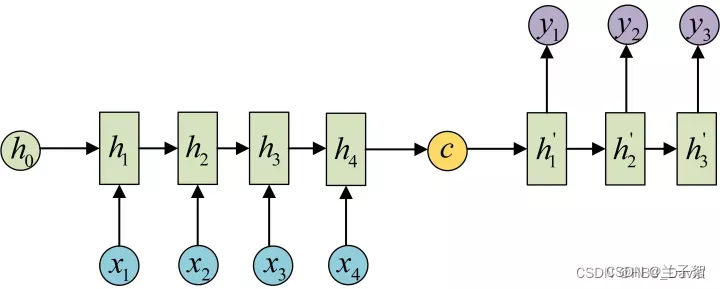

3. A simple "encoder-decoder" implementation

```python
# code by Tae Hwan Jung(Jeff Jung) @graykode, modify by wmathor
import torch
import numpy as np
import torch.nn as nn
import torch.utils.data as Data

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

# S: symbol that marks the start of the decoder input
# E: symbol that marks the end of the encoder input and of the decoder output
# ?: symbol used to pad sequences that are shorter than n_step
letter = [c for c in 'SE?abcdefghijklmnopqrstuvwxyz']
letter2idx = {n: i for i, n in enumerate(letter)}

seq_data = [['man', 'women'], ['black', 'white'], ['king', 'queen'], ['girl', 'boy'], ['up', 'down'], ['high', 'low']]

# Seq2Seq Parameter
n_step = max([max(len(i), len(j)) for i, j in seq_data])  # max_len(=5)
n_hidden = 128
n_class = len(letter2idx)  # classification problem
batch_size = 3

def make_data(seq_data):
    enc_input_all, dec_input_all, dec_output_all = [], [], []

    for seq in seq_data:
        for i in range(2):
            seq[i] = seq[i] + '?' * (n_step - len(seq[i]))  # 'man??', 'women'

        enc_input = [letter2idx[n] for n in (seq[0] + 'E')]   # ['m', 'a', 'n', '?', '?', 'E']
        dec_input = [letter2idx[n] for n in ('S' + seq[1])]   # ['S', 'w', 'o', 'm', 'e', 'n']
        dec_output = [letter2idx[n] for n in (seq[1] + 'E')]  # ['w', 'o', 'm', 'e', 'n', 'E']

        enc_input_all.append(np.eye(n_class)[enc_input])
        dec_input_all.append(np.eye(n_class)[dec_input])
        dec_output_all.append(dec_output)  # not one-hot

    # make tensor (stack the arrays first to avoid a slow list-of-arrays conversion)
    return torch.Tensor(np.array(enc_input_all)), torch.Tensor(np.array(dec_input_all)), torch.LongTensor(dec_output_all)

'''
enc_input_all: [6, n_step+1 (because of 'E'), n_class]
dec_input_all: [6, n_step+1 (because of 'S'), n_class]
dec_output_all: [6, n_step+1 (because of 'E')]
'''
enc_input_all, dec_input_all, dec_output_all = make_data(seq_data)

class TranslateDataSet(Data.Dataset):
    def __init__(self, enc_input_all, dec_input_all, dec_output_all):
        self.enc_input_all = enc_input_all
        self.dec_input_all = dec_input_all
        self.dec_output_all = dec_output_all

    def __len__(self):  # return dataset size
        return len(self.enc_input_all)

    def __getitem__(self, idx):
        return self.enc_input_all[idx], self.dec_input_all[idx], self.dec_output_all[idx]

loader = Data.DataLoader(TranslateDataSet(enc_input_all, dec_input_all, dec_output_all), batch_size, True)

# Model
class Seq2Seq(nn.Module):
    def __init__(self):
        super(Seq2Seq, self).__init__()
        # note: with a single layer, dropout has no effect and PyTorch emits a warning
        self.encoder = nn.RNN(input_size=n_class, hidden_size=n_hidden, dropout=0.5)  # encoder
        self.decoder = nn.RNN(input_size=n_class, hidden_size=n_hidden, dropout=0.5)  # decoder
        self.fc = nn.Linear(n_hidden, n_class)

    def forward(self, enc_input, enc_hidden, dec_input):
        # enc_input(=input_batch): [batch_size, n_step+1, n_class]
        # dec_input(=output_batch): [batch_size, n_step+1, n_class]
        enc_input = enc_input.transpose(0, 1)  # enc_input: [n_step+1, batch_size, n_class]
        dec_input = dec_input.transpose(0, 1)  # dec_input: [n_step+1, batch_size, n_class]

        # h_t : [num_layers(=1) * num_directions(=1), batch_size, n_hidden]
        _, h_t = self.encoder(enc_input, enc_hidden)
        # outputs : [n_step+1, batch_size, num_directions(=1) * n_hidden(=128)]
        outputs, _ = self.decoder(dec_input, h_t)

        model = self.fc(outputs)  # model : [n_step+1, batch_size, n_class]
        return model

model = Seq2Seq().to(device)
criterion = nn.CrossEntropyLoss().to(device)
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)
```

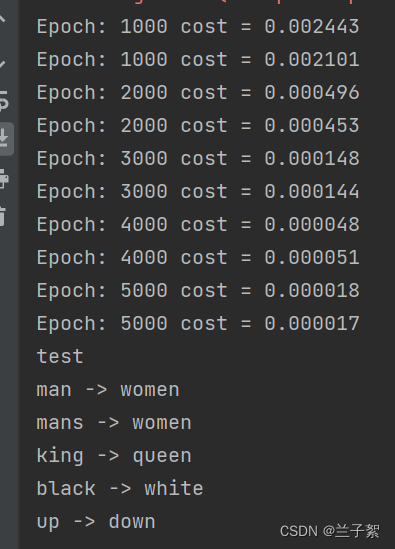
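To make the shapes produced by `make_data` concrete, the padding and one-hot encoding can be traced for a single pair. This is a standalone sketch that repeats only the vocabulary and `n_step` from the code above; the helper name `pad` is introduced here for illustration.

```python
import numpy as np

letter = list('SE?abcdefghijklmnopqrstuvwxyz')
letter2idx = {ch: i for i, ch in enumerate(letter)}
n_class = len(letter2idx)  # 29 symbols
n_step = 5                 # length of the longest word in seq_data

def pad(word):
    """Right-pad a word with '?' up to n_step characters."""
    return word + '?' * (n_step - len(word))

src, tgt = pad('man'), pad('women')
enc_idx = [letter2idx[c] for c in src + 'E']      # encoder input indices
dec_in_idx = [letter2idx[c] for c in 'S' + tgt]   # decoder input indices
dec_out_idx = [letter2idx[c] for c in tgt + 'E']  # decoder target indices
enc_onehot = np.eye(n_class)[enc_idx]             # one row of the identity per symbol

print(src + 'E', enc_idx)  # man??E [15, 3, 16, 2, 2, 1]
print(enc_onehot.shape)    # (6, 29)
```

The decoder input and the decoder target are the same word shifted by one position, which is what lets the decoder learn to predict the next character from the previous one.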

```python
for epoch in range(5000):
    for enc_input_batch, dec_input_batch, dec_output_batch in loader:
        # make hidden shape [num_layers * num_directions, batch_size, n_hidden]
        h_0 = torch.zeros(1, batch_size, n_hidden).to(device)

        (enc_input_batch, dec_input_batch, dec_output_batch) = (
            enc_input_batch.to(device), dec_input_batch.to(device), dec_output_batch.to(device))
        # enc_input_batch : [batch_size, n_step+1, n_class]
        # dec_input_batch : [batch_size, n_step+1, n_class]
        # dec_output_batch : [batch_size, n_step+1], not one-hot
        pred = model(enc_input_batch, h_0, dec_input_batch)
        # pred : [n_step+1, batch_size, n_class]
        pred = pred.transpose(0, 1)  # [batch_size, n_step+1(=6), n_class]
        loss = 0
        for i in range(len(dec_output_batch)):
            # pred[i] : [n_step+1, n_class]
            # dec_output_batch[i] : [n_step+1]
            loss += criterion(pred[i], dec_output_batch[i])
        if (epoch + 1) % 1000 == 0:
            print('Epoch:', '%04d' % (epoch + 1), 'cost =', '{:.6f}'.format(loss.item()))

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
```
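For one sample, `criterion(pred[i], dec_output_batch[i])` averages a softmax cross-entropy over the n_step+1 positions. The NumPy sketch below computes that quantity, assuming the default mean reduction of `nn.CrossEntropyLoss`. The function name `cross_entropy` and the toy logits are illustrative and are not part of the original code.

```python
import numpy as np

def cross_entropy(logits, targets):
    """Mean softmax cross-entropy for logits [steps, n_class] and integer targets [steps]."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # subtract the max for stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(targets)), targets].mean()

n_class = 29
targets = np.array([25, 17, 15, 7, 16, 1])  # 'women' + 'E' as class indices

uniform = np.zeros((6, n_class))            # an uninformative prediction
confident = np.full((6, n_class), -10.0)
confident[np.arange(6), targets] = 10.0     # almost all probability on the right class

print(cross_entropy(uniform, targets))    # log(29), about 3.37
print(cross_entropy(confident, targets))  # very close to 0
```

A loss near log(29) therefore means the model is still guessing uniformly over the 29 symbols.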

```python
# Test
def translate(word):
    enc_input, dec_input, _ = make_data([[word, '?' * n_step]])
    enc_input, dec_input = enc_input.to(device), dec_input.to(device)
    # make hidden shape [num_layers * num_directions, batch_size, n_hidden]
    hidden = torch.zeros(1, 1, n_hidden).to(device)
    output = model(enc_input, hidden, dec_input)
    # output : [n_step+1, batch_size, n_class]

    predict = output.data.max(2, keepdim=True)[1]  # select n_class dimension
    decoded = [letter[i] for i in predict]
    translated = ''.join(decoded[:decoded.index('E')])

    return translated.replace('?', '')

print('test')
print('man ->', translate('man'))
print('mans ->', translate('mans'))
print('king ->', translate('king'))
print('black ->', translate('black'))
print('up ->', translate('up'))
```

In the S2S model, RNNs serve as the basic building block of both the encoder and the decoder. As a recurrent network, an RNN can capture temporal dependencies in sequence data and store that information in its hidden state. In the encoder, the RNN converts the input sequence into a fixed-length vector that summarizes the input; in the decoder, the RNN decodes the target sequence from that vector.
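Returning to `translate`: its last lines turn predicted class indices into text by cutting at the first 'E' and dropping the '?' padding. The sketch below isolates that step in plain Python. The function name `indices_to_word` is hypothetical, and the guard for a missing 'E' is an addition here, since `list.index` raises `ValueError` when the symbol is absent.

```python
letter = list('SE?abcdefghijklmnopqrstuvwxyz')

def indices_to_word(indices):
    """Map predicted class indices to text, stopping at the first 'E' if there is one."""
    decoded = [letter[i] for i in indices]
    if 'E' in decoded:  # without this check, decoded.index('E') could raise ValueError
        decoded = decoded[:decoded.index('E')]
    return ''.join(decoded).replace('?', '')

print(indices_to_word([25, 17, 15, 7, 16, 1]))  # women
print(indices_to_word([4, 17, 27, 2, 2, 1]))    # boy
print(indices_to_word([23, 18, 2, 2, 2, 2]))    # up (no 'E' predicted)
```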

PyTorch implementation of Seq2Seq - mathor (wmathor.com)

NNDL Assignment 8: RNN - Simple Recurrent Network (CSDN blog)

4. A brief summary of nn.RNNCell and nn.RNN

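nn.RNNCell:

nn.RNNCell is a single recurrent unit that computes one time step. Like other modules such as nn.Linear and nn.ReLU, it can serve as a building block for a custom RNN layer. It takes the current input and the previous hidden state and returns only the new hidden state; any output layer, such as the nn.Linear used in (3), is applied separately.

nn.RNN:

nn.RNN is a general recurrent layer with short-term memory for processing sequence data. Unlike nn.RNNCell, which handles one time step per call, nn.RNN takes the whole input sequence, applies the same recurrent unit at every time step, and can stack several layers. It accepts the input tensor for the sequence and an optional initial hidden state, and returns the output tensor (the hidden state at every time step) together with the final hidden state. When finer control over each step is needed, the loop over time steps is written by hand with nn.RNNCell, as in (3); otherwise nn.RNN runs that loop internally, as in (4).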
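The relationship between the two can be mimicked in NumPy. This is only an analogy under simplifying assumptions (one layer, tanh, no biases), not PyTorch's actual implementation; `rnn_cell_step` and `rnn_layer` are names invented for the sketch.

```python
import numpy as np

def rnn_cell_step(x_t, h_prev, W_ih, W_hh):
    """One time step, like a cell: returns only the new hidden state."""
    return np.tanh(x_t @ W_ih.T + h_prev @ W_hh.T)

def rnn_layer(x, h0, W_ih, W_hh):
    """Whole sequence, like a layer: x is [seq_len, batch, input_size]."""
    h = h0
    out = []
    for x_t in x:  # the loop over time steps that a layer runs internally
        h = rnn_cell_step(x_t, h, W_ih, W_hh)
        out.append(h)
    return np.stack(out), h  # every hidden state, and the final one

W_ih = np.ones((2, 2))  # [hidden_size, input_size]
W_hh = np.ones((2, 2))  # [hidden_size, hidden_size]
x = np.array([[[1., 1.]], [[1., 1.]], [[2., 2.]]])  # [seq_len=3, batch=1, input_size=2]
h0 = np.zeros((1, 2))                                # [batch=1, hidden_size=2]

out, h_n = rnn_layer(x, h0, W_ih, W_hh)
print(out.shape, h_n.shape)  # (3, 1, 2) (1, 2)
```

In this sketch the last row of `out` equals `h_n`. In nn.RNN the returned hidden state carries one extra leading dimension for the number of layers, as the `hidden` shapes in (4) show.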

5. Thoughts on "sequence" and "sequence-to-sequence"

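- Sequence

A "sequence" is an ordered series of data elements. In natural language processing, a sequence usually means a series of words or characters that form a sentence or a text according to grammatical rules. For example, "我喜欢看电影" ("I like watching movies") can be viewed as a sequence of four words: "我", "喜欢", "看" and "电影".

- Sequence-to-sequence (S2S)

"Sequence-to-sequence" (S2S) is a family of deep learning models for tasks that convert one sequence into another. It was proposed in 2014, notably in work by researchers at Google, and has achieved strong results in areas such as machine translation.

S2S models usually adopt an encoder-decoder architecture. The encoder encodes the input sequence into a fixed-length vector, and the decoder decodes that vector into the output sequence. Many later variants add an attention mechanism between the two, which lets the decoder focus on the parts of the input sequence that are relevant to the current output position; the simple implementation in section 3 does not use attention.

S2S models can be used for many tasks, such as machine translation, text summarization and dialogue generation. In these tasks the input sequence is usually a piece of text, and the output sequence is another piece of text or some form of response to the input.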
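The encoder-decoder data flow described above can be sketched with untrained random weights. Everything here is an assumption made for illustration: the helper names `rnn` and `init_weights`, the small hidden size, and the omission of biases. Only the shapes and the hand-off of the hidden state are meaningful.

```python
import numpy as np

rng = np.random.default_rng(0)
n_class, n_hidden = 29, 8  # hidden size kept small for the sketch

def rnn(x, h, W_ih, W_hh):
    """Run a tanh RNN over x [steps, n_in]; return all states and the last one."""
    states = []
    for x_t in x:
        h = np.tanh(W_ih @ x_t + W_hh @ h)
        states.append(h)
    return np.array(states), h

def init_weights(n_in):
    """Small random, untrained weights for one RNN."""
    return rng.normal(0, 0.1, (n_hidden, n_in)), rng.normal(0, 0.1, (n_hidden, n_hidden))

enc_W_ih, enc_W_hh = init_weights(n_class)
dec_W_ih, dec_W_hh = init_weights(n_class)
W_out = rng.normal(0, 0.1, (n_class, n_hidden))

enc_input = np.eye(n_class)[[15, 3, 16, 2, 2, 1]]    # 'man??E' as one-hot rows
dec_input = np.eye(n_class)[[0, 25, 17, 15, 7, 16]]  # 'Swomen' as one-hot rows

# encoder: the final hidden state is the fixed-length summary of the input
_, context = rnn(enc_input, np.zeros(n_hidden), enc_W_ih, enc_W_hh)
# decoder: starts from that summary instead of a zero state
dec_states, _ = rnn(dec_input, context, dec_W_ih, dec_W_hh)
logits = dec_states @ W_out.T  # one score per symbol at every output position

print(context.shape, logits.shape)  # (8,) (6, 29)
```

The summary vector has the same size regardless of how long the input sequence is, which is the sense in which it is "fixed-length".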

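6. Summary of this week's lectures and assignment, with reflections

This assignment mainly involved reading, understanding and reproducing code. I became familiar with how nn.RNNCell() and nn.RNN() work and compared the two. I still do not fully understand the implementation of the S2S model and need to explore it further.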
