Reference

Trial run

Installing the environment

(yolo) ┌──(venv)─(***㉿kali)-[~/myproject/yolov5]

└─$ pip install -qr requirements.txt

Running detection

- Command: python detect.py --weights yolov5s.pt --img 640 --conf 0.25 --source data/images

(yolo) ┌──(venv)─(***㉿kali)-[~/myproject/yolov5]

└─$ python detect.py --weights yolov5s.pt --img 640 --conf 0.25 --source data/images

detect: weights=['yolov5s.pt'], source=data/images, data=data/coco128.yaml, imgsz=[640, 640], conf_thres=0.25, iou_thres=0.45, max_det=1000, device=, view_img=False, save_txt=False, save_conf=False, save_crop=False, nosave=False, classes=None, agnostic_nms=False, augment=False, visualize=False, update=False, project=runs/detect, name=exp, exist_ok=False, line_thickness=3, hide_labels=False, hide_conf=False, half=False, dnn=False

YOLOv5 🚀 2022-5-20 torch 1.12.1+cu102 CPU

Downloading https:

ERROR: Remote end closed connection without response

Re-attempting https:

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 196 100 196 0 0 265 0 --:--:-- --:--:-- --:--:-- 265

ERROR: Downloaded file 'yolov5s.pt' does not exist or size is < min_bytes=100000.0

yolov5s.pt missing, try downloading from https:

Downloading https:

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 14.1M/14.1M [00:04<00:00, 3.65MB/s]

Fusing layers...

YOLOv5s summary: 213 layers, 7225885 parameters, 0 gradients

image 1/2 /homemyproject/yolov5/data/images/bus.jpg: 640x480 4 persons, 1 bus, Done. (0.242s)

image 2/2 /homemyproject/yolov5/data/images/zidane.jpg: 384x640 2 persons, 2 ties, Done. (0.187s)

Speed: 1.8ms pre-process, 214.5ms inference, 1.0ms NMS per image at shape (1, 3, 640, 640)

Results saved to runs/detect/exp
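The first download attempt in the log above transferred only 196 bytes, which is why it was rejected with the min_bytes=100000.0 message before the fallback download succeeded. A minimal sketch of that kind of size check is shown here; is_valid_download is a hypothetical helper written for illustration and mirrors only the condition named in the log message, not YOLOv5's own code.

```python
import tempfile
from pathlib import Path


def is_valid_download(file, min_bytes=1e5):
    """Return True if `file` exists and is at least `min_bytes` bytes long.

    Hypothetical helper: it mirrors the condition named in the log message,
    not the actual YOLOv5 implementation.
    """
    file = Path(file)
    return file.exists() and file.stat().st_size >= min_bytes


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        stub = Path(tmp) / "yolov5s.pt"
        stub.write_bytes(b"x" * 196)        # same size as the failed transfer in the log
        print(is_valid_download(stub))      # too small, so the check fails
        stub.write_bytes(b"x" * 200_000)    # stand-in for a complete file
        print(is_valid_download(stub))
```

A tiny response like the 196-byte one is usually an error page rather than a weights file, so checking the size before loading avoids a confusing failure later in torch.load.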

Explanation

Imports

import argparse
……
import torch
import torch.backends.cudnn as cudnn

FILE = Path(__file__).resolve()  # e.g. C:\Users\zkh\Desktop\yolov5\detect.py; __file__ is the path of this script, .resolve() makes it absolute
ROOT = FILE.parents[0]  # parent directory, i.e. the YOLOv5 root directory, e.g. C:\Users\zkh\Desktop\yolov5
if str(ROOT) not in sys.path:  # sys.path is the list of module search paths; adding ROOT makes the project's own packages importable
    sys.path.append(str(ROOT))  # add ROOT to PATH
ROOT = Path(os.path.relpath(ROOT, Path.cwd()))  # convert the absolute path to a path relative to the current working directory

from models.common import DetectMultiBackend

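Path.resolve() turns a path into a normalized absolute path: a relative path is interpreted against the current working directory, ".." components are collapsed, and symbolic links are followed. The path may point to a file rather than a directory, and with the default strict=False it does not have to exist.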
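The path handling above can be tried outside YOLOv5 with the standard library alone. The sketch below assumes a script name of detect.py that does not need to exist; project_root is a hypothetical helper name used only for this illustration.

```python
import os
import sys
from pathlib import Path


def project_root(script_path):
    """Return (absolute file path, root directory, root relative to the CWD)."""
    file = Path(script_path).resolve()   # absolute path; the file need not exist
    root = file.parents[0]               # directory that contains the script
    rel_root = Path(os.path.relpath(root, Path.cwd()))  # same directory, relative form
    return file, root, rel_root


if __name__ == "__main__":
    file, root, rel_root = project_root("detect.py")  # hypothetical script name
    if str(root) not in sys.path:
        sys.path.append(str(root))       # make sibling packages importable
    print(file, root, rel_root)
```

Keeping ROOT in relative form is what makes defaults such as runs/detect print as short relative paths in the log.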

Main entry point

if __name__ == "__main__":
    opt = parse_opt()  # parse the command-line arguments
    main(opt)  # run the main function

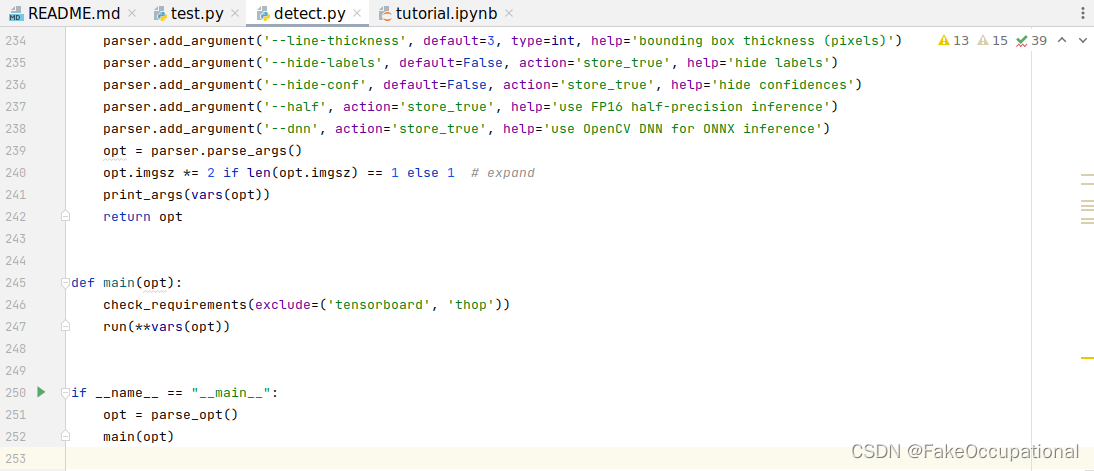

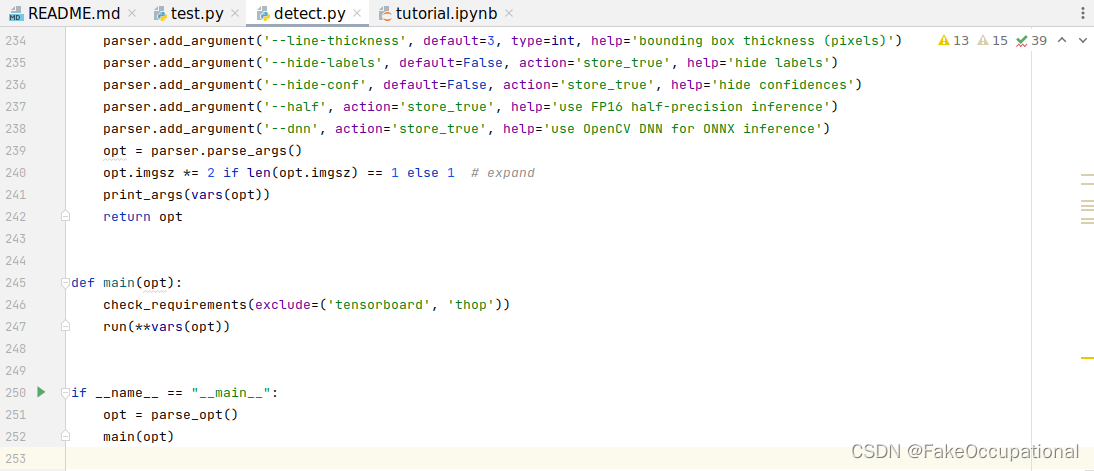

Parsing arguments

def parse_opt():
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', nargs='+', type=str, default=ROOT / 'yolov5s.pt', help='model path(s)')
    parser.add_argument('--source', type=str, default=ROOT / 'data/images', help='file/dir/URL/glob, 0 for webcam')
    parser.add_argument('--data', type=str, default=ROOT / 'data/coco128.yaml', help='(optional) dataset.yaml path')
    parser.add_argument('--imgsz', '--img', '--img-size', nargs='+', type=int, default=[640], help='inference size h,w')
    parser.add_argument('--conf-thres', type=float, default=0.25, help='confidence threshold')
    parser.add_argument('--iou-thres', type=float, default=0.45, help='NMS IoU threshold')
    parser.add_argument('--max-det', type=int, default=1000, help='maximum detections per image')
    parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    parser.add_argument('--view-img', action='store_true', help='show results')
    parser.add_argument('--save-txt', action='store_true', help='save results to *.txt')
    parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels')
    parser.add_argument('--save-crop', action='store_true', help='save cropped prediction boxes')
    parser.add_argument('--nosave', action='store_true', help='do not save images/videos')
    parser.add_argument('--classes', nargs='+', type=int, help='filter by class: --classes 0, or --classes 0 2 3')
    parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS')
    parser.add_argument('--augment', action='store_true', help='augmented inference')
    parser.add_argument('--visualize', action='store_true', help='visualize features')
    parser.add_argument('--update', action='store_true', help='update all models')
    parser.add_argument('--project', default=ROOT / 'runs/detect', help='save results to project/name')
    parser.add_argument('--name', default='exp', help='save results to project/name')
    parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
    parser.add_argument('--line-thickness', default=3, type=int, help='bounding box thickness (pixels)')
    parser.add_argument('--hide-labels', default=False, action='store_true', help='hide labels')
    parser.add_argument('--hide-conf', default=False, action='store_true', help='hide confidences')
    parser.add_argument('--half', action='store_true', help='use FP16 half-precision inference')
    parser.add_argument('--dnn', action='store_true', help='use OpenCV DNN for ONNX inference')
    opt = parser.parse_args()
    opt.imgsz *= 2 if len(opt.imgsz) == 1 else 1  # expand: if only one size was given, repeat it so [640] becomes [640, 640]
    print_args(vars(opt))  # print the argument values
    return opt

def main(opt):
    check_requirements(exclude=('tensorboard', 'thop'))  # check that the current environment satisfies requirements.txt
    run(**vars(opt))
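Three argparse behaviours explain how the command line from the trial run reaches run(): --img is an explicit alias of --imgsz, --conf is accepted because argparse matches unambiguous prefixes of long options (here --conf-thres), and hyphens in option names become underscores in the attribute names, so vars(opt) can be unpacked directly into keyword arguments. The sketch below uses a reduced subset of the options; parse_demo and run_demo are hypothetical stand-ins, not part of YOLOv5.

```python
import argparse


def parse_demo(argv):
    """Parse a reduced, illustrative subset of detect.py's options."""
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', nargs='+', type=str, default='yolov5s.pt')
    parser.add_argument('--imgsz', '--img', '--img-size', nargs='+', type=int, default=[640])
    parser.add_argument('--conf-thres', type=float, default=0.25)
    parser.add_argument('--save-txt', action='store_true')
    opt = parser.parse_args(argv)
    opt.imgsz *= 2 if len(opt.imgsz) == 1 else 1  # [640] -> [640, 640]
    return opt


def run_demo(weights, imgsz, conf_thres, save_txt):
    """Stand-in for run(): echo the keyword arguments it receives."""
    return {'weights': weights, 'imgsz': imgsz, 'conf_thres': conf_thres, 'save_txt': save_txt}


if __name__ == "__main__":
    opt = parse_demo(['--weights', 'yolov5s.pt', '--img', '640', '--conf', '0.25'])
    print(run_demo(**vars(opt)))  # Namespace -> dict -> keyword arguments
```

Because --weights uses nargs='+', a single weights file still arrives as a one-element list, which matches weights=['yolov5s.pt'] in the log of the trial run.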

The run function

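| Code | Purpose |
|---|---|
| source = str(source) | Process the detection source |
| increment_path | Set up the folder where results are saved |
| model = DetectMultiBackend(weights, device=device, dnn=dnn, data=data, fp16=half) | Download the weights if needed and load the model; determine the class names, whether half precision is used, and the model stride |
| img = letterbox(img0,…… | The dataloader loads the data and defines how images are read; letterbox resizes with padding to the target size |
| im = im[None] # expand for batch dim, torch.Size([1, 3, 640, 480]) | Add the batch dimension |
| pred = model(im, augment=augment, visualize=visualize) # torch.Size([1, 18900, 85]) | Run inference; 85 = 4 box values (center x, center y, width, height) + 1 confidence + 80 classes |
| NMS | Post-process with non-maximum suppression; each kept row is [x1, y1, x2, y2, confidence, class], e.g. [6.72000e+02, 3.95000e+02, 8.10000e+02, 8.78000e+02, 8.96172e-01, 0.00000e+00], where class 0 is person |
| save_path = str(save_dir / p.name) | Set the save paths (annotated image, txt labels, optional crops) |
| Annotator | Helper for drawing detection boxes |
| LOGGER.info(f'Speed:…… | Print the results |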
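The shapes quoted in the table can be reproduced with a little arithmetic. The sketch below is a simplified shape-only version of letterbox (it computes sizes and resizes no pixels) and rests on several assumptions that the article does not state: bus.jpg is taken to be 810x1080 and zidane.jpg 1280x720 (width x height), and the model is taken to predict at strides 8, 16 and 32 with 3 anchors per grid cell. letterbox_shape and num_predictions are hypothetical helper names.

```python
def letterbox_shape(h, w, new_size=640, stride=32):
    """Network input (height, width) after an aspect-preserving resize plus
    the minimal padding that makes both sides multiples of `stride`."""
    r = min(new_size / h, new_size / w)        # scale so the longer side fits new_size
    new_h, new_w = round(h * r), round(w * r)  # resized, unpadded size
    pad_h = (new_size - new_h) % stride        # minimal padding to a stride multiple
    pad_w = (new_size - new_w) % stride
    return new_h + pad_h, new_w + pad_w


def num_predictions(h, w, strides=(8, 16, 32), anchors_per_cell=3):
    """Rows in the raw prediction tensor: one per anchor, per grid cell, per scale."""
    return sum((h // s) * (w // s) * anchors_per_cell for s in strides)


if __name__ == "__main__":
    # Image sizes are assumptions; they are not given in the article.
    for name, (h, w) in {"bus.jpg": (1080, 810), "zidane.jpg": (720, 1280)}.items():
        shape = letterbox_shape(h, w)
        print(name, shape, num_predictions(*shape))
```

Under these assumptions the bus image becomes a 640x480 input, and (80*60 + 40*30 + 20*15) * 3 = 18900 candidate boxes, which agrees with torch.Size([1, 18900, 85]) in the table and with the 640x480 and 384x640 sizes in the log.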

@torch.no_grad()
def run(
        weights=ROOT / 'yolov5s.pt',  # model.pt path(s)
        source=ROOT / 'data/images',  # file/dir/URL/glob, 0 for webcam
        data=ROOT / 'data/coco128.yaml',  # dataset.yaml path, used to point at your own dataset
        imgsz=(640, 640),  # inference size (height, width)
        conf_thres=0.25,  # confidence threshold
        iou_thres=0.45,  # NMS IOU threshold
        max_det=1000,  # maximum detections per image
        device='',  # cuda device, i.e. 0 or 0,1,2,3 or cpu
        view_img=False,  # show results
        save_txt=False,  # save results to *.txt
        save_conf=False,  # save confidences in --save-txt labels
        save_crop=False,  # save cropped prediction boxes
        nosave=False,  # do not save images/videos
        classes=None,  # filter by class: --class 0, or --class 0 2 3
        agnostic_nms=False,  # class-agnostic NMS
        augment=False,  # augmented inference
        visualize=False,  # visualize features
        update=False,  # update all models
        project=ROOT / 'runs/detect',  # save results to project/name
        name='exp',  # save results to project/name
        exist_ok=False,  # existing project/name ok, do not increment
        line_thickness=3,  # bounding box thickness (pixels)
        hide_labels=False,  # hide labels
        hide_conf=False,  # hide confidences
        half=False,  # use FP16 half-precision inference
        dnn=False,  # use OpenCV DNN for ONNX inference
):

    source = str(source)  # 'python detect.py --source data\\images\\bus.jpg'
    save_img = not nosave and not source.endswith('.txt')  # save inference images
    is_file = Path(source).suffix[1:] in (IMG_FORMATS + VID_FORMATS)
    is_url = source.lower().startswith(('rtsp://', 'rtmp://', 'http://', 'https://'))
    webcam = source.isnumeric() or source.endswith('.txt') or (is_url and not is_file)
    if is_url and is_file:
        source = check_file(source)  # download

    # Directories: set up the folder where results are saved
    save_dir = increment_path(Path(project) / name, exist_ok=exist_ok)  # increment run, e.g. runs\\detect\\exp3
    (save_dir / 'labels' if save_txt else save_dir).mkdir(parents=True, exist_ok=True)  # make dir (a labels subfolder when txt output is requested)

    # Load model
    device = select_device(device)
    model = DetectMultiBackend(weights, device=device, dnn=dnn, data=data, fp16=half)  # load the model through the multi-backend wrapper
    stride, names, pt = model.stride, model.names, model.pt
    imgsz = check_img_size(imgsz, s=stride)  # check image size

    # Dataloader
    if webcam:
        view_img = check_imshow()
        cudnn.benchmark = True  # set True to speed up constant image size inference
        dataset = LoadStreams(source, img_size=imgsz, stride=stride, auto=pt)
        bs = len(dataset)  # batch_size
    else:
        dataset = LoadImages(source, img_size=imgsz, stride=stride, auto=pt)
        bs = 1  # batch_size
    vid_path, vid_writer = [None] * bs, [None] * bs

    # Run inference
    model.warmup(imgsz=(1 if pt else bs, 3, *imgsz))  # warmup
    dt, seen = [0.0, 0.0, 0.0], 0
    for path, im, im0s, vid_cap, s in dataset:  # e.g. path = "C:\\Users\\zkh\\Desktop\\yolov5\\data\\images\\bus.jpg"
        t1 = time_sync()
        im = torch.from_numpy(im).to(device)  # torch.Size([3, 640, 480])
        im = im.half() if model.fp16 else im.float()  # uint8 to fp16/32
        im /= 255  # 0 - 255 to 0.0 - 1.0
        if len(im.shape) == 3:
            im = im[None]  # expand for batch dim, torch.Size([1, 3, 640, 480])
        t2 = time_sync()
        dt[0] += t2 - t1

        # Inference
        visualize = increment_path(save_dir / Path(path).stem, mkdir=True) if visualize else False
        pred = model(im, augment=augment, visualize=visualize)  # torch.Size([1, 18900, 85])
        t3 = time_sync()
        dt[1] += t3 - t2

        # NMS
        pred = non_max_suppression(pred, conf_thres, iou_thres, classes, agnostic_nms, max_det=max_det)  # 1 image x 5 detections x 6 values, e.g. [6.72000e+02, 3.95000e+02, 8.10000e+02, 8.78000e+02, 8.96172e-01, 0.00000e+00]
        dt[2] += time_sync() - t3

        # Second-stage classifier (optional)
        # pred = utils.general.apply_classifier(pred, classifier_model, im, im0s)

        # Process predictions
        for i, det in enumerate(pred):  # per image, torch.Size([5, 6])
            seen += 1
            if webcam:  # batch_size >= 1
                p, im0, frame = path[i], im0s[i].copy(), dataset.count
                s += f'{i}: '
            else:
                p, im0, frame = path, im0s.copy(), getattr(dataset, 'frame', 0)

            p = Path(p)  # to Path
            save_path = str(save_dir / p.name)  # im.jpg, "runs\\detect\\exp3","bus.jpg"
            txt_path = str(save_dir / 'labels' / p.stem) + ('' if dataset.mode == 'image' else f'_{frame}')  # im.txt
            s += '%gx%g ' % im.shape[2:]  # print string
            gn = torch.tensor(im0.shape)[[1, 0, 1, 0]]  # normalization gain whwh
            imc = im0.copy() if save_crop else im0  # for save_crop
            annotator = Annotator(im0, line_width=line_thickness, example=str(names))
            if len(det):
                # Rescale boxes from img_size to im0 size
                det[:, :4] = scale_coords(im.shape[2:], det[:, :4], im0.shape).round()

                # Print results
                for c in det[:, -1].unique():
                    n = (det[:, -1] == c).sum()  # detections per class
                    s += f"{n} {names[int(c)]}{'s' * (n > 1)}, "  # add to string

                # Write results
                for *xyxy, conf, cls in reversed(det):
                    if save_txt:  # Write to file
                        xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4)) / gn).view(-1).tolist()  # normalized xywh
                        line = (cls, *xywh, conf) if save_conf else (cls, *xywh)  # label format
                        with open(txt_path + '.txt', 'a') as f:
                            f.write(('%g ' * len(line)).rstrip() % line + '\n')

                    if save_img or save_crop or view_img:  # Add bbox to image
                        c = int(cls)  # integer class
                        label = None if hide_labels else (names[c] if hide_conf else f'{names[c]} {conf:.2f}')
                        annotator.box_label(xyxy, label, color=colors(c, True))
                        if save_crop:
                            save_one_box(xyxy, imc, file=save_dir / 'crops' / names[c] / f'{p.stem}.jpg', BGR=True)

            # Stream results
            im0 = annotator.result()
            if view_img:
                cv2.imshow(str(p), im0)
                cv2.waitKey(1)  # 1 millisecond

            # Save results (image with detections)
            if save_img:
                if dataset.mode == 'image':
                    cv2.imwrite(save_path, im0)
                else:  # 'video' or 'stream'
                    if vid_path[i] != save_path:  # new video
                        vid_path[i] = save_path
                        if isinstance(vid_writer[i], cv2.VideoWriter):
                            vid_writer[i].release()  # release previous video writer
                        if vid_cap:  # video
                            fps = vid_cap.get(cv2.CAP_PROP_FPS)
                            w = int(vid_cap.get(cv2.CAP_PROP_FRAME_WIDTH))
                            h = int(vid_cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
                        else:  # stream
                            fps, w, h = 30, im0.shape[1], im0.shape[0]
                        save_path = str(Path(save_path).with_suffix('.mp4'))  # force *.mp4 suffix on results videos
                        vid_writer[i] = cv2.VideoWriter(save_path, cv2.VideoWriter_fourcc(*'mp4v'), fps, (w, h))
                    vid_writer[i].write(im0)

        # Print time (inference-only)
        LOGGER.info(f'{s}Done. ({t3 - t2:.3f}s)')

    # Print results
    t = tuple(x / seen * 1E3 for x in dt)  # speeds per image
    LOGGER.info(f'Speed: %.1fms pre-process, %.1fms inference, %.1fms NMS per image at shape {(1, 3, *imgsz)}' % t)
    if save_txt or save_img:
        s = f"\n{len(list(save_dir.glob('labels/*.txt')))} labels saved to {save_dir / 'labels'}" if save_txt else ''
        LOGGER.info(f"Results saved to {colorstr('bold', save_dir)}{s}")
    if update:
        strip_optimizer(weights)  # update model (to fix SourceChangeWarning)
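Two steps of run() are easier to follow on a toy example: the NMS filtering and the label line written when --save-txt is set. The sketch below is a plain-Python simplification, not YOLOv5's non_max_suppression (which works on tensors and has more options). simple_nms and label_line are hypothetical names, the second and third boxes are made up, and the 810x1080 image size is an assumption; only the first detection row comes from the article.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def simple_nms(dets, conf_thres=0.25, iou_thres=0.45):
    """Greedy per-class NMS over rows of (x1, y1, x2, y2, conf, cls)."""
    candidates = sorted((d for d in dets if d[4] > conf_thres), key=lambda d: d[4], reverse=True)
    kept = []
    for d in candidates:
        # Drop d only if an already kept box of the same class overlaps it too much.
        if all(k[5] != d[5] or iou(k, d) <= iou_thres for k in kept):
            kept.append(d)
    return kept


def label_line(det, img_w, img_h, save_conf=False):
    """Format one detection as 'cls xc yc w h [conf]' with normalized xywh."""
    x1, y1, x2, y2, conf, cls = det
    xywh = ((x1 + x2) / 2 / img_w, (y1 + y2) / 2 / img_h, (x2 - x1) / img_w, (y2 - y1) / img_h)
    line = (cls, *xywh, conf) if save_conf else (cls, *xywh)
    return ('%g ' * len(line)).rstrip() % line


if __name__ == "__main__":
    dets = [
        (672.0, 395.0, 810.0, 878.0, 0.896172, 0.0),  # row quoted in the article
        (670.0, 390.0, 808.0, 880.0, 0.60, 0.0),      # made-up near-duplicate
        (10.0, 10.0, 50.0, 50.0, 0.10, 0.0),          # made-up low-confidence box
    ]
    for det in simple_nms(dets):
        print(label_line(det, img_w=810, img_h=1080))  # image size is an assumption
```

The low-confidence box is dropped by conf_thres and the near-duplicate by iou_thres, so only the quoted row survives. Dividing by the image width and height plays the role of gn in run(), which is what makes the saved labels resolution-independent.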
