The previous post covered how to pass arguments to the program through a shell script.

In the main file, the training branch contains the following code:

    if args.train:
        model = BrainDQN(epsilon=args.init_e, mem_size=args.memory_size, cuda=args.cuda)
        resume = not args.weight == ''
        train_dqn(model, args, resume)

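Next, let's look at how each of these functions actually runs.

This post walks through the functions in the order they are used during training.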

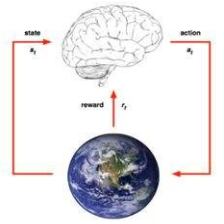

DQN-FlappyBird study notes: algorithm outline and code analysis

import

    import sys
    sys.path.append("game/")
    import game.wrapped_flappy_bird as game
    from BrainDQN import *
    import shutil
    import numpy as np
    import random
    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torch.autograd import Variable
    import PIL.Image as Image

    IMAGE_SIZE = (72, 128)

train_dqn

Program walkthrough:

Step 1: First, best_time_step is set to 0

    def train_dqn(model, options, resume):
        best_time_step = 0.

Step 2: Then decide whether to continue training from previously saved, partially trained weights

    if resume:
        if options.weight is None:
            print('when resume, you should give weight file name.')
            return
        print('load previous model weight: {}'.format(options.weight))
        _, _, best_time_step = load_checkpoint(options.weight, model)

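tips1:

1. Here options is the args object from the main file. Passing the whole argument object in lets train_dqn pick whichever parameters it needs.

2. '{}'.format(options.weight) inserts the value of options.weight into the string.

load_checkpoint first loads the saved parameters into the already instantiated model, then returns the best step count, which is used later to decide whether newer parameters should be saved as the best ones.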
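
The resume check from steps 1 and 2 can be sketched in isolation. Note that the main file derives `resume` by comparing `args.weight` with the empty string, while `train_dqn` checks for `None`. The parser is not shown in this post, so the default value of `--weight` below is an assumption, and `parse_args` and `should_resume` are hypothetical helpers that treat both cases as "no weight file given".

```python
import argparse

def parse_args(argv):
    # Hypothetical parser: only the --weight flag is modeled here,
    # and the empty-string default is an assumption.
    parser = argparse.ArgumentParser()
    parser.add_argument('--weight', type=str, default='')
    return parser.parse_args(argv)

def should_resume(weight):
    # Treat both None and '' as "no weight file given".
    return bool(weight)

fresh = parse_args([])
resumed = parse_args(['--weight', 'model_best.pth.tar'])
print(should_resume(fresh.weight))    # False
print(should_resume(resumed.weight))  # True
```

With a check like this, the `options.weight is None` guard inside `train_dqn` and the `== ''` test in the main file can no longer disagree.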

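Step 3: Instantiate the game

    flappyBird = game.GameState()

Step 4: Define the optimizer and the loss

    optimizer = optim.RMSprop(model.parameters(), lr=options.lr)
    ceriterion = nn.MSELoss()

model.parameters() is defined in the model's parent class (nn.Module), so it can be called directly. The optimizer is RMSprop and the loss is the mean squared error.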

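Step 5: Take the first step

    action = [1, 0]
    o, r, terminal = flappyBird.frame_step(action)
    o = preprocess(o)
    model.set_initial_state()

The first step chooses not to tap the screen ([1, 0]), receives the game's feedback, and preprocesses the image to obtain the first preprocessed frame. The state matrix is then initialized with shape 4×128×72; all of its elements are 0 at this point.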

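Step 6: Whether to use CUDA acceleration

    if options.cuda:
        model = model.cuda()

Step 7: No training during the first few steps

During the first 100 steps of the game (the loop runs options.observation times), the network is not trained; the steps are only used to accumulate training samples.

    # in the first `OBSERVE` time steps, we don't train the model
    for i in range(options.observation):
        action = model.get_action_randomly()
        o, r, terminal = flappyBird.frame_step(action)
        o = preprocess(o)
        model.store_transition(o, action, r, terminal)

Because the first 100 steps only explore the environment without training, the actions here are chosen randomly, independent of the state and of any policy.

The frame (state), action and reward of every step are then stored.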

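Definition of the random action-selection function

    def get_action_randomly(self):
        action = np.zeros(self.actions, dtype=np.float32)
        #action_index = random.randrange(self.actions)
        action_index = 0 if random.random() < 0.8 else 1
        action[action_index] = 1
        return action

Each call first creates an all-zero array of length 2 (one entry per action), then selects "do not tap" (index 0) with probability 0.8 and "tap" (index 1) otherwise.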

Definition of the storage function

    def store_transition(self, o_next, action, reward, terminal):
        next_state = np.append(self.current_state[1:,:,:], o_next.reshape((1,)+o_next.shape), axis=0)
        self.replay_memory.append((self.current_state, action, reward, next_state, terminal))
        if len(self.replay_memory) > self.mem_size:
            self.replay_memory.popleft()
        if not terminal:
            self.current_state = next_state
        else:
            self.set_initial_state()

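tips2:

1. The arguments are the next observation, the current action, the reward received, and whether the episode has terminated.

2. self.current_state[1:,:,:] is equivalent to self.current_state[1:], i.e. it drops the first element along the outermost dimension. Since the new frame is appended at the end, this discards the oldest frame.

3. o_next.reshape((1,)+o_next.shape) has shape 1×128×72.

4. Keep in mind that every state in the replay memory is a stack of 4 observations. What gets added to the replay memory is also a stack of 4 observations, not the single current frame.

5. The replay memory has a fixed size; once it is exceeded, the oldest entry is removed with self.replay_memory.popleft().

6. If a terminal state has been reached, the current state is reset to an all-zero array, which initializes the state for the next episode.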
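
The frame stacking and the bounded replay memory described in these tips can be tried out with a small standalone sketch. It assumes a single preprocessed frame has shape 128×72 (consistent with the 4×128×72 state mentioned earlier); `push_frame`, the fake frames, the tiny `mem_size` and the reward value are illustrative only and not part of the original code.

```python
from collections import deque
import numpy as np

FRAME_SHAPE = (128, 72)   # assumed shape of one preprocessed frame
STACK = 4                 # frames per state, as in the post

def push_frame(current_state, o_next):
    # Drop the oldest frame and append the newest one along axis 0.
    return np.append(current_state[1:], o_next.reshape((1,) + o_next.shape), axis=0)

replay_memory = deque()
mem_size = 3  # tiny capacity so that the eviction is visible

current_state = np.zeros((STACK,) + FRAME_SHAPE, dtype=np.float32)
for step in range(5):
    frame = np.full(FRAME_SHAPE, step + 1, dtype=np.float32)  # fake frame
    next_state = push_frame(current_state, frame)
    replay_memory.append((current_state, [1, 0], 0.1, next_state, False))
    if len(replay_memory) > mem_size:
        replay_memory.popleft()   # evict the oldest transition
    current_state = next_state

print(current_state.shape)     # (4, 128, 72)
print(current_state[:, 0, 0])  # [2. 3. 4. 5.]
print(len(replay_memory))      # 3
```

A `deque(maxlen=mem_size)` would evict old entries automatically; the explicit `popleft()` is kept here to mirror the code in the post.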

Step 8: Loop over options.max_episode episodes

This loop is the main part that implements the algorithm

part1

    for episode in range(options.max_episode):
        model.time_step = 0
        model.set_train()
        total_reward = 0.
        # begin an episode!
        while True:
            optimizer.zero_grad()
            action = model.get_action()
            o_next, r, terminal = flappyBird.frame_step(action)
            total_reward += options.gamma**model.time_step * r
            o_next = preprocess(o_next)
            model.store_transition(o_next, action, r, terminal)
            model.increase_time_step()
            # Step 1: obtain random minibatch from replay memory
            minibatch = random.sample(model.replay_memory, options.batch_size)
            state_batch = np.array([data[0] for data in minibatch])
            action_batch = np.array([data[1] for data in minibatch])
            reward_batch = np.array([data[2] for data in minibatch])
            next_state_batch = np.array([data[3] for data in minibatch])
            state_batch_var = Variable(torch.from_numpy(state_batch))
            with torch.no_grad():
                next_state_batch_var = Variable(torch.from_numpy(next_state_batch))
            if options.cuda:
                state_batch_var = state_batch_var.cuda()
                next_state_batch_var = next_state_batch_var.cuda()
            # Step 2: calculate y
            q_value_next = model.forward(next_state_batch_var)
            q_value = model.forward(state_batch_var)
            y = reward_batch.astype(np.float32)
            max_q, _ = torch.max(q_value_next, dim=1)
            for i in range(options.batch_size):
                if not minibatch[i][4]:
                    y[i] += options.gamma*max_q[[i][0]].item()
            y = Variable(torch.from_numpy(y))
            action_batch_var = Variable(torch.from_numpy(action_batch))
            if options.cuda:
                y = y.cuda()
                action_batch_var = action_batch_var.cuda()
            q_value = torch.sum(torch.mul(action_batch_var, q_value), dim=1)
            loss = ceriterion(q_value, y)
            loss.backward()
            optimizer.step()
            # when the bird dies, the episode ends
            if terminal:
                break
        print('episode: {}, epsilon: {:.4f}, max time step: {}, total reward: {:.6f}'.format(
            episode, model.epsilon, model.time_step, total_reward))
        if model.epsilon > options.final_e:
            delta = (options.init_e - options.final_e)/options.exploration
            model.epsilon -= delta
        if episode % 100 == 0:
            ave_time = test_dqn(model, episode)
        if ave_time > best_time_step:
            best_time_step = ave_time
            save_checkpoint({
                'episode': episode,
                'epsilon': model.epsilon,
                'state_dict': model.state_dict(),
                'best_time_step': best_time_step,
            }, True, 'checkpoint-episode-%d.pth.tar' %episode)
        elif episode % options.save_checkpoint_freq == 0:
            save_checkpoint({
                'episode': episode,
                'epsilon': model.epsilon,
                'state_dict': model.state_dict(),
                'time_step': ave_time,
            }, False, 'checkpoint-episode-%d.pth.tar' %episode)
        else:
            continue
        print('save checkpoint, episode={}, ave time step={:.2f}'.format(
            episode, ave_time))

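At the start of every episode (an episode lasts until the game ends), the step counter is reset to 0, and model.set_train() sets the train flag to True. The flag is used during action selection below to decide between always taking the greedy action (test) and following the ε-greedy policy (train). The total reward is also reset to zero.

    model.time_step = 0
    model.set_train()
    total_reward = 0.
    # begin an episode!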

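Then comes the per-step loop (while True), which keeps taking actions until the episode ends. Inside this while loop,

the game is played for one step first: take an action, receive the reward, and store the transition.

    optimizer.zero_grad()
    action = model.get_action()
    o_next, r, terminal = flappyBird.frame_step(action)
    total_reward += options.gamma**model.time_step * r
    o_next = preprocess(o_next)
    model.store_transition(o_next, action, r, terminal)
    model.increase_time_step()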

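tips3:

optimizer.zero_grad() resets the gradients to zero. PyTorch accumulates gradients across backward() calls, so without this reset the gradients of the previous update would be added to those of the current one.

    minibatch = random.sample(model.replay_memory, options.batch_size)
    state_batch = np.array([data[0] for data in minibatch])
    action_batch = np.array([data[1] for data in minibatch])
    reward_batch = np.array([data[2] for data in minibatch])
    next_state_batch = np.array([data[3] for data in minibatch])

tips4

random.sample draws a fixed number of distinct samples from a sequence, here the replay memory.

Each sampled transition is then split by position into batches of states, actions, rewards and next states.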
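
The sampling step can be illustrated without any game or network. In this minimal sketch the transitions are placeholder tuples with the same five fields as in `store_transition`; the string states, the reward value and the batch size are made up for illustration.

```python
import random
from collections import deque

random.seed(0)
replay_memory = deque()
for t in range(10):
    # (state, action, reward, next_state, terminal) with placeholder values
    replay_memory.append(('s%d' % t, [1, 0], 0.1, 's%d' % (t + 1), t == 9))

batch_size = 4
minibatch = random.sample(replay_memory, batch_size)

# zip(*minibatch) transposes the list of 5-tuples into 5 per-field tuples.
states, actions, rewards, next_states, terminals = zip(*minibatch)
print(len(states), len(set(states)))  # 4 4 (sampling is without replacement)
```

The list comprehensions in the post (`data[0]`, `data[1]`, ...) do the same per-field split, one field at a time.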

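Convert the batch of current states (images) into a tensor, and do the same for the batch of next states, which is not meant to take part in differentiation. Note that the with torch.no_grad() block here only wraps the creation of the tensor; the target is actually cut off from the graph later, when .item() extracts plain numbers from max_q.

    state_batch_var = Variable(torch.from_numpy(state_batch))
    with torch.no_grad():
        next_state_batch_var = Variable(torch.from_numpy(next_state_batch))

There is also a CUDA check here

    if options.cuda:
        state_batch_var = state_batch_var.cuda()
        next_state_batch_var = next_state_batch_var.cuda()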

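Up to this point we have taken one step, stored it in the replay memory, sampled experiences, and wrapped the states as tensors ready for automatic differentiation.

part2

First, both batches of states (images) are fed into the network to compute the outputs (two Q-values per sample, one per action)

    q_value_next = model.forward(next_state_batch_var)
    q_value = model.forward(state_batch_var)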

    def createQNetwork(self):
        self.conv1 = nn.Conv2d(4, 32, kernel_size=8, stride=4, padding=2)
        self.relu1 = nn.ReLU(inplace=True)
        self.conv2 = nn.Conv2d(32, 64, kernel_size=4, stride=2, padding=1)
        self.relu2 = nn.ReLU(inplace=True)
        self.map_size = (64, 16, 9)
        self.fc1 = nn.Linear(self.map_size[0]*self.map_size[1]*self.map_size[2], 256)
        self.relu3 = nn.ReLU(inplace=True)
        self.fc2 = nn.Linear(256, self.actions)

The network consists of two convolutional layers followed by two fully connected layers, with three ReLU activations in between.
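
The hard-coded `map_size = (64, 16, 9)` can be checked with the standard output-size formula for a convolution, out = floor((in + 2·padding − kernel) / stride) + 1. The sketch below assumes a 128×72 input frame, as implied by the 4×128×72 state; `conv_out` is a helper introduced only for this check.

```python
def conv_out(size, kernel, stride, padding):
    # Output-size formula for a convolution without dilation.
    return (size + 2 * padding - kernel) // stride + 1

h, w = 128, 72  # assumed height and width of one input frame
h, w = conv_out(h, 8, 4, 2), conv_out(w, 8, 4, 2)   # conv1: kernel 8, stride 4, padding 2
h, w = conv_out(h, 4, 2, 1), conv_out(w, 4, 2, 1)   # conv2: kernel 4, stride 2, padding 1
print((64, h, w))    # (64, 16, 9)
print(64 * h * w)    # 9216
```

The product 9216 is the input width of `fc1`, so a different input resolution would require a different `map_size`.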

    def get_q_value(self, o):
        out = self.conv1(o)
        out = self.relu1(out)
        out = self.conv2(out)
        out = self.relu2(out)
        out = out.view(out.size()[0], -1)
        out = self.fc1(out)
        out = self.relu3(out)
        out = self.fc2(out)
        return out

tips5

1. out.view flattens the multi-dimensional feature map of each sample into a one-dimensional vector; the batch dimension is kept.

2. x = a.view(a.size(0), -1) is equivalent to x = a.view(a.size()[0], -1)

    def forward(self, o):
        q = self.get_q_value(o)
        return q

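Then the reward batch is cast to float32 and named y

    y = reward_batch.astype(np.float32)

After q_value_next (two values per sample) has been obtained in the previous step, take the maximum over the actions

    max_q, _ = torch.max(q_value_next, dim=1)

For every sample in the batch, compute r + γ·max_a' Q(s', a'), i.e. the target

    for i in range(options.batch_size):
        if not minibatch[i][4]:
            y[i] += options.gamma*max_q[[i][0]].item()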

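tips6:

From the definition of the storage function we know that every sample in the replay memory has five elements, the last of which says whether the transition ended in a terminal state. The code therefore means: as long as the sample is not terminal, the TD target adds the second term γ·max_a' Q(s', a'). The value function of a terminal state is 0, so for terminal samples the target is just the reward. Note that [i][0] is simply the list [i] indexed at position 0, so max_q[[i][0]] is the same as max_q[i].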
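
The target computation can be written out in plain Python to make the terminal case explicit. This is a minimal sketch with made-up numbers; `td_targets` is a hypothetical helper, and the reward and Q-values are not taken from the game.

```python
def td_targets(rewards, max_q_next, terminals, gamma):
    # y = r for terminal transitions, r + gamma * max_a' Q(s', a') otherwise.
    targets = []
    for r, q, done in zip(rewards, max_q_next, terminals):
        targets.append(r if done else r + gamma * q)
    return targets

y = td_targets(rewards=[0.1, 1.0, -1.0],
               max_q_next=[2.0, 0.5, 3.0],
               terminals=[False, False, True],
               gamma=0.5)
print(y)  # [1.1, 1.25, -1.0]
```

The third sample is terminal, so its bootstrap term is dropped even though the network still produced a value for its next state.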

Then wrap y as a Variable

    y = Variable(torch.from_numpy(y))

Wrap action_batch as a Variable as well

    action_batch_var = Variable(torch.from_numpy(action_batch))

The reason for converting y and action_batch is that y has to be passed to the PyTorch loss, and the actions are used with torch's mul function.

Another CUDA check

    if options.cuda:
        y = y.cuda()
        action_batch_var = action_batch_var.cuda()

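Q is a function of the action, but the network outputs two values (one per action), so we have to pick the value of the action that was actually taken. The sum here runs over the element-wise product within each sample (dim=1), not over all samples.

    q_value = torch.sum(torch.mul(action_batch_var, q_value), dim=1)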
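
The masking trick works because the actions are stored as one-hot vectors. The sketch below uses NumPy as a stand-in for `torch.mul` and `torch.sum`, which behave the same way for this purpose; the Q-values and actions are made up.

```python
import numpy as np

q_value = np.array([[0.2, 0.7],
                    [1.5, -0.3],
                    [0.0, 0.4]], dtype=np.float32)
action_batch = np.array([[1, 0],
                         [0, 1],
                         [0, 1]], dtype=np.float32)

# The element-wise product zeroes the Q-value of the action not taken;
# summing over axis 1 leaves exactly one value per sample.
q_taken = np.sum(action_batch * q_value, axis=1)
print(q_taken.shape)  # (3,)
```

Here `q_taken` holds 0.2, -0.3 and 0.4, i.e. the Q-value of the chosen action in each row.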

Finally, the loss

    loss = ceriterion(q_value, y)
    loss.backward()

And last, the optimization step

    optimizer.step()
    if terminal:
        break

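Step 9: Print a summary every time the while loop ends

    print('episode: {}, epsilon: {:.4f}, max time step: {}, total reward: {:.6f}'.format(
        episode, model.epsilon, model.time_step, total_reward))

tips7:

1. {:.4f} formats a float with 4 digits after the decimal point; format accepts several arguments at once.

2. The time step shows how long the episode lasted. The total reward depends both on the rewards given by the game and on the time step, because each reward is discounted by gamma raised to the time step.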
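
The discounted total and the format specifier can be seen together in a tiny sketch. The value of `gamma` and the rewards are placeholders; in the post, `gamma` comes from `options.gamma` and the rewards from the game.

```python
gamma = 0.5                 # placeholder discount factor
rewards = [1.0, 1.0, 1.0]   # made-up per-step rewards

total_reward = 0.0
for time_step, r in enumerate(rewards):
    # The reward at step t is weighted by gamma**t, starting from t = 0.
    total_reward += gamma ** time_step * r

print('total reward: {:.4f}'.format(total_reward))  # total reward: 1.7500
```

Later rewards count less, so two episodes with the same raw reward sum can report different totals.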

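Step 10: epsilon decreases gradually

    if model.epsilon > options.final_e:
        delta = (options.init_e - options.final_e)/options.exploration
        model.epsilon -= delta

In this program

    final_epsilon=0.1
    exploration=50000
    initial_epsilon=1.

That is, delta is a constant, (1.0 - 0.1)/50000 = 1.8e-5, so epsilon shrinks by a very small amount on every update.

As the figure below shows, epsilon has reached 0.1 by step 50000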
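
The linear schedule can be replayed on its own with the three values listed above. This is only a sketch of the arithmetic; how often the decrement runs in the real program depends on where the block sits in the training loop.

```python
init_e, final_e, exploration = 1.0, 0.1, 50000

delta = (init_e - final_e) / exploration
epsilon = init_e
updates = 0
while epsilon > final_e:
    # Same rule as in the post: subtract the constant delta until final_e is reached.
    epsilon -= delta
    updates += 1

print(delta)
print(updates, round(epsilon, 4))
```

Because of floating-point rounding, the loop may need one update more than `exploration` before `epsilon` drops to `final_e`, and the final value can undershoot 0.1 by a tiny amount.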

Step 11: Every 100 episodes, run a test and record the average number of steps survived in the test (the higher the better)

    if episode % 100 == 0:
        ave_time = test_dqn(model, episode)

    def test_dqn(model, episode):
        model.set_eval()
        ave_time = 0.
        for test_case in range(5):
            model.time_step = 0
            flappyBird = game.GameState()
            o, r, terminal = flappyBird.frame_step([1, 0])
            o = preprocess(o)
            model.set_initial_state()
            while True:
                action = model.get_optim_action()
                o, r, terminal = flappyBird.frame_step(action)
                if terminal:
                    break
                o = preprocess(o)
                model.current_state = np.append(model.current_state[1:,:,:], o.reshape((1,)+o.shape), axis=0)
                model.increase_time_step()
            ave_time += model.time_step
        ave_time /= 5
        print('testing: episode: {}, average time: {}'.format(episode, ave_time))
        return ave_time

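First, model.set_eval() is called, which sets the train flag to False so that action selection always picks the greedy action.

Then 5 episodes are run and averaged. The initialization of each episode consists of starting the game, taking one fixed action to obtain the state and reward, preprocessing the frame, and initializing the current state (4 stacked frames).

Within each episode, the greedy action is selected, the reward and the next frame are obtained, the frame is preprocessed, and the state is updated.

Note that nothing is added to the replay memory here. After that, the step counter is incremented.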

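tips9:

The program uses three action-selection functions in total: random selection during the first 100 steps, ε-greedy selection during training, and greedy selection during testing. "Greedy" here means choosing the action with the largest Q-value.

(1)

    def get_action(self):
        if self.train and random.random() <= self.epsilon:
            return self.get_action_randomly()
        return self.get_optim_action()

tips8:

The return inside the if exits the whole function, so the final return is reached only when the random branch is not taken.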
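
The three selection modes can be folded into one standalone function to see how they relate. This is a sketch under the assumption that Q-values are available as a plain list; the signature of `get_action` below is hypothetical and differs from the method in the post, which reads its state from `self`.

```python
import random

def get_action(q_values, epsilon, train=True, rng=random):
    # Explore with probability epsilon while training, otherwise act greedily.
    if train and rng.random() <= epsilon:
        index = 0 if rng.random() < 0.8 else 1   # biased random action, as in the post
    else:
        index = max(range(len(q_values)), key=lambda i: q_values[i])
    action = [0.0] * len(q_values)   # one-hot encoding of the chosen action
    action[index] = 1.0
    return action

print(get_action([0.2, 0.9], epsilon=1.0, train=False))  # [0.0, 1.0]
```

With `train=False` the value of `epsilon` is ignored, which is what `set_eval()` relies on during testing.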

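(2)

    def get_optim_action(self):
        state = self.current_state
        with torch.no_grad():
            state_var = Variable(torch.from_numpy(state)).unsqueeze(0)
        if self.use_cuda:
            state_var = state_var.cuda()
        q_value = self.forward(state_var)
        _, action_index = torch.max(q_value, dim=1)
        action_index = action_index[[0][0]].item()
        action = np.zeros(self.actions, dtype=np.float32)
        action[action_index] = 1
        return action

During testing we choose the greedy (optimal) action

This function first fetches the current state, which is the stack of 4 frames. The state is re-initialized at the start of both training and testing.

Because the greedy action is wanted, we need to find which action has the largest value in the current state. Here current_state is the next_state stored by the previous step.

Then the Q-values are computed and the index of the largest one is taken.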

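tips10:

1. The data passed to the network must be a tensor. Because no gradient is needed when merely selecting an action, the tensor is created under no_grad().

2. torch.max returns the maximum of each row together with its index; here only the index is needed. The index comes back as a tensor. [0][0] is just the list [0] indexed at position 0, i.e. 0, so action_index[[0][0]] is action_index[0], and .item() converts it to a Python int.

3. unsqueeze(0) adds a leading dimension, because the first dimension of the network input is the batch (sample) dimension.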
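
The shape handling in these tips can be tried without PyTorch. The sketch below uses NumPy counterparts, `np.expand_dims` for `unsqueeze(0)` and `np.argmax` for the index returned by `torch.max`; the Q-values are made up.

```python
import numpy as np

state = np.zeros((4, 128, 72), dtype=np.float32)   # one stacked state
batch = np.expand_dims(state, axis=0)              # NumPy counterpart of unsqueeze(0)
print(batch.shape)                                 # (1, 4, 128, 72)

q_value = np.array([[0.3, 1.2]], dtype=np.float32)  # made-up network output
action_index = int(np.argmax(q_value, axis=1)[0])   # best action in row 0
print(action_index)                                 # 1
print([0][0])                                       # 0, so x[[0][0]] is just x[0]
```

The last line shows why the unusual indexing in the post is harmless: the inner expression evaluates to the integer 0 before any indexing takes place.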

Related post: the unsqueeze() and squeeze() functions in Python

(3)

    def get_action_randomly(self):
        action = np.zeros(self.actions, dtype=np.float32)
        #action_index = random.randrange(self.actions)
        action_index = 0 if random.random() < 0.8 else 1
        action[action_index] = 1
        return action

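Step 12: Save the data

(1) When a test shows a higher average step count than the best one so far, update the best step count and save the parameters

(2) Otherwise, save the parameters at a fixed interval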

    if model.epsilon > options.final_e:
        delta = (options.init_e - options.final_e)/options.exploration
        model.epsilon -= delta
    if episode % 100 == 0:
        ave_time = test_dqn(model, episode)
    if ave_time > best_time_step:
        best_time_step = ave_time
        save_checkpoint({
            'episode': episode,
            'epsilon': model.epsilon,
            'state_dict': model.state_dict(),
            'best_time_step': best_time_step,
        }, True, 'checkpoint-episode-%d.pth.tar' %episode)
    elif episode % options.save_checkpoint_freq == 0:
        save_checkpoint({
            'episode': episode,
            'epsilon': model.epsilon,
            'state_dict': model.state_dict(),
            'time_step': ave_time,
        }, False, 'checkpoint-episode-%d.pth.tar' %episode)
    else:
        continue
    print('save checkpoint, episode={}, ave time step={:.2f}'.format(
        episode, ave_time))

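tips11:

Definition:

    def save_checkpoint(state, is_best, filename='checkpoint.pth.tar'):
        torch.save(state, filename)
        if is_best:
            shutil.copyfile(filename, 'model_best.pth.tar')

Usage:

    save_checkpoint({
        'episode': episode,
        'epsilon': model.epsilon,
        'state_dict': model.state_dict(),
        'best_time_step': best_time_step,
    }, True, 'checkpoint-episode-%d.pth.tar' %episode)

1. This approach saves only the parameters, via model.state_dict(). If torch.save() is instead called on the whole model object, the complete network (structure and parameters) is saved, and anyone who wants to use it only needs model = torch.load(…)

2. The other saved values, such as best_time_step, make it possible to stop midway and resume training later. The remaining ones could presumably be reused as well, but this code only uses best_time_step when resuming training.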

tips12:

Next is a small trick from the play code

    print('load pretrained model file: ' + model_file_name)

Because both operands are strings, they can be concatenated directly with +, without using format

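Paper

In the paper

Comparing the two shows that the code follows the same idea as the paper: the convolutions use partially overlapping windows (the stride is smaller than the kernel size).

Code flow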
