Py: imblearn: Introduction, installation, and usage guide for the imblearn/imbalanced-learn library

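Contents

Introduction to the imblearn/imbalanced-learn library

Installing the imblearn/imbalanced-learn library

Using the imblearn/imbalanced-learn library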

Introduction to the imblearn/imbalanced-learn library

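imblearn/imbalanced-learn is a Python package that provides a number of re-sampling techniques commonly used on datasets showing strong between-class imbalance. It is compatible with scikit-learn and is part of the scikit-learn-contrib projects. The library is aimed specifically at machine learning on imbalanced datasets: it offers re-sampling techniques, combination methods, and learning algorithms intended to improve classification performance on such data. It supports under-sampling, over-sampling, methods that combine the two, and several ensemble learning methods.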

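The main features of imbalanced-learn include:

- Variety: imbalanced-learn provides many different re-sampling techniques, including under-sampling methods, over-sampling methods, and methods that combine the two. It also provides combination approaches such as ensemble learning and adaptive ensemble learning, so users can choose a method that suits their problem.

- Extensibility: imbalanced-learn integrates with common Python libraries such as scikit-learn and pandas, so it can easily be combined with other machine learning algorithms and tools.

- Flexibility: imbalanced-learn exposes a range of parameters and customization options, so users can adjust it to different application scenarios and requirements.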

imbalanced-learn is tested on Python 3.6+. The dependency requirements are based on the latest scikit-learn release:

- scipy(>=0.19.1)

- numpy(>=1.13.3)

- scikit-learn(>=0.22)

- joblib(>=0.11)

- keras 2 (optional)

- tensorflow (optional)

GitHub link: https://github.com/scikit-learn-contrib/imbalanced-learn

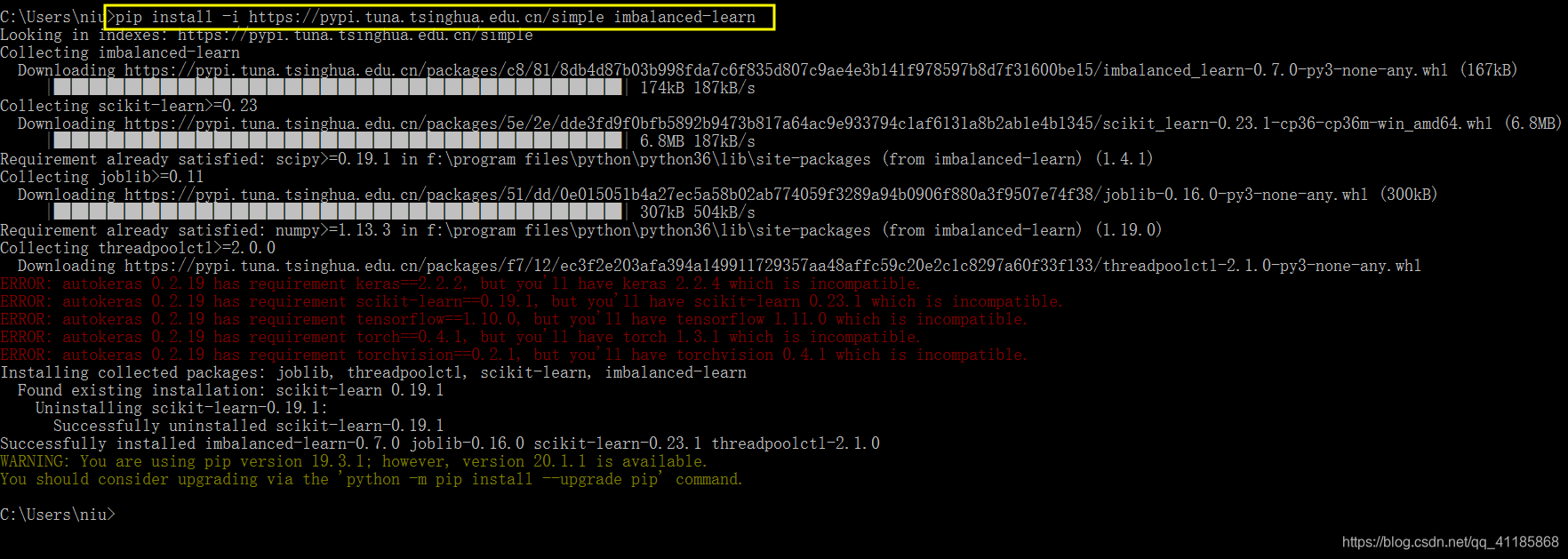

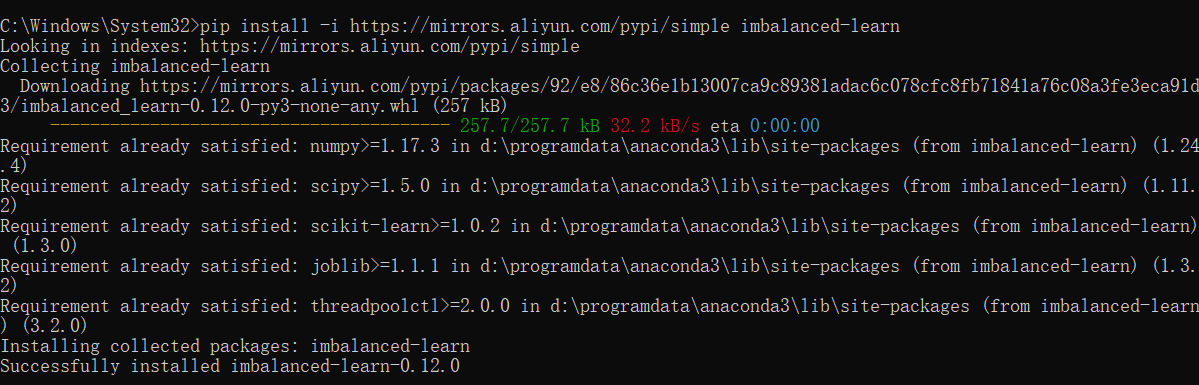

Installing the imblearn/imbalanced-learn library

pip install imblearn

pip install imbalanced-learn

pip install -i https://mirrors.aliyun.com/pypi/simple imbalanced-learn

pip install -U imbalanced-learn

conda install -c conda-forge imbalanced-learn

Using the imblearn/imbalanced-learn library

1. Usage in detail

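Most classification algorithms perform optimally only when the number of samples in each class is roughly the same. Highly skewed datasets, in which the minority class is heavily outnumbered by one or more other classes, have proven to be a challenge while at the same time becoming more and more common. One way of addressing this issue is to re-sample the dataset so as to offset the imbalance, in the hope of arriving at a more robust and fair decision boundary than would otherwise be obtained.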
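
To make the problem concrete, here is a small sketch in plain Python. The label counts (950 versus 50) and the always-predict-the-majority "classifier" are hypothetical and chosen only for illustration: such a predictor reaches high accuracy while never finding a single minority sample.

```python
from collections import Counter

# Hypothetical labels: 950 majority-class samples (0), 50 minority-class samples (1).
y_true = [0] * 950 + [1] * 50

# A trivial "classifier" that always predicts the majority class.
y_pred = [0] * len(y_true)

class_counts = Counter(y_true)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
minority_recall = (
    sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred)) / class_counts[1]
)

print(class_counts)     # Counter({0: 950, 1: 50})
print(accuracy)         # 0.95
print(minority_recall)  # 0.0
```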

Re-sampling techniques are divided into four categories:

- Under-sampling the majority class(es).

- Over-sampling the minority class.

- Combining over- and under-sampling.

- Creating ensemble balanced sets.

Below is a list of the methods currently implemented in this module.

Under-sampling

- Random majority under-sampling with replacement

- Extraction of majority-minority Tomek links [1]

- Under-sampling with Cluster Centroids

- NearMiss-(1 & 2 & 3) [2]

- Condensed Nearest Neighbour [3]

- One-Sided Selection [4]

- Neighbourhood Cleaning Rule [5]

- Edited Nearest Neighbours [6]

- Instance Hardness Threshold [7]

- Repeated Edited Nearest Neighbours [14]

- AllKNN [14]

Over-sampling

- Random minority over-sampling with replacement

- SMOTE - Synthetic Minority Over-sampling Technique [8]

- SMOTENC - SMOTE for Nominal and Continuous [8]

- bSMOTE(1 & 2) - Borderline SMOTE of types 1 and 2 [9]

- SVM SMOTE - Support Vectors SMOTE [10]

- ADASYN - Adaptive synthetic sampling approach for imbalanced learning [15]

- KMeans-SMOTE [17]

Over-sampling followed by under-sampling

Ensemble classifier using samplers internally

- Mini-batch resampling for Keras and Tensorflow
