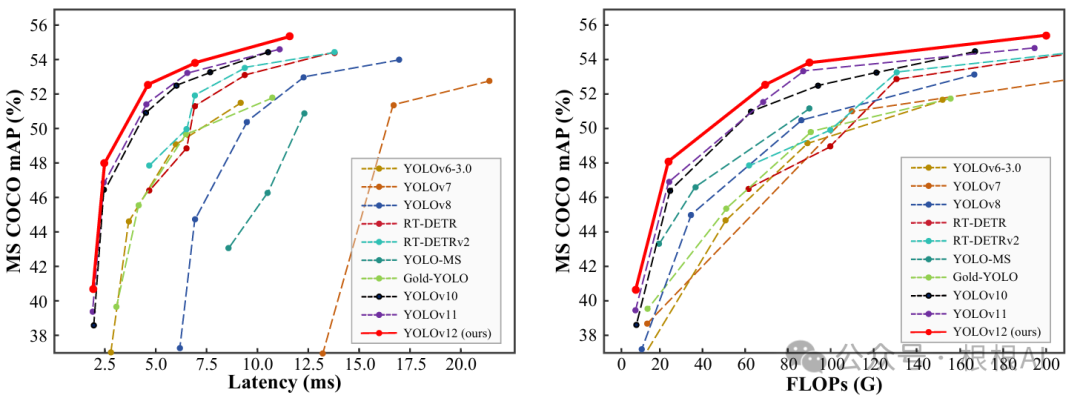

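YOLOv12 was released on February 19, 2025. The accompanying figure compares YOLOv12 with other YOLO models.

Paper: https://arxiv.org/pdf/2502.12524

Code: https://github.com/sunsmarterjie/yolov12

While inheriting the efficiency of the YOLO series, YOLOv12 introduces attention mechanisms, which significantly improve detection accuracy while keeping inference fast. Through a series of design and architectural improvements, it breaks the dominance of conventional convolutional neural networks (CNNs) in the YOLO series and demonstrates the potential of attention mechanisms for real-time object detection.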

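The main contributions of YOLOv12 are:

- It proposes an attention-centric YOLO framework that, through methodological innovation and architectural improvements, breaks the dominance of CNNs in the YOLO series.

- Without relying on additional pretraining techniques, YOLOv12 achieves faster inference and higher detection accuracy, showing its potential for real-time object detection.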

1 Area Attention

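YOLOv12 proposes an attention mechanism called "Area Attention" (A2). It divides the feature map into equal-sized areas along the horizontal or vertical direction and computes attention within each area, which lowers the cost of attention compared with attending over the whole feature map.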
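A minimal pure-Python sketch, not the authors' code, can make the area partitioning concrete. It mirrors what the reshape in the A2 module amounts to: tokens are flattened in row-major order and cut into `area` equal, contiguous chunks, so each chunk is a horizontal strip of the feature map. The helper names `area_of_token` and `attention_pairs` are hypothetical, and the sketch assumes that `H` is divisible by `area`.

```python
def area_of_token(h, w, H, W, area):
    """Return the index of the area that token (h, w) falls into.

    Tokens are flattened row-major (index = h * W + w) and split into
    `area` equal, contiguous chunks, mirroring a reshape from
    (B, H*W, C) to (B*area, H*W // area, C).
    """
    assert H % area == 0, "sketch assumes H is divisible by area"
    tokens_per_area = (H * W) // area
    return (h * W + w) // tokens_per_area


def attention_pairs(H, W, area=1):
    """Count query-key pairs when attention is restricted to each area."""
    n = H * W
    per_area = n // area
    return area * per_area * per_area


H, W, area = 8, 8, 4
# Each area covers H // area = 2 full rows, i.e. a horizontal strip.
for h in range(H):
    print(h, [area_of_token(h, w, H, W, area) for w in range(W)])

print(attention_pairs(40, 40, area=1))  # global attention
print(attention_pairs(40, 40, area=4))  # area attention
```

With `area=4` the pair count is one quarter of the global count, because `area * (N / area)^2 = N^2 / area`.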

The A2 module is implemented as follows:

```python
import torch
import torch.nn as nn
from flash_attn import flash_attn_func  # needs the flash-attn package; used only for CUDA tensors
from ultralytics.nn.modules.conv import Conv  # Conv2d + BatchNorm2d + activation


class AAttn(nn.Module):
    """
    Area-attention module with the requirement of flash attention.

    Attributes:
        dim (int): Number of hidden channels;
        num_heads (int): Number of heads into which the attention mechanism is divided;
        area (int, optional): Number of areas the feature map is divided. Defaults to 1.

    Methods:
        forward: Performs a forward process of input tensor and outputs a tensor after the execution of the area attention mechanism.

    Examples:
        >>> import torch
        >>> from ultralytics.nn.modules import AAttn
        >>> model = AAttn(dim=64, num_heads=2, area=4)
        >>> x = torch.randn(2, 64, 128, 128)
        >>> output = model(x)
        >>> print(output.shape)

    Notes:
        recommend that dim//num_heads be a multiple of 32 or 64.
    """

    def __init__(self, dim, num_heads, area=1):
        """Initializes the area-attention module, a simple yet efficient attention module for YOLO."""
        super().__init__()
        self.area = area

        self.num_heads = num_heads
        self.head_dim = head_dim = dim // num_heads
        all_head_dim = head_dim * self.num_heads

        self.qkv = Conv(dim, all_head_dim * 3, 1, act=False)
        self.proj = Conv(all_head_dim, dim, 1, act=False)
        # 7x7 depthwise convolution on v, added to the attention output in forward()
        self.pe = Conv(all_head_dim, dim, 7, 1, 3, g=dim, act=False)

    def forward(self, x):
        """Processes the input tensor 'x' through the area-attention"""
        B, C, H, W = x.shape
        N = H * W

        # (B, 3C, H, W) -> (B, N, 3C)
        qkv = self.qkv(x).flatten(2).transpose(1, 2)
        if self.area > 1:
            # Split the N tokens into `area` contiguous chunks and fold them into the batch axis
            qkv = qkv.reshape(B * self.area, N // self.area, C * 3)
            B, N, _ = qkv.shape
        # Each of q, k, v: (B, N, num_heads, head_dim)
        q, k, v = qkv.view(B, N, self.num_heads, self.head_dim * 3).split(
            [self.head_dim, self.head_dim, self.head_dim], dim=3
        )

        if x.is_cuda:
            x = flash_attn_func(
                q.contiguous().half(),
                k.contiguous().half(),
                v.contiguous().half()
            ).to(q.dtype)
        else:
            # Fallback: plain attention, (B, num_heads, head_dim, N) layout
            q = q.permute(0, 2, 3, 1)
            k = k.permute(0, 2, 3, 1)
            v = v.permute(0, 2, 3, 1)
            attn = (q.transpose(-2, -1) @ k) * (self.head_dim ** -0.5)
            # Numerically stable softmax over the key dimension
            max_attn = attn.max(dim=-1, keepdim=True).values
            exp_attn = torch.exp(attn - max_attn)
            attn = exp_attn / exp_attn.sum(dim=-1, keepdim=True)
            x = (v @ attn.transpose(-2, -1))
            # Back to (B, N, num_heads, head_dim)
            x = x.permute(0, 3, 1, 2)
            v = v.permute(0, 3, 1, 2)

        if self.area > 1:
            # Undo the area split: (B * area, N // area, C) -> (B, N, C)
            x = x.reshape(B // self.area, N * self.area, C)
            v = v.reshape(B // self.area, N * self.area, C)
            B, N, _ = x.shape

        # (B, N, C) -> (B, C, H, W)
        x = x.reshape(B, H, W, C).permute(0, 3, 1, 2)
        v = v.reshape(B, H, W, C).permute(0, 3, 1, 2)

        x = x + self.pe(v)
        x = self.proj(x)
        return x
```
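The non-CUDA branch of `forward` computes the softmax by hand and subtracts the per-row maximum before exponentiating. The pure-Python sketch below is illustrative only (`naive_softmax` and `stable_softmax` are hypothetical helpers, not part of the repository) and shows why that subtraction matters: the result is mathematically unchanged, but large scores no longer overflow.

```python
import math


def naive_softmax(scores):
    """Softmax without max subtraction; overflows for large scores."""
    exps = [math.exp(s) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def stable_softmax(scores):
    """Softmax with the maximum subtracted first, as in the CPU branch."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


small = [1.0, 2.0, 3.0]
# For moderate scores both versions agree up to rounding.
assert all(abs(a - b) < 1e-12 for a, b in zip(naive_softmax(small), stable_softmax(small)))

large = [1000.0, 1001.0, 1002.0]
try:
    naive_softmax(large)
except OverflowError:
    print("naive softmax overflowed")
print(stable_softmax(large))
```

In plain Python the overflow raises an exception; with floating-point tensors it would typically surface as `inf` or `nan` values instead.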

```python
# Assumed import: the post does not show where trunc_normal_ comes from;
# torch.nn.init provides a function with this name.
from torch.nn.init import trunc_normal_


class ABlock(nn.Module):
    """
    ABlock class implementing an Area-Attention block with effective feature extraction.

    This class encapsulates the functionality for applying multi-head attention with the feature map divided into areas
    and feed-forward neural network layers.

    Attributes:
        dim (int): Number of hidden channels;
        num_heads (int): Number of heads into which the attention mechanism is divided;
        mlp_ratio (float, optional): MLP expansion ratio (or MLP hidden dimension ratio). Defaults to 1.2;
        area (int, optional): Number of areas the feature map is divided. Defaults to 1.

    Methods:
        forward: Performs a forward pass through the ABlock, applying area-attention and feed-forward layers.

    Examples:
        Create an ABlock and perform a forward pass
        >>> model = ABlock(dim=64, num_heads=2, mlp_ratio=1.2, area=4)
        >>> x = torch.randn(2, 64, 128, 128)
        >>> output = model(x)
        >>> print(output.shape)

    Notes:
        recommend that dim//num_heads be a multiple of 32 or 64.
    """

    def __init__(self, dim, num_heads, mlp_ratio=1.2, area=1):
        """Initializes the ABlock with area-attention and feed-forward layers for faster feature extraction."""
        super().__init__()

        self.attn = AAttn(dim, num_heads=num_heads, area=area)
        mlp_hidden_dim = int(dim * mlp_ratio)
        # Feed-forward part built from two 1x1 convolutions
        self.mlp = nn.Sequential(Conv(dim, mlp_hidden_dim, 1), Conv(mlp_hidden_dim, dim, 1, act=False))

        self.apply(self._init_weights)

    def _init_weights(self, m):
        """Initialize weights using a truncated normal distribution."""
        if isinstance(m, nn.Conv2d):
            trunc_normal_(m.weight, std=.02)
            if isinstance(m, nn.Conv2d) and m.bias is not None:
                nn.init.constant_(m.bias, 0)

    def forward(self, x):
        """Executes a forward pass through ABlock, applying area-attention and feed-forward layers to the input tensor."""
        x = x + self.attn(x)  # residual connection around attention
        x = x + self.mlp(x)  # residual connection around the MLP
        return x
```

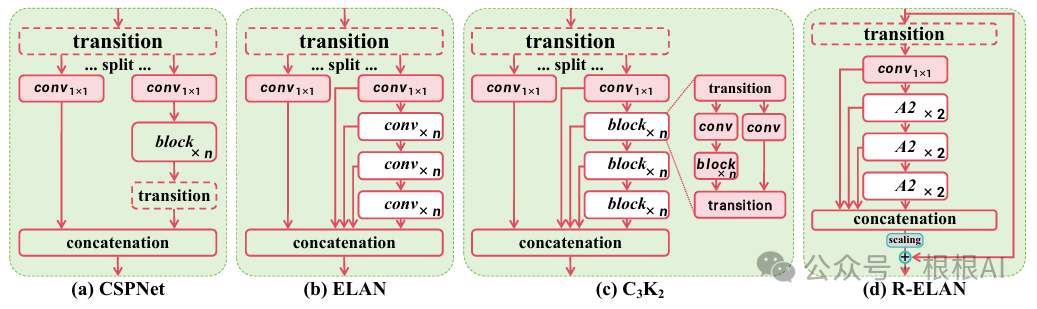

2 R-ELAN

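YOLOv12 also proposes R-ELAN (Residual Efficient Layer Aggregation Networks), which uses Area Attention (A2) as its main feature-extraction module.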
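The "residual" part of R-ELAN can be illustrated with a small sketch. In the A2C2f code in this section, when `residual` is enabled the aggregated output is multiplied by a learnable per-channel scale `gamma`, initialized to 0.01, and then added to the input. The pure-Python version below is a simplified stand-in, not the real module: it uses one scalar per channel, and `scaled_residual` and `toy_block` are hypothetical names that replace the actual convolutions.

```python
def scaled_residual(x, block, gamma):
    """Return x + gamma * block(x), channel by channel.

    x and gamma are lists with one value per channel; `block` is a
    hypothetical stand-in for the aggregated A2C2f branch.
    """
    fx = block(x)
    return [xi + g * fi for xi, g, fi in zip(x, gamma, fx)]


def toy_block(x):
    # Hypothetical transformation standing in for cv2(cat(...)).
    return [10.0 * xi for xi in x]


x = [1.0, -2.0, 0.5]
gamma = [0.01] * len(x)  # same initial value as init_values in A2C2f

out = scaled_residual(x, toy_block, gamma)
print(out)
```

Because `gamma` starts small, the output stays close to the input early in training, which is a commonly cited motivation for this kind of scaled residual.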

The R-ELAN code is as follows:


```python
from ultralytics.nn.modules.block import C3k  # fallback block used when a2=False


class A2C2f(nn.Module):
    """
    A2C2f module with residual enhanced feature extraction using ABlock blocks with area-attention. Also known as R-ELAN

    This class extends the C2f module by incorporating ABlock blocks for fast attention mechanisms and feature extraction.

    Attributes:
        c1 (int): Number of input channels;
        c2 (int): Number of output channels;
        n (int, optional): Number of 2xABlock modules to stack. Defaults to 1;
        a2 (bool, optional): Whether use area-attention. Defaults to True;
        area (int, optional): Number of areas the feature map is divided. Defaults to 1;
        residual (bool, optional): Whether use the residual (with layer scale). Defaults to False;
        mlp_ratio (float, optional): MLP expansion ratio (or MLP hidden dimension ratio). Defaults to 2.0;
        e (float, optional): Expansion ratio for R-ELAN modules. Defaults to 0.5;
        g (int, optional): Number of groups for grouped convolution. Defaults to 1;
        shortcut (bool, optional): Whether to use shortcut connection. Defaults to True.

    Methods:
        forward: Performs a forward pass through the A2C2f module.

    Examples:
        >>> import torch
        >>> from ultralytics.nn.modules import A2C2f
        >>> model = A2C2f(c1=64, c2=64, n=2, a2=True, area=4, residual=True, e=0.5)
        >>> x = torch.randn(2, 64, 128, 128)
        >>> output = model(x)
        >>> print(output.shape)
    """

    def __init__(self, c1, c2, n=1, a2=True, area=1, residual=False, mlp_ratio=2.0, e=0.5, g=1, shortcut=True):
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        assert c_ % 32 == 0, "Dimension of ABlock must be a multiple of 32."

        # num_heads = c_ // 64 if c_ // 64 >= 2 else c_ // 32
        num_heads = c_ // 32

        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv((1 + n) * c_, c2, 1)  # optional act=FReLU(c2)

        # Learnable per-channel scale for the residual path (layer scale)
        init_values = 0.01  # or smaller
        self.gamma = nn.Parameter(init_values * torch.ones((c2)), requires_grad=True) if a2 and residual else None

        # n stages; each is two stacked ABlocks (a2=True) or a C3k block (a2=False)
        self.m = nn.ModuleList(
            nn.Sequential(*(ABlock(c_, num_heads, mlp_ratio, area) for _ in range(2))) if a2 else C3k(c_, c_, 2, shortcut, g) for _ in range(n)
        )

    def forward(self, x):
        """Forward pass through R-ELAN layer."""
        y = [self.cv1(x)]
        y.extend(m(y[-1]) for m in self.m)
        if self.gamma is not None:
            # Move channels last so gamma (shape: c2) broadcasts over them, then restore the layout
            return x + (self.gamma * self.cv2(torch.cat(y, 1)).permute(0, 2, 3, 1)).permute(0, 3, 1, 2)
        return self.cv2(torch.cat(y, 1))
```

The YOLOv12 model structure is as follows:

```yaml
# YOLOv12 🚀, AGPL-3.0 license
# YOLOv12 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov12n.yaml' will call yolov12.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 465 layers, 2,603,056 parameters, 2,603,040 gradients, 6.7 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 465 layers, 9,285,632 parameters, 9,285,616 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 501 layers, 20,201,216 parameters, 20,201,200 gradients, 68.1 GFLOPs
  l: [1.00, 1.00, 512] # summary: 831 layers, 26,454,880 parameters, 26,454,864 gradients, 89.7 GFLOPs
  x: [1.00, 1.50, 512] # summary: 831 layers, 59,216,928 parameters, 59,216,912 gradients, 200.3 GFLOPs

# YOLO12n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 4, A2C2f, [512, True, 4]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 4, A2C2f, [1024, True, 1]] # 8

# YOLO12n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, A2C2f, [512, False, -1]] # 11

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, A2C2f, [256, False, -1]] # 14

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 11], 1, Concat, [1]] # cat head P4
  - [-1, 2, A2C2f, [512, False, -1]] # 17

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 8], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 20 (P5/32-large)

  - [[14, 17, 20], 1, Detect, [nc]] # Detect(P3, P4, P5)
```

As the configuration shows, not all feature-extraction modules are replaced with attention-based A2C2f. In the backbone, A2C2f with area attention appears only at the lower-resolution P4/16 and P5/32 stages, while the higher-resolution stages keep C3k2; the A2C2f modules in the head are configured with `a2=False`, so they fall back to C3k blocks. This is probably a matter of time complexity: applying attention to the larger feature maps would greatly increase the amount of computation.
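A rough back-of-the-envelope calculation supports this reading. The sketch below assumes a 640×640 input (an assumption; the post does not state the input size) and counts query-key pairs for self-attention at each stride, using the `area` values from the backbone configuration (4 at P4, 1 at P5). The P3 row is a what-if case, since the configuration does not use attention there, and `attention_pairs` is an illustrative helper rather than part of the repository.

```python
def attention_pairs(height, width, area=1):
    """Query-key pairs when attention is computed inside each of `area` equal regions."""
    tokens = height * width
    per_area = tokens // area
    return area * per_area * per_area


IMG_SIZE = 640  # assumed input resolution

# (name, stride, area); P3 is a what-if case, not part of the config.
stages = [
    ("P3/8 (hypothetical, global)", 8, 1),
    ("P4/16 (area=4)", 16, 4),
    ("P5/32 (area=1)", 32, 1),
]

for name, stride, area in stages:
    side = IMG_SIZE // stride
    pairs = attention_pairs(side, side, area)
    print(f"{name}: {side}x{side} feature map, {pairs:,} query-key pairs")
```

Under these assumptions, global attention at P3 would need 64 times as many query-key pairs as the area attention used at P4, which is consistent with keeping attention off the high-resolution stages.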

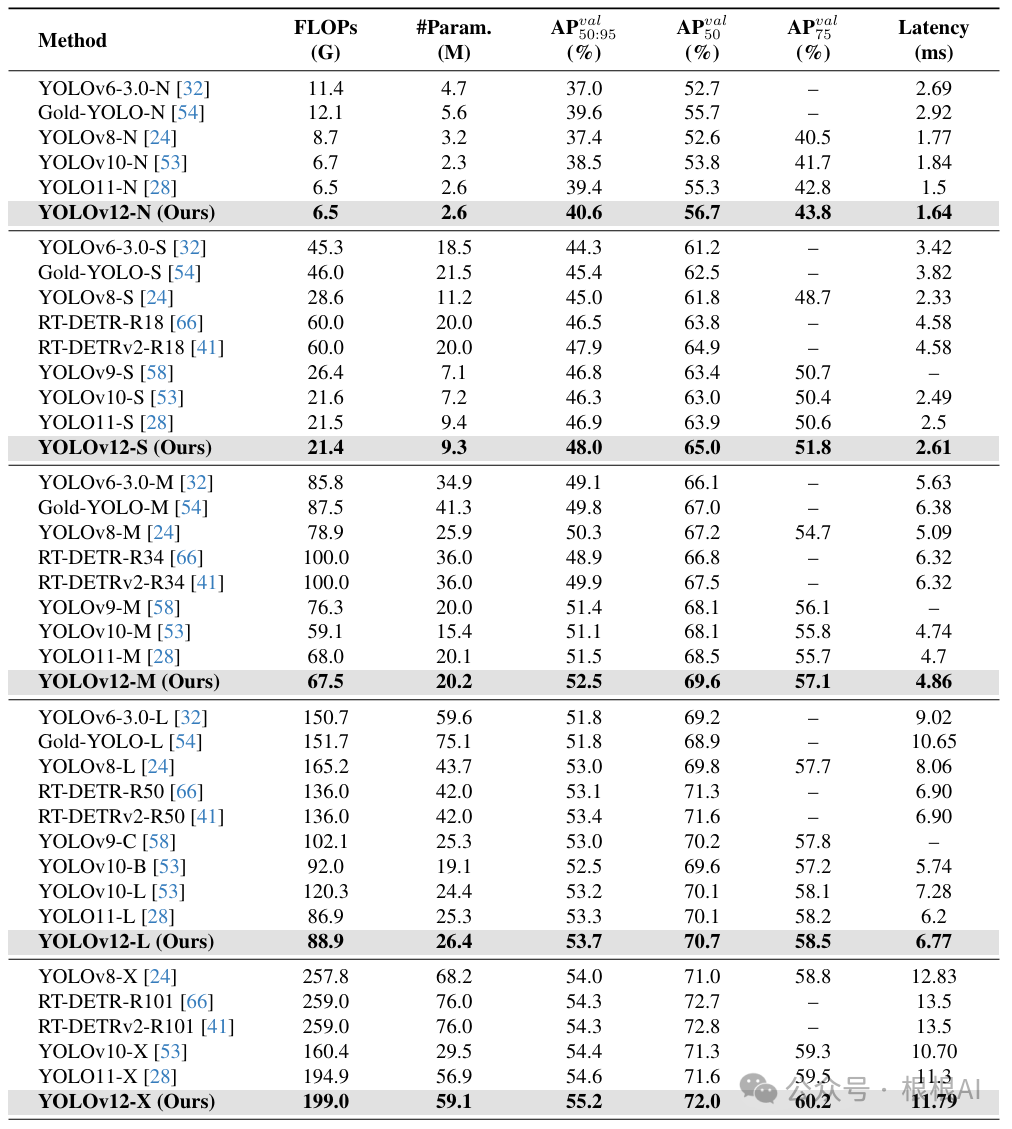

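3 Comparison Experiments

The following compares YOLOv12 with several other YOLO versions.

YOLOv12 achieves the best AP among the YOLO models compared. Its one shortcoming is that inference is slightly slower than YOLO11, but a latency difference of a few tenths of a millisecond is almost negligible.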

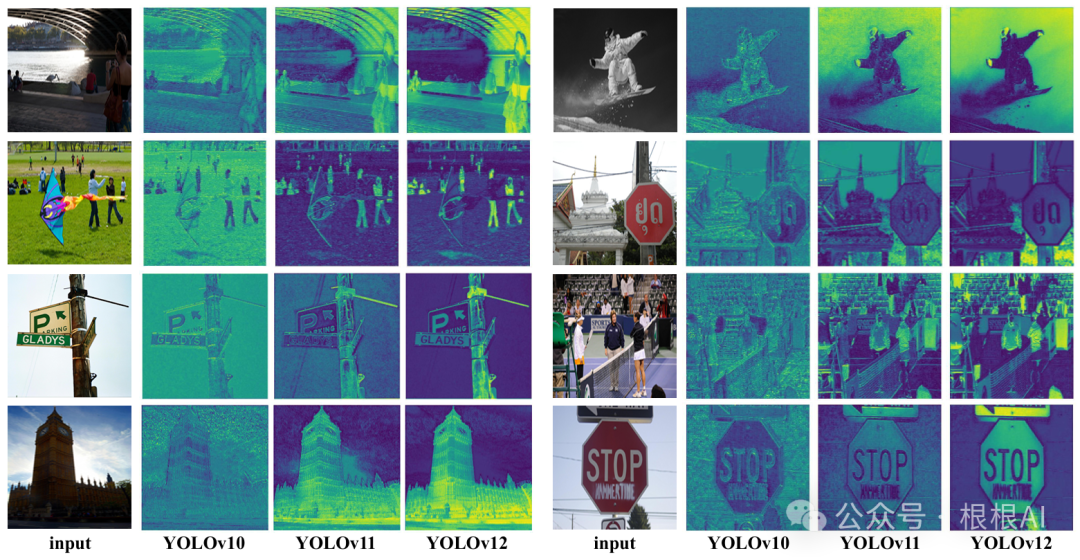

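The paper also visualizes the feature maps of different models; YOLOv12's attention preserves the features of the target objects more effectively.

YOLOv12 successfully brings attention mechanisms into a real-time object detection framework. Through designs such as Area Attention, R-ELAN, and architectural optimizations, it improves both accuracy and efficiency. The work not only challenges the dominance of CNNs in the YOLO series but also points to a new direction for real-time object detection.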
