Paper title: Attention U-Net: Learning Where to Look for the Pancreas

Paper link: https://arxiv.org/pdf/1804.03999

Code link: https://github.com/ozan-oktay/Attention-Gated-Networks

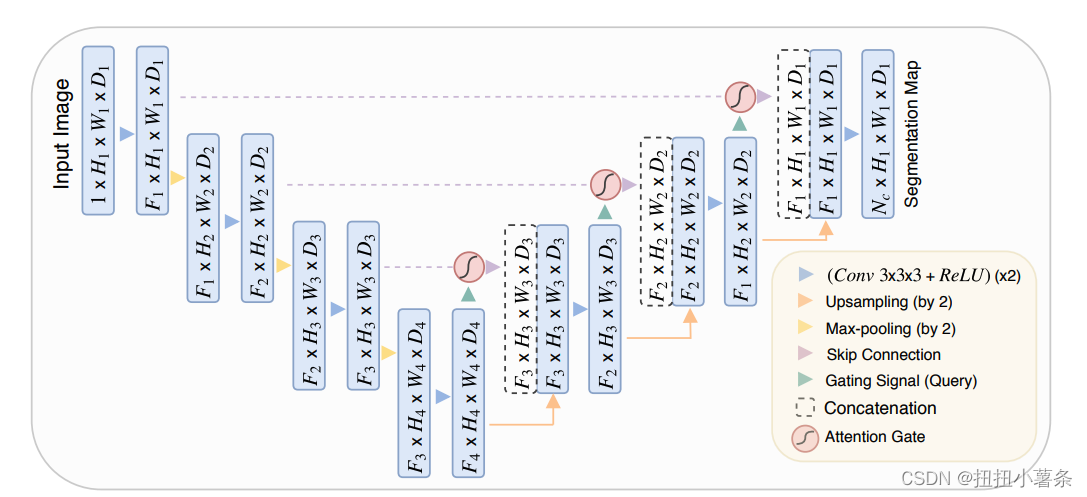

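Method: a gating function (attention gate) is added in the upsampling stage. Before each encoder (low-level) feature map is merged into the decoder through its skip connection, it is reweighted by an attention map computed from that feature map and the decoder's gating signal.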
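The gating function can be written out as a short formula. The notation below follows the additive attention gate as I recall it from the paper, so treat the symbols as a sketch rather than a verbatim quote: $x$ is the skip-connection feature, $g$ the gating signal from the decoder, $\sigma_1$ a ReLU, $\sigma_2$ a sigmoid, and $W_x$, $W_g$, $\psi$ are 1x1 convolutions.

$$
\begin{aligned}
q_{att} &= \psi^{T}\,\sigma_1\!\left(W_x^{T} x + W_g^{T} g + b_g\right) + b_\psi \\
\alpha &= \sigma_2\!\left(q_{att}\right), \qquad \alpha \in (0, 1) \\
\hat{x} &= \alpha \cdot x
\end{aligned}
$$

The PyTorch block in this post has the same structure, with a batch normalization layer added after each 1x1 convolution.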

Code:

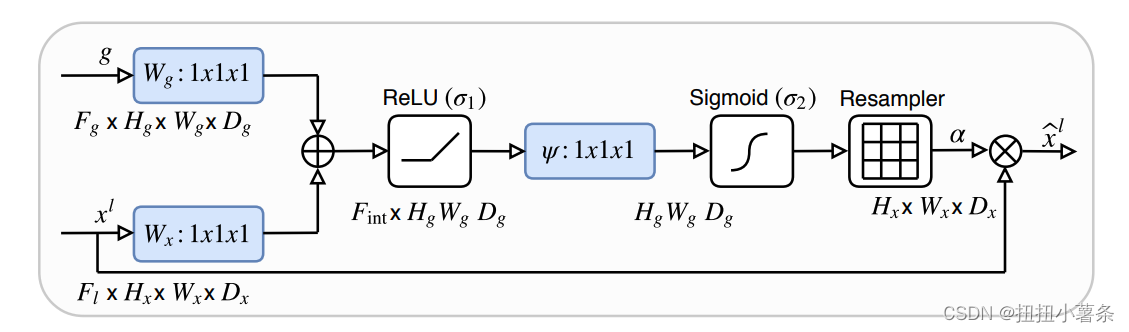

    import torch.nn as nn


    class Attention_block(nn.Module):
        def __init__(self, F_g, F_l, F_int):
            super(Attention_block, self).__init__()
            # 1x1 conv + BN that projects the gating signal g to F_int channels
            self.W_g = nn.Sequential(
                nn.Conv2d(F_g, F_int, kernel_size=1, stride=1, padding=0, bias=True),
                nn.BatchNorm2d(F_int)
            )
            # 1x1 conv + BN that projects the low-level feature x to F_int channels
            self.W_x = nn.Sequential(
                nn.Conv2d(F_l, F_int, kernel_size=1, stride=1, padding=0, bias=True),
                nn.BatchNorm2d(F_int)
            )
            # 1x1 conv + BN + sigmoid that collapses F_int channels into one attention map
            self.psi = nn.Sequential(
                nn.Conv2d(F_int, 1, kernel_size=1, stride=1, padding=0, bias=True),
                nn.BatchNorm2d(1),
                nn.Sigmoid()
            )
            self.relu = nn.ReLU(inplace=True)

        def forward(self, g, x):
            g1 = self.W_g(g)  # 1x512x64x64 -> conv(512, 256) + BN -> 1x256x64x64
            x1 = self.W_x(x)  # 1x512x64x64 -> conv(512, 256) + BN -> 1x256x64x64
            psi = self.relu(g1 + x1)  # 1x256x64x64
            psi = self.psi(psi)  # attention map: 1x256x64x64 -> 1x1x64x64, sigmoid maps it to (0, 1)
            return x * psi  # multiply the low-level feature by the attention map (broadcast over channels)

The sigmoid output acts as an attention map in (0, 1) that reweights the low-level feature at every spatial position.
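To make the shapes concrete, here is a minimal usage sketch. It assumes the gating signal has already been upsampled to the same spatial size as the skip feature, and it uses a compact copy of the block under the hypothetical name `AttentionGate`, which also returns the attention map so it can be inspected. The final concatenation is how such a gate is commonly wired into a U-Net decoder; it is an assumption here, not something the post shows.

```python
import torch
import torch.nn as nn


class AttentionGate(nn.Module):
    """Compact copy of the attention block (hypothetical name, illustration only)."""

    def __init__(self, F_g, F_l, F_int):
        super().__init__()
        self.W_g = nn.Sequential(nn.Conv2d(F_g, F_int, kernel_size=1), nn.BatchNorm2d(F_int))
        self.W_x = nn.Sequential(nn.Conv2d(F_l, F_int, kernel_size=1), nn.BatchNorm2d(F_int))
        self.psi = nn.Sequential(nn.Conv2d(F_int, 1, kernel_size=1), nn.BatchNorm2d(1), nn.Sigmoid())
        self.relu = nn.ReLU(inplace=True)

    def forward(self, g, x):
        # One attention value per spatial position, shape (N, 1, H, W)
        alpha = self.psi(self.relu(self.W_g(g) + self.W_x(x)))
        # Broadcasting applies the same weight to every channel of x
        return x * alpha, alpha


torch.manual_seed(0)
gate = AttentionGate(F_g=512, F_l=512, F_int=256).eval()

g = torch.randn(1, 512, 64, 64)  # gating signal: decoder feature after upsampling
x = torch.randn(1, 512, 64, 64)  # low-level feature from the encoder skip connection

with torch.no_grad():
    gated, alpha = gate(g, x)
    # Assumed decoder wiring: concatenate the gated skip feature with the decoder feature
    merged = torch.cat((gated, g), dim=1)

print(gated.shape, alpha.shape, merged.shape)
```

Because every attention weight lies between 0 and 1, the gate can only attenuate the skip feature and never amplify it.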
