《gc-net》 参考别人写的pytorch版本代码链接

理解参考https://blog.csdn.net/weixin_41405284/article/details/109381542?spm=1001.2014.3001.5501

1 generate-image-list.py

生成图像的绝对路径列表,用于后续读取,下载下来的图片文件夹如下:

import os

def generate_image_list(data_dir= "C:/.../data_scene_flow/training" , label_dir= "C:/...\data_scene_flow"):

left_data_files = []

right_data_files = []

label_files = []

left_data_dir = os.path.join(data_dir,'image_2')

right_data_dir = os.path.join(data_dir,'image_3')

label_data_dir = os.path.join(data_dir,'disp_noc_0')

f_train = open('train.lst', 'w')

for file in os.listdir(left_data_dir):

left_data_files.append(str(left_data_dir) + '/' + str(file))

for file in os.listdir(right_data_dir):

right_data_files.append(str(right_data_dir) + '/' + str(file))

for file in os.listdir(label_data_dir):

label_files.append(str(label_data_dir) + '/' + str(file))

for left_data_file,right_data_file,label_file in zip(left_data_files,right_data_files,label_files):

line = str(left_data_file) + '\t' + str(right_data_file) + '\t' + str(label_file) + '\n'

f_train.write(line)

f_train.close()

if __name__ == '__main__':

generate_image_list()

运行后,新建只写 train.lst 文件里为图片链接地址,用txt打开是这样的:

2 read_data.py 几个类的实现

mode为’train’ 训练处理后256*512左右图片 0-159;为“validate”验证160-199;‘test’ 测试文件夹testing中200对图片

几个类:

class KITTI2015(Dataset)

class RandomCrop()

class Normalize()

class ToTensor()

class Pad()

对于F.pad的理解https://blog.csdn.net/binbinczsohu/article/details/106359426

3 main.py

4 network.py

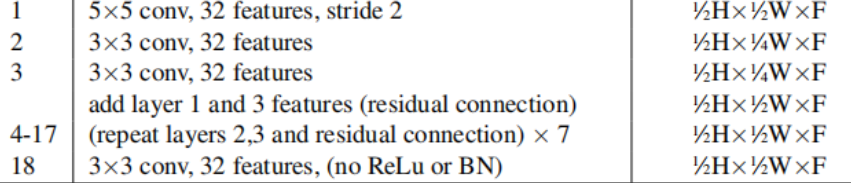

使用fiter size:5*5,stride:2的conv2d 将输入降维(1/2H,1/2W),self.conv0中实现.8层残差网络结构,进行Unary Features 特征提取.

imgl0 = F.relu(self.bn0(self.conv0(imgLeft)))

imgr0 = F.relu(self.bn0(self.conv0(imgRight)))

imgl_block = self.res_block(imgl0)

imgr_block = self.res_block(imgr0)

imgl1 = self.conv1(imgl_block)

imgr1 = self.conv1(imgr_block)

残差层的主要作用就是提取左右图像的‘unary features’,残差层具体实现

class BasicBlock(nn.Module): #basic block for Conv2d

def __init__(self,in_planes,planes,stride=1):

super(BasicBlock,self).__init__()

self.conv1=nn.Conv2d(in_planes,planes,kernel_size=3,stride=stride,padding=1)

self.bn1=nn.BatchNorm2d(planes)

self.conv2=nn.Conv2d(planes,planes,kernel_size=3,stride=1,padding=1)

self.bn2=nn.BatchNorm2d(planes)

self.shortcut=nn.Sequential()

def forward(self, x):

out=F.relu(self.bn1(self.conv1(x)))

out=self.bn2(self.conv2(out))

out+=self.shortcut(x)

out=F.relu(out)

return out

按w构建cost voume,形成一个维度height×width×(max disparity + 1)×feature size. 的代价体。我们通过将每一个Unary Features与它们对应的来自相反立体图像的Unary Features在每一个视差水平上连接起来,并将它们打包到4D体积中来实现这一点。

cost_volum = self.cost_volume(imgl1, imgr1)

------------------------------------------

def cost_volume(self,imgl,imgr):

B, C, H, W = imgl.size()

cost_vol = torch.zeros(B, C * 2, self.maxdisp , H, W).type_as(imgl)

for i in range(self.maxdisp):

if i > 0:

cost_vol[:, :C, i, :, i:] = imgl[:, :, :, i:]

cost_vol[:, C:, i, :, i:] = imgr[:, :, :, :-i]

else:

cost_vol[:, :C, i, :, :] = imgl

cost_vol[:, C:, i, :, :] = imgr

return cost_vol

剩下的下采样对着表看吧。

…

上采样decoder部分,残差层连接

# deconv

deconv3d = F.relu(self.debn1(self.deconv1(conv3d_block_4)) + conv3d_block_3)

deconv3d = F.relu(self.debn2(self.deconv2(deconv3d)) + conv3d_block_2)

deconv3d = F.relu(self.debn3(self.deconv3(deconv3d)) + conv3d_block_1)

deconv3d = F.relu(self.debn4(self.deconv4(deconv3d)) + conv3d_out)

恢复原尺寸,视差回归

# last deconv3d

self.deconv5 = nn.ConvTranspose3d(32, 1, 3, 2, 1, 1)

self.regression = DisparityRegression(maxdisp)

# last deconv3d

deconv3d = self.deconv5(deconv3d)

out = deconv3d.view( original_size)

prob = F.softmax(-out, 1)

disp1 = self.regression(prob)

return disp1

def forward(self, prob):

disp_score = self.disp_score.expand_as(prob).type_as(prob) # [B, D, H, W]

out = torch.sum(disp_score * prob, dim=1) # [B, H, W]

return out

损失函数优化器

criterion = SmoothL1Loss().to(device)

optimizer = optim.Adam(model.parameters(), lr=0.001)

342

342

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?