- onnx.helper provides the functions for building and assembling ONNX models:

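| Object | Description |
|---|---|
| ValueInfoProto | Tensor name, element data type, and tensor shape |
| NodeProto (operator node) | Operator name (optional), operator type, and the lists of input and output tensor names |
| GraphProto | Computation graph built from the tensor (value info) entries and the operator nodes |
| ModelProto | Wrapper object around a GraphProto |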

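| Function | Description |
|---|---|
| onnx.helper.make_tensor_value_info | Builds a ValueInfoProto |
| onnx.helper.make_tensor | Builds a TensorProto from the given arguments (unlike a ValueInfoProto, it can carry actual values) |
| onnx.helper.make_node | Builds a NodeProto (its input and output lists hold tensor names defined earlier) |
| onnx.helper.make_graph | Builds a GraphProto (its input and output lists hold the ValueInfoProto objects defined earlier) |
| make_model(graph, **kwargs) | Wraps a GraphProto into a ModelProto |
| make_sequence | Creates a sequence (SequenceProto) from the given values |
| make_operatorsetid | Creates an OperatorSetIdProto from a domain and a version |
| make_opsetid | Creates an OperatorSetIdProto from a domain and a version |
| make_model_gen_version | Extension of make_model that, when IR_VERSION is not specified, infers it on a best-effort basis |
| set_model_props | Sets the model's metadata_props from a dict |
| make_map | Creates a map (MapProto) from the given key-value arguments |
| make_attribute | Builds an AttributeProto from a key and a value |
| get_attribute_value | Returns the Python value stored in an AttributeProto |
| make_empty_tensor_value_info | Builds a ValueInfoProto that carries only a name |
| make_sparse_tensor | Builds a SparseTensorProto from a values tensor, an indices tensor, and dims |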

Extracting a sub-model

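```python
# https://onnx.ai/onnx/api/utils.html
import onnx

# Keep only the part of the graph between tensor '22' (new input) and tensor '28' (new output)
onnx.utils.extract_model('whole_model.onnx', 'partial_model.onnx', ['22'], ['28'])
```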

Adding extra outputs during extraction

```python
# Originally only tensor '31' was an output; listing '27' as well exposes its value as an extra output
onnx.utils.extract_model('whole_model.onnx', 'submodel_1.onnx', ['22'], ['27', '31'])
```

Shape inference

The following simple example shows how to use the onnx.shape_inference.infer_shapes function to infer the shapes of the input and output tensors of the nodes in an ONNX model:

```python
import onnx
import onnx.helper
import onnx.shape_inference

# Define a simple ONNX model.
# Add broadcasts its inputs, so both inputs use the compatible shape [N, 3].
x = onnx.helper.make_tensor_value_info('x', onnx.TensorProto.FLOAT, [None, 3])
y = onnx.helper.make_tensor_value_info('y', onnx.TensorProto.FLOAT, [None, 3])
add = onnx.helper.make_node('Add', inputs=['x', 'y'], outputs=['z'])
graph_def = onnx.helper.make_graph(
    [add],
    'test-model',
    [x, y],
    [onnx.helper.make_tensor_value_info('z', onnx.TensorProto.FLOAT, [None, 3])],
)
model_def = onnx.helper.make_model(graph_def, producer_name='onnx-example')

# Infer the shapes of the node input and output tensors
inferred_model = onnx.shape_inference.infer_shapes(model_def)

# Print the inference results
for idx, node in enumerate(inferred_model.graph.node):
    print("Node ", idx, " inputs: ", node.input)
    print("Node ", idx, " outputs: ", node.output)
    print("Node ", idx, " input shapes: ", [i.type.tensor_type.shape.dim for i in inferred_model.graph.input if i.name in node.input])
    print("Node ", idx, " output shapes: ", [o.type.tensor_type.shape.dim for o in inferred_model.graph.output if o.name in node.output])
```

In this example we define a simple ONNX model containing one Add node. Its inputs are two tensors of shape [N, 3] and its output is a tensor of shape [N, 3]. Add broadcasts its inputs, so they must have compatible shapes: a [N, 3] tensor and a [N, 2] tensor could not be added. The onnx.shape_inference.infer_shapes function runs inference on this model, and the loop prints the input and output tensor shapes of each node.

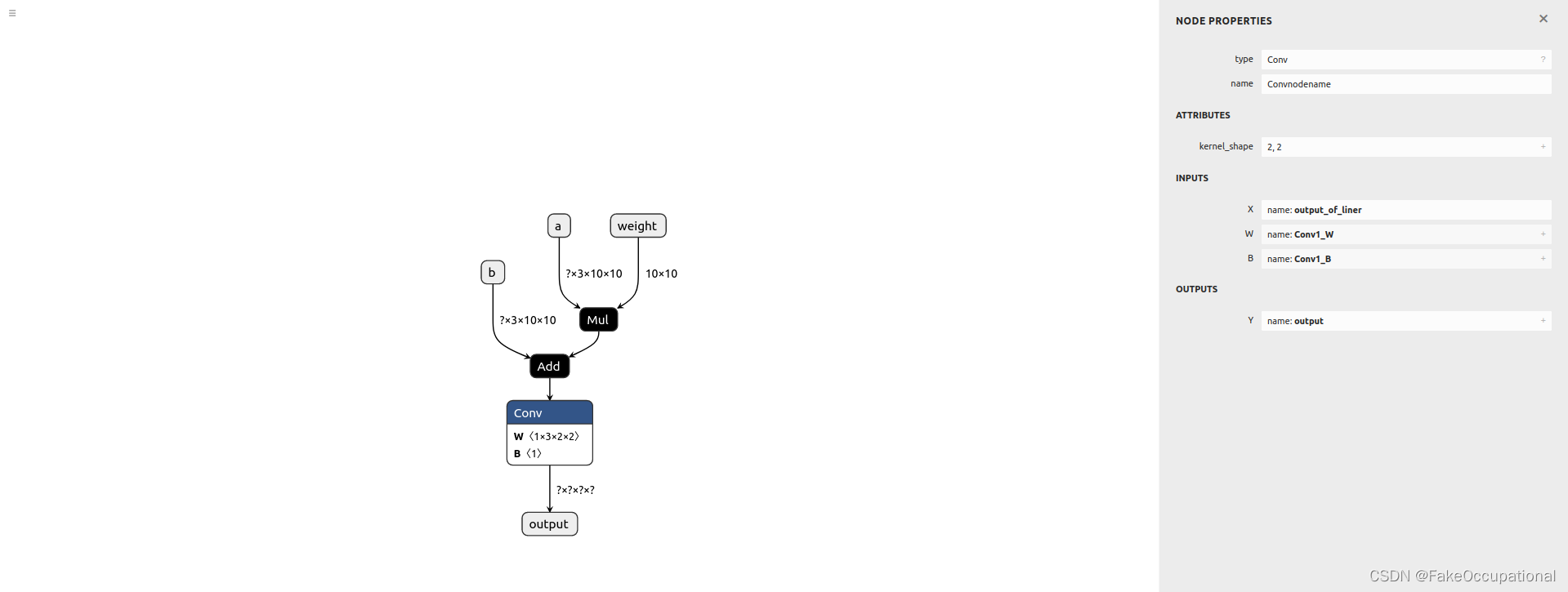

Usage (trying to build a model)

```python
import numpy as np
import onnx
from onnx import TensorProto, helper


def create_initializer_tensor(
    name: str,
    tensor_array: np.ndarray,
    data_type: int = onnx.TensorProto.FLOAT,
) -> onnx.TensorProto:
    # Wrap a NumPy array as a TensorProto (used for initializers such as weights)
    initializer_tensor = onnx.helper.make_tensor(
        name=name,
        data_type=data_type,
        dims=tensor_array.shape,
        vals=tensor_array.flatten().tolist())
    return initializer_tensor


# Graph inputs and output
a = helper.make_tensor_value_info('a', TensorProto.FLOAT, [None, 3, 10, 10])
x = helper.make_tensor_value_info('weight', TensorProto.FLOAT, [10, 10])
b = helper.make_tensor_value_info('b', TensorProto.FLOAT, [None, 3, 10, 10])
output = helper.make_tensor_value_info('output', TensorProto.FLOAT, [None, None, None, None])

# Mul: c = a * weight (weight broadcasts over the last two axes of a)
mul = helper.make_node('Mul', ['a', 'weight'], ['c'])

# Add: output_of_linear = c + b
add = helper.make_node('Add', ['c', 'b'], ['output_of_linear'])

# Conv: weight of shape (out_channels=1, in_channels=3, 2, 2) and bias of shape (1,)
conv1_W_initializer_tensor_name = "Conv1_W"
conv1_W_initializer_tensor = create_initializer_tensor(
    name=conv1_W_initializer_tensor_name,
    tensor_array=np.ones(shape=(1, 3, 2, 2)).astype(np.float32),
    data_type=onnx.TensorProto.FLOAT)

conv1_B_initializer_tensor_name = "Conv1_B"
conv1_B_initializer_tensor = create_initializer_tensor(
    name=conv1_B_initializer_tensor_name,
    tensor_array=np.ones(shape=(1,)).astype(np.float32),
    data_type=onnx.TensorProto.FLOAT)

conv_node = onnx.helper.make_node(
    name="Convnodename",  # Name is optional.
    op_type="Conv",
    # Inputs must follow the order in the operator definition (X, W, B):
    # https://github.com/onnx/onnx/blob/rel-1.9.0/docs/Operators.md#inputs-2---3
    inputs=['output_of_linear', conv1_W_initializer_tensor_name, conv1_B_initializer_tensor_name],
    outputs=["output"],
    kernel_shape=(2, 2),
    # pads=(1, 1, 1, 1),
)

# Graph and model
graph = helper.make_graph(
    [mul, add, conv_node], 'test', [a, x, b], [output],
    initializer=[conv1_W_initializer_tensor, conv1_B_initializer_tensor],
)
model = helper.make_model(graph)

# Validate, print, and save the model
onnx.checker.check_model(model)
print(model)
onnx.save(model, 'test.onnx')

# ---------- Evaluate with onnxruntime ----------
import onnxruntime

sess = onnxruntime.InferenceSession('test.onnx', providers=['CPUExecutionProvider'])
a = np.random.rand(1, 3, 10, 10).astype(np.float32)
b = np.random.rand(1, 3, 10, 10).astype(np.float32)
x = np.random.rand(10, 10).astype(np.float32)
output = sess.run(['output'], {'a': a, 'b': b, 'weight': x})[0]
print(output)  # shape (1, 1, 9, 9): a 2x2 kernel with no padding shrinks 10x10 to 9x9
```

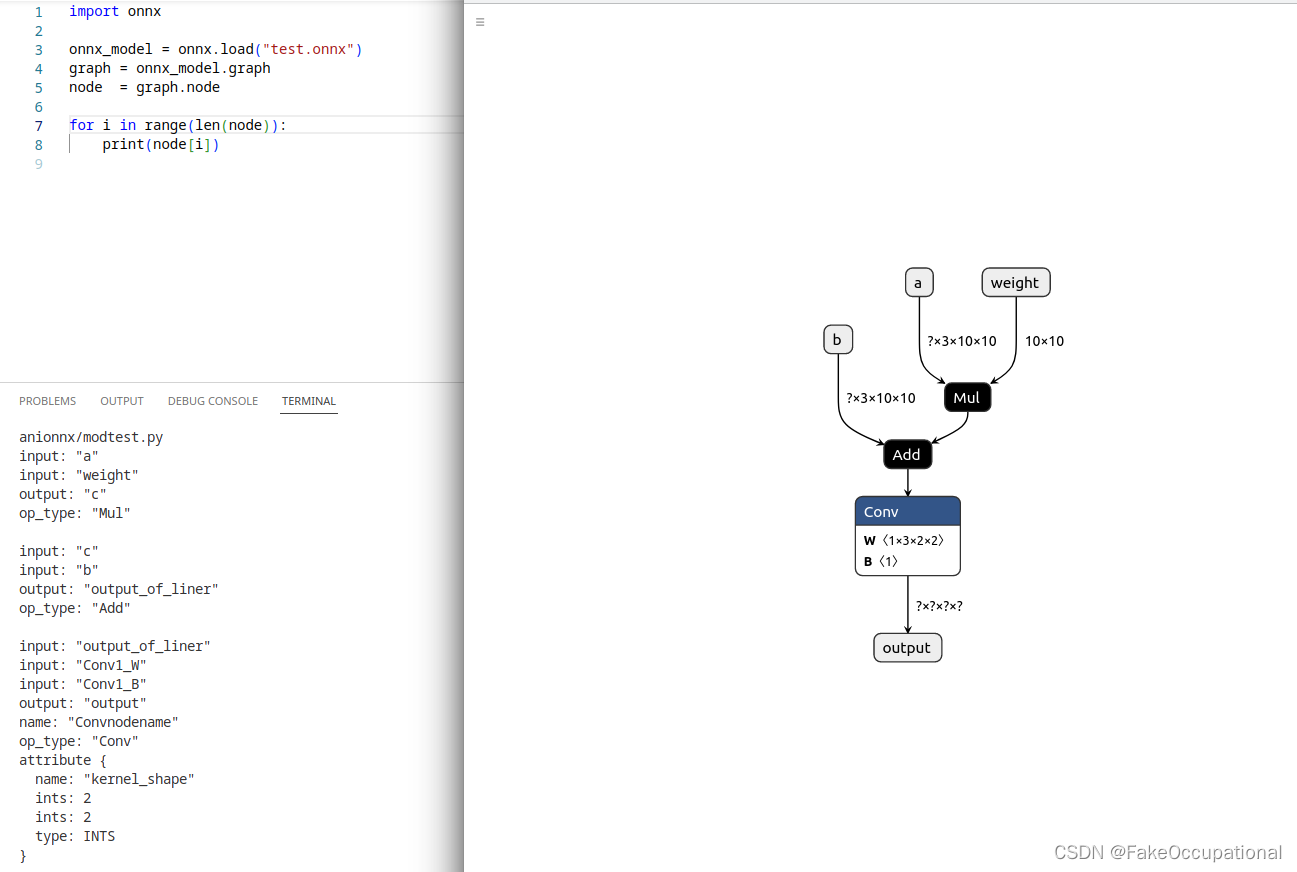
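To sanity-check the onnxruntime result independently, the same graph can be recomputed with plain NumPy. This is a sketch written for illustration, not part of the original code: reference_forward is a hypothetical helper that implements Mul, Add, and a stride-1, unpadded Conv (a cross-correlation, as ONNX Conv is defined) with a simple loop over output positions. With all-ones inputs, every Conv output is 12 values of 2 summed plus a bias of 1, i.e. 25.

```python
import numpy as np


def reference_forward(a, weight, b, conv_w, conv_b):
    # Mul: weight of shape (H, W) broadcasts over the last two axes of a (N, C, H, W)
    c = a * weight
    # Add: element-wise with b, which has the same shape as c
    linear = c + b
    n, _, h, w = linear.shape
    c_out, _, kh, kw = conv_w.shape
    out_h, out_w = h - kh + 1, w - kw + 1  # stride 1, no padding
    out = np.empty((n, c_out, out_h, out_w), dtype=linear.dtype)
    for i in range(out_h):
        for j in range(out_w):
            patch = linear[:, :, i:i + kh, j:j + kw]  # (N, C_in, kh, kw)
            # Cross-correlation: sum over C_in, kh, kw for each output channel -> (N, C_out)
            out[:, :, i, j] = np.tensordot(patch, conv_w, axes=([1, 2, 3], [1, 2, 3]))
    return out + conv_b.reshape(1, c_out, 1, 1)


# Same shapes and initializer values as the model above (all-ones Conv weight and bias)
conv_w = np.ones((1, 3, 2, 2), dtype=np.float32)
conv_b = np.ones((1,), dtype=np.float32)
ones = np.ones((1, 3, 10, 10), dtype=np.float32)
result = reference_forward(ones, np.ones((10, 10), dtype=np.float32), ones, conv_w, conv_b)
print(result.shape, result[0, 0, 0, 0])  # (1, 1, 9, 9) 25.0
```

Called with the random a, x, and b arrays from the onnxruntime script (x as the weight), reference_forward should agree with output up to float32 rounding, e.g. under np.allclose.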

Replacing a node

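```python
import onnx

onnx_model = onnx.load("model.onnx")
graph = onnx_model.graph
node = graph.node

# Print every node with its index to locate the one to replace
for i in range(len(node)):
    print(i)
    print(node[i])

# Indices 3372 and 3364 and the tensor names below are specific to this particular model
old_scale_node = node[3372]
# New node: onnx::Gather_4677 = onnx::Add_4676 + onnx::Add_4676
new_scale_node = onnx.helper.make_node('Add', ['onnx::Add_4676', 'onnx::Add_4676'], ['onnx::Gather_4677'])

# Swap the old node for the new one at the same position
graph.node.remove(old_scale_node)
graph.node.insert(3372, new_scale_node)

# Also remove node 3364 (an earlier index, so unaffected by the swap above)
graph.node.remove(node[3364])

onnx.checker.check_model(onnx_model)
onnx.save(onnx_model, 'out2.onnx')
```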
