0. Environment

Ubuntu 16.04

models-1.12.0

tensorflow-v1.12.0

Python 3.5

For building models, please refer to my other blog posts.

1. Download

This post uses the following implementation of AMSGrad: https://github.com/taki0112/AMSGrad-Tensorflow

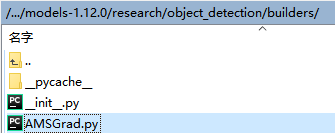

Copy AMSGrad.py to /models-1.12.0/research/object_detection/builders/

2. Modifications

2.1 optimizer_builder.py

/models-1.12.0/research/object_detection/builders/optimizer_builder.py

Line 20:

    from object_detection.builders.AMSGrad import AMSGrad

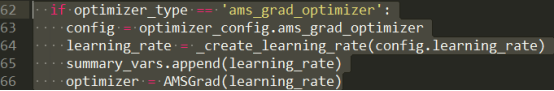

Lines 62-66:

    if optimizer_type == 'ams_grad_optimizer':
      config = optimizer_config.ams_grad_optimizer
      learning_rate = _create_learning_rate(config.learning_rate)
      summary_vars.append(learning_rate)
      optimizer = AMSGrad(learning_rate)
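To make it clearer what the optimizer built in this branch does with the learning rate, here is a minimal pure-Python sketch of a single AMSGrad update. It follows the update rule as it is usually stated (a running maximum over the second-moment estimate) and omits bias correction; the copied AMSGrad.py may differ in such details. The names `amsgrad_step` and `init_state`, and the default values for `beta1`, `beta2` and `epsilon`, are assumptions chosen for illustration and are not taken from that file.

```python
import math


def init_state(n):
    """Create zero-initialized optimizer slots for n parameters (hypothetical helper)."""
    return {"m": [0.0] * n, "v": [0.0] * n, "v_hat": [0.0] * n}


def amsgrad_step(params, grads, state, learning_rate=0.004,
                 beta1=0.9, beta2=0.99, epsilon=1e-8):
    """Apply one AMSGrad update in place and return params.

    Bias correction is omitted to keep the sketch short.
    """
    m, v, v_hat = state["m"], state["v"], state["v_hat"]
    for i, g in enumerate(grads):
        # Exponential moving averages of the gradient and the squared gradient.
        m[i] = beta1 * m[i] + (1.0 - beta1) * g
        v[i] = beta2 * v[i] + (1.0 - beta2) * g * g
        # The AMSGrad-specific part: the denominator never decreases.
        v_hat[i] = max(v_hat[i], v[i])
        params[i] -= learning_rate * m[i] / (math.sqrt(v_hat[i]) + epsilon)
    return params


# Toy usage: minimize f(x) = x^2, whose gradient is 2x, starting from x = 1.0.
params = [1.0]
state = init_state(1)
for _ in range(200):
    amsgrad_step(params, [2.0 * params[0]], state, learning_rate=0.01)
```

In the builder code above only `learning_rate` is passed, so every other hyperparameter stays at whatever default AMSGrad.py defines.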

2.2 optimizer.proto

/models-1.12.0/research/object_detection/protos/optimizer.proto

Lines 9-18:

    message Optimizer {
      oneof optimizer {
        RMSPropOptimizer rms_prop_optimizer = 1;
        MomentumOptimizer momentum_optimizer = 2;
        AdamOptimizer adam_optimizer = 3;
        AMSGrad ams_grad_optimizer = 6;  // Added
      }
      optional bool use_moving_average = 4 [default = true];
      optional float moving_average_decay = 5 [default = 0.9999];
    }

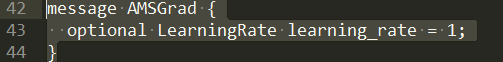

Lines 42-44:

    message AMSGrad {
      optional LearningRate learning_rate = 1;
    }

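3. Compile with protoc

In the /models-1.12.0/research/ directory, run the following again:

    ./protoc object_detection/protos/*.proto --python_out=.

optimizer_pb2.py is generated by protoc, so if something goes wrong, look at the line reported in the error message and fix it by comparing it with the existing entries in the .proto file.

If the command prints nothing, it succeeded.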
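Besides watching for a silent protoc run, one way to confirm that the regenerated module picked up the change is to look up the new field on the message descriptor. The sketch below is only an illustration: `has_field` and `check_generated_module` are hypothetical helper names, and the check assumes that /models-1.12.0/research is on PYTHONPATH so that `object_detection.protos.optimizer_pb2` can be imported.

```python
def has_field(message_cls, field_name):
    """Return True if the protobuf message class declares field_name."""
    # DESCRIPTOR.fields_by_name maps each declared field name to its descriptor.
    return field_name in message_cls.DESCRIPTOR.fields_by_name


def check_generated_module():
    """Check the regenerated optimizer_pb2 (assumes it is importable)."""
    from object_detection.protos import optimizer_pb2
    return (has_field(optimizer_pb2.Optimizer, "ams_grad_optimizer")
            and hasattr(optimizer_pb2, "AMSGrad"))
```

Calling `check_generated_module()` from a Python shell started in the research directory should return True once the .proto edits and the protoc run are both in place.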

4. Usage (config)

Since only the learning rate needs to be set, the config is fairly simple:

    optimizer {
      ams_grad_optimizer: {
        learning_rate: {
          exponential_decay_learning_rate {
            initial_learning_rate: 0.004
            decay_steps: 800720
            decay_factor: 0.95
          }
        }
      }
    }
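To see what these three values mean in practice, the sketch below reproduces the usual exponential decay formula, initial_learning_rate * decay_factor ^ (step / decay_steps), in plain Python. The function name `exponential_decay` here is a local stand-in, and `staircase=True` is an assumption about the default behaviour in this version of the API (the exponent is rounded down to a whole number); pass `staircase=False` for the smooth variant.

```python
def exponential_decay(initial_learning_rate, global_step, decay_steps,
                      decay_factor, staircase=True):
    """Learning rate at global_step under exponential decay."""
    if staircase:
        # Decay in discrete jumps: once every decay_steps steps.
        exponent = global_step // decay_steps
    else:
        # Decay continuously with the step count.
        exponent = global_step / decay_steps
    return initial_learning_rate * decay_factor ** exponent


# Values from the config above.
lr_start = exponential_decay(0.004, 0, 800720, 0.95)
lr_after_one_period = exponential_decay(0.004, 800720, 800720, 0.95)
```

With decay_steps set to 800720 and staircase decay, the rate would stay at 0.004 until step 800720 and only then be multiplied by 0.95.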

