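The formulas were covered at the end of the previous post (linked), so I won't go over them again here; a screenshot is included below.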

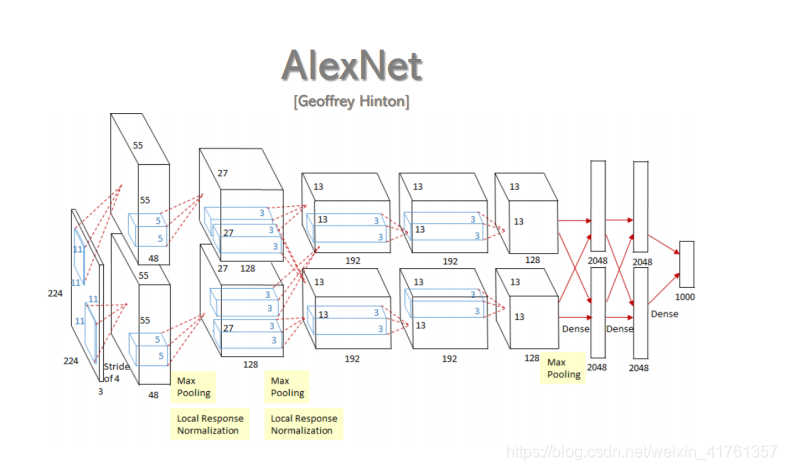

This post uses AlexNet as an example and computes its parameter count and its number of floating-point operations (FLOPs).

Computing the parameter count

1. First, recall the formula for the parameter count:

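If the input is C (channels) × H × W and the convolution kernel is C (in channels) × M (out channels) × K × K, then:

parameters = [(K × K) × C] × M + M

The first term counts the weights (one K × K × C filter per output channel) and the trailing + M counts the biases, one per output channel.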
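As a quick sanity check, the formula can be written as a small Python helper. This is only a minimal sketch: the function name `conv_params` and the example layer shape (an 11 × 11 kernel, 3 input channels, 96 output channels) are assumptions chosen for illustration, not values taken from this post's figures.

```python
def conv_params(k: int, c_in: int, m_out: int, bias: bool = True) -> int:
    """Parameter count of a K x K convolution layer.

    Implements parameters = [(K * K) * C] * M + M from the text.
    """
    weights = (k * k) * c_in * m_out   # one K x K x C filter per output channel
    biases = m_out if bias else 0      # one bias per output channel (the "+ M" term)
    return weights + biases


# Hypothetical layer shape, used for illustration only
example = conv_params(k=11, c_in=3, m_out=96)
print(example)  # 34944
```

Note that the input height and width (H and W) do not appear in the function: they affect the FLOPs of a convolution, not its parameter count.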

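2. Analyze the AlexNet architecture

The network performs five convolution operations; the kernel size of each layer and the size of its output feature map are given in the figure below: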

Parameters of each layer:

parameters = [(K × K) × C] × M + M
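The per-layer calculation can be sketched in Python as a loop over the five convolution layers. The layer shapes below are an assumption: they follow the commonly cited single-tower AlexNet configuration and ignore the two-GPU grouping of the original network, so they may not match the figure this post refers to. The names `ASSUMED_ALEXNET_CONVS` and `layer_params` are likewise made up for this sketch.

```python
# (name, K, C_in, M_out): ASSUMED shapes, not read from this post's figure
ASSUMED_ALEXNET_CONVS = [
    ("CONV1", 11, 3, 96),
    ("CONV2", 5, 96, 256),
    ("CONV3", 3, 256, 384),
    ("CONV4", 3, 384, 384),
    ("CONV5", 3, 384, 256),
]


def layer_params(k: int, c: int, m: int) -> int:
    """parameters = [(K * K) * C] * M + M (weights plus one bias per output channel)."""
    return (k * k) * c * m + m


per_layer = {name: layer_params(k, c, m) for name, k, c, m in ASSUMED_ALEXNET_CONVS}
total = sum(per_layer.values())

for name, count in per_layer.items():
    print(f"{name}: {count:,}")
print(f"Total (conv layers only): {total:,}")
```

Under these assumed shapes the convolution layers alone account for roughly 3.7 million parameters; with grouped convolutions, the input-channel count C of the grouped layers would be halved and the totals would be smaller.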

CONV1:
