系列文章目录

文章目录

前言

YOLOv12 来了,同样由ultralytics公司出品(这更新速度可是够快的了)

本次主要改动依然在模型方面:

1) 简单有效的区域注意力机制(area-attention)

2)高效的聚合网络R-ELAN

paper:https://arxiv.org/pdf/2502.12524

github:https://github.com/sunsmarterjie/yolov12

一、area-attenion

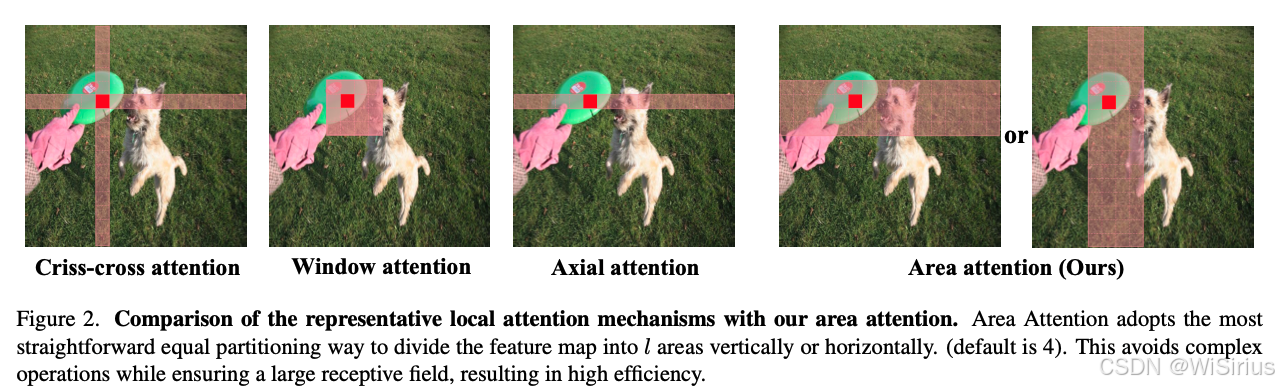

注意机制天生就比卷积神经网络(CNN)慢, 首先自注意力的运算的复杂度随输入序列长度呈二次增长,另外大多数基于注意力的视觉Transformer由于其复杂的设计,逐渐累积计算成本。为了能够缩小注意力机制产生的计算成本YOLOv12提出了area attention运算。其将分辨率为(H, W)的feature map划分为大小为(H/l, W)或(H, W/l)的l段。默认值l设置为4,将接受域减少到原来的1/4,但仍然保持较大的接受域。

class AAttn(nn.Module):

"""

Area-attention module with the requirement of flash attention.

Attributes:

dim (int): Number of hidden channels;

num_heads (int): Number of heads into which the attention mechanism is divided;

area (int, optional): Number of areas the feature map is divided. Defaults to 1.

Methods:

forward: Performs a forward process of input tensor and outputs a tensor after the execution of the area attention mechanism.

Examples:

>>> import torch

>>> from ultralytics.nn.modules import AAttn

>>> model = AAttn(dim=64, num_heads=2, area=4)

>>> x = torch.randn(2, 64, 128, 128)

>>> output = model(x)

>>> print(output.shape)

Notes:

recommend that dim//num_heads be a multiple of 32 or 64.

"""

def __init__(self, dim, num_heads, area=1):

"""Initializes the area-attention module, a simple yet efficient attention module for YOLO."""

super().__init__()

self.area = area

self.num_heads = num_heads

self.head_dim = head_dim = dim // num_heads

all_head_dim = head_dim * self.num_heads

self.qkv = Conv(dim, all_head_dim

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

4947

4947

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?