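CVTNet takes as input the 2D images produced by several kinds of projections of a LiDAR point cloud, fuses the information from these views with cross transformers, and extracts highly distinctive descriptors for the point cloud, which can be used for SLAM loop closure detection or global localization. In addition, the global descriptors generated by CVTNet are invariant to the vehicle's yaw rotation, which improves place recognition accuracy when the same place is seen from different viewpoints. The experiments show that CVTNet reaches SOTA place recognition accuracy and runs fast enough to meet the real-time requirements of autonomous driving.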
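To make the yaw-invariance idea concrete, here is a minimal NumPy sketch of a range image projection. It is not code from the CVTNet repository: the function names `range_image`, `rotate_yaw`, and `toy_descriptor`, the image size, and the vertical field of view are all assumptions chosen for illustration. The sketch shows that rotating the scan about the vertical axis only shifts the columns of the range image circularly, so any pooling that ignores column order yields the same vector. The row-wise pooling used here is a toy stand-in for that property, not the network's actual layers.

```python
import numpy as np


def range_image(points, h=32, w=360, fov_up_deg=15.0, fov_down_deg=-15.0):
    """Project an (N, 3) point cloud to an (h, w) range image (0 = empty pixel).

    Image size and field of view are illustrative assumptions.
    """
    fov_up, fov_down = np.radians(fov_up_deg), np.radians(fov_down_deg)
    r = np.linalg.norm(points, axis=1)
    keep = r > 1e-6                      # drop points at the sensor origin
    points, r = points[keep], r[keep]

    yaw = np.arctan2(points[:, 1], points[:, 0])
    pitch = np.arcsin(np.clip(points[:, 2] / r, -1.0, 1.0))

    # Column index grows with yaw; row index grows as pitch decreases.
    col = np.floor((yaw + np.pi) / (2 * np.pi) * w).astype(int) % w
    row = np.floor((fov_up - pitch) / (fov_up - fov_down) * h).astype(int)

    valid = (row >= 0) & (row < h)       # discard points outside the vertical FOV
    row, col, r = row[valid], col[valid], r[valid]

    # Write far points first so the nearest point in each pixel wins.
    order = np.argsort(-r)
    img = np.zeros((h, w), dtype=np.float64)
    img[row[order], col[order]] = r[order]
    return img


def rotate_yaw(points, theta):
    """Rotate an (N, 3) point cloud by theta radians about the z axis."""
    c, s = np.cos(theta), np.sin(theta)
    rot = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    return points @ rot.T


def toy_descriptor(img):
    """Toy yaw-invariant vector: per-row max and mean over all columns."""
    return np.concatenate([img.max(axis=1), img.mean(axis=1)])
```

Because the pooling runs over the whole column axis, a circular column shift cannot change its result. A bird's eye view projection behaves differently under yaw (the image rotates instead of shifting), which is one reason the two views carry complementary information.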

Code: https://github.com/BIT-MJY/CVTNet

Paper: https://arxiv.org/abs/2302.01665

The abstract reads:

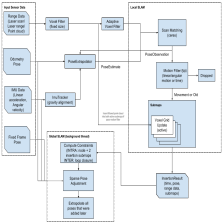

LiDAR-based place recognition (LPR) is one of the most crucial components of autonomous vehicles to identify previously visited places in GPS-denied environments. Most existing LPR methods use mundane representations of the input point cloud without considering different views, which may not fully exploit the information from LiDAR sensors. In this paper, we propose a cross-view transformer-based network, dubbed CVTNet, to fuse the range image views (RIVs) and bird’s eye views (BEVs) generated from the LiDAR data. It extracts correlations within the views themselves using intra-transformers and between the two different views using inter-transformers. Based on that, our proposed CVTNet generates a yaw-angle-invariant global descriptor for each laser scan end-to-end online and retrieves previously seen places by descriptor matching between the current query scan and the pre-built database. We evaluate our approach on three datasets collected with different sensor setups and environmental conditions. The experimental results show that our method outperforms the state-of-the-art LPR methods with strong robustness to viewpoint changes and long-time spans. Furthermore, our approach has a good real-time performance that can run faster than the typical LiDAR frame rate.

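In plain terms:

LiDAR-based place recognition (LPR) lets an autonomous vehicle recognize previously visited places when GPS is unavailable. Most existing LPR methods rely on a single, ordinary representation of the input point cloud and ignore other views, so they may not use all the information the LiDAR provides. CVTNet is a cross-view transformer network that fuses range image views (RIVs) and bird's eye views (BEVs) generated from the same LiDAR data: intra-transformers capture correlations within each view, and inter-transformers capture correlations between the two views. From the fused features, the network produces a yaw-angle-invariant global descriptor for each scan, end to end and online, and retrieves previously seen places by matching the query scan's descriptor against a pre-built database. The method is evaluated on three datasets collected with different sensor setups and environmental conditions; it outperforms state-of-the-art LPR methods, is robust to viewpoint changes and long time spans, and runs faster than the typical LiDAR frame rate.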
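The retrieval step mentioned in the abstract (matching the query descriptor against a pre-built database) can be sketched as a nearest-neighbor search. This is only an illustration under stated assumptions: the function name `retrieve_places`, the Euclidean distance metric, and the brute-force search are choices made here, not details confirmed by the paper or the repository.

```python
import numpy as np


def retrieve_places(query, database, top_k=1):
    """Return (indices, distances) of the top_k database descriptors nearest to query.

    query:    (D,) global descriptor of the current scan
    database: (M, D) descriptors of previously visited places
    Euclidean distance and brute-force search are illustrative assumptions.
    """
    dists = np.linalg.norm(database - query[None, :], axis=1)
    idx = np.argsort(dists)[:top_k]      # closest candidates first
    return idx, dists[idx]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    database = rng.normal(size=(100, 256))   # 256 is an arbitrary descriptor size
    # A revisit of place 5 yields a slightly perturbed copy of its descriptor.
    query = database[5] + 0.01 * rng.normal(size=256)
    idx, dists = retrieve_places(query, database, top_k=3)
    print(idx, dists)
```

For a loop closure check, a candidate would typically be accepted only if its distance falls below a threshold tuned on validation data; for large databases, a k-d tree or an approximate nearest-neighbor index can replace the brute-force scan.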
