写在前面的话:建议先看完本专栏的前三篇文章再看这篇

本文将介绍如何了查找点云中每个点的邻近点并统计个数

取点云文件

我给了个demo文件,在input文件夹下面,其每行为一个点,每行分别有4个数:index, x, y, z

0 0.190818 2.151146 1.516178

1 0.182662 2.208185 1.506151

2 0.212130 2.120995 1.519654

3 0.195404 2.216945 1.554745

4 0.177847 2.420602 1.464208

5 0.108176 2.408980 1.471528

6 0.285591 2.271129 1.526475

...........................

82881 2.856293 4.802210 0.886675

82882 2.893785 4.858056 0.938935读取函数如下:

float* read_pc(string filename, float xyz[]){

ifstream fin;

fin.open(filename);

if(!fin) {

cout << filename << " file could not be opened\n";

exit(0);

}

int idx;

float x, y, z;

while(!fin.eof()) {

fin >> idx >> x >> y >> z;

xyz[idx*3 + 0] =x;

xyz[idx*3 + 1] =y;

xyz[idx*3 + 2] =z;

}

return xyz;

}主函数

主函数中我们需要设置计算邻近点的辐射距离,如下代码中所示,与所选点距离小于0.2m的即可以视为所选点的邻近点

int main() {

string inputFileName = "/home/bruno/Documents/Clion_Project/cuda_demo/demo3_AdjacentPoint/input/pc.txt";

const int point_num = 82883;

const float radius = 0.2;

float xyz[point_num*3];

read_pc(inputFileName, xyz);

checkNearPoints(point_num, xyz, radius);

return 0;

}检查临近点

首先我们要清楚我们的输入是什么: 点云个数,点云坐标,以及辐射距离; 其次我们要理清楚如何去利用cuda加速计算。最简单的想法:我们有多少个点就开启多少个线程,这样一次就可以把所有的点的临近点算出来。该函数的编写思路与前面几篇文章总结的一样,这里不多说。

void checkNearPoints(const int point_num, float xyz[], const float radius){

dim3 blocks(DIVUP(point_num, THREADS_PER_BLOCK));

dim3 threads(THREADS_PER_BLOCK);

int * ptsCnt_h;

ptsCnt_h = (int *)malloc(point_num * sizeof(int));

// define gpu variable

int * point_num_d;

float * xyz_d;

float * radius_d;

int * ptsCnt_d; //mark the number of adjacent points

int * adj_point_d; //mark the adjacent points, 1 is true,0 is false

//generate gpu ram

CUDA_ERR_CHK( cudaMalloc((void **) &point_num_d, sizeof(int)));

CUDA_ERR_CHK(cudaMalloc((void **) &xyz_d, 3*point_num*sizeof(float)));

CUDA_ERR_CHK(cudaMalloc((void **) &radius_d, sizeof(float)));

CUDA_ERR_CHK(cudaMalloc((void **) &ptsCnt_d, point_num*sizeof(int)));

// copy host to device

CUDA_ERR_CHK(cudaMemcpy(point_num_d, &point_num, sizeof(int), cudaMemcpyHostToDevice));

CUDA_ERR_CHK(cudaMemcpy(xyz_d, xyz, 3*point_num*sizeof(float), cudaMemcpyHostToDevice));

CUDA_ERR_CHK(cudaMemcpy(radius_d, &radius, sizeof(float), cudaMemcpyHostToDevice));

// start device kernel

checkNearPoints_cuda<<<blocks, threads>>>(point_num_d, xyz_d, radius_d, ptsCnt_d);

// copy device to host

CUDA_ERR_CHK(cudaMemcpy(ptsCnt_h, ptsCnt_d, point_num*sizeof(float), cudaMemcpyDeviceToHost));

// release gpu ram

CUDA_ERR_CHK(cudaFree(point_num_d));

CUDA_ERR_CHK(cudaFree(xyz_d));

CUDA_ERR_CHK(cudaFree(radius_d));

CUDA_ERR_CHK(cudaFree(ptsCnt_d));

for (int i =0; i< point_num; i++){

printf("index: %d adjacent point number: %d \n",i, ptsCnt_h[i]);

}

}CUDA检查函数

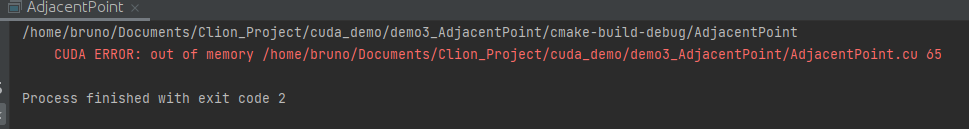

由于我们直接调用CUDA的函数(cudaMencpy cudaMalloc 等),其不会直接返回准确的报错信息,所以我们需要写一个报错检索函数,这样我们可以知道出错的原因:

// https://stackoverflow.com/a/14038590

#define CUDA_ERR_CHK(code) { cuda_err_chk((code), __FILE__, __LINE__); }

inline void cuda_err_chk(cudaError_t code, const char *file, int line, bool abort = true) {

if (code != cudaSuccess) {

fprintf(stderr, "\tCUDA ERROR: %s %s %d\n", cudaGetErrorString(code), file, line);

if (abort) exit(code);

}

}添加后我们可以看到如下报错:直接告诉我们在65行,内存溢出了。(说明你的GPU卡内存不够,可以换卡, 或者对点进行下采样)

核函数

核函数利用线程ID做检索,通过for循环去遍历所选点外的每一个点。通过计算每个点与所选距离,再与辐射距离进行比较,小于辐射距离的视为邻近点。

__global__ void checkNearPoints_cuda(int *point_num_d, float *xyz_d, float *eps_d, int *ptsCnt_d){

int th_index = blockIdx.x*blockDim.x + threadIdx.x;

if (th_index >= *point_num_d) return ;

ptsCnt_d[th_index] = 0; // the number of adjacent points

float o_x = xyz_d[th_index * 3 + 0];

float o_y = xyz_d[th_index * 3 + 1];

float o_z = xyz_d[th_index * 3 + 2];

for (int k =0; k< *point_num_d; k++){

if(th_index==k) continue;

float k_x = xyz_d[k * 3 + 0];

float k_y = xyz_d[k * 3 + 1];

float k_z = xyz_d[k * 3 + 2];

float l2 = sqrt((k_x-o_x)*(k_x-o_x)+(k_y-o_y)*(k_y-o_y)+(k_z-o_z)*(k_z-o_z));

if (l2 <= *eps_d) {

ptsCnt_d[th_index]= ptsCnt_d[th_index] + 1;

}

}

}CmakeList

Cmakelist我这次给出了一个通用的模板,基本每个demo都可以用,还解决了无法核嵌套的问题。注意要根据自己CUDA安装路径小小更改下下面的代码。

cmake_minimum_required(VERSION 3.19)

project(AdjacentPoint)

set(CMAKE_CXX_STANDARD 14)

# packages

find_package(CUDA REQUIRED)

if(${CUDA_FOUND})

set(CUDA_SOURCE_PROPERTY_FORMAT OBJ)

set(CUDA_SEPARABLE_COMPILATION ON)

include_directories(${CUDA_INCLUDE_DIRS})

set(CUDA_PROPAGATE_HOST_FLAGS OFF)

# set(CUDA_VERBOSE_BUILD ON)

SET(CUDA_NVCC_FLAGS ${CUDA_NVCC_FLAGS};-gencode arch=compute_75,code=sm_75;-std=c++14; -rdc=true; -lcudadevrt)

link_directories(/usr/local/cuda/lib64)

MESSAGE(STATUS "found cuda")

else(${CUDA_FOUND})

MESSAGE(STATUS "cuda not found!")

endif(${CUDA_FOUND})

file(GLOB CURRENT_SOURCES *.cpp *.cu)

file(GLOB_RECURSE CURRENT_HEADERS *.h *.hpp *.cuh)

source_group("Source" FILES ${CURRENT_SOURCES})

source_group("Include" FILES ${CURRENT_HEADERS})

CUDA_ADD_EXECUTABLE(AdjacentPoint ${CURRENT_HEADERS} ${CURRENT_SOURCES} ${CUDA_INCLUDE_DIRS})

target_link_libraries(AdjacentPoint /usr/local/cuda/lib64/libcudadevrt.a)

打印结果

结果如下图所示。 如果想知道邻近点的索引,可以查看adj_point_d变量,该变量很占内存,如果不需要还是注释掉。

最后,完整代码以及其他demo可以在我的github中获取(不定期更新):

GitHub - weiguangzhao/cuda_demo: CUDA DEMO![]() https://github.com/weiguangzhao/cuda_demo

https://github.com/weiguangzhao/cuda_demo

3650

3650

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?