@article{liu2023sgfusion,

title={SGFusion: A saliency guided deep-learning framework for pixel-level image fusion},

author={Liu, Jinyang and Dian, Renwei and Li, Shutao and Liu, Haibo},

journal={Information Fusion},

volume={91},

pages={205–214},

year={2023},

publisher={Elsevier}

}

| 论文级别:SCI A1 TOP |

| 影响因子:18.6 |

文章目录

📖论文解读

作者提出了一种【显著性引导】的【端到端】【通用】【像素级】图像融合框架SGFusion,可用于多模态图像融合(IVIF、医学MRI+PET)和多曝光图像融合任务。

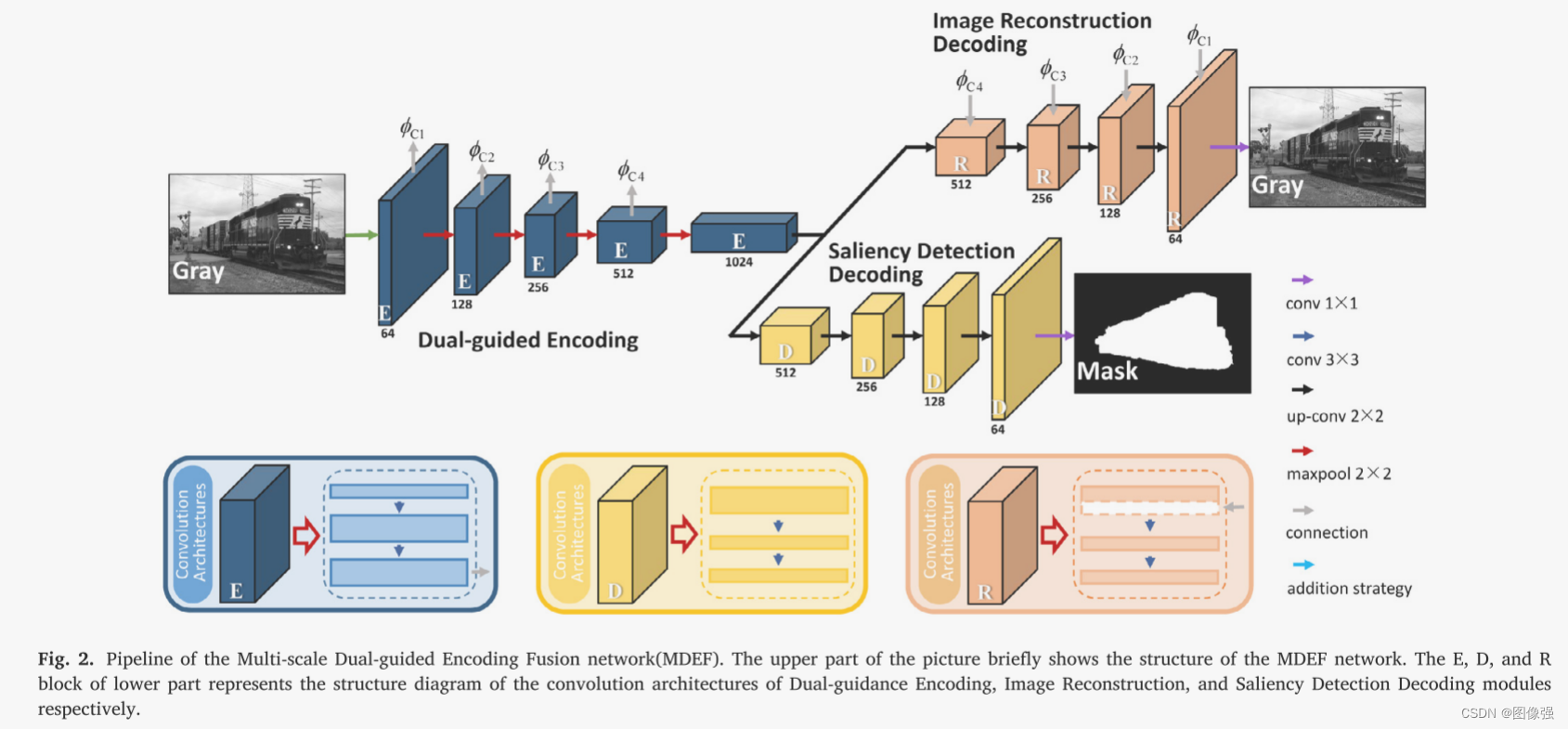

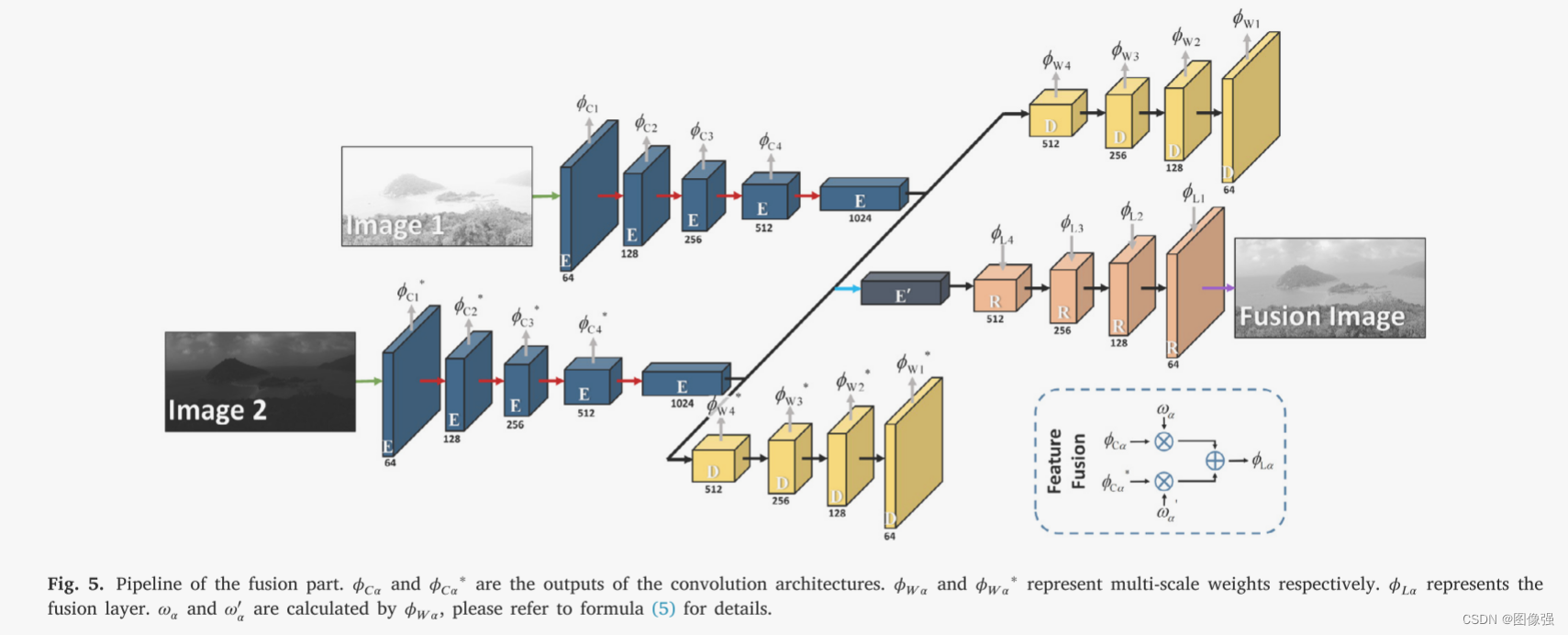

该网络采用双导编码、图像重建解码和显著性检测解码过程,同时从图像中提取不同尺度的特征映射和显著性映射。将显著性检测解码作为融合权值,将图像重构解码的特征合并生成融合图像,可以有效地从源图像中提取有意义的信息,使融合图像更符合视觉感知。

🔑关键词

Pixel-level image fusion 像素级图像融合

Fusion weight 融合权重

Deep learning 深度学习

Saliency detection 显著性检测

💭核心思想

训练的时候是单编码器(提取特征)双解码器(其实就是特征重构解码器和Mask解码器,用于重构源图像和掩膜)

🎖️本文贡献

- 提出了一种像素级通用图像融合模型,只需要训练一个模型,即可实现多任务图像融合

- 利用显著性检测来指导图像编码过程,利用显著性检测的特征作为融合权值来实现图像解码过程

- SOTA

🪅相关背景知识

- 深度学习

- 神经网络

- 图像融合

🪢网络结构

🪢训练部分

对于训练部分,构建【多尺度双导编码融合网络】(multi-scale dual-guided encoding fusion, MDEF)作为整个框架,MDEF主要包括:

- dual-guided encoding 双指导编码 下图中蓝色模块

- image reconstruction decoding 图像重构编码 下图中黄色模块

- saliency detection decoding 显著检测解码 下图中粉色模块

整体结构说白了就是单编码器双解码器,双解码器一个重构源图像,一个生成显著性掩膜

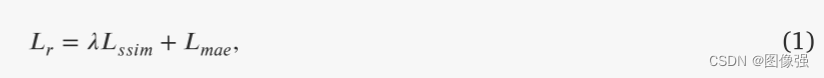

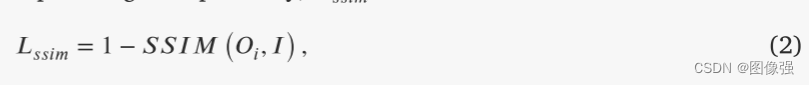

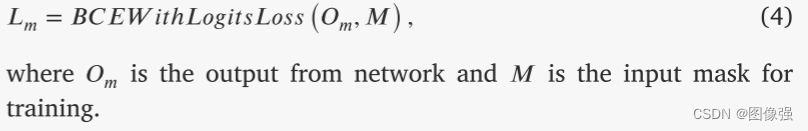

既然是双解码器,因此就会有两个损失

重构损失Lr和掩膜损失Lm

MAE,Mean Absolute Error,平均绝对误差

Binary Cross Entropy(BCE),二值交叉熵

🪢融合部分

对于融合部分

利用训练部分的结构重构双输入网络,并将网络提取的显著特征作为权重进行图像融合,生成最终的融合结果

作者提出的网络结构如下所示。

这里特征融合作者选择了加法策略,融合以后就到E‘那个地方了,然后使用特征重构模块生成融合图像

诶?那前面说了半天的D,显著性检测解码哪去了???

问得好

咱们先看原文

3.2.1节说了什么?

又是一个好问题,作者想表达的其实就是:

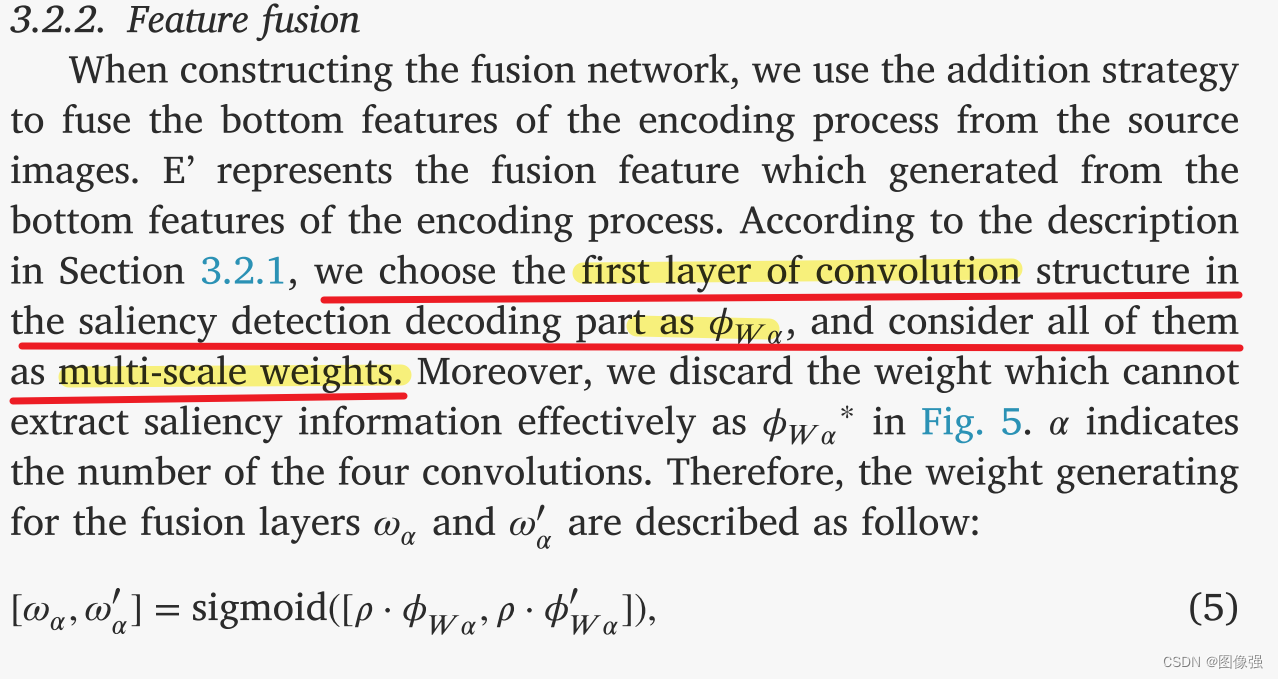

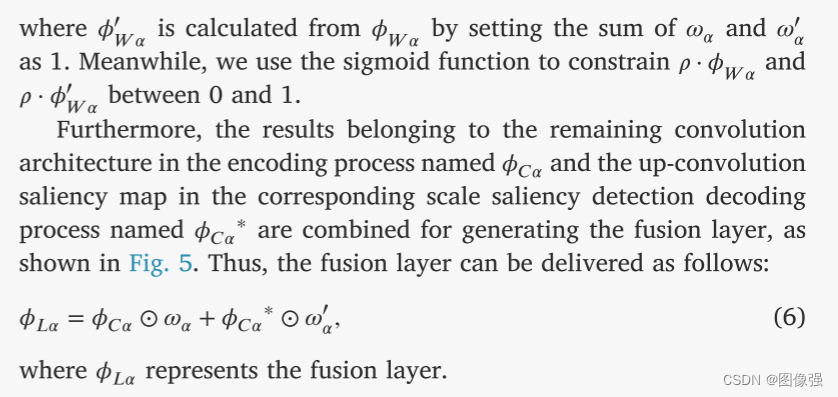

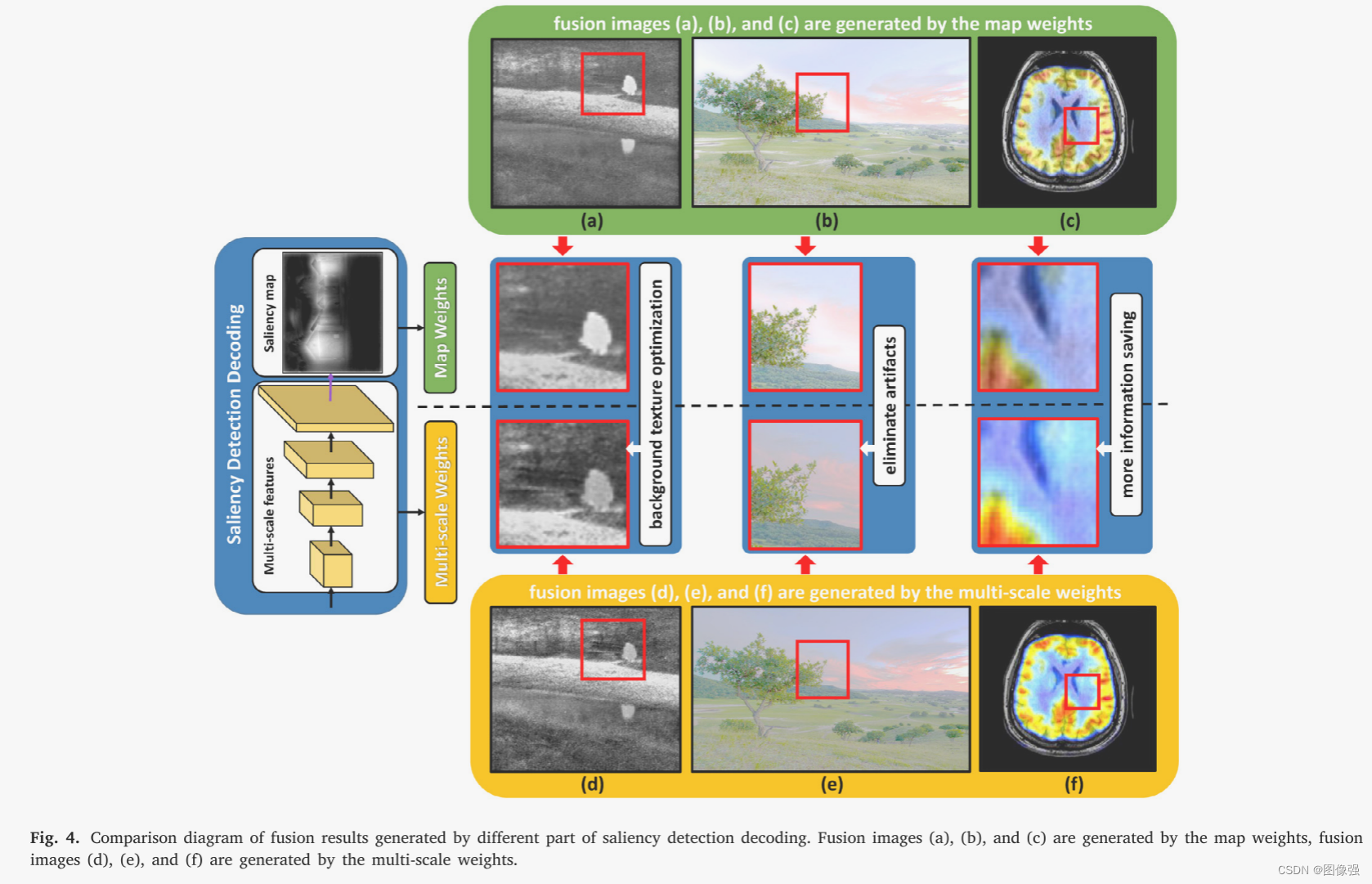

不同类型的源图像对各种融合任务都有限制,因此有必要选择合适的源图像来生成融合权值。此外,权值设计的另一个关键点是选择显著性检测解码的哪一部分生成权值。

其中,显著性检测解码生成的特征有两个部分:多尺度特征和生成的显著性图(权重图)

经过对比,作者只使用多尺度特征来生成融合层,可以使融合方法兼顾重要的区域信息和环境信息,避免产生伪影

作者选择显著性检测解码的第一层卷积层计算多尺度权值,同时舍弃了不能有效提取显著性信息的权重

📉损失函数

上文已介绍

🔢数据集

测试数据集:

- TNO

- DOI:10.1016/j.infrared.2017.02.005

训练数据集:

对于通用的IF模型,作者只使用了一个数据集训练,这个数据集就是EC-SSD

这是一个显著性分割数据集

图像融合数据集链接

[图像融合常用数据集整理]

🎢训练设置

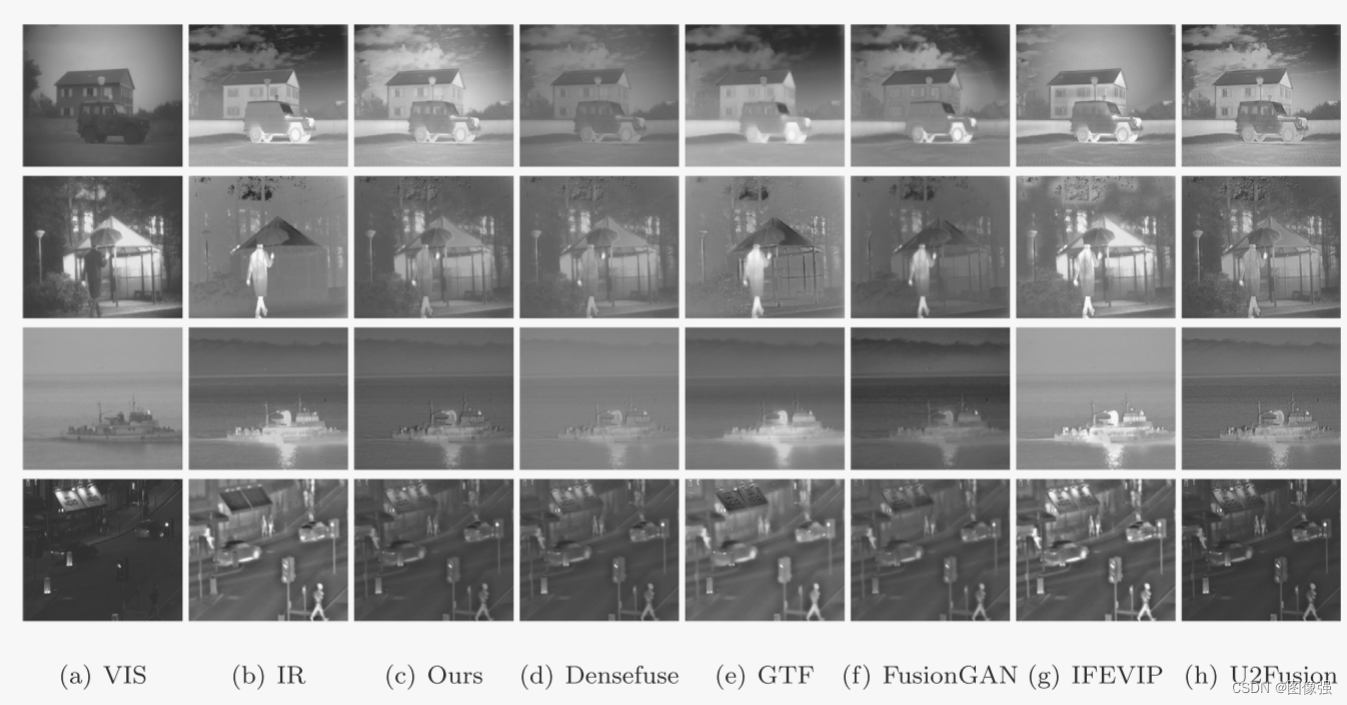

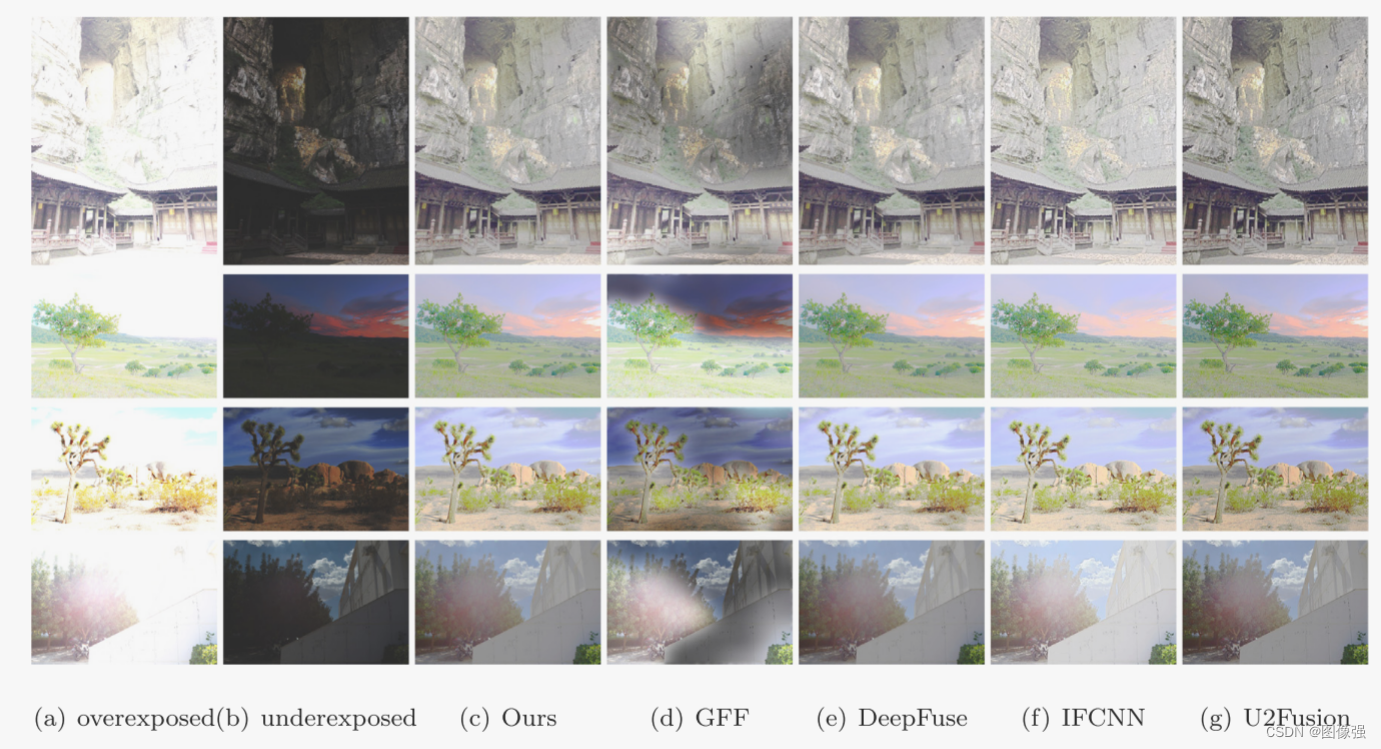

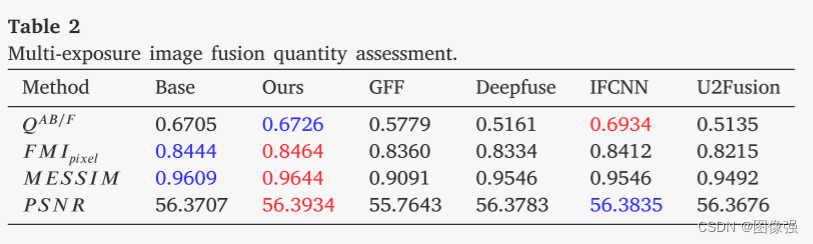

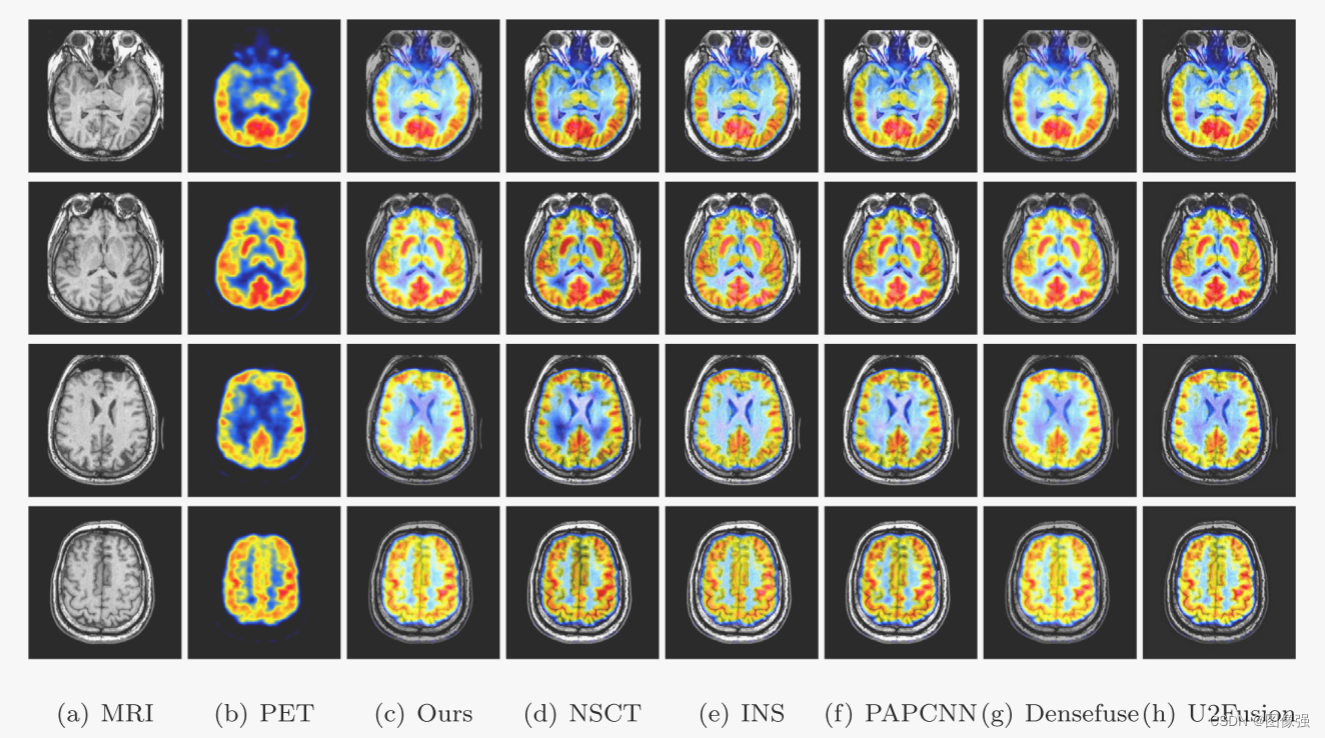

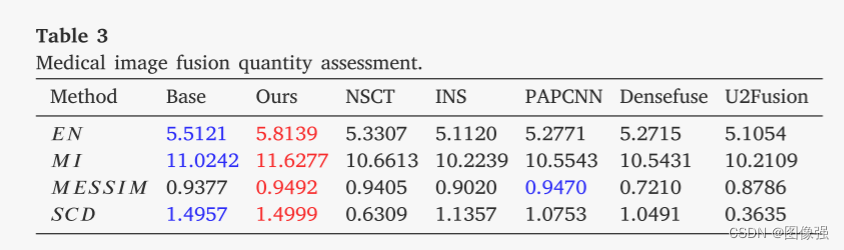

🔬实验

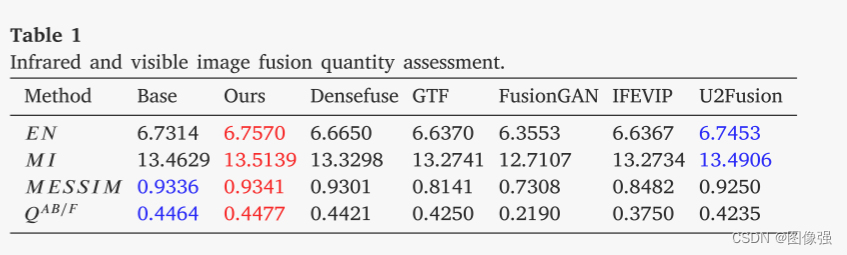

📏评价指标

- EN

- MI

- ME-SSIM

- QABF

扩展学习

[图像融合定量指标分析]

🥅Baseline

- IVIF

Densefuse、GTF、fusongan、IFEVIP、U2Fusion

✨✨✨扩展学习✨✨✨

✨✨✨强烈推荐必看博客[图像融合论文baseline及其网络模型]✨✨✨

🔬实验结果

更多实验结果及分析可以查看原文:

📖[论文下载地址]

🧷总结体会

单编码器和双解码器,双解码器一个用于重构,一个用于生成mask

🚀传送门

📑图像融合相关论文阅读笔记

📑[(TLGAN)Boosting target-level infrared and visible image fusion with regional information coordination]

📑[ReFusion: Learning Image Fusion from Reconstruction with Learnable Loss via Meta-Learning]

📑[YDTR: Infrared and Visible Image Fusion via Y-Shape Dynamic Transformer]

📑[CS2Fusion: Contrastive learning for Self-Supervised infrared and visible image fusion by estimating feature compensation map]

📑[CrossFuse: A novel cross attention mechanism based infrared and visible image fusion approach]

📑[(DIF-Net)Unsupervised Deep Image Fusion With Structure Tensor Representations]

📑[(MURF: Mutually Reinforcing Multi-Modal Image Registration and Fusion]

📑[(A Deep Learning Framework for Infrared and Visible Image Fusion Without Strict Registration]

📑[(APWNet)Real-time infrared and visible image fusion network using adaptive pixel weighting strategy]

📑[Dif-fusion: Towards high color fidelity in infrared and visible image fusion with diffusion models]

📑[Coconet: Coupled contrastive learning network with multi-level feature ensemble for multi-modality image fusion]

📑[LRRNet: A Novel Representation Learning Guided Fusion Network for Infrared and Visible Images]

📑[(DeFusion)Fusion from decomposition: A self-supervised decomposition approach for image fusion]

📑[ReCoNet: Recurrent Correction Network for Fast and Efficient Multi-modality Image Fusion]

📑[RFN-Nest: An end-to-end resid- ual fusion network for infrared and visible images]

📑[SwinFuse: A Residual Swin Transformer Fusion Network for Infrared and Visible Images]

📑[SwinFusion: Cross-domain Long-range Learning for General Image Fusion via Swin Transformer]

📑[(MFEIF)Learning a Deep Multi-Scale Feature Ensemble and an Edge-Attention Guidance for Image Fusion]

📑[DenseFuse: A fusion approach to infrared and visible images]

📑[DeepFuse: A Deep Unsupervised Approach for Exposure Fusion with Extreme Exposure Image Pair]

📑[GANMcC: A Generative Adversarial Network With Multiclassification Constraints for IVIF]

📑[DIDFuse: Deep Image Decomposition for Infrared and Visible Image Fusion]

📑[IFCNN: A general image fusion framework based on convolutional neural network]

📑[(PMGI) Rethinking the image fusion: A fast unified image fusion network based on proportional maintenance of gradient and intensity]

📑[SDNet: A Versatile Squeeze-and-Decomposition Network for Real-Time Image Fusion]

📑[DDcGAN: A Dual-Discriminator Conditional Generative Adversarial Network for Multi-Resolution Image Fusion]

📑[FusionGAN: A generative adversarial network for infrared and visible image fusion]

📑[PIAFusion: A progressive infrared and visible image fusion network based on illumination aw]

📑[CDDFuse: Correlation-Driven Dual-Branch Feature Decomposition for Multi-Modality Image Fusion]

📑[U2Fusion: A Unified Unsupervised Image Fusion Network]

📑综述[Visible and Infrared Image Fusion Using Deep Learning]

📚图像融合论文baseline总结

📑其他论文

📑[3D目标检测综述:Multi-Modal 3D Object Detection in Autonomous Driving:A Survey]

🎈其他总结

🎈[CVPR2023、ICCV2023论文题目汇总及词频统计]

✨精品文章总结

✨[图像融合论文及代码整理最全大合集]

✨[图像融合常用数据集整理]

🌻【如侵权请私信我删除】

如有疑问可联系:420269520@qq.com;

码字不易,【关注,收藏,点赞】一键三连是我持续更新的动力,祝各位早发paper,顺利毕业~

1021

1021

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?