【slam十四讲第二版】【课本例题代码向】【第十二讲~建图】【实践:单目稠密重建】【RGBD-稠密建图】【从点云重建网格】【octomap的安装】【实践:八叉树地图】

0 前言

1 实践:单目稠密重建

-

本工程采用的数据集是使用REMODE的测试数据集。它提供了一架无人集采集的单目俯视图像,共有200张,同时提供了每张图像的真实位姿数据集自取:链接: https://pan.baidu.com/s/1X9Y3fo8M2mFLdHh0YoN9dA 提取码: rpow

-

整个工程出去数据集自取:链接: https://pan.baidu.com/s/19pkkGewg4lhOOE595E16WQ 提取码: bf26

1.1 dense_mapping.cpp

#include <iostream>

#include <vector>

#include <fstream>

using namespace std;

#include <boost/timer.hpp>

// for sophus

#include <sophus/se3.hpp>

using Sophus::SE3d;

// for eigen

#include <Eigen/Core>

#include <Eigen/Geometry>

using namespace Eigen;

// for opencv

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

using namespace cv;

/**********************************************

* 本程序演示了单目相机在已知轨迹下的稠密深度估计

* 使用极线搜索 + NCC 匹配的方式,与书本的 12.2 节对应

* 请注意本程序并不完美,你完全可以改进它——我其实在故意暴露一些问题(这是借口)。

***********************************************/

// ------------------------------------------------------------------

// parameters

const int boarder = 20; // 边缘宽度

const int width = 640; // 图像宽度

const int height = 480; // 图像高度

const double fx = 481.2f; // 相机内参

const double fy = -480.0f;

const double cx = 319.5f;

const double cy = 239.5f;

const int ncc_window_size = 3; // NCC 取的窗口半宽度

const int ncc_area = (2 * ncc_window_size + 1) * (2 * ncc_window_size + 1); // NCC窗口面积

const double min_cov = 0.1; // 收敛判定:最小方差

const double max_cov = 10; // 发散判定:最大方差

// ------------------------------------------------------------------

// 重要的函数

/// 从 REMODE 数据集读取数据

bool readDatasetFiles(

const string &path,

vector<string> &color_image_files,

vector<SE3d> &poses,

cv::Mat &ref_depth

);

/**

* 根据新的图像更新深度估计

* @param ref 参考图像

* @param curr 当前图像

* @param T_C_R 参考图像到当前图像的位姿

* @param depth 深度

* @param depth_cov 深度方差

* @return 是否成功

*/

bool update(

const Mat &ref,

const Mat &curr,

const SE3d &T_C_R,

Mat &depth,

Mat &depth_cov2

);

/**

* 极线搜索

* @param ref 参考图像

* @param curr 当前图像

* @param T_C_R 位姿

* @param pt_ref 参考图像中点的位置

* @param depth_mu 深度均值

* @param depth_cov 深度方差

* @param pt_curr 当前点

* @param epipolar_direction 极线方向

* @return 是否成功

*/

bool epipolarSearch(

const Mat &ref,

const Mat &curr,

const SE3d &T_C_R,

const Vector2d &pt_ref,

const double &depth_mu,

const double &depth_cov,

Vector2d &pt_curr,

Vector2d &epipolar_direction

);

/**

* 更新深度滤波器

* @param pt_ref 参考图像点

* @param pt_curr 当前图像点

* @param T_C_R 位姿

* @param epipolar_direction 极线方向

* @param depth 深度均值

* @param depth_cov2 深度方向

* @return 是否成功

*/

bool updateDepthFilter(

const Vector2d &pt_ref,

const Vector2d &pt_curr,

const SE3d &T_C_R,

const Vector2d &epipolar_direction,

Mat &depth,

Mat &depth_cov2

);

/**

* 计算 NCC 评分

* @param ref 参考图像

* @param curr 当前图像

* @param pt_ref 参考点

* @param pt_curr 当前点

* @return NCC评分

*/

double NCC(const Mat &ref, const Mat &curr, const Vector2d &pt_ref, const Vector2d &pt_curr);

// 双线性灰度插值

inline double getBilinearInterpolatedValue(const Mat &img, const Vector2d &pt) {

uchar *d = &img.data[int(pt(1, 0)) * img.step + int(pt(0, 0))];//读取

double xx = pt(0, 0) - floor(pt(0, 0));

double yy = pt(1, 0) - floor(pt(1, 0));

return ((1 - xx) * (1 - yy) * double(d[0]) +

xx * (1 - yy) * double(d[1]) +

(1 - xx) * yy * double(d[img.step]) +

xx * yy * double(d[img.step + 1])) / 255.0;

}

// ------------------------------------------------------------------

// 一些小工具

// 显示估计的深度图

void plotDepth(const Mat &depth_truth, const Mat &depth_estimate);

// 像素到相机坐标系

inline Vector3d px2cam(const Vector2d px) {

return Vector3d(

(px(0, 0) - cx) / fx,

(px(1, 0) - cy) / fy,

1

);

}

// 相机坐标系到像素

inline Vector2d cam2px(const Vector3d p_cam) {

return Vector2d(

p_cam(0, 0) * fx / p_cam(2, 0) + cx,

p_cam(1, 0) * fy / p_cam(2, 0) + cy

);

}

// 检测一个点是否在图像边框内

inline bool inside(const Vector2d &pt) {

return pt(0, 0) >= boarder && pt(1, 0) >= boarder

&& pt(0, 0) + boarder < width && pt(1, 0) + boarder <= height;

}

// 显示极线匹配

void showEpipolarMatch(const Mat &ref, const Mat &curr, const Vector2d &px_ref, const Vector2d &px_curr);

// 显示极线

/**

*

* @param ref 参考图像

* @param curr 当前图像

* @param px_ref 参考图像的坐标点

* @param px_min_curr 按最小深度投影的像素

* @param px_max_curr 按最大深度投影的像素

*/

void showEpipolarLine(const Mat &ref, const Mat &curr, const Vector2d &px_ref, const Vector2d &px_min_curr,

const Vector2d &px_max_curr);

/// 评测深度估计

void evaludateDepth(const Mat &depth_truth, const Mat &depth_estimate);

// ------------------------------------------------------------------

int main(int argc, char **argv) {

if (argc != 2) {

cout << "Usage: dense_mapping path_to_test_dataset" << endl;

return -1;

}

// 从数据集读取数据

vector<string> color_image_files;

vector<SE3d> poses_TWC;

Mat ref_depth;

bool ret = readDatasetFiles(argv[1], color_image_files, poses_TWC, ref_depth);

if (ret == false) {

cout << "Reading image files failed!" << endl;

return -1;

}

cout << "read total " << color_image_files.size() << " files." << endl;

// 第一张图

Mat ref = imread(color_image_files[0], 0); // gray-scale image

SE3d pose_ref_TWC = poses_TWC[0];

double init_depth = 3.0; // 深度初始值

double init_cov2 = 3.0; // 方差初始值

Mat depth(height, width, CV_64F, init_depth); // 深度图

Mat depth_cov2(height, width, CV_64F, init_cov2); // 深度图方差

for (int index = 1; index < color_image_files.size(); index++) {

cout << "*** loop " << index << " ***" << endl;

Mat curr = imread(color_image_files[index], 0);

if (curr.data == nullptr) continue;

SE3d pose_curr_TWC = poses_TWC[index];

SE3d pose_T_C_R = pose_curr_TWC.inverse() * pose_ref_TWC; // 坐标转换关系: T_C_W * T_W_R = T_C_R

update(ref, curr, pose_T_C_R, depth, depth_cov2);

evaludateDepth(ref_depth, depth);

plotDepth(ref_depth, depth);

imshow("image", curr);

waitKey(1);

}

cout << "estimation returns, saving depth map ..." << endl;

imwrite("depth.png", depth);

cout << "done." << endl;

return 0;

}

bool readDatasetFiles(

const string &path,

vector<string> &color_image_files,

std::vector<SE3d> &poses,

cv::Mat &ref_depth) {

ifstream fin(path + "/first_200_frames_traj_over_table_input_sequence.txt");

if (!fin) return false;

while (!fin.eof()) {

// 数据格式:图像文件名 tx, ty, tz, qx, qy, qz, qw ,注意是 TWC 而非 TCW

string image;

fin >> image;

double data[7];

for (double &d:data) fin >> d;

color_image_files.push_back(path + string("/images/") + image);

poses.push_back(

SE3d(Quaterniond(data[6], data[3], data[4], data[5]),

Vector3d(data[0], data[1], data[2]))

);

if (!fin.good()) break;

}

fin.close();

// load reference depth

fin.open(path + "/depthmaps/scene_000.depth");

ref_depth = cv::Mat(height, width, CV_64F);

if (!fin) return false;

for (int y = 0; y < height; y++)

for (int x = 0; x < width; x++) {

double depth = 0;

fin >> depth;

ref_depth.ptr<double>(y)[x] = depth / 100.0;

}

return true;

}

// 对整个深度图进行更新

bool update(const Mat &ref, const Mat &curr, const SE3d &T_C_R, Mat &depth, Mat &depth_cov2) {

for (int x = boarder; x < width - boarder; x++)

for (int y = boarder; y < height - boarder; y++) {

// 遍历每个像素

if (depth_cov2.ptr<double>(y)[x] < min_cov || depth_cov2.ptr<double>(y)[x] > max_cov) // 深度已收敛或发散

continue;

// 在极线上搜索 (x,y) 的匹配

Vector2d pt_curr;

Vector2d epipolar_direction;

bool ret = epipolarSearch(

ref,

curr,

T_C_R,

Vector2d(x, y),

depth.ptr<double>(y)[x],

sqrt(depth_cov2.ptr<double>(y)[x]),

pt_curr,

epipolar_direction

);

if (ret == false) // 匹配失败

continue;

// 取消该注释以显示匹配

// showEpipolarMatch(ref, curr, Vector2d(x, y), pt_curr);

// 匹配成功,更新深度图

updateDepthFilter(Vector2d(x, y), pt_curr, T_C_R, epipolar_direction, depth, depth_cov2);

}

}

// 极线搜索

/**

* 极线搜索

* @param ref 参考图像

* @param curr 当前图像

* @param T_C_R ref图像与curr图像的相对位姿

* @param pt_ref 参考图像中点的位置

* @param depth_mu 深度均值

* @param depth_cov 深度方差

* @param pt_curr 当前点

* @param epipolar_direction 极线方向

* @return 是否成功

*/

// 方法见书 12.2 12.3 两节

bool epipolarSearch(

const Mat &ref, const Mat &curr,

const SE3d &T_C_R, const Vector2d &pt_ref,

const double &depth_mu, const double &depth_cov,

Vector2d &pt_curr, Vector2d &epipolar_direction) {

Vector3d f_ref = px2cam(pt_ref); //将参考点的像素点坐标转变为归一化平面上的坐标

f_ref.normalize();//确保是齐次坐标

Vector3d P_ref = f_ref * depth_mu; // 参考帧的 P 向量:转变为相机坐标系下的三维坐标;P_R_p

Vector2d px_mean_curr = cam2px(T_C_R * P_ref); // 按深度均值投影的像素,括号内得到P_C_p;再将该相机坐标系下三维坐标转变为像素坐标,得到对应当前图像下的像素坐标点

double d_min = depth_mu - 3 * depth_cov, d_max = depth_mu + 3 * depth_cov;//按照3个不确定度来确定深度的最小值和最大值

if (d_min < 0.1) d_min = 0.1;

Vector2d px_min_curr = cam2px(T_C_R * (f_ref * d_min)); // 按最小深度投影的像素

Vector2d px_max_curr = cam2px(T_C_R * (f_ref * d_max)); // 按最大深度投影的像素

Vector2d epipolar_line = px_max_curr - px_min_curr; // 极线(线段形式)

epipolar_direction = epipolar_line; // 极线方向

epipolar_direction.normalize();

double half_length = 0.5 * epipolar_line.norm(); // 极线线段的半长度

if (half_length > 100) half_length = 100; // 我们不希望搜索太多东西

// 取消此句注释以显示极线(线段)

//showEpipolarLine( ref, curr, pt_ref, px_min_curr, px_max_curr );

// 在极线上搜索,以深度均值点为中心,左右各取半长度

double best_ncc = -1.0;

Vector2d best_px_curr;

for (double l = -half_length; l <= half_length; l += 0.7) { // l+=sqrt(2)

Vector2d px_curr = px_mean_curr + l * epipolar_direction; // 待匹配点

if (!inside(px_curr))//检测一个点是否在图像边框内,如果不在,就跳过这点

continue;

// 计算待匹配点与参考帧的 NCC(归一化互相关度)

double ncc = NCC(ref, curr, pt_ref, px_curr);//选择ncc的方式计算块匹配评分

if (ncc > best_ncc) {

best_ncc = ncc;

best_px_curr = px_curr;

}

}

if (best_ncc < 0.85f) // 只相信 NCC 很高的匹配

return false;

pt_curr = best_px_curr;

return true;

}

/**

* 计算 NCC 评分

* @param ref 参考图像

* @param curr 当前图像

* @param pt_ref 参考点

* @param pt_curr 当前点

* @return NCC评分

*/

double NCC(

const Mat &ref, const Mat &curr,

const Vector2d &pt_ref, const Vector2d &pt_curr) {

// 零均值-归一化互相关

// 先算均值

double mean_ref = 0, mean_curr = 0;

vector<double> values_ref, values_curr; // 参考帧和当前帧的均值

for (int x = -ncc_window_size; x <= ncc_window_size; x++)

for (int y = -ncc_window_size; y <= ncc_window_size; y++) {

double value_ref = double(ref.ptr<uchar>(int(y + pt_ref(1, 0)))[int(x + pt_ref(0, 0))]) / 255.0;

mean_ref += value_ref;

double value_curr = getBilinearInterpolatedValue(curr, pt_curr + Vector2d(x, y));

mean_curr += value_curr;

values_ref.push_back(value_ref);

values_curr.push_back(value_curr);

}

mean_ref /= ncc_area;

mean_curr /= ncc_area;

// 计算 Zero mean NCC

double numerator = 0, demoniator1 = 0, demoniator2 = 0;

for (int i = 0; i < values_ref.size(); i++) {

double n = (values_ref[i] - mean_ref) * (values_curr[i] - mean_curr);

numerator += n;

demoniator1 += (values_ref[i] - mean_ref) * (values_ref[i] - mean_ref);

demoniator2 += (values_curr[i] - mean_curr) * (values_curr[i] - mean_curr);

}

return numerator / sqrt(demoniator1 * demoniator2 + 1e-10); // 防止分母出现零

}

/**

* 更新深度滤波器

* @param pt_ref 参考图像点

* @param pt_curr 当前图像点

* @param T_C_R 位姿

* @param epipolar_direction 极线方向

* @param depth 深度均值

* @param depth_cov2 深度方向

* @return 是否成功

*/

bool updateDepthFilter( //主要是12.2.3的知识

const Vector2d &pt_ref,

const Vector2d &pt_curr,

const SE3d &T_C_R,

const Vector2d &epipolar_direction,

Mat &depth,

Mat &depth_cov2) {

// 用三角化计算深度

SE3d T_R_C = T_C_R.inverse();

Vector3d f_ref = px2cam(pt_ref);//像素到相机坐标系转变的齐次坐标

f_ref.normalize();

Vector3d f_curr = px2cam(pt_curr);//像素到相机坐标系转变的齐次坐标

f_curr.normalize();

// 方程

// d_ref * f_ref = d_cur * ( R_RC * f_cur ) + t_RC

// f2 = R_RC * f_cur

// 转化成下面这个矩阵方程组

// => [ f_ref^T f_ref, -f_ref^T f2 ] [d_ref] [f_ref^T t]

// [ f2^T f_ref, -f2^T f2 ] [d_cur] = [f2^T t ]

Vector3d t = T_R_C.translation();

Vector3d f2 = T_R_C.so3() * f_curr;

Vector2d b = Vector2d(t.dot(f_ref), t.dot(f2));

Matrix2d A;

A(0, 0) = f_ref.dot(f_ref);

A(0, 1) = -f_ref.dot(f2);

A(1, 0) = -A(0, 1);

A(1, 1) = -f2.dot(f2);

Vector2d ans = A.inverse() * b;

Vector3d xm = ans[0] * f_ref; // ref 侧的结果

Vector3d xn = t + ans[1] * f2; // cur 结果

Vector3d p_esti = (xm + xn) / 2.0; // P的位置,取两者的平均

double depth_estimation = p_esti.norm(); // 深度值

// 计算不确定性(以一个像素为误差)

Vector3d p = f_ref * depth_estimation;

Vector3d a = p - t;

double t_norm = t.norm();

double a_norm = a.norm();

double alpha = acos(f_ref.dot(t) / t_norm);

double beta = acos(-a.dot(t) / (a_norm * t_norm));

Vector3d f_curr_prime = px2cam(pt_curr + epipolar_direction);

f_curr_prime.normalize();

double beta_prime = acos(f_curr_prime.dot(-t) / t_norm);

double gamma = M_PI - alpha - beta_prime;

double p_prime = t_norm * sin(beta_prime) / sin(gamma);

double d_cov = p_prime - depth_estimation;

double d_cov2 = d_cov * d_cov;

// 高斯融合

double mu = depth.ptr<double>(int(pt_ref(1, 0)))[int(pt_ref(0, 0))];

double sigma2 = depth_cov2.ptr<double>(int(pt_ref(1, 0)))[int(pt_ref(0, 0))];

double mu_fuse = (d_cov2 * mu + sigma2 * depth_estimation) / (sigma2 + d_cov2);

double sigma_fuse2 = (sigma2 * d_cov2) / (sigma2 + d_cov2);

depth.ptr<double>(int(pt_ref(1, 0)))[int(pt_ref(0, 0))] = mu_fuse;

depth_cov2.ptr<double>(int(pt_ref(1, 0)))[int(pt_ref(0, 0))] = sigma_fuse2;

return true;

}

// 后面这些太简单我就不注释了(其实是因为懒)

void plotDepth(const Mat &depth_truth, const Mat &depth_estimate) {

imshow("depth_truth", depth_truth * 0.4);

imshow("depth_estimate", depth_estimate * 0.4);

imshow("depth_error", depth_truth - depth_estimate);

waitKey(1);

}

/// 评测深度估计

void evaludateDepth(const Mat &depth_truth, const Mat &depth_estimate) {

double ave_depth_error = 0; // 平均误差

double ave_depth_error_sq = 0; // 平方误差

int cnt_depth_data = 0;

for (int y = boarder; y < depth_truth.rows - boarder; y++)

for (int x = boarder; x < depth_truth.cols - boarder; x++) {

double error = depth_truth.ptr<double>(y)[x] - depth_estimate.ptr<double>(y)[x];

ave_depth_error += error;

ave_depth_error_sq += error * error;

cnt_depth_data++;

}

ave_depth_error /= cnt_depth_data;

ave_depth_error_sq /= cnt_depth_data;

cout << "Average squared error = " << ave_depth_error_sq << ", average error: " << ave_depth_error << endl;

}

void showEpipolarMatch(const Mat &ref, const Mat &curr, const Vector2d &px_ref, const Vector2d &px_curr) {

Mat ref_show, curr_show;

cv::cvtColor(ref, ref_show, CV_GRAY2BGR);

cv::cvtColor(curr, curr_show, CV_GRAY2BGR);

cv::circle(ref_show, cv::Point2f(px_ref(0, 0), px_ref(1, 0)), 5, cv::Scalar(0, 0, 250), 2);

cv::circle(curr_show, cv::Point2f(px_curr(0, 0), px_curr(1, 0)), 5, cv::Scalar(0, 0, 250), 2);

imshow("ref", ref_show);

imshow("curr", curr_show);

waitKey(1);

}

// 显示极线

/**

*

* @param ref 参考图像

* @param curr 当前图像

* @param px_ref 参考图像的坐标点

* @param px_min_curr 按最小深度投影的像素

* @param px_max_curr 按最大深度投影的像素

*/

void showEpipolarLine(const Mat &ref, const Mat &curr, const Vector2d &px_ref, const Vector2d &px_min_curr,

const Vector2d &px_max_curr) {

Mat ref_show, curr_show;

cv::cvtColor(ref, ref_show, CV_GRAY2BGR);//表示将当前灰度图像转变为RGB图像

cv::cvtColor(curr, curr_show, CV_GRAY2BGR);

cv::circle(ref_show, cv::Point2f(px_ref(0, 0), px_ref(1, 0)), 5, cv::Scalar(0, 255, 0), 2);

cv::circle(curr_show, cv::Point2f(px_min_curr(0, 0), px_min_curr(1, 0)), 5, cv::Scalar(0, 255, 0), 2);

cv::circle(curr_show, cv::Point2f(px_max_curr(0, 0), px_max_curr(1, 0)), 5, cv::Scalar(0, 255, 0), 2);

cv::line(curr_show, Point2f(px_min_curr(0, 0), px_min_curr(1, 0)), Point2f(px_max_curr(0, 0), px_max_curr(1, 0)),

Scalar(0, 255, 0), 1);

imshow("ref1", ref_show);

imshow("curr", curr_show);

waitKey(1);

}

1.2 CMakeLists.txt

cmake_minimum_required(VERSION 2.8)

project(dense_monocular)

set(CMAKE_BUILD_TYPE "Release")

set(CMAKE_CXX_STANDARD 14)

############### dependencies ######################

# Eigen

include_directories("/usr/include/eigen3")

# OpenCV

find_package(OpenCV 3.1 REQUIRED)

include_directories(${OpenCV_INCLUDE_DIRS})

# Sophus

find_package(Sophus REQUIRED)

include_directories(${Sophus_INCLUDE_DIRS})

set(THIRD_PARTY_LIBS

${OpenCV_LIBS}

${Sophus_LIBRARIES} fmt)

add_executable(dense_mapping src/dense_mapping.cpp)

target_link_libraries(dense_mapping ${THIRD_PARTY_LIBS})

1.3 输出

- 运行可执行文件

./dense_mapping ../../remode_test_data/test_data

- 输出图片

- 这里就不给予演示

- 这里我是想把深度图和灰度图一起合成一个3d点云图输出,但是没有成功,也不知道为什么,以后有机会再来探讨

2. RGBD-稠密建图

- 工程所需要的

data数据,可自取:链接: https://pan.baidu.com/s/1ADHiAneTXvNkmc0AgfZECA 提取码: mgr0 - 这个代码工程不包含数据集的,自取:链接: https://pan.baidu.com/s/1IxfpG9pKZizO85PvKBG-Gg 提取码: dqnu

2.1 pointcloud_mapping.cpp

#include <iostream>

#include <fstream>

using namespace std;

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <Eigen/Geometry>

#include <boost/format.hpp> // for formating strings

#include <pcl/point_types.h>

#include <pcl/io/pcd_io.h>

#include <pcl/filters/voxel_grid.h>

#include <pcl/visualization/pcl_visualizer.h>

#include <pcl/filters/statistical_outlier_removal.h>

int main(int argc, char **argv) {

vector<cv::Mat> colorImgs, depthImgs; // 彩色图和深度图

vector<Eigen::Isometry3d> poses; // 相机位姿

ifstream fin("../data/pose.txt");

if (!fin) {

cerr << "cannot find pose file" << endl;

return 1;

}

for (int i = 0; i < 5; i++) {

boost::format fmt("../data/%s/%d.%s"); //图像文件格式

colorImgs.push_back(cv::imread((fmt % "color" % (i + 1) % "png").str()));

depthImgs.push_back(cv::imread((fmt % "depth" % (i + 1) % "png").str(), -1)); // 使用-1读取原始图像

double data[7] = {0};

for (int i = 0; i < 7; i++) {

fin >> data[i];

}

Eigen::Quaterniond q(data[6], data[3], data[4], data[5]);

Eigen::Isometry3d T(q);

T.pretranslate(Eigen::Vector3d(data[0], data[1], data[2]));

poses.push_back(T);

}

// 计算点云并拼接

// 相机内参

double cx = 319.5;

double cy = 239.5;

double fx = 481.2;

double fy = -480.0;

double depthScale = 5000.0;

cout << "正在将图像转换为点云..." << endl;

// 定义点云使用的格式:这里用的是XYZRGB

typedef pcl::PointXYZRGB PointT;

typedef pcl::PointCloud<PointT> PointCloud;

// 新建一个点云

PointCloud::Ptr pointCloud(new PointCloud);

for (int i = 0; i < 5; i++) {

PointCloud::Ptr current(new PointCloud);

cout << "转换图像中: " << i + 1 << endl;

cv::Mat color = colorImgs[i];

cv::Mat depth = depthImgs[i];

Eigen::Isometry3d T = poses[i];

for (int v = 0; v < color.rows; v++)

for (int u = 0; u < color.cols; u++) {

unsigned int d = depth.ptr<unsigned short>(v)[u]; // 深度值

if (d == 0) continue; // 为0表示没有测量到

Eigen::Vector3d point;

point[2] = double(d) / depthScale;

point[0] = (u - cx) * point[2] / fx;

point[1] = (v - cy) * point[2] / fy;

Eigen::Vector3d pointWorld = T * point;

PointT p;

p.x = pointWorld[0];

p.y = pointWorld[1];

p.z = pointWorld[2];

p.b = color.data[v * color.step + u * color.channels()];

p.g = color.data[v * color.step + u * color.channels() + 1];

p.r = color.data[v * color.step + u * color.channels() + 2];

current->points.push_back(p);

}

// depth filter and statistical removal

PointCloud::Ptr tmp(new PointCloud);

pcl::StatisticalOutlierRemoval<PointT> statistical_filter;

statistical_filter.setMeanK(50);

statistical_filter.setStddevMulThresh(1.0);

statistical_filter.setInputCloud(current);

statistical_filter.filter(*tmp);

(*pointCloud) += *tmp;

}

pointCloud->is_dense = false;

cout << "点云共有" << pointCloud->size() << "个点." << endl;

// voxel filter

pcl::VoxelGrid<PointT> voxel_filter;

double resolution = 0.03;

voxel_filter.setLeafSize(resolution, resolution, resolution); // resolution

PointCloud::Ptr tmp(new PointCloud);

voxel_filter.setInputCloud(pointCloud);

voxel_filter.filter(*tmp);

tmp->swap(*pointCloud);

cout << "滤波之后,点云共有" << pointCloud->size() << "个点." << endl;

pcl::io::savePCDFileBinary("map.pcd", *pointCloud);

return 0;

}

2.2 CMakeLists.txt

cmake_minimum_required(VERSION 2.8)

project(dense_RGBD)

set(CMAKE_BUILD_TYPE Release)

set(CMAKE_CXX_STANDARD 14)

# opencv

find_package(OpenCV 3.1 REQUIRED)

include_directories(${OpenCV_INCLUDE_DIRS})

# eigen

include_directories("/usr/include/eigen3/")

# pcl

find_package(PCL REQUIRED)

include_directories(${PCL_INCLUDE_DIRS})

add_definitions(${PCL_DEFINITIONS})

# octomap

#find_package(octomap REQUIRED)

#include_directories(${OCTOMAP_INCLUDE_DIRS})

add_executable(pointcloud_mapping src/pointcloud_mapping.cpp)

target_link_libraries(pointcloud_mapping ${OpenCV_LIBS} ${PCL_LIBRARIES})

2.3 输出

/home/bupo/my_study/slam14/slam14_my/cap12/dense_RGBD/cmake-build-debug/pointcloud_mapping

正在将图像转换为点云...

转换图像中: 1

转换图像中: 2

转换图像中: 3

转换图像中: 4

转换图像中: 5

点云共有1309800个点.

滤波之后,点云共有296567个点.

进程已结束,退出代码0

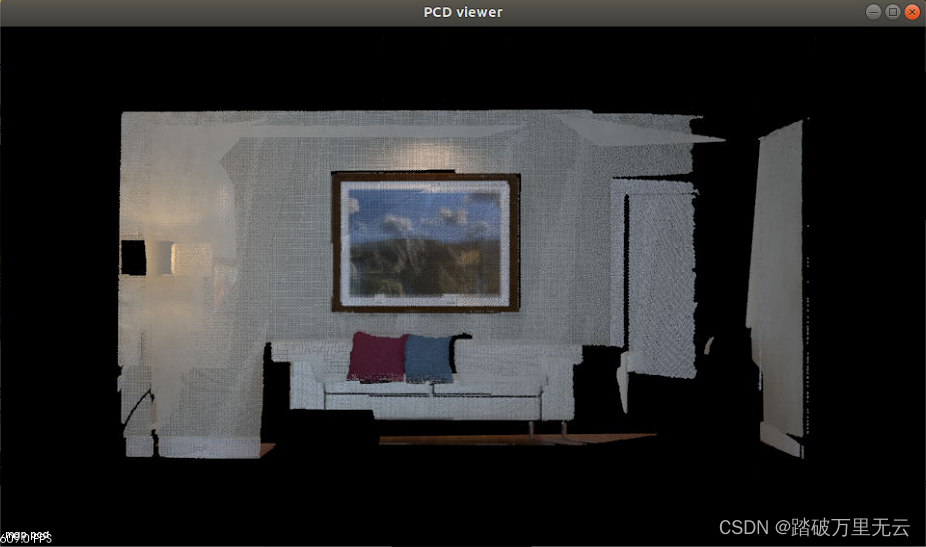

- 除此之外,会生成一个

map.pcd文件,使用pcl_viewer查:

3 从点云重建网格

3.1 surfel_mapping.cpp

//

// Created by gaoxiang on 19-4-25.

//

#include <pcl/point_cloud.h>

#include <pcl/point_types.h>

#include <pcl/io/pcd_io.h>

#include <pcl/visualization/pcl_visualizer.h>

#include <pcl/kdtree/kdtree_flann.h>

#include <pcl/surface/surfel_smoothing.h>

#include <pcl/surface/mls.h>

#include <pcl/surface/gp3.h>

#include <pcl/surface/impl/mls.hpp>

// typedefs

typedef pcl::PointXYZRGB PointT;

typedef pcl::PointCloud<PointT> PointCloud;

typedef pcl::PointCloud<PointT>::Ptr PointCloudPtr;

typedef pcl::PointXYZRGBNormal SurfelT;

typedef pcl::PointCloud<SurfelT> SurfelCloud;

typedef pcl::PointCloud<SurfelT>::Ptr SurfelCloudPtr;

SurfelCloudPtr reconstructSurface(

const PointCloudPtr &input, float radius, int polynomial_order) {

pcl::MovingLeastSquares<PointT, SurfelT> mls;

pcl::search::KdTree<PointT>::Ptr tree(new pcl::search::KdTree<PointT>);

mls.setSearchMethod(tree);

mls.setSearchRadius(radius);

mls.setComputeNormals(true);

mls.setSqrGaussParam(radius * radius);

mls.setPolynomialFit(polynomial_order > 1);

mls.setPolynomialOrder(polynomial_order);

mls.setInputCloud(input);

SurfelCloudPtr output(new SurfelCloud);

mls.process(*output);

return (output);

}

pcl::PolygonMeshPtr triangulateMesh(const SurfelCloudPtr &surfels) {

// Create search tree*

pcl::search::KdTree<SurfelT>::Ptr tree(new pcl::search::KdTree<SurfelT>);

tree->setInputCloud(surfels);

// Initialize objects

pcl::GreedyProjectionTriangulation<SurfelT> gp3;

pcl::PolygonMeshPtr triangles(new pcl::PolygonMesh);

// Set the maximum distance between connected points (maximum edge length)

gp3.setSearchRadius(0.05);

// Set typical values for the parameters

gp3.setMu(2.5);

gp3.setMaximumNearestNeighbors(100);

gp3.setMaximumSurfaceAngle(M_PI / 4); // 45 degrees

gp3.setMinimumAngle(M_PI / 18); // 10 degrees

gp3.setMaximumAngle(2 * M_PI / 3); // 120 degrees

gp3.setNormalConsistency(true);

// Get result

gp3.setInputCloud(surfels);

gp3.setSearchMethod(tree);

gp3.reconstruct(*triangles);

return triangles;

}

int main(int argc, char **argv) {

// Load the points

PointCloudPtr cloud(new PointCloud);

if (argc == 0 || pcl::io::loadPCDFile(argv[1], *cloud)) {

cout << "failed to load point cloud!";

return 1;

}

cout << "point cloud loaded, points: " << cloud->points.size() << endl;

// Compute surface elements

cout << "computing normals ... " << endl;

double mls_radius = 0.05, polynomial_order = 2;

auto surfels = reconstructSurface(cloud, mls_radius, polynomial_order);

// Compute a greedy surface triangulation

cout << "computing mesh ... " << endl;

pcl::PolygonMeshPtr mesh = triangulateMesh(surfels);

cout << "display mesh ... " << endl;

pcl::visualization::PCLVisualizer vis;

vis.addPolylineFromPolygonMesh(*mesh, "mesh frame");

vis.addPolygonMesh(*mesh, "mesh");

vis.resetCamera();

vis.spin();

}

3.2 CMakeLists.txt

cmake_minimum_required(VERSION 2.8)

set(CMAKE_BUILD_TYPE Release)

set(CMAKE_CXX_FLAGS "-std=c++11 -O2")

# opencv

find_package(OpenCV REQUIRED)

include_directories(${OpenCV_INCLUDE_DIRS})

# eigen

include_directories("/usr/include/eigen3/")

# pcl

find_package(PCL REQUIRED)

include_directories(${PCL_INCLUDE_DIRS})

add_definitions(${PCL_DEFINITIONS})

add_executable(surfel_mapping src/surfel_mapping.cpp)

target_link_libraries(surfel_mapping ${OpenCV_LIBS} ${PCL_LIBRARIES})

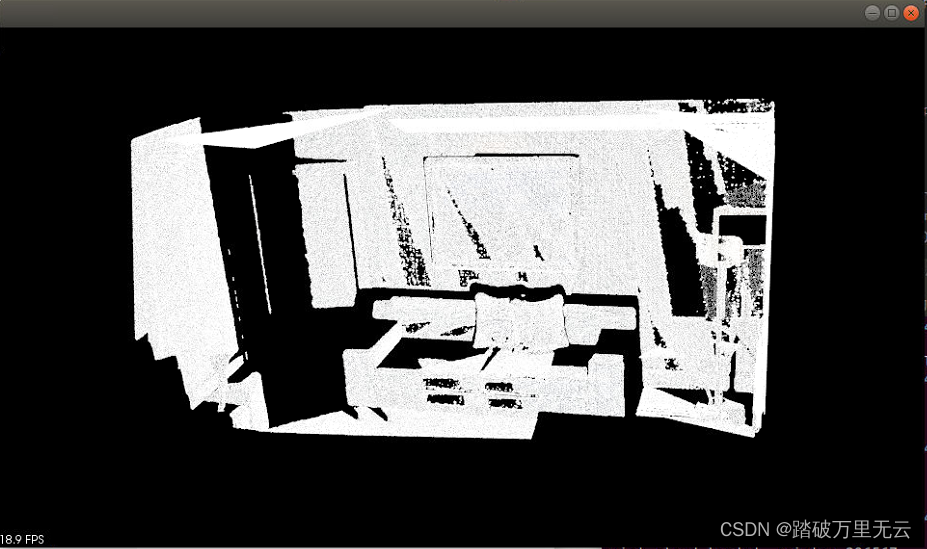

3.3 输出

- 运行可执行程序

bupo@bupo-vpc:~/my_study/slam14/slam14_my/cap12/surfel_mapping/cmake-build-debug$ ./surfel_mapping ../map.pcd

point cloud loaded, points: 296567

computing normals ...

computing mesh ...

display mesh ...

图片如下:

4. octomap的安装

- 获取官方安装包:https://github.com/OctoMap/octomap.git

- 或者网盘自取:链接: https://pan.baidu.com/s/1bGJjLnjAyOypby7O502oP 提取码: 1mcr

- 主要是安装

octomap和octovis

4.1 octomap的安装

4.1.1 安装依赖项

sudo apt-get install doxygen

sudo apt-get install cmake doxygen libqt4-dev libqt4-opengl-dev libqglviewer-dev-qt4

4.1.2 安装

- 可以查看下的安装包里面自带的readme.txt

cd octomap/octomap/

mkdir build && cd build

cmake ..

make

sudo make install

4.1.3 测试

cd octomap/octomap/build/

make test

输出

bupo@bupo-vpc:~/anzhuang/octomap/octomap/build$ make test

Running tests...

Test project /home/bupo/anzhuang/octomap/octomap/build

Start 1: MathVector

1/15 Test #1: MathVector ....................... Passed 0.01 sec

Start 2: MathPose

2/15 Test #2: MathPose ......................... Passed 0.00 sec

Start 3: InsertRay

3/15 Test #3: InsertRay ........................ Passed 0.65 sec

Start 4: InsertScan

4/15 Test #4: InsertScan ....................... Passed 0.28 sec

Start 5: ReadGraph

5/15 Test #5: ReadGraph ........................ Passed 0.01 sec

Start 6: StampedTree

6/15 Test #6: StampedTree ...................... Passed 1.02 sec

Start 7: OcTreeKey

7/15 Test #7: OcTreeKey ........................ Passed 0.00 sec

Start 8: test_scans

8/15 Test #8: test_scans ....................... Passed 0.10 sec

Start 9: test_raycasting

9/15 Test #9: test_raycasting .................. Passed 0.97 sec

Start 10: test_io

10/15 Test #10: test_io .......................... Passed 0.34 sec

Start 11: test_pruning

11/15 Test #11: test_pruning ..................... Passed 0.02 sec

Start 12: test_iterators

12/15 Test #12: test_iterators ................... Passed 0.38 sec

Start 13: test_mapcollection

13/15 Test #13: test_mapcollection ............... Passed 0.15 sec

Start 14: test_color_tree

14/15 Test #14: test_color_tree .................. Passed 0.05 sec

Start 15: test_bbx

15/15 Test #15: test_bbx ......................... Passed 0.00 sec

100% tests passed, 0 tests failed out of 15

Total Test time (real) = 3.98 sec

4.1.4 使用中的CMakeLists.txt

find_package(octomap REQUIRED)

include_directories(${OCTOMAP_INCLUDE_DIRS})

target_link_libraries(xxx ${OCTOMAP_LIBRARIES})

4.1.5 使用中的头文件

#include <octomap/octomap.h> // for octomap

4.2 octovis

4.2.1 依赖库

在之前编译 octovis 时,我们实际上安装了一个可视化程序,即 octovis:

sudo apt-get install libqglviewer-dev-qt4

sudo apt-get install liboctomap-dev octovis

4.2.2 安装

cd /octomap/octovis

mkdir build

cd build

cmake ..

make

sudo make install

4.2.3 使用

运行

bupo@bupo-vpc:~/anzhuang/octomap/octomap$ octovis

Usage: octovis [mapfile] [tree depth cutoff]

Where the optional [tree depth cutoff] is an integer from 1 to 16

5 实践:八叉树地图

5.1 octomap_mapping.cpp

#include <iostream>

#include <fstream>

using namespace std;

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <octomap/octomap.h> // for octomap

#include <Eigen/Geometry>

#include <boost/format.hpp> // for formating strings

int main(int argc, char **argv) {

vector<cv::Mat> colorImgs, depthImgs; // 彩色图和深度图

vector<Eigen::Isometry3d> poses; // 相机位姿

ifstream fin("./data/pose.txt");

if (!fin) {

cerr << "cannot find pose file" << endl;

return 1;

}

for (int i = 0; i < 5; i++) {

boost::format fmt("./data/%s/%d.%s"); //图像文件格式

colorImgs.push_back(cv::imread((fmt % "color" % (i + 1) % "png").str()));

depthImgs.push_back(cv::imread((fmt % "depth" % (i + 1) % "png").str(), -1)); // 使用-1读取原始图像

double data[7] = {0};

for (int i = 0; i < 7; i++) {

fin >> data[i];

}

Eigen::Quaterniond q(data[6], data[3], data[4], data[5]);

Eigen::Isometry3d T(q);

T.pretranslate(Eigen::Vector3d(data[0], data[1], data[2]));

poses.push_back(T);

}

// 计算点云并拼接

// 相机内参

double cx = 319.5;

double cy = 239.5;

double fx = 481.2;

double fy = -480.0;

double depthScale = 5000.0;

cout << "正在将图像转换为 Octomap ..." << endl;

// octomap tree

octomap::OcTree tree(0.01); // 参数为分辨率

for (int i = 0; i < 5; i++) {

cout << "转换图像中: " << i + 1 << endl;

cv::Mat color = colorImgs[i];

cv::Mat depth = depthImgs[i];

Eigen::Isometry3d T = poses[i];

octomap::Pointcloud cloud; // the point cloud in octomap

for (int v = 0; v < color.rows; v++)

for (int u = 0; u < color.cols; u++) {

unsigned int d = depth.ptr<unsigned short>(v)[u]; // 深度值

if (d == 0) continue; // 为0表示没有测量到

Eigen::Vector3d point;

point[2] = double(d) / depthScale;

point[0] = (u - cx) * point[2] / fx;

point[1] = (v - cy) * point[2] / fy;

Eigen::Vector3d pointWorld = T * point;

// 将世界坐标系的点放入点云

cloud.push_back(pointWorld[0], pointWorld[1], pointWorld[2]);

}

// 将点云存入八叉树地图,给定原点,这样可以计算投射线

tree.insertPointCloud(cloud, octomap::point3d(T(0, 3), T(1, 3), T(2, 3)));

}

// 更新中间节点的占据信息并写入磁盘

tree.updateInnerOccupancy();

cout << "saving octomap ... " << endl;

tree.writeBinary("octomap.bt");

return 0;

}

5.2 CMakeLists.txt

cmake_minimum_required(VERSION 2.8)

project(octomap_mapping)

set(CMAKE_BUILD_TYPE Release)

set(CMAKE_CXX_STANDARD 14)

# opencv

find_package(OpenCV REQUIRED)

include_directories(${OpenCV_INCLUDE_DIRS})

# eigen

include_directories("/usr/include/eigen3/")

# pcl

find_package(PCL REQUIRED)

include_directories(${PCL_INCLUDE_DIRS})

add_definitions(${PCL_DEFINITIONS})

# octomap

find_package(octomap REQUIRED)

include_directories(${OCTOMAP_INCLUDE_DIRS})

add_executable(octomap_mapping src/octomap_mapping.cpp)

target_link_libraries(octomap_mapping ${OpenCV_LIBS} ${PCL_LIBRARIES} ${OCTOMAP_LIBRARIES})

5.3 输出

- 运行可执行文件

bupo@bupo-vpc:~/my_study/slam14/slam14_my/cap12/octomap_mapping$ ./cmake-build-debug/octomap_mapping

输出:

/home/bupo/my_study/slam14/slam14_my/cap12/octomap_mapping/cmake-build-debug/octomap_mapping

正在将图像转换为 Octomap ...

转换图像中: 1

转换图像中: 2

转换图像中: 3

转换图像中: 4

转换图像中: 5

saving octomap ...

进程已结束,退出代码0

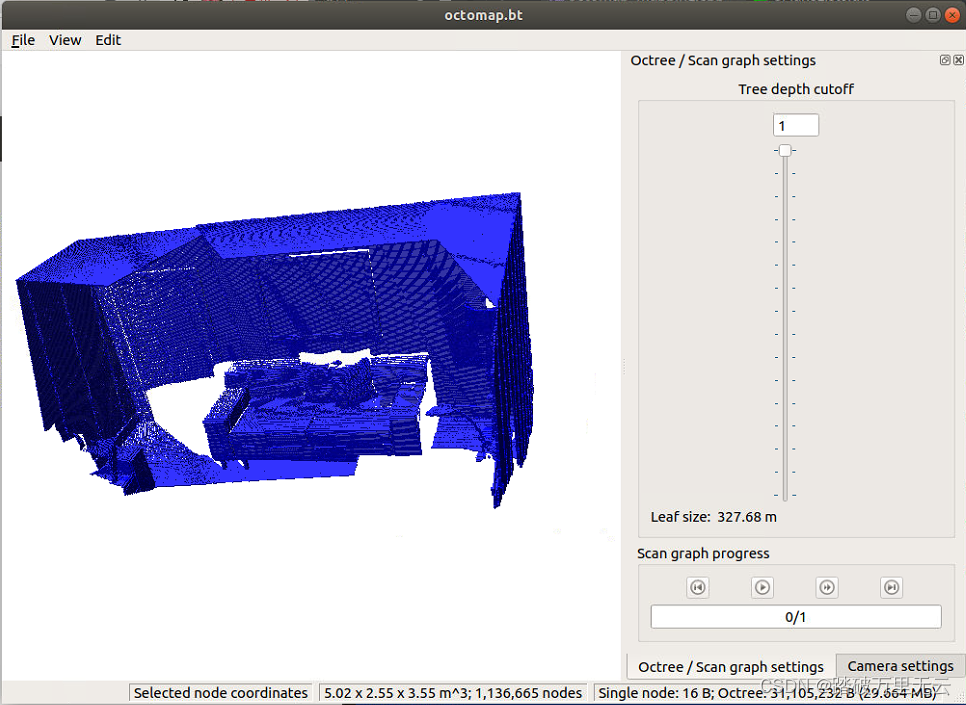

-

还会在工程主路径下生成一个

octomap.bt文件,可自取:链接: https://pan.baidu.com/s/1Bs5NnZgDRo7RZjNpvXz24g 提取码: 0aln -

然后可以使用

octovis打开

bupo@bupo-vpc:~/my_study/slam14/slam14_my/cap12/octomap_mapping/cmake-build-debug$ octovis ../octomap.bt

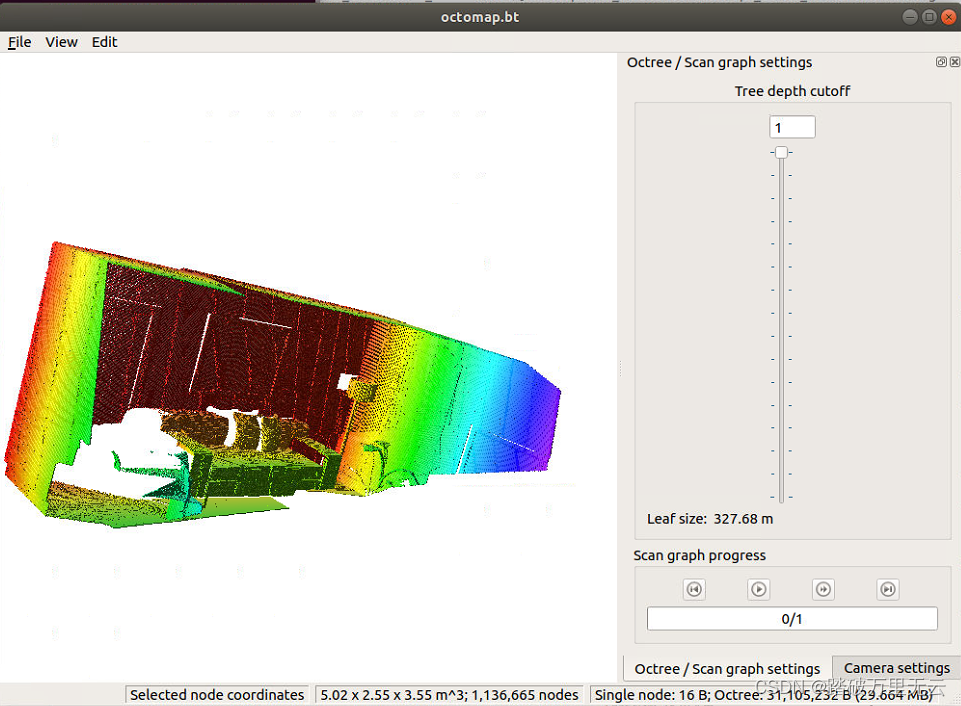

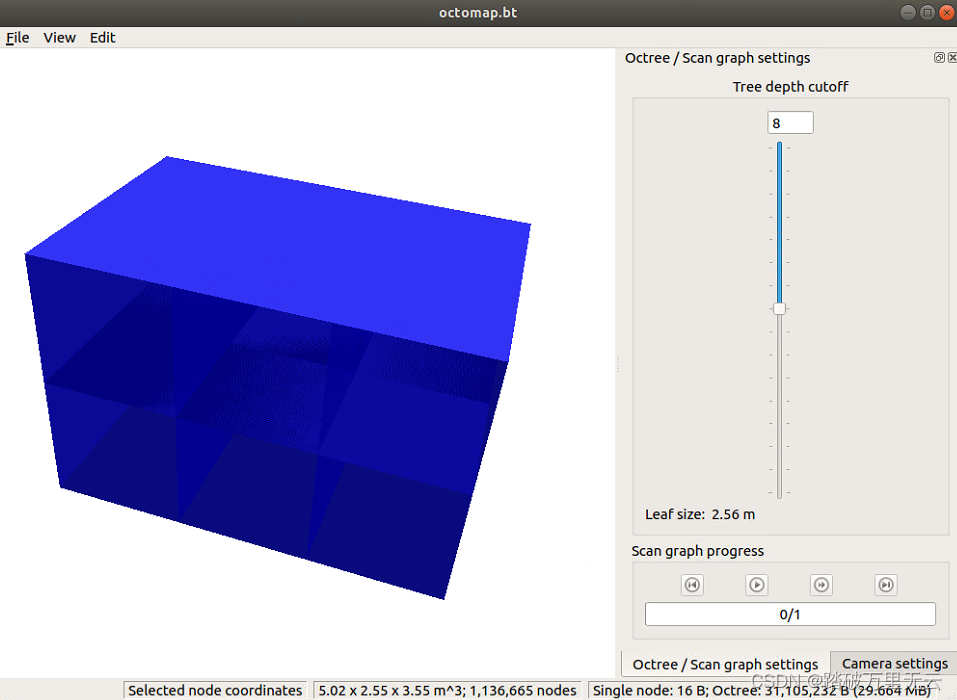

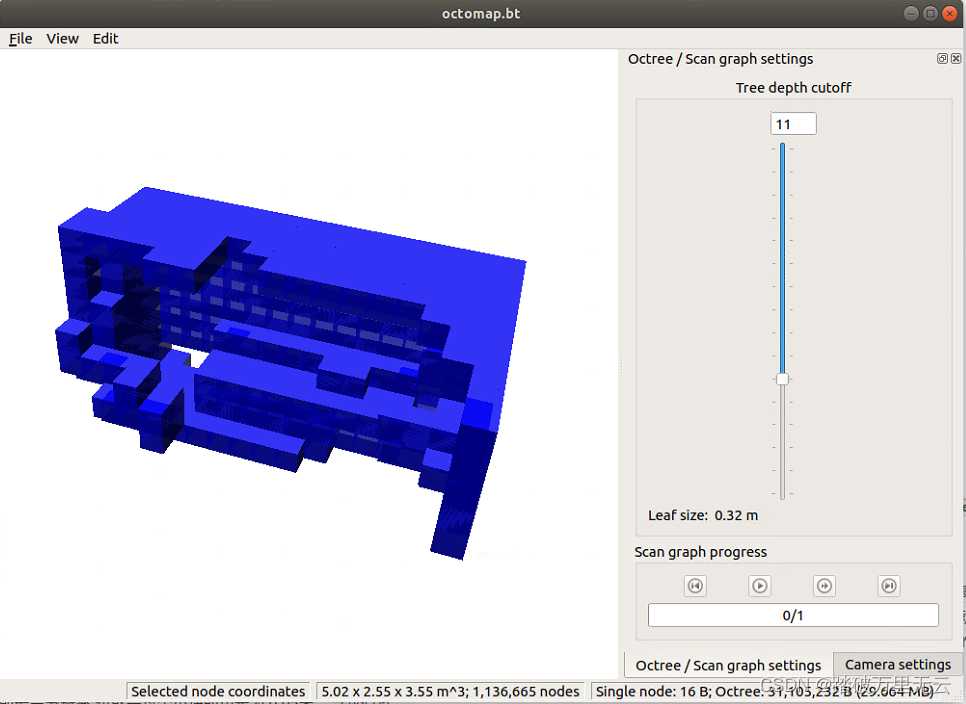

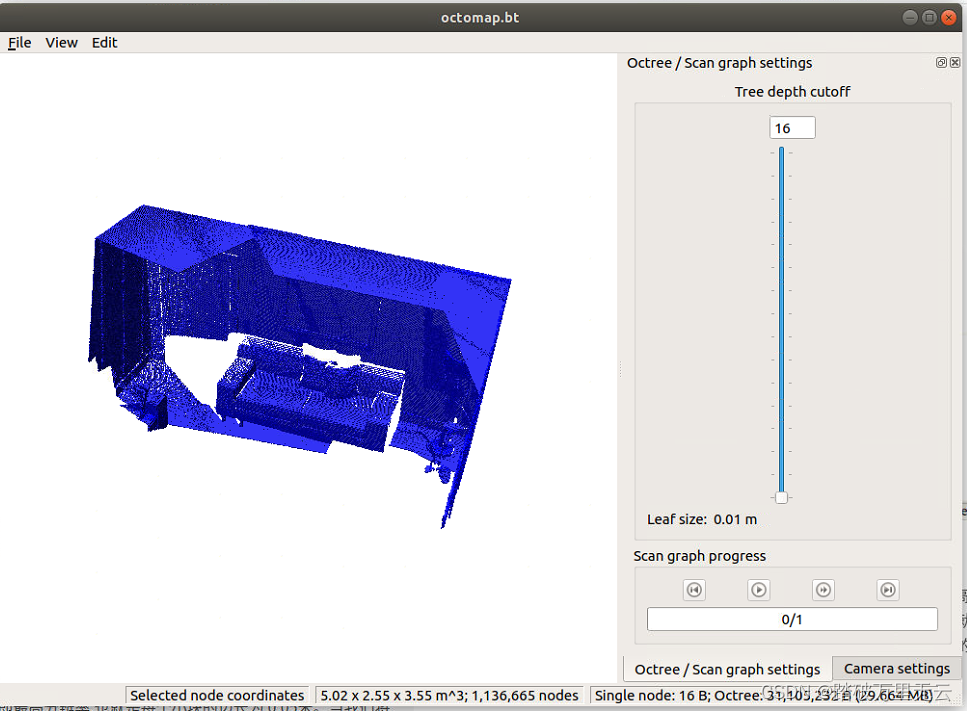

- 可视化界面简单,按下1键,可根据高度信息着色,

- 在右侧有八叉树地深度限制条,这里可以调节地图的分辨率。

- 由于我们构造时使用的默认深度是 16 层,所以这里显示 16 层的话即最高分辨率,也就是每个小块的边长为 0.05米。当我们将深度减少一层时,八叉树的叶子节点往上提了一层,每个小块的边长就增加两倍,变成 0.1 米。可以看到,我们能够很容易地调节地图分辨率以适应不同的场合。Octomap 还有一些可以探索的地方,例如,我们可以方便地查询任意点的占据概率,以此设计在地图中进行导航的方法。

6 课后题

6.1 第二题和第三题合并(使用半稠密和逆深度)

- 本工程采用的数据集是使用REMODE的测试数据集。它提供了一架无人集采集的单目俯视图像,共有200张,同时提供了每张图像的真实位姿数据集自取:链接: https://pan.baidu.com/s/1X9Y3fo8M2mFLdHh0YoN9dA 提取码: rpow

- 整个工程出去数据集自取:链接: https://pan.baidu.com/s/19pkkGewg4lhOOE595E16WQ 提取码: bf26

- 整个工程自取:

6.1.1 ban_dense_inversedepth.cpp

#include <iostream>

#include <vector>

#include <fstream>

using namespace std;

#include <boost/timer.hpp>

// for sophus

#include <sophus/se3.h>

using Sophus::SE3;

// for eigen

#include <Eigen/Core>

#include <Eigen/Geometry>

using namespace Eigen;

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

using namespace cv;

/**********************************************

* 本程序演示了单目相机在已知轨迹下的稠密深度估计

* 使用极线搜索 + NCC 匹配的方式,与书本的 13.2 节对应

* 请注意本程序并不完美,你完全可以改进它——我其实在故意暴露一些问题。

***********************************************/

// ------------------------------------------------------------------

// parameters

const int boarder = 20; // 边缘宽度

const int width = 640; // 宽度

const int height = 480; // 高度

const double fx = 481.2f; // 相机内参

const double fy = -480.0f;

const double cx = 319.5f;

const double cy = 239.5f;

const int ncc_window_size = 2; // NCC 取的窗口半宽度

const int ncc_area = (2*ncc_window_size+1)*(2*ncc_window_size+1); // NCC窗口面积

const double min_cov = 0.001; // 收敛判定:最小方差

const double max_cov = 0.5; // 发散判定:最大方差

// ------------------------------------------------------------------

// 重要的函数

// 从 REMODE 数据集读取数据

bool readDatasetFiles(

const string& path,

vector<string>& color_image_files,

vector<SE3>& poses

);

// 根据新的图像更新深度估计

bool update(

const Mat& ref,

const Mat& curr,

const SE3& T_C_R,

Mat& depth,

Mat& depth_cov

);

// 极线搜索

bool epipolarSearch(

const Mat& ref,

const Mat& curr,

const SE3& T_C_R,

const Vector2d& pt_ref,

const double& depth_mu,

const double& depth_cov,

Vector2d& pt_curr

);

// 更新深度滤波器

bool updateDepthFilter(

const Vector2d& pt_ref,

const Vector2d& pt_curr,

const SE3& T_C_R,

Mat& depth,

Mat& depth_cov

);

// 计算 NCC 评分

double NCC( const Mat& ref, const Mat& curr, const Vector2d& pt_ref, double depth, Vector2d& pt_cur, const SE3& T_C_R );

// 双线性灰度插值

inline double getBilinearInterpolatedValue( const Mat& img, const Vector2d& pt ) {

uchar* d = & img.data[ int(pt(1,0))*img.step+int(pt(0,0)) ];

double xx = pt(0,0) - floor(pt(0,0));

double yy = pt(1,0) - floor(pt(1,0));

return (( 1-xx ) * ( 1-yy ) * double(d[0]) +

xx* ( 1-yy ) * double(d[1]) +

( 1-xx ) *yy* double(d[img.step]) +

xx*yy*double(d[img.step+1]))/255.0;

}

// ------------------------------------------------------------------

// 一些小工具

// 显示估计的深度图

void plotDepth( const Mat& depth );

// 像素到相机坐标系

inline Vector3d px2cam ( const Vector2d px ) {

return Vector3d (

(px(0,0) - cx)/fx,

(px(1,0) - cy)/fy,

1

);

}

// 相机坐标系到像素

inline Vector2d cam2px ( const Vector3d p_cam ) {

return Vector2d (

p_cam(0,0)*fx/p_cam(2,0) + cx,

p_cam(1,0)*fy/p_cam(2,0) + cy

);

}

// 检测一个点是否在图像边框内

inline bool inside( const Vector2d& pt ) {

return pt(0,0) >= boarder && pt(1,0)>=boarder

&& pt(0,0)+boarder<width && pt(1,0)+boarder<=height;

}

// ------------------------------------------------------------------

int main( int argc, char** argv )

{

if ( argc != 2 )

{

cout<<"Usage: dense_mapping path_to_test_dataset"<<endl;

return -1;

}

// 从数据集读取数据

vector<string> color_image_files;

vector<SE3> poses_TWC;

bool ret = readDatasetFiles( argv[1], color_image_files, poses_TWC );

if ( ret==false )

{

cout<<"Reading image files failed!"<<endl;

return -1;

}

cout<<"read total "<<color_image_files.size()<<" files."<<endl;

// 第一张图

Mat ref = imread( color_image_files[0], 0 ); // gray-scale image

SE3 pose_ref_TWC = poses_TWC[0];

double init_depth = 3.0; // 深度初始值

double init_cov2 = 0.1; // 方差初始值

Mat depth( height, width, CV_64F, init_depth ); // 深度图

Mat depth_cov( height, width, CV_64F, init_cov2 ); // 深度图方差

for ( int index=1; index<color_image_files.size(); index++ )

{

cout<<"*** loop "<<index<<" ***"<<endl;

Mat curr = imread( color_image_files[index], 0 );

if (curr.data == nullptr) continue;

SE3 pose_curr_TWC = poses_TWC[index];

SE3 pose_T_C_R = pose_curr_TWC.inverse() * pose_ref_TWC; // 坐标转换关系: T_C_W * T_W_R = T_C_R

update( ref, curr, pose_T_C_R, depth, depth_cov );

plotDepth( depth );

imshow("image", curr);

waitKey(1);

}

cout<<"estimation returns, saving depth map ..."<<endl;

imwrite( "depth.png", depth );

cout<<"done."<<endl;

// 导出稀疏点云

ofstream points("point.xyz");

for ( int x=boarder; x<width-boarder; x++ )

{

for ( int y=boarder; y<height-boarder; y++ )

{

// 遍历每个像素

if ( depth_cov.ptr<double>(y)[x] > min_cov ) // 深度已收敛或发散

continue;

Vector3d f_ref = px2cam( Vector2d(x,y) );

f_ref.normalize();

Vector3d P_ref = f_ref*depth.ptr<double>(y)[x]; // 参考帧的 P 向量

points<<P_ref(0)<<"\t"<<P_ref(1)<<"\t"<<P_ref(2)<<"\t"

<<int(ref.ptr<uchar>(y)[x])<<"\t"<<int(ref.ptr<uchar>(y)[x])<<"\t"<<int(ref.ptr<uchar>(y)[x])<<"\t"<<endl;

}

}

return 0;

}

bool readDatasetFiles(

const string& path,

vector< string >& color_image_files,

std::vector<SE3>& poses

)

{

ifstream fin( path+"/first_200_frames_traj_over_table_input_sequence.txt");

if ( !fin ) return false;

while ( !fin.eof() )

{

// 数据格式:图像文件名 tx, ty, tz, qx, qy, qz, qw ,注意是 TWC 而非 TCW

string image;

fin>>image;

double data[7];

for ( double& d:data ) fin>>d;

color_image_files.push_back( path+string("/images/")+image );

poses.push_back(

SE3( Quaterniond(data[6], data[3], data[4], data[5]),

Vector3d(data[0], data[1], data[2]))

);

if ( !fin.good() ) break;

}

return true;

}

// 对整个深度图进行更新

bool update(const Mat& ref, const Mat& curr, const SE3& T_C_R, Mat& depth, Mat& depth_cov )

{

#pragma omp parallel for

for ( int x=boarder; x<width-boarder; x++ )

#pragma omp parallel for

for ( int y=boarder; y<height-boarder; y++ )

{

// 此处就是把梯度明显的区域挑选出来

// 进而从稠密深度估计变成半稠密深度估计

// 计算梯度

Eigen::Vector2d delta (

ref.ptr<uchar>(y)[x+1] - ref.ptr<uchar>(y)[x-1],

ref.ptr<uchar>(y+1)[x] - ref.ptr<uchar>(y-1)[x]

);

// 把梯度小的区域过滤到

if ( delta.norm() < 50 )

continue;

// 遍历每个像素

if ( depth_cov.ptr<double>(y)[x] < min_cov || depth_cov.ptr<double>(y)[x] > max_cov ) // 深度已收敛或发散

continue;

// 在极线上搜索 (x,y) 的匹配

Vector2d pt_curr;

bool ret = epipolarSearch (

ref,

curr,

T_C_R,

Vector2d(x,y),

depth.ptr<double>(y)[x],

sqrt(depth_cov.ptr<double>(y)[x]),

pt_curr

);

if ( ret == false ) // 匹配失败

continue;

// 取消该注释以显示匹配

// showEpipolarMatch( ref, curr, Vector2d(x,y), pt_curr );

// 匹配成功,更新深度图

updateDepthFilter( Vector2d(x,y), pt_curr, T_C_R, depth, depth_cov );

}

}

// 极线搜索

// 方法见书 13.2 13.3 两节

bool epipolarSearch(

const Mat& ref, const Mat& curr,

const SE3& T_C_R, const Vector2d& pt_ref,

const double& depth_mu, const double& depth_cov,

Vector2d& pt_curr )

{

// 此处是为逆深度做准备

// 因此需要根据方差求解出逆深度的范围

double d_min = 1/depth_mu-3*depth_cov, d_max = 1/depth_mu+3*depth_cov;

// 防止小于0

if(d_min<0) d_min = 0.2;

// 求出深度范围

double dd_max = 1/d_min;

double dd_min = 1/d_max;

if ( dd_min<0.1 ) dd_min = 0.1;

// 在极线上搜索,以深度均值点为中心,左右各取半长度

double best_ncc = -1.0;

Vector2d best_px_curr;

for ( double l=dd_min; l<=dd_max; l+=0.1 ) // l+=sqrt(2)

{

// 计算待匹配点与参考帧的 NCC

Vector2d px_curr;

// 此处是增加了放射变换的NCC计算

double ncc = NCC( ref, curr, pt_ref, l, px_curr, T_C_R);

if ( ncc>best_ncc )

{

best_ncc = ncc;

best_px_curr = px_curr;

}

}

if ( best_ncc < 0.85f ) // 只相信 NCC 很高的匹配

return false;

pt_curr = best_px_curr;

return true;

}

double NCC (

const Mat& ref, const Mat& curr,

const Vector2d& pt_ref, double depth,

Vector2d& pt_cur,

const SE3& T_C_R

)

{

// 零均值-归一化互相关

// 先算均值

double mean_ref = 0, mean_curr = 0;

vector<double> values_ref, values_curr; // 参考帧和当前帧的均值

// 以下代码中又进行放射变换计算的代码

// 其核心思路是假设参考影像上一点附近都为一个平面且深度都一样

for ( int x=-ncc_window_size; x<=ncc_window_size; x++ )

for ( int y=-ncc_window_size; y<=ncc_window_size; y++ )

{

// 从像平面坐标系到像空间坐标系

Vector2d pointRef(int(x+pt_ref(0,0)),int(y+pt_ref(1,0)));

Vector3d f_ref = px2cam( pointRef );

f_ref.normalize();

double value_ref = double(ref.ptr<uchar>( int(pointRef(1,0)) )[ int(pointRef(0,0)) ])/255.0;

mean_ref += value_ref;

// 根据放射变换算出到参考影像上的坐标

Vector3d P_ref = f_ref*depth; // 参考帧的 P 向量

Vector2d px_curr = cam2px( T_C_R*P_ref ); // 按深度均值投影的像素

if( x==0 && y==0 )

{

pt_cur = px_curr;

}

if ( !inside(px_curr) )

return -1.0;

double value_curr = getBilinearInterpolatedValue( curr, px_curr );

mean_curr += value_curr;

values_ref.push_back(value_ref);

values_curr.push_back(value_curr);

}

mean_ref /= ncc_area;

mean_curr /= ncc_area;

// 计算 Zero mean NCC

double numerator = 0, demoniator1 = 0, demoniator2 = 0;

for ( int i=0; i<values_ref.size(); i++ )

{

double n = (values_ref[i]-mean_ref) * (values_curr[i]-mean_curr);

numerator += n;

demoniator1 += (values_ref[i]-mean_ref)*(values_ref[i]-mean_ref);

demoniator2 += (values_curr[i]-mean_curr)*(values_curr[i]-mean_curr);

}

return numerator / sqrt( demoniator1*demoniator2+1e-10 ); // 防止分母出现零

}

bool updateDepthFilter(

const Vector2d& pt_ref,

const Vector2d& pt_curr,

const SE3& T_C_R,

Mat& depth,

Mat& depth_cov

)

{

// 我是一只喵

// 不知道这段还有没有人看

// 用三角化计算深度

SE3 T_R_C = T_C_R.inverse();

Vector3d f_ref = px2cam( pt_ref );

f_ref.normalize();

Vector3d f_curr = px2cam( pt_curr );

f_curr.normalize();

// 方程

// d_ref * f_ref = d_cur * ( R_RC * f_cur ) + t_RC

// => [ f_ref^T f_ref, -f_ref^T f_cur ] [d_ref] = [f_ref^T t]

// [ f_cur^T f_ref, -f_cur^T f_cur ] [d_cur] = [f_cur^T t]

// 二阶方程用克莱默法则求解并解之

Vector3d t = T_R_C.translation();

Vector3d f2 = T_R_C.rotation_matrix() * f_curr;

Vector2d b = Vector2d ( t.dot ( f_ref ), t.dot ( f2 ) );

double A[4];

A[0] = f_ref.dot ( f_ref );

A[2] = f_ref.dot ( f2 );

A[1] = -A[2];

A[3] = - f2.dot ( f2 );

double d = A[0]*A[3]-A[1]*A[2];

Vector2d lambdavec =

Vector2d ( A[3] * b ( 0,0 ) - A[1] * b ( 1,0 ),

-A[2] * b ( 0,0 ) + A[0] * b ( 1,0 )) /d;

Vector3d xm = lambdavec ( 0,0 ) * f_ref;

Vector3d xn = t + lambdavec ( 1,0 ) * f2;

Vector3d d_esti = ( xm+xn ) / 2.0; // 三角化算得的深度向量

double depth_estimation = d_esti.norm(); // 深度值

// 计算不确定性(以一个像素为误差)

Vector3d p = f_ref*depth_estimation;

Vector3d a = p - t;

double t_norm = t.norm();

double a_norm = a.norm();

double alpha = acos( f_ref.dot(t)/t_norm );

double beta = acos( -a.dot(t)/(a_norm*t_norm));

double beta_prime = beta + atan(1/fx);

double gamma = M_PI - alpha - beta_prime;

double p_prime = t_norm * sin(beta_prime) / sin(gamma);

// 逆深度的方差更新方式

double d_cov = 1/p_prime - 1/depth_estimation;

double d_cov2 = d_cov*d_cov;

// 高斯融合

double mu = depth.ptr<double>( int(pt_ref(1,0)) )[ int(pt_ref(0,0)) ];

double sigma2 = depth_cov.ptr<double>( int(pt_ref(1,0)) )[ int(pt_ref(0,0)) ];

double mu_fuse = (d_cov2/mu+sigma2/depth_estimation) / ( sigma2+d_cov2);

double sigma_fuse2 = ( sigma2 * d_cov2 ) / ( sigma2 + d_cov2 );

depth.ptr<double>( int(pt_ref(1,0)) )[ int(pt_ref(0,0)) ] = 1/mu_fuse;

depth_cov.ptr<double>( int(pt_ref(1,0)) )[ int(pt_ref(0,0)) ] = sigma_fuse2;

return true;

}

// 后面这些太简单我就不注释了(其实是因为懒)

void plotDepth(const Mat& depth)

{

imshow( "depth", depth*0.4 );

waitKey(1);

}

6.1.2 CMakeLists.txt

cmake_minimum_required(VERSION 2.8)

project(ban_dense_inversedepth)

set(CMAKE_BUILD_TYPE Release)

set(CMAKE_CXX_STANDARD 14)

#sophus

find_package(Sophus REQUIRED)

include_directories(${Sophus_INCLUDE_DIRS})

set(Sophus_LIBRARIES "/usr/local/lib/libSophus.so")

# opencv

find_package(OpenCV REQUIRED)

include_directories(${OpenCV_INCLUDE_DIRS})

# eigen

include_directories("/usr/include/eigen3/")

# pcl

find_package(PCL REQUIRED)

include_directories(${PCL_INCLUDE_DIRS})

add_definitions(${PCL_DEFINITIONS})

add_executable(ban_dense_inversedepth src/ban_dense_inversedepth.cpp)

target_link_libraries(ban_dense_inversedepth ${OpenCV_LIBS} ${PCL_LIBRARIES} ${Sophus_LIBRARIES} fmt)

6.1.3 输出

- 运行可执行程序

bupo@bupo-vpc:~/my_study/slam14/slam14_my/cap12/ban_dense_inversedepth/cmake-build-debug$ ./ban_dense_inversedepth ../../remode_test_data/test_data/

read total 202 files.

*** loop 1 ***

*** loop 2 ***

*** loop 200 ***

*** loop 201 ***

estimation returns, saving depth map ...

done.

- 会生成两个文件

depth.png和point.xyz,可自取:链接: https://pan.baidu.com/s/1116Fa71ktWl1Fko0hZSgUg 提取码: lb2k - 这里

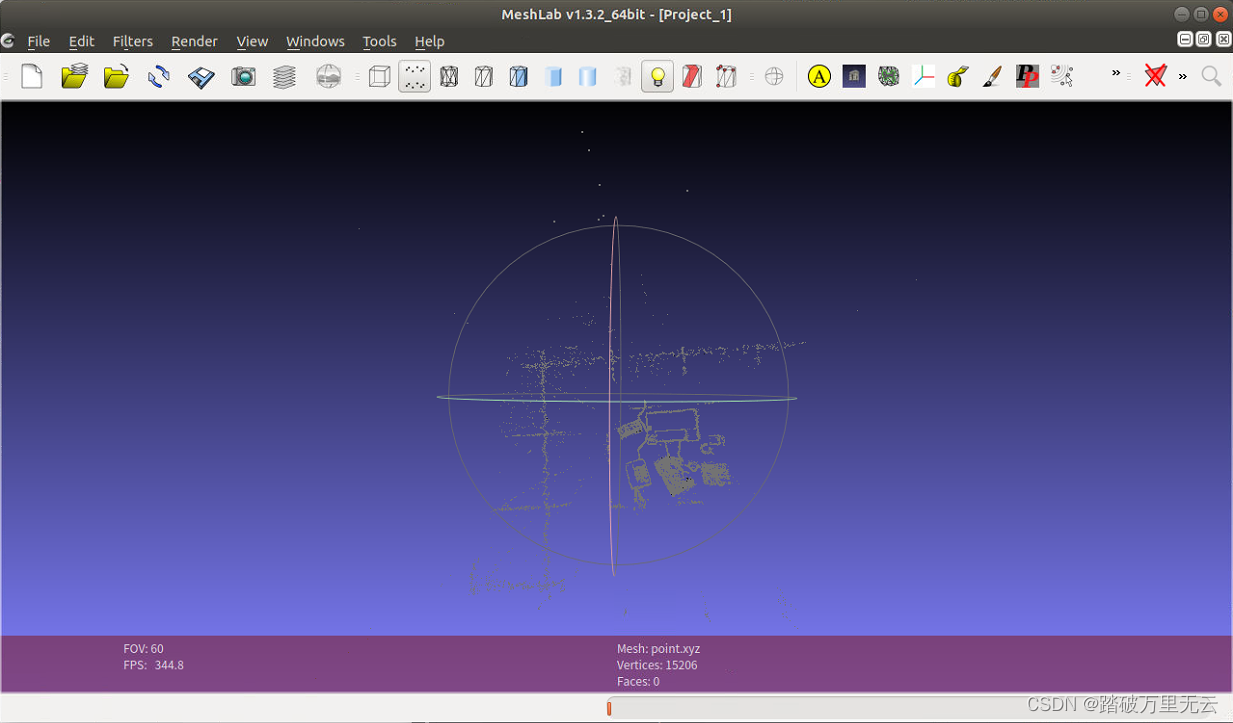

point.xyz要使用meshLab查看,其安装教程可参考:【slam十四讲第二版】【课本例题代码向】【第九讲~后端Ⅰ】【安装Meshlab】【BAL数据集格式】【ceres求解BA】【g2o求解BA】的1 安装Meshlab: 三维几何网格处理 - 可视化如下"

2144

2144

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?