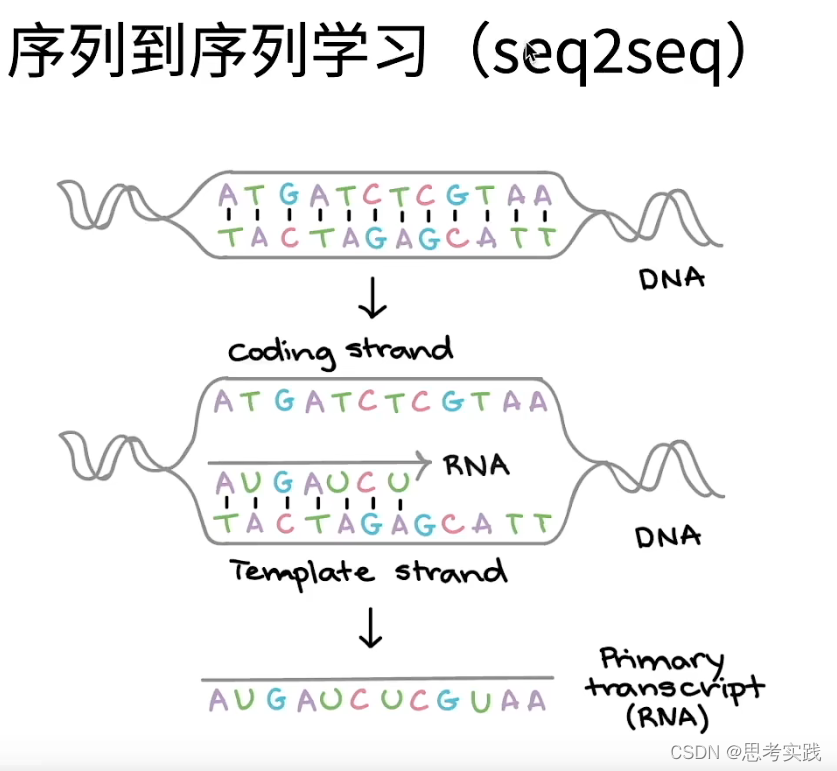

Encoder-decoder architecture

Most later natural language processing models are built on this architecture.

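Seq2Seq was first used for machine translation, although BERT is more common nowadays. Seq2Seq is an encoder-decoder architecture: the encoder is an RNN and so is the decoder, and the encoder passes its final hidden state to the decoder.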
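A minimal NumPy sketch of that hand-off, assuming a plain tanh RNN cell. The sizes `d_in` and `d_h`, the random weights, and the helper names `rnn_step`, `encode`, and `decode` are all made up for illustration; a real model would also have embeddings and an output layer.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_h = 4, 3  # hypothetical input and hidden sizes

def rnn_step(x, h, W_x, W_h, b):
    """One simple-RNN step: h' = tanh(W_x x + W_h h + b)."""
    return np.tanh(W_x @ x + W_h @ h + b)

def init_params():
    """Random illustrative weights for one RNN (not trained)."""
    return (rng.normal(size=(d_h, d_in)) * 0.1,
            rng.normal(size=(d_h, d_h)) * 0.1,
            np.zeros(d_h))

enc_params = init_params()
dec_params = init_params()

def encode(source):
    """Run the encoder over the source and return only its final hidden state."""
    h = np.zeros(d_h)
    for x in source:
        h = rnn_step(x, h, *enc_params)
    return h

def decode(h0, inputs):
    """Run the decoder, starting from the encoder's final hidden state."""
    h = h0
    states = []
    for x in inputs:
        h = rnn_step(x, h, *dec_params)
        states.append(h)
    return np.stack(states)

source = rng.normal(size=(5, d_in))         # 5 source steps
target_inputs = rng.normal(size=(6, d_in))  # 6 decoder steps
h_final = encode(source)
dec_states = decode(h_final, target_inputs)
print(h_final.shape, dec_states.shape)
```

The only information the decoder receives about the source sentence here is `h_final`, which is the bottleneck that attention later relaxes.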

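A bidirectional RNN is often used in the encoder, since it reads the given sentence once forward and once backward. The decoder has to predict the next token, so it does not need to be bidirectional; it is a unidirectional RNN that produces the output.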
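The forward-and-backward reading can be sketched as two independent RNNs whose per-position states are concatenated. This is an illustrative NumPy sketch with made-up sizes and random weights; `run_rnn` and `bidirectional_encode` are hypothetical helper names.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_h = 4, 3  # hypothetical input and hidden sizes

def run_rnn(xs, W_x, W_h):
    """Run a simple tanh RNN over xs and return the state at every step."""
    h = np.zeros(W_h.shape[0])
    states = []
    for x in xs:
        h = np.tanh(W_x @ x + W_h @ h)
        states.append(h)
    return np.stack(states)

# Separate (untrained, illustrative) weights for each direction.
fwd = (rng.normal(size=(d_h, d_in)), rng.normal(size=(d_h, d_h)))
bwd = (rng.normal(size=(d_h, d_in)), rng.normal(size=(d_h, d_h)))

def bidirectional_encode(xs):
    h_fwd = run_rnn(xs, *fwd)
    # Read the sentence right-to-left, then flip back so row i is position i.
    h_bwd = run_rnn(xs[::-1], *bwd)[::-1]
    return np.concatenate([h_fwd, h_bwd], axis=1)

xs = rng.normal(size=(5, d_in))
states = bidirectional_encode(xs)
print(states.shape)
```

Each row of `states` then summarizes both the words before and the words after that position, which is only possible because the encoder sees the whole input sentence up front.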

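Seq2Seq behaves differently in training and in inference: during training the decoder is fed the ground-truth target tokens, while at inference it has to consume its own previous predictions.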
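A toy sketch of the two decoding modes, assuming teacher forcing for training and greedy search for inference (the notes do not say which search strategy is used). The vocabulary size, the `BOS`/`EOS` ids, the random weights, and the function names are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab, d_h = 6, 4   # hypothetical vocabulary and hidden sizes
BOS, EOS = 0, 1     # hypothetical special token ids
E = rng.normal(size=(vocab, d_h))         # token embeddings
W_h = rng.normal(size=(d_h, d_h)) * 0.5   # recurrent weights
W_o = rng.normal(size=(vocab, d_h))       # output projection

def step(token, h):
    """One decoder step: returns the new hidden state and the logits."""
    h = np.tanh(E[token] + W_h @ h)
    return h, W_o @ h

def teacher_forced_logits(h0, target):
    """Training: the input at step t is the ground-truth token from step t-1."""
    h, out = h0, []
    for tok in [BOS] + list(target[:-1]):
        h, logits = step(tok, h)
        out.append(logits)
    return np.stack(out)

def greedy_decode(h0, max_len=10):
    """Inference: the input at step t is the model's own previous prediction."""
    h, tok, out = h0, BOS, []
    for _ in range(max_len):
        h, logits = step(tok, h)
        tok = int(np.argmax(logits))
        out.append(tok)
        if tok == EOS:
            break
    return out

h0 = np.zeros(d_h)        # stands in for the encoder's final hidden state
target = [3, 4, 2, EOS]
train_logits = teacher_forced_logits(h0, target)
generated = greedy_decode(h0)
print(train_logits.shape, generated)
```

In training, `train_logits` would be compared against `target` with a cross-entropy loss; at inference there is no target, so generation stops at `EOS` or at `max_len`.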

Add attention

Adding attention improves accuracy but costs more computation.
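A sketch of where the extra computation comes from: at every decoder step, the current decoder state is scored against every encoder state. The dot-product score used below is an assumption chosen for brevity (other alignment functions exist), and `attention_context` is a hypothetical helper name.

```python
import numpy as np

def softmax(z):
    z = z - z.max()  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def attention_context(enc_states, dec_state):
    """Weights over all encoder states, and their weighted average (the context)."""
    scores = enc_states @ dec_state   # one score per encoder position
    weights = softmax(scores)         # non-negative, sums to 1
    context = weights @ enc_states    # weighted average of encoder states
    return weights, context

rng = np.random.default_rng(0)
enc_states = rng.normal(size=(5, 3))  # 5 encoder positions, hidden size 3
dec_state = rng.normal(size=3)        # current decoder state
weights, context = attention_context(enc_states, dec_state)
print(weights.round(3), context.shape)
```

With m encoder states and t decoder steps this computes m times t weights in total, compared with a plain Seq2Seq decoder that only ever looks at the single final encoder state.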

RNN Models and NLP Applications (8/9): Attention (bilibili video) // brief and clear

Add self-attention

c1 is the weighted average of h0 and h1. Because h0 is the all-zero vector, it contributes nothing, so c1 = h1.
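A small sketch of this first step and the ones after it. It assumes the update h_t = tanh(A [x_t; c_{t-1}]), dot-product alignment scores, and that the all-zero h0 is left out of the average; none of these details are spelled out in the notes, and `self_attention_context` is a hypothetical helper name.

```python
import numpy as np

def softmax(z):
    z = z - z.max()  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def self_attention_context(states, h_t):
    """Weighted average of the states h_1..h_t (the all-zero h_0 is excluded)."""
    weights = softmax(states @ h_t)
    return weights @ states

rng = np.random.default_rng(0)
d_in, d_h = 4, 3                                 # hypothetical sizes
A = rng.normal(size=(d_h, d_in + d_h)) * 0.5     # illustrative, untrained weights
xs = rng.normal(size=(4, d_in))

c = np.zeros(d_h)        # c_0
states, contexts = [], []
for x in xs:
    # Assumed update: the new state depends on the input and the previous context.
    h = np.tanh(A @ np.concatenate([x, c]))
    states.append(h)
    c = self_attention_context(np.stack(states), h)
    contexts.append(c)

print(np.allclose(contexts[0], states[0]))
```

At the first step there is only one state to average over, so its weight is 1 and the context equals h1; from the second step on, the context mixes all states seen so far.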

Reference

seq2seq, attention, self-attention // very brief and clear, highly recommended for newcomers to this field
