原文地址http://blog.csdn.net/aptx704610875/article/details/51490201 支持原创,感谢半闲居士和易科实验室大牛

最近写完了windows上的实时rgbd_slam后,读了些论文,想着怎么改进程序,想在闭环检测的方面尝试一下。最近很火的ORB_SLAM2使用了DBoW2(ORB词袋)的方法,极大的提高了速度和匹配准确度,windows版的orb_slam2还没跑成功(一部分库的编译出现了问题,不过等研究做完了,会继续跑windows版本的),这几天一直在尝试ubuntu版的orb_slam的实时重建,今天终于成功了!~(感谢高博士为我们提供了加了3D建图模块的libORB_SLAM2.so(高博的博客:半闲居士))

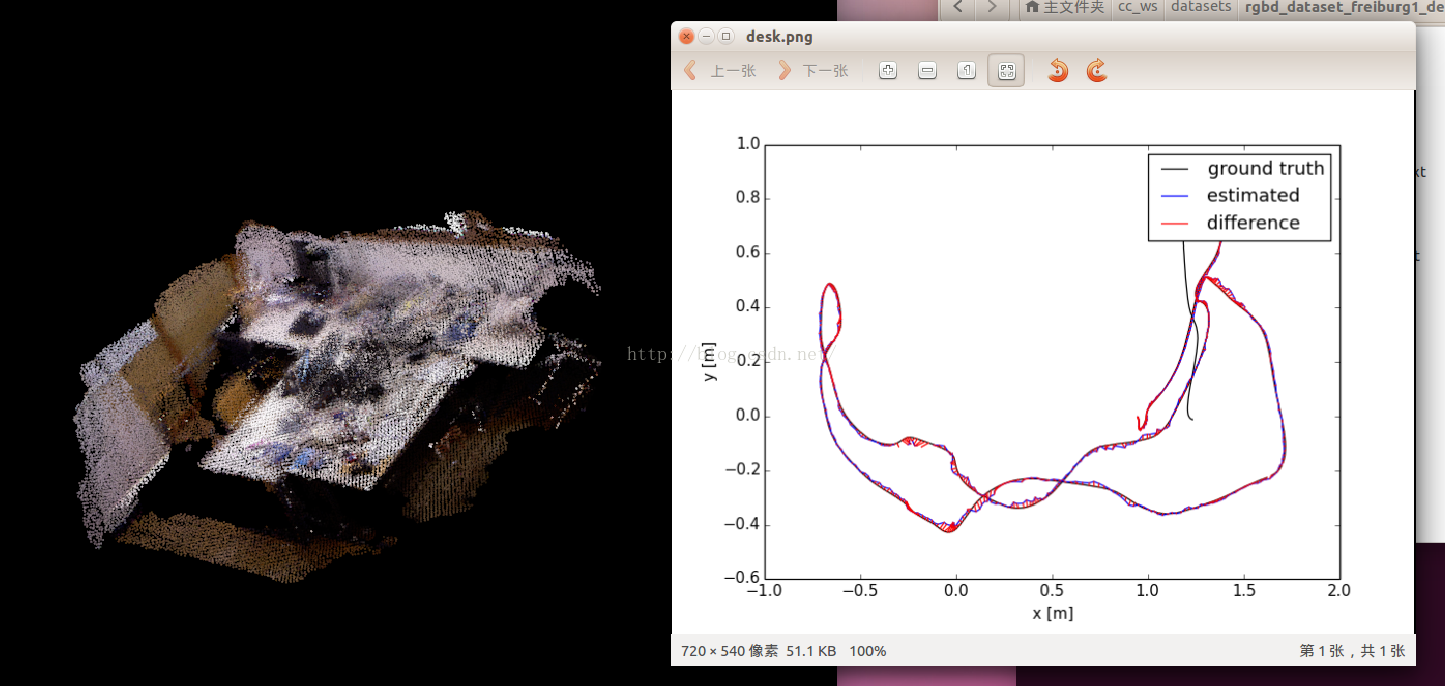

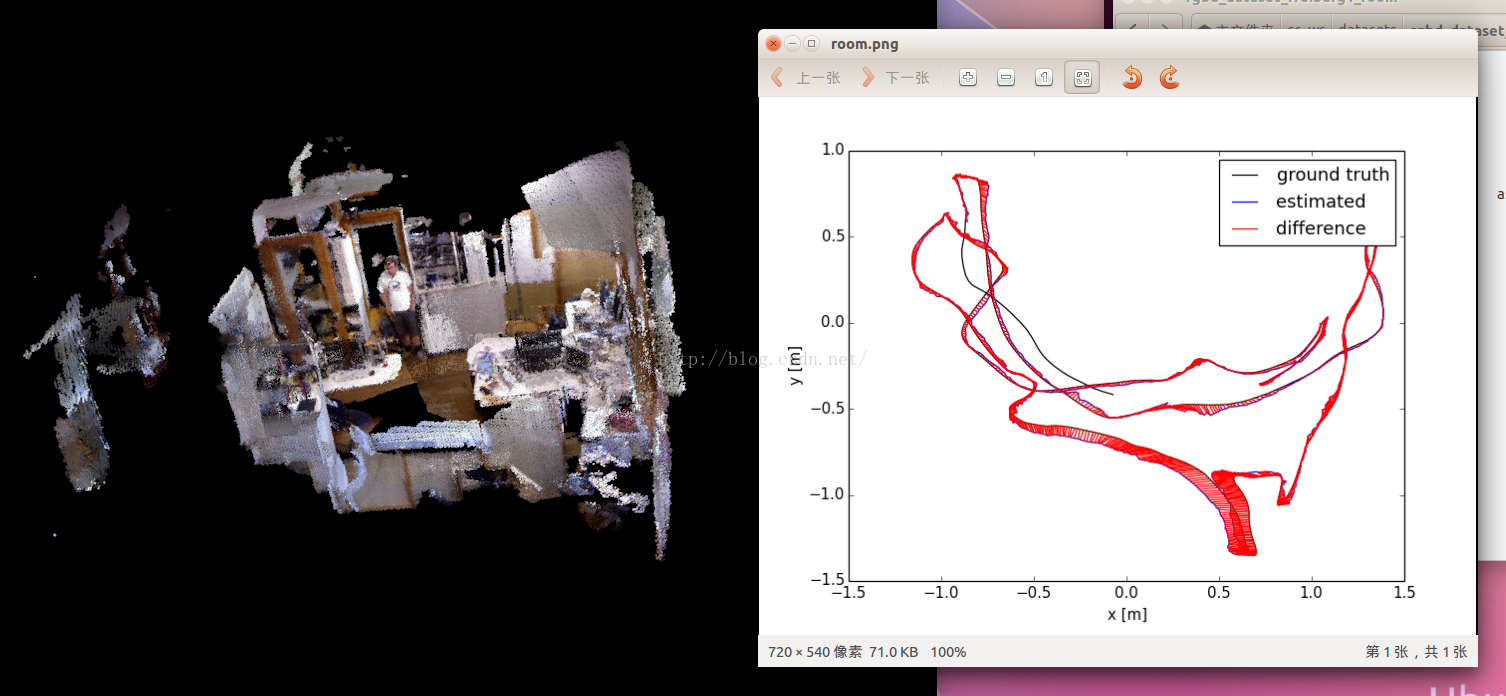

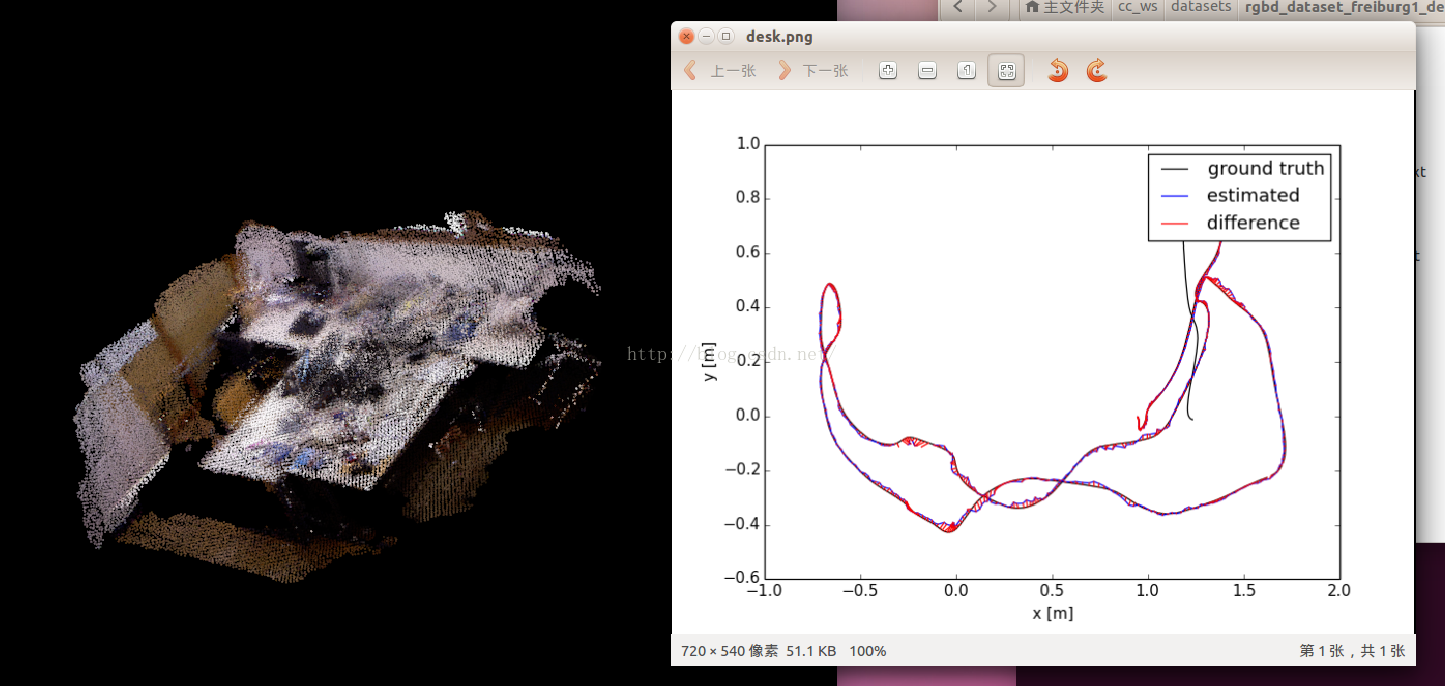

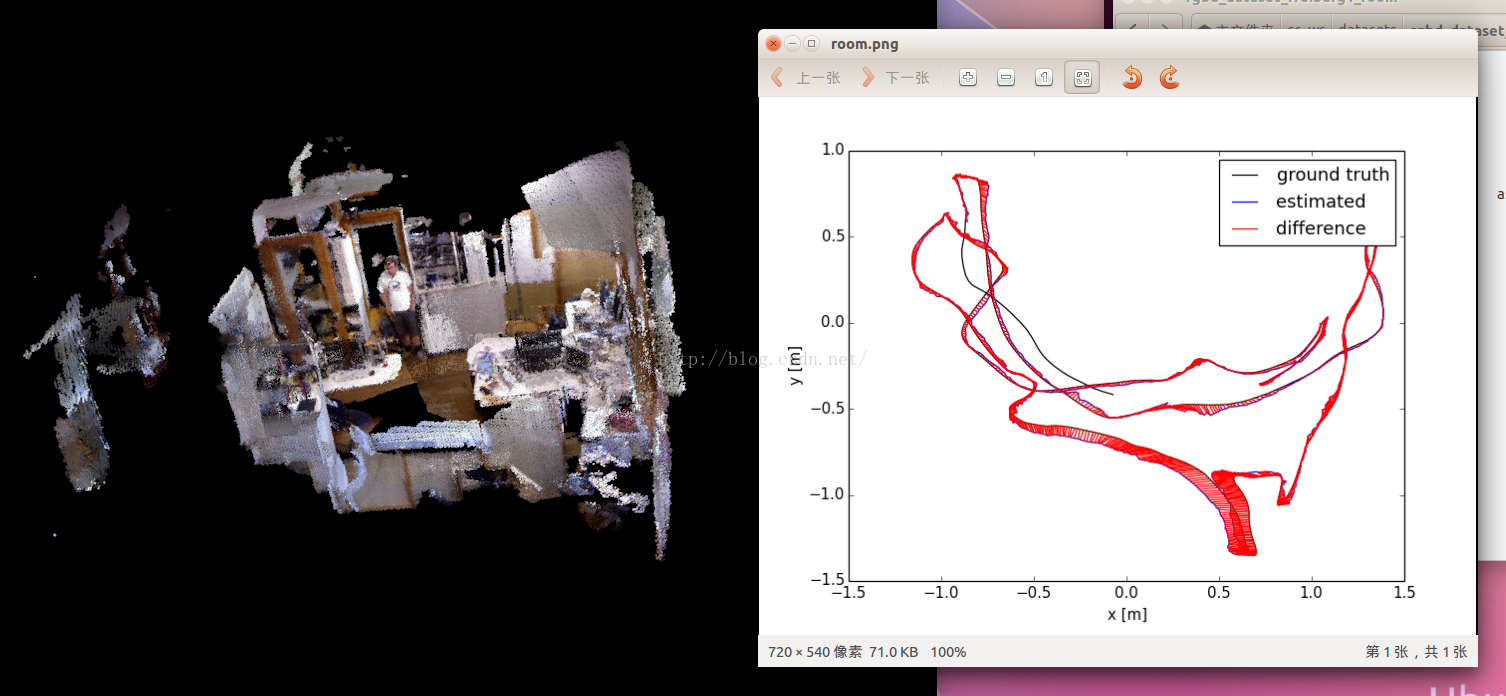

首先orb_slam2的话,github下载源码编译很容易,按照官方github下面的教程走就行。晒几张TUM数据集的结果:

desk:

room:

效果很棒,模拟轨迹和groundtruth的绝对误差真的和论文上说的一样小。我觉得ORB_SLAM2真的是现在视觉SLAM里最优秀的一版,考虑的非常全面。

那么如果我们要用到自己的项目中,该怎么调用呢?特别棒的一点是,原作者提供了libORB_SLAM2.so给我们,加上头文件System.h,我们就可以把ORB_SLAM作为一个整体加到我们的项目中。但是源码中并没有3D建图的模块,需要做相应改变,高博士为我们提供了加了3D建图模块的libORB_SLAM2.so,这时我们就可以根据自己的需求(kinect,xtion或其他可以获得点云的sensors)。高博的博客中有一篇是用的kinect2,在ROS运行的orb_slam2,今天我们来试一试不用ROS,通过OpenNI2直接调用xtion获取rgb数据和depth数据来重建环境(之前有windows上运行openni2_xtion的经验)。我的建议,要么xtion要么kinect2, 因为kinect很鸡肋,xtion比它轻巧,kinect2比它分辨率高。(源码下载csdn 源码下载github)(词袋文件太大,各位可以从官方github下载)

扯了这么多,现在拉回主线。在ORB_SLAM2/Examples/RGB-D/中,创建rgbd_xtion_cc.cpp:

- #include <iostream>

- #include <algorithm>

- #include <fstream>

- #include <chrono>

- #include <OpenNI.h>

- #include <opencv2/core/core.hpp>

- #include <opencv2/highgui/highgui.hpp>

- #include <opencv2/imgproc/imgproc.hpp>

-

- #include <System.h> // orb_slam2

-

- using namespace std;

- using namespace openni;

- using namespace cv;

-

- void showdevice(){

-

- Array<DeviceInfo> aDeviceList;

- OpenNI::enumerateDevices(&aDeviceList);

-

- cout << "电脑上连接着 " << aDeviceList.getSize() << " 个体感设备." << endl;

-

- for (int i = 0; i < aDeviceList.getSize(); ++i)

- {

- cout << "设备 " << i << endl;

- const DeviceInfo& rDevInfo = aDeviceList[i];

- cout << "设备名: " << rDevInfo.getName() << endl;

- cout << "设备Id: " << rDevInfo.getUsbProductId() << endl;

- cout << "供应商名: " << rDevInfo.getVendor() << endl;

- cout << "供应商Id: " << rDevInfo.getUsbVendorId() << endl;

- cout << "设备URI: " << rDevInfo.getUri() << endl;

-

- }

- }

-

- Status initstream(Status& rc, Device& xtion, VideoStream& streamDepth, VideoStream& streamColor)

- {

- rc = STATUS_OK;

-

-

- rc = streamDepth.create(xtion, SENSOR_DEPTH);

- if (rc == STATUS_OK)

- {

-

- VideoMode mModeDepth;

-

- mModeDepth.setResolution(640, 480);

-

- mModeDepth.setFps(30);

-

- mModeDepth.setPixelFormat(PIXEL_FORMAT_DEPTH_1_MM);

-

- streamDepth.setVideoMode(mModeDepth);

- streamDepth.setMirroringEnabled(false);

-

-

- rc = streamDepth.start();

- if (rc != STATUS_OK)

- {

- cerr << "无法打开深度数据流:" << OpenNI::getExtendedError() << endl;

- streamDepth.destroy();

- }

- }

- else

- {

- cerr << "无法创建深度数据流:" << OpenNI::getExtendedError() << endl;

- }

-

-

- rc = streamColor.create(xtion, SENSOR_COLOR);

- if (rc == STATUS_OK)

- {

-

- VideoMode mModeColor;

- mModeColor.setResolution(640, 480);

- mModeColor.setFps(30);

- mModeColor.setPixelFormat(PIXEL_FORMAT_RGB888);

-

- streamColor.setVideoMode(mModeColor);

- streamColor.setMirroringEnabled(false);

-

- rc = streamColor.start();

- if (rc != STATUS_OK)

- {

- cerr << "无法打开彩色图像数据流:" << OpenNI::getExtendedError() << endl;

- streamColor.destroy();

- }

- }

- else

- {

- cerr << "无法创建彩色图像数据流:" << OpenNI::getExtendedError() << endl;

- }

-

- if (!streamColor.isValid() || !streamDepth.isValid())

- {

- cerr << "彩色或深度数据流不合法" << endl;

- OpenNI::shutdown();

- rc = STATUS_ERROR;

- return rc;

- }

-

-

- if (xtion.isImageRegistrationModeSupported(

- IMAGE_REGISTRATION_DEPTH_TO_COLOR))

- {

- xtion.setImageRegistrationMode(IMAGE_REGISTRATION_DEPTH_TO_COLOR);

- }

-

- return rc;

- }

-

- int main(int argc, char **argv)

- {

- if(argc != 3)

- {

- cerr << endl << "Usage: ./rgbd_cc path_to_vocabulary path_to_settings" << endl;

- return 1;

- }

-

-

- ORB_SLAM2::System SLAM(argv[1],argv[2],ORB_SLAM2::System::RGBD,true);

-

- cout << endl << "-------" << endl;

- cout << "Openning Xtion ..." << endl;

-

- Status rc = STATUS_OK;

-

- OpenNI::initialize();

- showdevice();

-

- Device xtion;

- const char * deviceURL = openni::ANY_DEVICE;

- rc = xtion.open(deviceURL);

-

- VideoStream streamDepth;

- VideoStream streamColor;

- if(initstream(rc, xtion, streamDepth, streamColor) == STATUS_OK)

- cout << "Open Xtion Successfully!"<<endl;

- else

- {

- cout << "Open Xtion Failed!"<<endl;

- return 0;

- }

-

-

- cv::Mat imRGB, imD;

- bool continueornot = true;

-

- VideoFrameRef frameDepth;

- VideoFrameRef frameColor;

- namedWindow("RGB Image", CV_WINDOW_AUTOSIZE);

- for (double index = 1.0; continueornot; index+=1.0)

- {

- rc = streamDepth.readFrame(&frameDepth);

- if (rc == STATUS_OK)

- {

- imD = cv::Mat(frameDepth.getHeight(), frameDepth.getWidth(), CV_16UC1, (void*)frameDepth.getData());

- }

- rc = streamColor.readFrame(&frameColor);

- if (rc == STATUS_OK)

- {

- const Mat tImageRGB(frameColor.getHeight(), frameColor.getWidth(), CV_8UC3, (void*)frameColor.getData());

- cvtColor(tImageRGB, imRGB, CV_RGB2BGR);

- imshow("RGB Image",imRGB);

- }

- SLAM.TrackRGBD( imRGB, imD, index);

- char c = cv::waitKey(5);

- switch(c)

- {

- case 'q':

- case 27:

- continueornot = false;

- break;

- case 'p':

- cv::waitKey(0);

- break;

- default:

- break;

- }

- }

-

- SLAM.Shutdown();

- SLAM.SaveTrajectoryTUM("trajectory.txt");

- cv::destroyAllWindows();

- return 0;

- }

思路很简单,首先创建orb_slam系统,传入词袋和xtion/orb参数; 然后从xtion得到彩色图和深度图,调用slam的tracking线程处理得到位姿(当然也有loop线程的闭环检测和g2o下线程的优化),融合点云到同一个坐标下并显示(pointcloudmapping.h / cc里有声明和定义)。

然后在ORB_SLAM2/CMakeLists中添加:

- find_package(OpenNI2 REQUIRED)

- include_directories("/usr/include/openni2/")

- LINK_LIBRARIES( ${OpenNI2_LIBRARY} )

- target_link_libraries(${PROJECT_NAME}${OpenNI2_LIBRARY})

- add_executable(rgbd_xtion_cc

- Examples/RGB-D/rgbd_xtion_cc.cpp)

- target_link_libraries(rgbd_xtion_cc ${PROJECT_NAME})

然后就可以在ORB_SLAM2/build/里cmake .. 和 make了。完成后可以看到ORB_SLAM2/Examples/RGB-D/里有可执行文件rgbd_xtion_cc。(rgbd_tum是跑TUM数据集的, rgbd_cc是跑自己的数据集的,这两个都是预先采集好彩色图和深度图)

最后在ORB_SLAM2/Examples/RGB-D/里创建xtion的参数文件xtion.yaml (包含了ORB参数信息),大家根据标定(OpenCV,ROS,MATLAB等)结果自行修改内参(rgb内参和畸变):

- %YAML:1.0

-

- #--------------------------------------------------------------------------------------------

- # Camera Parameters. xtion 640*480

- #--------------------------------------------------------------------------------------------

-

- # Camera calibration and distortion parameters (OpenCV)

- Camera.fx: 558.341390

- Camera.fy: 558.387543

- Camera.cx: 314.763671

- Camera.cy: 240.992295

-

- Camera.k1: 0.062568

- Camera.k2: -0.096148

- Camera.p1: 0.000140

- Camera.p2: -0.006248

- Camera.k3: 0.000000

-

- Camera.width: 640

- Camera.height: 480

-

- # Camera frames per second

- Camera.fps: 30.0

-

- # IR projector baseline times fx (aprox.)

- Camera.bf: 40.0

-

- # Color order of the images (0: BGR, 1: RGB. It is ignored if images are grayscale)

- Camera.RGB: 0

-

- # Close/Far threshold. Baseline times.

- ThDepth: 50.0

-

- # Deptmap values factor

- DepthMapFactor: 1000.0

-

- #--------------------------------------------------------------------------------------------

- # ORB Parameters

- #--------------------------------------------------------------------------------------------

-

- # ORB Extractor: Number of features per image

- ORBextractor.nFeatures: 1000

-

- # ORB Extractor: Scale factor between levels in the scale pyramid

- ORBextractor.scaleFactor: 1.2

-

- # ORB Extractor: Number of levels in the scale pyramid

- ORBextractor.nLevels: 8

-

- # ORB Extractor: Fast threshold

- # Image is divided in a grid. At each cell FAST are extracted imposing a minimum response.

- # Firstly we impose iniThFAST. If no corners are detected we impose a lower value minThFAST

- # You can lower these values if your images have low contrast

- ORBextractor.iniThFAST: 20

- ORBextractor.minThFAST: 7

-

- #--------------------------------------------------------------------------------------------

- # Viewer Parameters

- #--------------------------------------------------------------------------------------------

- Viewer.KeyFrameSize: 0.05

- Viewer.KeyFrameLineWidth: 1

- Viewer.GraphLineWidth: 0.9

- Viewer.PointSize:2

- Viewer.CameraSize: 0.08

- Viewer.CameraLineWidth: 3

- Viewer.ViewpointX: 0

- Viewer.ViewpointY: -0.7

- Viewer.ViewpointZ: -1.8

- Viewer.ViewpointF: 500

-

- #--------------------------------------------------------------------------------------------

- # PointCloud Mapping

- #--------------------------------------------------------------------------------------------

- PointCloudMapping.Resolution: 0.01

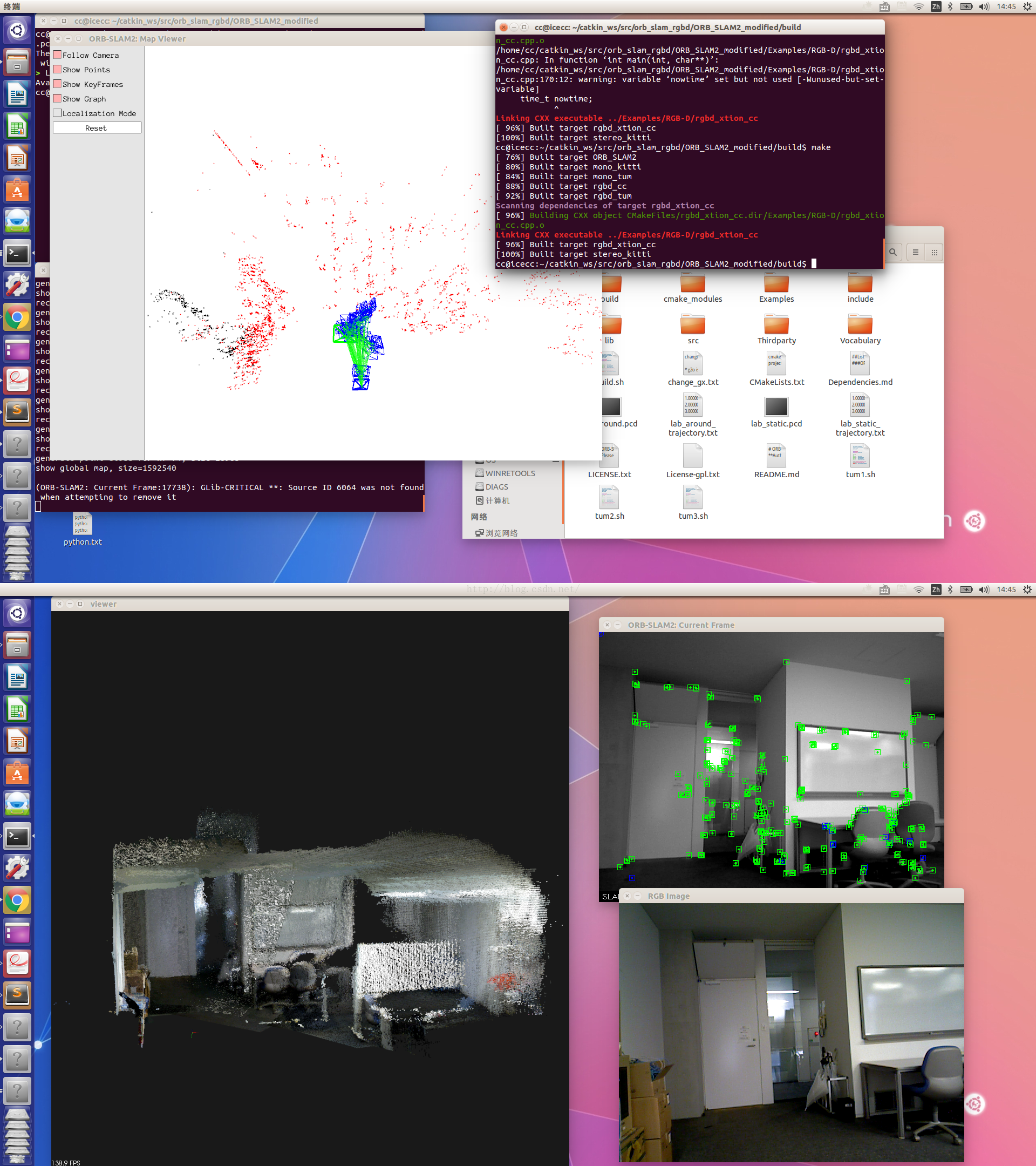

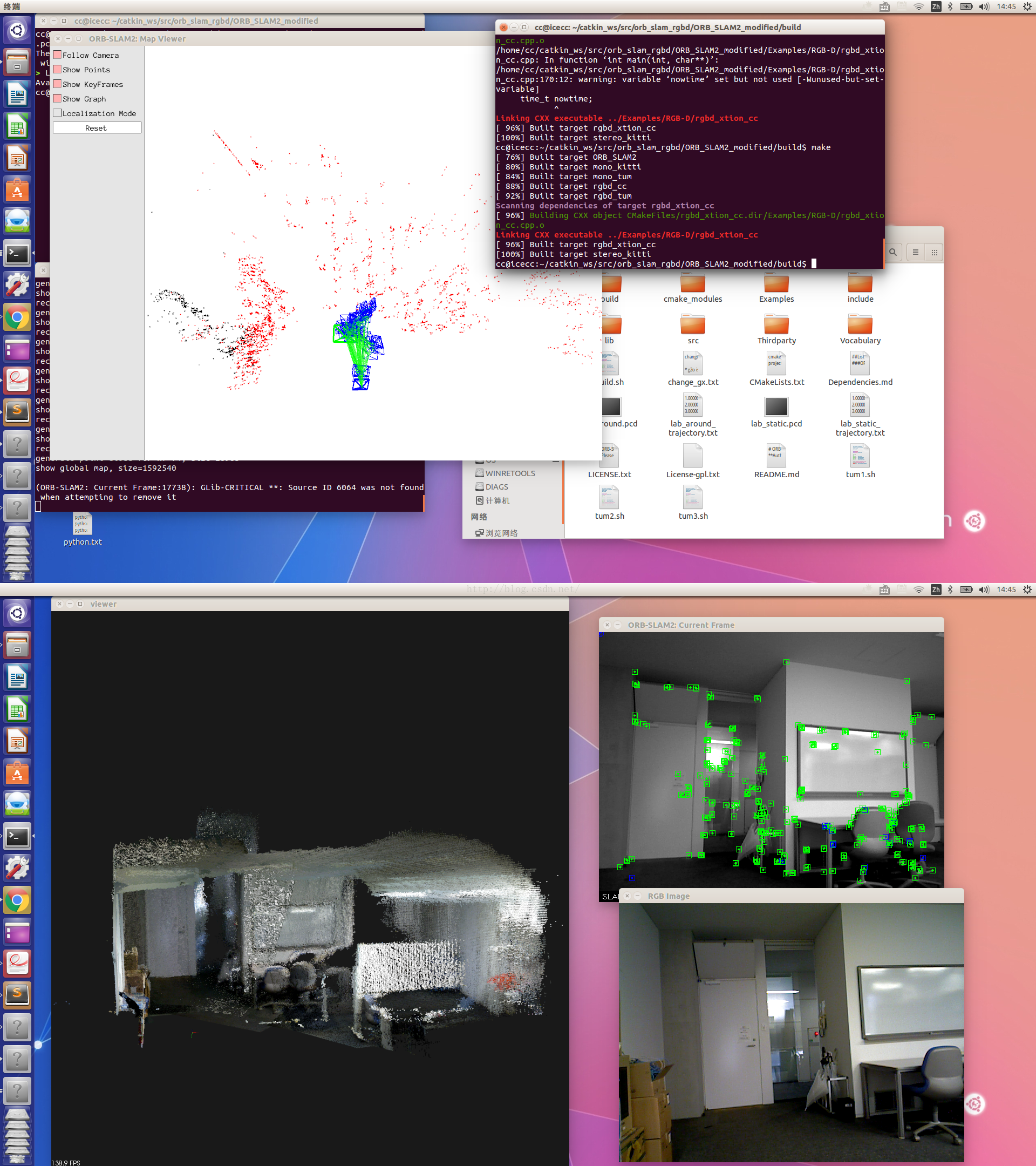

现在来运行吧~ 在ORB_SLAM2/下打开终端,输入 ./Examples/RGB-D/rgbd_xtion_cc Vocabulary/ORBvoc.txt Examples/RGB-D/xtion.yaml 系统加载ORB词袋,然后打开xtion设备,采集图像处理,显示角点,轨迹和点云:

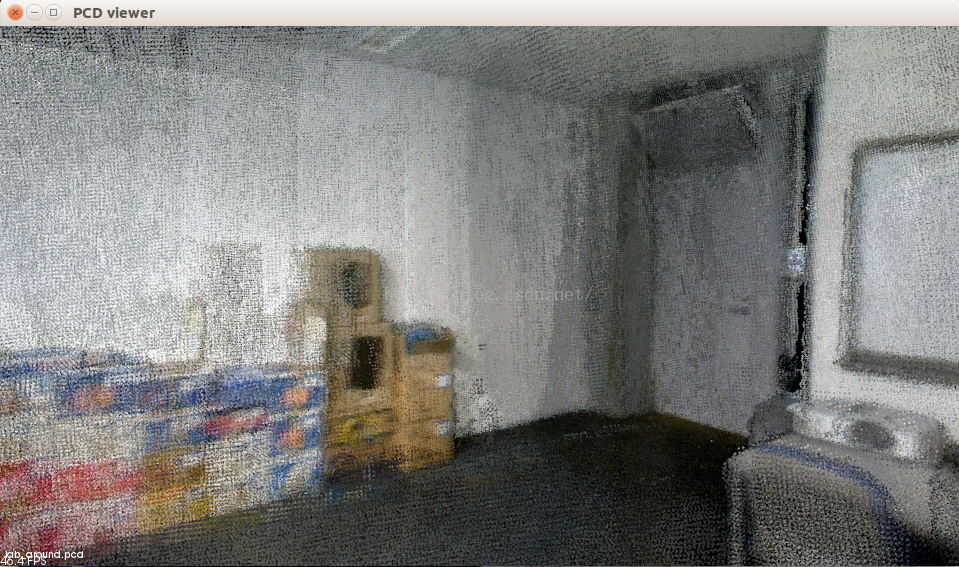

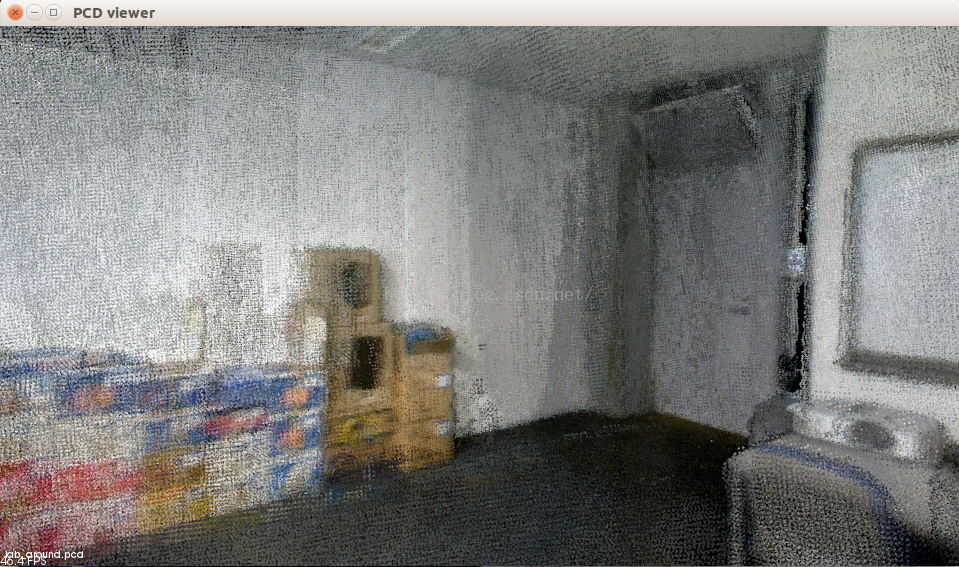

按‘q’或esc程序退出,自动保存估计的轨迹和点云pcd文件到ORB_SLAM2/下(帧数较多时如3000帧,保存时间较长20s左右)。运行pcl_viewer xx.pcd 即可查看。保存优化后的点云的代码在pointcloudmapping.cc里:

- globalMap->clear();

- for(size_t i=0;i<keyframes.size();i++)

- {

- cout<<"keyframe "<<i<<" ..."<<endl;

- PointCloud::Ptr tp = generatePointCloud( keyframes[i], colorImgs[i], depthImgs[i] );

- PointCloud::Ptr tmp(new PointCloud());

- voxel.setInputCloud( tp );

- voxel.filter( *tmp );

- *globalMap += *tmp;

- viewer.showCloud( globalMap );

- }

- PointCloud::Ptr tmp(new PointCloud());

- sor.setInputCloud(globalMap);

- sor.filter(*tmp);

- globalMap->swap( *tmp );

- pcl::io::savePCDFileBinary ( "optimized_pointcloud.pcd", *globalMap );

- cout<<"Save point cloud file successfully!"<<endl;

局部1:

局部2:

局部3:

接下来准备改进词袋,尝试加入3d特征描述words,训练然后提高匹配精准度,拭目以待。

///

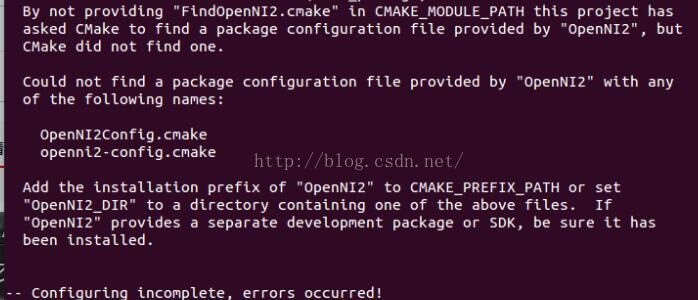

2016/6/23补充:

很多同学没有FindOpenNI2.cmake, 导致了系统找不到openni2的库

这里给大家提供了一个FindOpenNI2.cmake文件,复制内容到新的cmake文件,

保存后存到 /usr/share/cmake-2.8/Modules/中去就好了。

- #

- # Try to find OPenNI2 library and include path.

- # Once done this will define

- #

- #

-

- FIND_PATH( OpenNI2_INCLUDE_PATH OpenNI.h

- /usr/include

- /usr/local/include

- /sw/include

- /opt/local/include

- DOC "The directory where OpenNI.h resides")

- FIND_LIBRARY( OpenNI2_LIBRARY

- NAMES OpenNI2 openni2

- PATHS

- /usr/lib64

- /usr/lib

- /usr/local/lib64

- /usr/local/lib

- /sw/lib

- /opt/local/lib

- DOC "The OpenNI2 library")

-

- IF (OpenNI2_INCLUDE_PATH)

- SET( OpenNI2_FOUND 1 CACHE STRING "Set to 1 if OpenNI2 is found, 0 otherwise")

- ELSE (OpenNI2_INCLUDE_PATH)

- SET( OpenNI2_FOUND 0 CACHE STRING "Set to 1 if OpenNI2 is found, 0 otherwise")

- ENDIF (OpenNI2_INCLUDE_PATH)

-

- MARK_AS_ADVANCED( OpenNI2_FOUND )

///

2016/8/12补充:

(回答4楼的问题,由于CSDN回复功能的渣过滤技术,只能写在这里了)

1。Camera.bf中的b指基线baseline(单位:米),f是焦距fx(x轴和y轴差距不大),bf=b*f,和ThDepth一起决定了深度点的范围:bf * ThDepth / fx即大致为b * ThDepth。 基线在双目视觉中出现的比较多,如ORB-SLAM中的双目示例中的EuRoC.yaml中的bf为47.9,ThDepth为35,fx为435.2,则有效深度为47.9*35/435.3=3.85米;KITTI.yaml中的bf为387.57,ThDepth为40,fx为721.54,则有效深度为387.57*40/721.54=21.5米。这里的xtion的IR基线(其实也可以不这么叫)bf为40,ThDepth为50,fx为558.34,则有效深度为3.58米(官方为3.5米)。

2。DepthMapFactor: 1000.0这个很好理解,depth深度图的值为真实3d点深度*1000. 例如depth值为2683,则真是世界尺度的这点的深度为2.683米。 这个值是可以人为转换的(如opencv中的convert函数,可以设置缩放因子),如TUM中的深度图的DepthMapFactor为5000,就代表深度图中的5000个单位为1米。

未来,属于一心想要改变世界的人。

-cc

332

332

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?